FPGAs are great, but open source they are not. All the players in FPGA land have their own proprietary tools for creating bitstream files, and synthesizing the HDL of your choice for any FPGA usually means agreeing to terms and conditions that nobody reads.

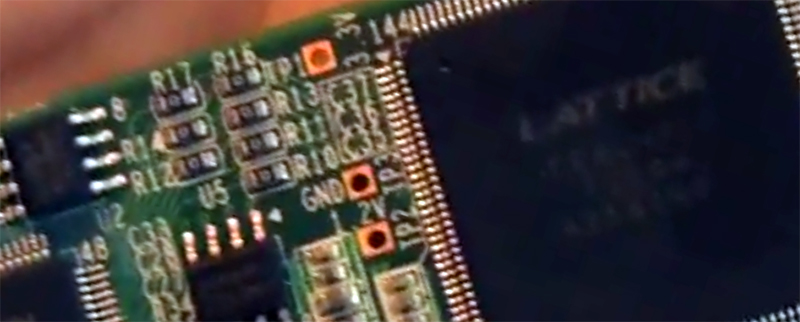

After months of work, and based on the previous work of [Clifford Wolf] and [Mathias Lasser], [Cotton Seed] has released a fully open-source Verilog-to-bitstream toolchain for the Lattice iCE40LP, with support for more devices in the works.

Last March we saw the Lattice iCE40 bitstream reverse engineered, but that alone is a far cry from a robust, mature development platform. Combined with Yosys, also written by [Clifford Wolf], it’s now relatively simple to go from Verilog to an FPGA that runs your own code.

Video demo below, and there’s a ton of documentation over on the Project IceStorm page. You can pick up the relevant dev board for about $22 as well.

Yosys!!! Yosys!!! Yosys!!! Yosys!!!

Never understood the business model of certain chip manufacturers with regard to their restricted programming tools. You’re either selling hardware or software. Pick one.

Yep. That’s stupid. Look at Atmel vs Microchip. Microchip used to be king (at least in my perception), but then the Arduino came out and it was easy to use and free. It was the gateway drug to digital electronics and everyone jumped on board. I’m not sure when Atmel Studio became free, but that’s a good idea since it also makes it easy to use Atmel products. I think Microchip finally got a clue and released a free compiler for their PIC series, but it was way too late. These days it is rare that I see something on Kickstarter or elsewhere that has anything other than an Atmel chip inside.

FPGA tools are also free, as long as you design for the smaller chips. And if you can afford the big FPGAs, you can probably afford to buy the tool.

The price does not matter; it is all about freedom, privacy, reliability, independence, sustainability… It is the same as with free software (or “open source” if you wish): we don’t use it because it is cheaper. Price is not the main reason or the motivation.

Free is not (only) about money. It’s about understanding and being able to fix your toolchain. It’s about who controls your critical infrastructure: your vendor, or you.

Free is also about you coming up with elegant hacks your vendor has never thought of.

In the end, it tends to benefit all.

Sure, it’s nice for the user, but there’s not much benefit for the FPGA vendor to open up the tools. And there’s not a big disadvantage of charging money.

I totally disagree lol. The hardest part of getting into FPGAs is not Verilog or VHDL; it’s learning how to drive the IDE / ISE or whatever the manufacturer provides. I downloaded the Xilinx ISE, which took 3 days over my connection. Then it took a week to register it, because the registration process failed the first time and I had to wait for them to answer an email, which they didn’t, so I created another profile and started over. Then the downloaded ISE worked for about a week and stopped working (it couldn’t see the hardware programming device). It took me two weeks to work out how to fix this problem, so I made a batch file to fix it in future. And that’s just downloading and installing.

Stage 2: The ISE has so many icons that I call it Brainfu(k. I am not game enough to explore what they do, because I worry that clicking one may ‘break’ the ISE and take me weeks to fix. It does ten thousand things that I will never want. It has no easy mode. The code editor is nothing more than the most basic text highlighter, covering only some keywords. The error messages all come from random parts of the tool chain, so they have no context and therefore no meaning. They might as well just be error numbers. It generates so many cryptic warnings, and if you Google them you find the general consensus is to ignore Xilinx warning messages because no one actually has any clue what they mean.

The Xilinx tool chain itself is absolutely perfect, with optimisation down to the gate level. The user interface… well, I call it Brainfu(k after this: http://en.wikipedia.org/wiki/Brainfuck

I have also used the Altera IDE and so far I am OK with it, except that I can’t use files for hardware constraints. To be fair, I have only just started with the Altera IDE; the worst may be yet to come, or it may be fine.

Why do people use the vendor development environments? Because they have absolutely no choice! If you had a choice, there is no way you would choose them in a million years. If you did try one, you would end up killing it with a wooden stake and laying it bare in a room full of mirrors, open to sunlight and covered in religious crosses.

Why are the vendor FPGA IDEs such an absolute pain in the ass? And I really mean a pain in the ass: if you gave me a million dollars to make a system that was even more of a pain in the ass, I would tell you that a million dollars is not enough. It’s because they have NO competition: the tool chains are closed source.

Now, by contrast, look at the microcontroller world. PIC took off when there were non-vendor programmers and educational tools available. We have a gazillion young people who have cut their teeth on Atmel AVRs in the Arduino environment.

As far as I am concerned, this (an open-source tool chain) couldn’t have happened soon enough. It will remove so many barriers to entry for FPGAs / CPLDs.

I have no affiliation with Xilinx, Altera, Microchip or Atmel.

Actually, your complaint is with the IDE, not the tool chain. Free software can have terrible IDEs too.

There is paid third-party software that provides the IDE and synthesis, then calls the Xilinx/Altera/Lattice etc. tools to generate the bitstream. Most of the back-end stuff is command-line tools called by scripts. I am sure that if you look far enough, you could use a makefile or script to drive the build process.

According to the annual reports of both companies, Microchip’s sales were 50% higher than Atmel’s. Arduino sales are likely just a blip on the Atmel sales chart.

FWIW, Microchip’s largest division is actually their Analog group. PIC is a smaller division for them than their Analog product lines.

Okay, Atmel Studio was free long, long before anyone had thought of Arduino… I don’t know how it was with the PIC C compiler, but the PIC assembler was free for all PICs. Those were the times when everyone coded microcontrollers in ASM. For a long time I didn’t realize there was a PIC that could run C code at a usable speed.

I’m not ready to write off Microchip just yet. There are good reasons you find so many PICs in fault-tolerant and robust embedded applications. The toolchain they promote isn’t one of those reasons. An open-source toolkit benefits everyone.

Me neither, especially given that they bought Atmel not too long ago.

Silicon labs … Purchased!

Marketing … Purchased!

Engineers … Purchased!

Competition … Purchased!

The problem is that parts of the FPGA toolchain are bought-in from specialist developers, so they wouldn’t be able to give everything away even if they wanted to.

In practice it’s not an issue as all vendors supply free tools for any part likely to be used by anyone on a budget.

It’s just that for someone accustomed to open-source software and toolchains, the platforms these tools run on can be too big a constraint. Usually only Windows PCs, or sometimes x86 Linux PCs, are supported.

Small computers like the Raspberry Pi are capable enough to be used as development platforms for software. RAM permitting, small FPGA designs could conceivably be synthesised on these platforms. Imagine a Pi or similar with an FPGA add-on board that can tweak and synthesise its own design when needed, perhaps reacting to changes in its environment, maybe deployed somewhere difficult to reach.

Yes! A Raspberry Pi hat with an iCE40HX8K is already in the works. The open-source tool chain will run on the Pi and be able to generate new designs.

Correction – there are *two* such hats in development: The , and the [CAT board](https://hackaday.io/project/7982-cat-board).

The first has an SRAM onboard, the second a much larger SDRAM – this makes them both good for different applications.

The first is better for a small embedded control system (SRAM has minimum latency, good for implementing a CPU on the FPGA), and the second would be better for streaming acquired data, perhaps to the Pi or out to a USB 2.0 FIFO like an FX2. (You could wire a bunch of fast ADCs into the CAT board, then sustain ADC capture streaming at up to 40 MB/s total into an Allwinner A10 SoC, presuming you had a sufficiently large SATA drive plugged in to save it onto…)
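To put the 40 MB/s figure in perspective, here is a quick back-of-envelope sketch in Python. Only the 40 MB/s aggregate rate comes from the comment above; the 2-byte sample width, the 5 MSa/s per-channel rate, the 1 TB drive and the helper names are illustrative assumptions.

```python
def max_channels(link_bytes_per_s: float, sample_rate_hz: float, bytes_per_sample: int) -> int:
    """How many ADC channels fit into the link if every channel streams continuously."""
    per_channel = sample_rate_hz * bytes_per_sample  # bytes per second for one ADC
    return int(link_bytes_per_s // per_channel)


def hours_to_fill(drive_bytes: float, link_bytes_per_s: float) -> float:
    """Hours of continuous capture before the drive is full."""
    return drive_bytes / link_bytes_per_s / 3600.0


if __name__ == "__main__":
    LINK = 40e6  # 40 MB/s aggregate, as quoted above
    # Assumption: 12-bit samples padded to 2 bytes, 5 MSa/s per ADC
    print(max_channels(LINK, 5e6, 2))             # 4
    # Assumption: a 1 TB drive
    print(round(hours_to_fill(1e12, LINK), 2))    # 6.94
```

So under these assumptions the link carries four such ADCs, and a 1 TB drive fills in just under seven hours of continuous capture.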

The FPGA vendors sub-license bits and pieces of the software in their tool chains, e.g. ModelSim. There is an insane amount of patents and bleeding-edge IP in their products, so there are bound to be some legal terms you have to agree to. Xilinx even compiled their tool chain for Linux.

Most users have no quarrel with the free-to-use web tools, as they are more concerned with getting *real* work done or doing cool stuff with their FPGA than wasting time complaining about religion, politics and ideology.

If you can get the open source bit stream to work, good for you.

If the FPGA is being used for security, then having an unauditable blob is often seen as a potential risk.

>unauditable blob

How do you audit the FPGA hardware itself? How do you know the bitstream you load is what is actually configured into the logic?

The same is true of ASICs or even CPUs; it is impossible to define where trust begins. But people have decapped ICs and reverse engineered them, granted, mostly older chips (Silicon Zoo).

The lower you can go the happier people are when it comes to security.

Not to the NSA…

The tool chain isn’t smart enough to detect the intention of your code. Everything about FPGA is painfully low level.

So I don’t expect it to understand at the system level what you are trying to build and insert hidden, NSA-usable back doors into your core. Compiler technology is not there yet.

The kinds of things to worry about are how secure the vendor’s bitstream encryption is. Is there an NSA backdoor key somewhere that lets them decompile your bitstream? Can the NSA intercept your hardware en route from your CM back to you, to insert a backdoor into your chip or replace it with one that has wireless access? Or are there even backdoors/trojans in the tool chain that send your FPGA HDL source code to the mother ship?

But there are hard IP blocks in FPGAs. iCE40 LM/Ultra/UltraLite contain I2C controllers so you don’t have to synthesize one. How do you know they didn’t hide a backdoor in there?

So how exactly would you backdoor an I2C block when you have no idea what it will be talking to? And all its activity is clearly visible on the external I2C lines?

To backdoor the I2C block, I’d add logic to detect a magic sequence on the bus that enables an internal I2C slave that can read the internal state of all cells. Something like JTAG, which these devices lack, without all the security features of bigger FPGAs.
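Here is a toy Python model of the trigger described above, purely to illustrate the idea. The detector keeps the most recent bytes seen on the bus in a fixed-length window (the software analogue of a shift register) and latches an “unlocked” flag once that window matches a secret pattern. The class name and the magic bytes are made up for illustration; nothing here reflects known logic in any iCE40 part. It also shows why bus visibility alone doesn’t settle the question: the trigger bytes do appear on the external lines, but they look like ordinary traffic unless you already know the pattern.

```python
from collections import deque


class MagicSequenceDetector:
    """Hypothetical backdoor trigger: latch once the last len(magic) bus bytes equal magic."""

    def __init__(self, magic: bytes):
        self.magic = tuple(magic)                  # bytes -> tuple of ints
        self.window = deque(maxlen=len(magic))     # oldest byte falls out, like a shift register
        self.unlocked = False

    def observe(self, byte: int) -> bool:
        """Feed one byte seen on the bus; return whether the trigger has fired."""
        self.window.append(byte)
        if tuple(self.window) == self.magic:
            self.unlocked = True                   # latches; never clears in this model
        return self.unlocked


if __name__ == "__main__":
    det = MagicSequenceDetector(b"\xde\xad\xbe\xef")  # hypothetical magic bytes
    for byte in b"\x10\x20\xde\xad\xbe\xef\x00":
        print(hex(byte), det.observe(byte))
```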

You don’t have to use the built-in hard cores for mundane stuff like I2C. The tool doesn’t stop you from implementing your own versions of the cores that you can trust. If you do worry, you should treat the whole chip as an untrusted part; you do have control over what’s coming out of the I/O pins and access to your board. If you worry about an RF bug, use an RF shield over the chip, ferrite beads on the power lines, etc.

Who is to say that the chip someone else decapped is the same die/metal mask revision as yours, or that the guy didn’t work for the NSA? Unless you want to be the type of guy who builds the whole computer from discrete transistors, passives, etc. and shies away from using chips. You can use a curve tracer on the transistors to make sure they are real too.

The NSA is not Santa Claus. They can’t be everywhere (yet). At some point you do have to trust your chip vendors, supply chain, couriers and contract manufacturers.

Author of the place and route tool here. Nobody’s arguing the vendors should open their tools. Most of what you say would apply to compilers, too. But I can confidently say we live in a better world because we have GCC and LLVM and we’re not locked into Intel’s compilers. I use them for *real* work every day.

My comments were directed at [eray]…

Sorry, I misunderstood the comment nesting.

Awesome, I have been waiting for something like this for a long while!

Now the only thing I am still hoping for is VHDL support; otherwise I’ll just have to learn Verilog.

Learn Verilog, it’s far simpler/basic in comparison :-)

VHDL is more like SystemVerilog (which is Verilog with more features). You’ll soon find the things you no longer need to do (e.g. component declarations repeating the entity definition) and loads of features that Verilog doesn’t have (e.g. records).

If you only used std_logic and std_logic_vector ports then it should be quite quick to pick up.

Verilog is an American thing! The rest of the world uses VHDL. While Verilog may seem simpler, it is also more abstract and makes it harder to imagine what your code looks like at the logic level.

This may end up being FPGA’Duino. I hope this goes somewhere. There is a severe lack of development tools for CPLD/FPGA.

Erwha?

VHDL and Verilog are equally non-abstract for synthesizable code. Really, they have to be: synthesizers absolutely stink compared to, say, compilers. There are lots of ways to implement the same logic in different HDL, and you’ll often get different synthesis results.

Verilog’s big advantage over VHDL is that it’s nowhere near as verbose.

VHDL’s big advantage is that you can create complex types and pass them around. (You can’t, for instance, pass a multidimensional array through ports in Verilog).

I agree with all your points, Pat.

Oops, forgot. I found that Verilog looks a little too much like C, which it really is not, and I find it easier to make conceptual mistakes. But those are my mistakes and not the fault of Verilog.

Mention America… in come the torpedoes lol.

You say there are lots of ways to implement HDL at the gate level and you end up with different synthesis. Perhaps this happens to you with Verilog; it doesn’t happen to me with VHDL. I have tested the Xilinx VHDL optimisation and find that it works exceptionally well. I have given it registered logic where there is equivalent combinational logic, and it has optimised out the register. I have given it combinational logic that is specified to glitch due to gate delays, and it has implemented a register or thrown an error.

You say that VHDL is verbose and yes that is true but because it is so verbose more detail is specified and you are less likely to end up with unintended results.

It still remains that Verilog is predominately an American thing but sure I understand the rest of the world has got it wrong.

For what it is worth, my school, UNC Charlotte, teaches VHDL, and the teachers are 50/50 American and foreign. Almost all of them are good teachers, though there are always exceptions.

VHDL and Ada/SPARK/ParaSail all look very similar; conceivably you could standardize hardware and software around these languages, which would be pretty cool.

AFAIK most of the FPGA vendor tools convert the primitives coming off the HDL compilers into a bitstream. Primitives are sort of like the intermediate form in a software compiler, e.g. I/O cells, logic blocks, memory etc. The choice of HDL shouldn’t matter, as the HDL compiler will already have translated it. This is also why you can mix HDLs in an FPGA project.

It’s not mentioned in the article, but the author of the place and route tool linked above is Cotton Seed. (Yes, that’s me.)

Nice work! Do you have background in writing logic synthesis tools, or is this just a super-human hobby for you?

And more importantly, can you write a verilog synthesis tool that will use 74xx and 4000 series TTL logic chips instead of macro-cells and generate an optimized KiCAD schematic and board layout for a given Verilog RTL design? My vintage computing friends would love you long time and I’m pretty sure you would make HaD again!

You would need a page the size of a football field to print it out. Mid-range FPGAs would be equivalent to tens of thousands of 74xx logic chips.
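A rough sanity check of that claim, as a Python sketch. It assumes (these are my assumptions, not the commenter’s) that each 4-input LUT maps onto one 16-to-1 data selector such as a 74150, with the truth table wired (inverted, since the 74150 output is complemented) to its data inputs, and that flip-flops come two per package as in a 7474. Routing, carry chains, block RAM and I/O are ignored, so the result is a lower bound. The 7,680 logic cells are the iCE40HX8K’s count; the function name is hypothetical.

```python
import math


def ttl_chip_estimate(logic_cells: int, ffs_per_chip: int = 2, lut_chips_per_cell: int = 1) -> int:
    """Lower-bound 74xx package count for a LUT4 + flip-flop logic-cell fabric."""
    lut_chips = logic_cells * lut_chips_per_cell       # one 16:1 selector per LUT4
    ff_chips = math.ceil(logic_cells / ffs_per_chip)   # dual D flip-flop packages
    return lut_chips + ff_chips


if __name__ == "__main__":
    print(ttl_chip_estimate(7680))  # 11520 for an iCE40HX8K-sized design
```

Even the small HX8K comes out above ten thousand packages by this crude measure, and the count grows linearly with the number of logic cells.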

This might be useful for CPLDs. Even FPGA IDEs allow schematic entry, but who has the patience to draw a schematic with a hundred thousand gates?

Thanks! I have a background in compiler design, but this is my first attempt at hardware tools. Lots of fun! I hope I will have time to do more.

In the end, the tool was pretty straightforward. I learned most of what I needed from this excellent VLSI course on Coursera:

https://www.coursera.org/course/vlsicad

I definitely recommend it if you want to get involved. (For example, writing an analytic placer…)

The article doesn’t mention it, but the author of the place and route tool linked above, arachne-pnr, is Cotton Seed. (That’s me.)

The article does not mention it but it’s actually me.

Could not resist :)

Am I the only one here that has heard of MyHDL?

No, but you are the only one who thinks it’s worth mentioning. It’s more a rapid prototyping tool that still translates into Verilog or VHDL on the back end (because that’s what everything else eats). In theory you could use it here, fronting this process. But the merits of which HDL you use are not really relevant to the discussion of an open-source HDL-to-bitstream process. We can start another tangent like the Atmel vs Microchip debate above, but let’s call it Verilog vs VHDL vs MyHDL vs others.

Hit the button a little left and below this to start…

Huge accomplishment, guys, regardless of the performance and optimization of the results. Since most of the guys who built it are on here: how does what you’ve built compare with, say, the Lattice tools in logic footprint and execution? Have you run any metrics on, say, a CPU core or some other high-functioning, complex design unit?

Also curious: what do you do with the prepackaged, presynthesized cores the vendor provides encrypted, i.e. the ones you can’t inspect as plain text? Suppose you just can’t use them, eh?

Thanks! Unfortunately, benchmarking is prohibited by the Lattice EULA. This is our first release and there is still lots of room for improvement. I doubt we can do as well as the Lattice tools at this point, although I hope that will change.

I haven’t looked at presynthesized IP. It wouldn’t be hard to add a similar feature, but it would probably be a whole other reverse engineering job to support their cores.

… prohibited from benchmarking? That’s a really ridiculous EULA provision.

I wonder how the world can accept that these things are legal.

“If you buy this product from us, you agree to be banned from assessing how well it works.”

That’s basically their EULA, isn’t it?

Wow. That’s incredibly cool. Nice work.

This is awesome news! Too bad the shipping costs for the development board are over $60 (shipping to the Netherlands) :( Otherwise I would have bought it and played with the open-source tools immediately!

Have you tried Farnell/element14? I’m sure the shipping would be cheaper from within Europe.

Or http://nl.rs-online.com/web/

Mouser has free shipping to the Netherlands on orders over 65 Euros.

Imagine a day when you can write just some VHDL code and a makefile… No 10 GB IDEs, just a simple toolchain.

Well, that day has come for Verilog. I must say it is nice to call make and have hardware running in a couple minutes, and it’s also nice to be able to do that under Mac OS X as well. (for which NO vendor provides an FPGA toolchain. But icestorm / arachne-pnr / yosys works!)
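For the curious, the steps behind that `make` call can be sketched as a small Python driver. It assumes the command-line usage documented for Project IceStorm at the time: Yosys with `synth_ice40 -blif`, then `arachne-pnr -d <device> -p <pcf>`, `icepack` and `iceprog`. The file names, the `1k` device default and the function names are illustrative. With `dry_run=True` it only prints the commands.

```python
import shlex
import subprocess


def icestorm_commands(top: str, pcf: str, device: str = "1k") -> list[list[str]]:
    """Return the synth -> place-and-route -> pack -> program steps for <top>.v."""
    blif, asc, bitfile = f"{top}.blif", f"{top}.asc", f"{top}.bin"
    return [
        # Synthesize Verilog to a BLIF netlist with Yosys' iCE40 synthesis script
        ["yosys", "-q", "-p", f"synth_ice40 -blif {blif}", f"{top}.v"],
        # Place and route for the chosen device, using the pin constraints file
        ["arachne-pnr", "-d", device, "-p", pcf, "-o", asc, blif],
        # Convert the ASCII bitstream description into a binary bitstream
        ["icepack", asc, bitfile],
        # Program the board
        ["iceprog", bitfile],
    ]


def build(top: str, pcf: str, device: str = "1k", dry_run: bool = True) -> None:
    """Print each step (shell-quoted); also run it unless dry_run is set."""
    for cmd in icestorm_commands(top, pcf, device):
        print(shlex.join(cmd))
        if not dry_run:
            # check=True raises CalledProcessError, stopping at the first failing step
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    build("blinky", "blinky.pcf")  # hypothetical design and constraints file
```

A makefile expresses the same chain as file-to-file rules, so only the steps whose inputs changed get rerun; the script above simply reruns everything.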

Right now, in the case of Xilinx, it is about 17 GB (unpacked), of which only 0.000000001% is a really usable toolchain and all the rest is *bloatware crap*.

It was the reason I abandoned FPGAs altogether when my hard drive died; I just didn’t have the patience to download this bloatware again.

Try the Altera IDE. It’s still a bit large but at least you don’t have to offer your genitals on a silver platter to get a registration key.