Around the Hackaday secret bunker, we’ve been talking quite a bit about machine learning and neural networks. There’s been a lot of renewed interest in the topic recently because of the success of TensorFlow. If you are adept at Python and remember your high school algebra, you might enjoy [Oliver Holloway’s] tutorial on getting started with TensorFlow in Python.

[Oliver] gives links on how to do the setup with notes on Python versions. Then he shows some basic setup operations. From there, he has the software “learn” how to classify random points that either fall into a circle or don’t. Granted, this is easy enough to do with traditional programming, so it isn’t a great practical example, but it is illustrative for learning purposes.

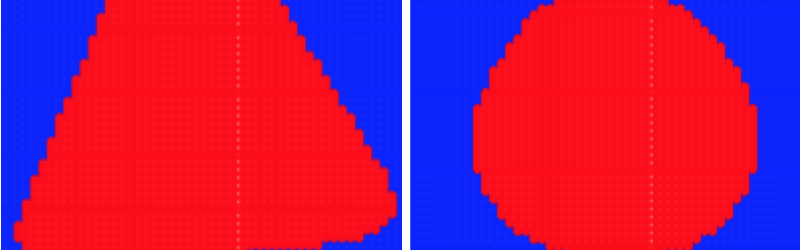

Given that it is easy to algorithmically decide which points are in the circle and which are not, it is simple to develop training data. It is also easy to look at the result and see how close it is to the actual circle. You’ll see that it takes a lot of slow learning before the result space looks like a circle and not a triangle or some other odd shape.
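To make the training-data idea concrete, here is a minimal sketch in plain Python. It is not [Oliver]’s code: the function names, the unit radius, the sample count, and the diamond-shaped stand-in for a half-trained model are all assumptions chosen for illustration. It labels random points by testing them against the circle equation, then scores a predictor against those labels.

```python
import random


def make_circle_data(n, radius=1.0, seed=0):
    """Return n (x, y, label) samples; label is 1 inside the circle, else 0.

    Hypothetical helper for illustration, not taken from the tutorial.
    """
    rng = random.Random(seed)
    samples = []
    for _ in range(n):
        # Draw points uniformly from the square that encloses the circle.
        x = rng.uniform(-radius, radius)
        y = rng.uniform(-radius, radius)
        # The "traditional programming" answer: compare against x^2 + y^2 = r^2.
        label = 1 if x * x + y * y <= radius * radius else 0
        samples.append((x, y, label))
    return samples


def accuracy(predict, samples):
    """Fraction of samples where predict(x, y) matches the true label."""
    hits = sum(1 for x, y, label in samples if predict(x, y) == label)
    return hits / len(samples)


def diamond(x, y):
    """Crude stand-in for a partly trained model: a diamond inside the circle."""
    return 1 if abs(x) + abs(y) <= 1.0 else 0


data = make_circle_data(1000)

# About pi/4 of the points should land inside the circle.
inside_fraction = sum(label for _, _, label in data) / len(data)

# The diamond misses the four slivers between its edges and the circle.
diamond_score = accuracy(diamond, data)

print(f"inside: {inside_fraction:.3f}, diamond accuracy: {diamond_score:.3f}")
```

Swapping `diamond` for a trained model’s prediction function gives a quick numeric check to go with eyeballing the plot.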

When we discuss neural networks, this is always the hitch. In a broad sense, there is no problem solvable with a neural network that isn’t solvable using traditional techniques. But for hard problems, it can be difficult to figure out how to apply those traditional techniques. For example, speech recognition without machine learning is possible, but a trained network generally recognizes speech better, and it gets there through training rather than hand-tuning. The upside is that the neural network is effective and takes less effort than developing heuristics manually, while the downside is that you aren’t really in control of what the code is doing.

The question that always comes up is how similar neural networks are to our brains. Most of us would say not very much, and now there is some evidence that your brain is using a mechanism closer to that of a quantum computer. One thing seems clear, though. If you build a computer that thinks like a human, it will probably have all the same flaws that human thinkers have. This may cause a problem, despite making good Star Trek episodes.

We’ve published our own getting started guide, but you never know which one will give you that “Aha!” moment. If you want the quickest possible introduction, be prepared to spend ten minutes.
