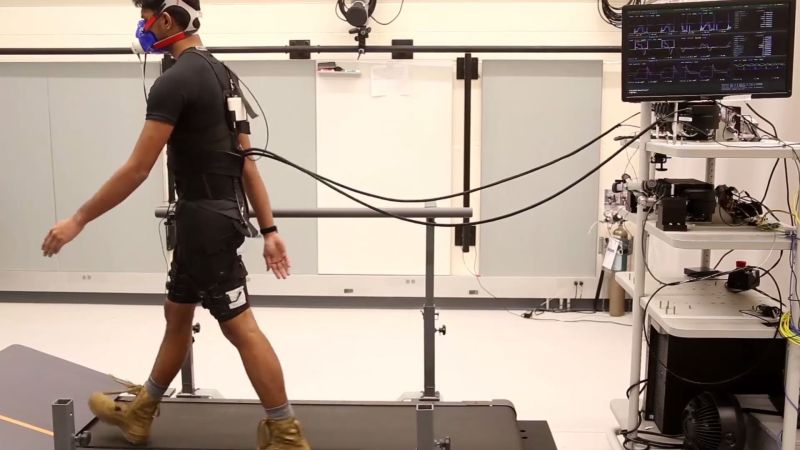

Wearables and robots don’t often intersect, because most robots rely on rigid bodies and rigid programming, and we rely on neither. Exoskeletons are one place where robots interact directly with our bodies, and a soft exosuit comes even closer to our physiology. Likewise, machine learning is closer to our minds than a simple state machine. For the Harvard Biodesign Lab and Agile Robotics Lab, combining machine learning software with a soft exosuit is a match made in heaven.

The machine learning algorithm studies a walker’s steady gait for twenty periods while vitals are monitored to assess how much energy is being expended. Once that observation phase is over, the trained system switches from assessing to assisting. This type of personalization has been done in the past, but the addition of machine learning shows that the necessary customization can be programmed into each machine without a team of humans.

Exoskeletons are no strangers to these pages: our 2017 Hackaday Prize gave $1000 to an open-source set of robotic legs, and we reported on an exoskeleton to keep seniors safe.

I worked on a program for DARPA that funded some of the Harvard work. It is amazing how hard it is to figure out how to assist different physiologies, gaits, and so on. It’s very possible to make a suit that helps one person and really hinders another…which is what most of the other performers ended up doing on this program. As far as I know, the Harvard group was the only moderately successful group.

This is super cool. If it learns well enough from its intended user, it would feel a bit like a brain-machine interface, yet it probably wouldn’t be useful in the hands of a different user. Sounds like something out of a science fiction novel.