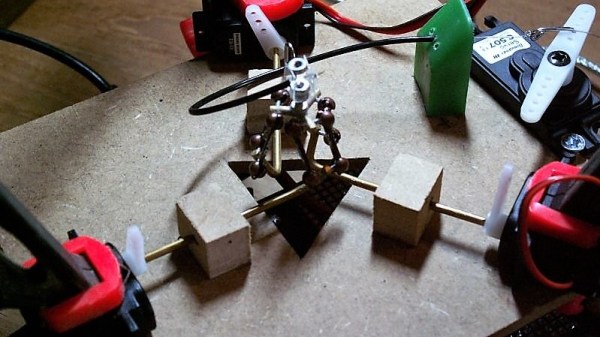

Over the years there have been many designs for pan-and-tilt camera mounts suitable for single board computer cameras. Often they use small servos for the movement, but those in turn present problems when the device finds its way outdoors. [GOAT Industries] is here with a novel solution to this problem: instead of trying to cover up the servos on the mount itself, the whole thing is remotely controlled by linear actuators through Bowden cables.

Testing was performed using Mole-Grips in place of actuators, and revealed a few design quirks. There are hefty springs to provide tension, and since they work against 3D-printed assemblies, those in turn have to be reinforced. The layout of the Bowden cable run is also important, as it has a bearing on the amount of springiness in the system. But the result is a versatile pan-and-tilt mount for a Pi camera housed in an IP-rated box, which is the object of the exercise.

For anyone wishing to build one, the files can be found in a GitHub repository, and there’s a video below showing the device in action. It’s by no means the first pan-and-tilt head we’ve seen here at Hackaday, though many others are by necessity much more substantial affairs.
