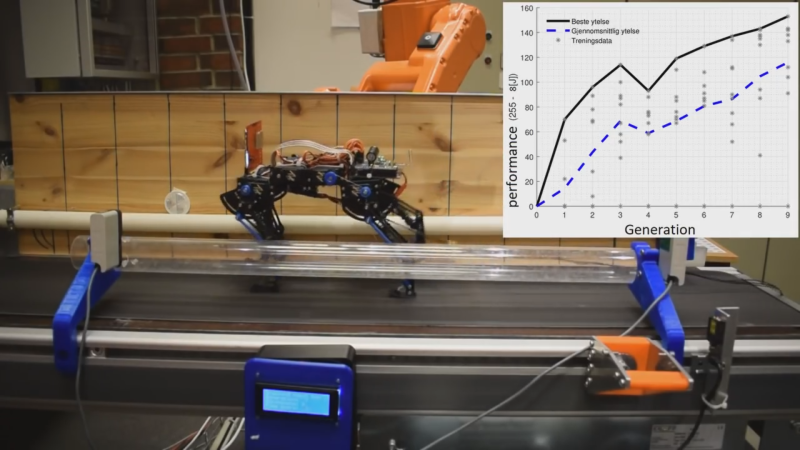

Most humans take a year to learn their first steps, and they are notoriously clumsy. [Hartvik Line] taught a robotic cat to walk [YouTube link] in less time, but this cat had a couple advantages over a pre-toddler. The first advantage was that it had four legs, while the second came from a machine learning technique called genetic algorithms that surpassed human fine-tuning in two hours. That’s a pretty good benchmark.

The robot itself is an impressive piece inspired by robots at EPFL, a research institute in Switzerland. All that Swiss engineering is not easy for one person to program, much less a student, but that is exactly what happened. “Nixie,” as she is called, is part of [Hartvik]’s master’s thesis at the University of Stavanger in Norway. Machine learning outstripped human meddling very quickly, and the robot can even relearn to walk if the chassis is damaged.

We have been watching genetic algorithm programming for more than half a decade, and Skynet hasn’t popped forth. However, we do have a robot kitty taking its first steps.

I made a robot that learned to balance once.

Not a good one, but nevertheless.

https://youtu.be/RD2ZHtSvuco

clearly needs more Kalman ;)

Thus proving the internet is actually all about cats.

Genetic algorithms can show impressive initial growth, but in my experience this tapers off pretty soon. The lack of short-term “code” (the inability to plan or experiment within a generation) limits the ultimate performance. All behaviour is genetically encoded, which limits adaptability within a generation. That being said, a genetic algorithm of ideas may allow a system to “imagine” (simulate) the various outcomes and choose the best genetically designed action on a one-off basis.

Back in the 90s I wanted to create a swarm of rudimentary bugbots to experiment with genetic algorithms. The platforms were to be cheap: 2 simple eyes, 2 motors and 2 wheels, with periodic pools of light as food. A simulation seemed a sensible idea, as volumes and generations come at little cost. Initial results were much better than expected, and within 6 generations 2 distinct species had formed: one that ambushed the food, waiting for a newly dropped supply close by, and another that madly chased any source. Although the results were surprising, subsequent generations did not show significant improvement. Perhaps this was the optimum output for such limited I/O granularity and environmental simplicity.

GAs are just another method of maximization — one that’s more resistant to falling for local maxima than more naive ways. In GAs and similar optimization schemes, you’ve got a simple maximizer competing with a perturber: in GA the “mutations”, in simulated annealing the “temperature”, etc.

More or bigger perturbations are less likely to get stuck in local optima, but can be slower to converge to the best solution, and vice versa. Picking the perturbations “optimally” depends on the topology of your objective function. Got lots of peaks, but only one tallest one? You’ll need to perturb a lot to avoid getting stuck on the first mountain you find.
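To make that trade-off concrete, here is a minimal Python sketch, not anything from the Nixie project. The bumpy one-dimensional `fitness` function, the `evolve` helper, and every parameter value are made up for illustration. It pairs a simple maximizer (keep the fitter half) with a perturber (Gaussian mutation) and starts the whole population on a small hill far from the tallest peak.

```python
import math
import random


def fitness(x):
    # Hypothetical objective: many local peaks, with the tallest one near x = 0.
    return math.cos(3.0 * x) - 0.1 * x * x


def evolve(mutation_scale, generations=200, pop_size=30, seed=0):
    """Toy GA with truncation selection and Gaussian mutation (illustrative only)."""
    rng = random.Random(seed)
    # Start everyone on a local hill near x = 6, far from the global peak.
    population = [rng.uniform(5.5, 6.5) for _ in range(pop_size)]
    for _ in range(generations):
        # Maximizer: sort by fitness and keep the better half.
        population.sort(key=fitness, reverse=True)
        survivors = population[: pop_size // 2]
        # Perturber: every survivor produces one randomly mutated child.
        children = [x + rng.gauss(0.0, mutation_scale) for x in survivors]
        population = survivors + children
    return max(population, key=fitness)


if __name__ == "__main__":
    for scale in (0.01, 1.0):
        best = evolve(scale)
        print(f"mutation_scale={scale}: best x={best:.3f}, fitness={fitness(best):.3f}")
```

With the tiny mutation scale the population should only polish the hill it started on, while the larger scale lets children jump across the valleys toward the tallest peak, at the price of a noisier final answer.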

It sounds to me like you weren’t mutating enough. Or maybe, as you say, the environment was just too simple.

Optimization is one of the oldest/most useful math problems. Funny that it’s still so hard.

“Optimization is one of the oldest/most useful math problems. Funny that it’s still so hard.”

Because it’s different things, to different problems.

Can’t wait to see it chasing a laser dot on the floor. :)

We made ours chase a ball:)

https://www.youtube.com/watch?v=qymL_XMXIic

The ending of the video is terrible!

Yeah, it kind of falls in the category of “why the machines will rebel and destroy all humans”.