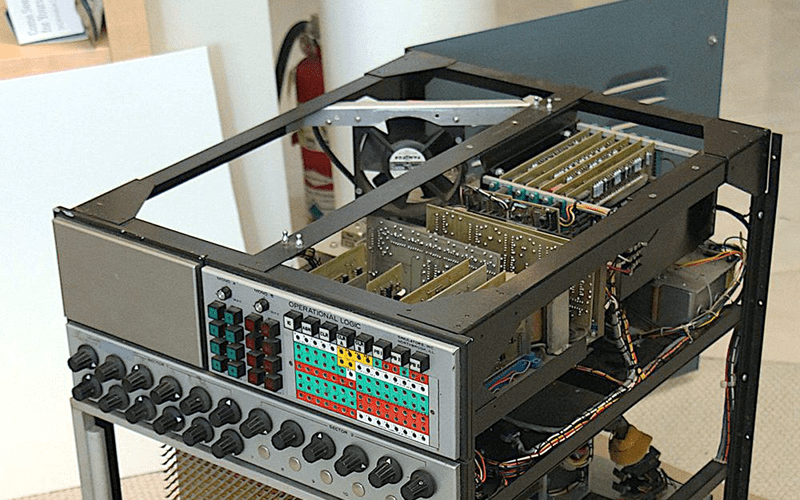

Today, most of what we think of as a computer uses digital technology. But that wasn’t always the case. From slide rules to mechanical fire solution computers to electronic analog computers, there have been plenty of computers that don’t work on 1s and 0s, but on analog quantities such as angle or voltage. [Ken Shirriff] is working to restore an analog computer from around 1969 provided by [CuriousMarc]. He’ll probably write a few posts, but this month’s post focuses on the op-amps.

For an electronic analog computer, the op-amp was the main processing element. Feed multiple voltages into one op-amp and you get addition, and setting the gain gives you multiplication by a constant. If you add a capacitor, you can do integration. But there’s a problem.
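To make the addition and integration idea concrete, here is a minimal Python sketch of an ideal inverting summer and an ideal inverting integrator. It is not taken from the computer being restored: the function names, resistor and capacitor values, and the forward Euler time step are all assumptions chosen for illustration, and a real op-amp adds the offset and drift discussed next.

```python
def summer(inputs, r_in, r_f):
    """Ideal inverting summing amplifier: Vout = -sum(Rf / Ri * Vi).

    inputs: input voltages (hypothetical values)
    r_in:   one input resistor per voltage, in ohms
    r_f:    feedback resistor, in ohms
    """
    return -sum(r_f / r * v for v, r in zip(inputs, r_in))


def integrate(v_in, dt, r, c, v0=0.0):
    """Ideal inverting integrator: dVout/dt = -Vin / (R * C).

    Stepped with forward Euler; returns the output after each sample.
    """
    out = []
    v = v0
    for x in v_in:
        v -= x * dt / (r * c)
        out.append(v)
    return out


if __name__ == "__main__":
    # Equal 10k resistors: the output is the negated sum of the inputs.
    print(summer([1.0, 2.0], [10e3, 10e3], 10e3))
    # 1 V held for 1 s with R*C = 1 s ramps the output toward -1 V.
    ramp = integrate([1.0] * 1000, dt=1e-3, r=1e6, c=1e-6)
    print(ramp[-1])
```

Both stages invert, which is why analog computer patches often include an extra inverter to restore the sign.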

A typical op-amp is fine for the kinds of things we normally ask of it. For example, amplifying audio is a great use of an op-amp. If the performance isn’t so good at DC or a few Hertz, no one will really mind much. But in an analog computer, low frequency or even DC signals are important and any offset, drift, or gain irregularities will affect your calculation.

One solution, the one used in this computer, is to incorporate choppers. A chopper essentially switches the input at a fixed rate, producing an AC carrier whose amplitude follows the input signal. The op-amp can now perform its task as an AC amplifier rather than a DC one, making it much more stable. At the end, another chopper reconstitutes the signal. While the analog computer in question did use ICs for the core op-amps, the choppers and other circuitry to make them work well took up an entire board.
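A rough numerical sketch shows why chopping helps. This is a generic model of the technique, not the circuit on the board in question: the amplifier is assumed to be a pure gain with a fixed input offset, the chopper is a ±1 square wave, and the output filter is modeled as a plain average over whole chop periods. All names and values are hypothetical.

```python
def direct_amplify(v_in, gain, offset):
    """DC amplifier: the input offset is amplified along with the signal."""
    return gain * (v_in + offset)


def chopped_amplify(v_in, gain, offset, periods=100, samples_per_half=8):
    """Chop, amplify, un-chop, then low-pass (modeled as an average)."""
    total = 0.0
    count = 0
    for _ in range(periods):
        for carrier in (+1.0, -1.0):
            for _ in range(samples_per_half):
                modulated = v_in * carrier               # input chopper
                amplified = gain * (modulated + offset)  # offset is added here
                total += amplified * carrier             # output chopper
                count += 1
    # The signal term comes back as gain * v_in, while the offset term is
    # left at the chop frequency and averages to zero over whole periods.
    return total / count


if __name__ == "__main__":
    v_in, gain, offset = 0.001, 1000.0, 0.005  # 1 mV signal, 5 mV offset
    print(direct_amplify(v_in, gain, offset))   # signal plus amplified offset
    print(chopped_amplify(v_in, gain, offset))  # close to gain * v_in
```

With these assumed numbers the direct path is dominated by the offset, while the chopped path recovers roughly `gain * v_in`.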

Programming is all done with patch cables. If you’d like to see more about how these worked, we talked about them earlier this year. We have to wonder, if digital computers didn’t work out, what the state of the art would be today in analog computing. After all, we had an analog laptop in 1959. Sort of.

I remember reading about chopper amplifiers way back in Circuit Cellar, Don Lancaster column. I don’t remember ever using one, but the technique reminds me of using an NCO in a digital receiver with a small frequency offset to get rid of the nasty stuff around DC (flicker noise, phase noise, strong interference). Wonder if the future will see a trend back toward analog computers with some kind of biological hybrid technique for that really deep thought?

The future will be better recognition of what’s already present: analog and digital work well together.

In fact, each is strong where the other is weak: digital is good at arbitrary pattern recognition and generation, time delay, and simple math (dividing by a time-varying value is surprisingly difficult, regardless of the technology used). Digital is also massively reconfigurable. Analog is good at handling simultaneous input and concurrency, has huge dynamic range, solves differential and transcendental equations that digital can only do with successive approximation, is fast, and is lightweight.

Systems that combine analog and digital, playing to both of their strengths, can do things that would be hard to accomplish with a pure-analog or pure-digital approach.

We’ll probably see more evolution of techniques for passing information between analog and digital systems. There are some interesting design challenges there. ADC/DACs are complex, and pulse/frequency methods are relatively slow.

It’s just analog digits.

It is an analog to a digital.

Digital didn’t produce an analog to this, but if it did, its logo would have been a square sign.

“there have been plenty of computers that don’t work on 1s and 0s, but on analog quantities such as angle or voltage”

And before that computers were wetware; “computer” was a job title. I’ve known such computers, and some are still alive today :)

One of the most interesting books I own is “Introductory Operational Amplifiers and Linear ICs” by Coughlin and Villanucci. It does a really good job of explaining the theory behind op amps as well as going into using them as analog computing elements. Fascinating book.

I think I’ve got the fourth addition floating around somewhere. Granted it’s all well and truly ingrained in my mind these days but I seem to remember it being a pretty good reference.

^^^ edition [ curse autocorrect ]

Always looking for more references like this! Can you confirm the name? I’m finding “Operational Amplifiers and Linear Integrated Circuits” by Coughlin & Driscoll

thanks!

oh nevermind, think I found it.

Hackaday had an interesting article last year on chopper op amps: https://hackaday.com/2018/02/27/chopper-and-chopper-stabilised-amplifiers-what-are-they-all-about-then/

https://phys.org/news/2018-06-future-ai-hardware-based-analog.html

“The future of AI needs hardware accelerators based on analog memory devices”

>Analog techniques, involving continuously variable signals rather than binary 0s and 1s, have inherent limits on their precision—which is why modern computers are generally digital computers. However, AI researchers have begun to realize that their DNN models still work well even when digital precision is reduced to levels that would be far too low for almost any other computer application. Thus, for DNNs, it’s possible that maybe analog computation could also work.

See, e.g. TLC2654 (ACTIVE) Low-Noise Chopper-Stabilized Operational Amplifier

still available. have a ball.