The rapidly improving speed and versatility of digital computers have mostly driven analogue computers out of use in modern systems, as has the relative difficulty of programming an analogue computer. There is a kind of art, though, in weaving together a series of op-amps to perform mathematical calculations; between this, a historical interest in the machines, and their rarity value, it’s no wonder that new analogue computers are being designed even now, such as [Markus Bindhammer]’s system.

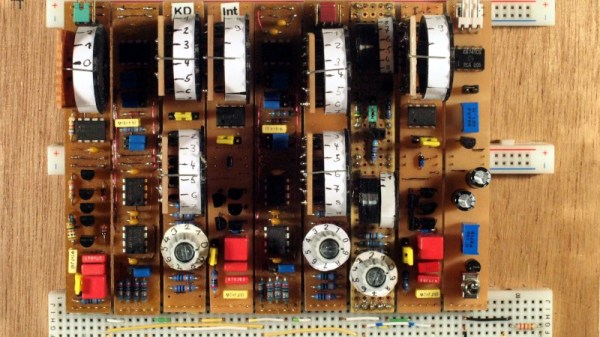

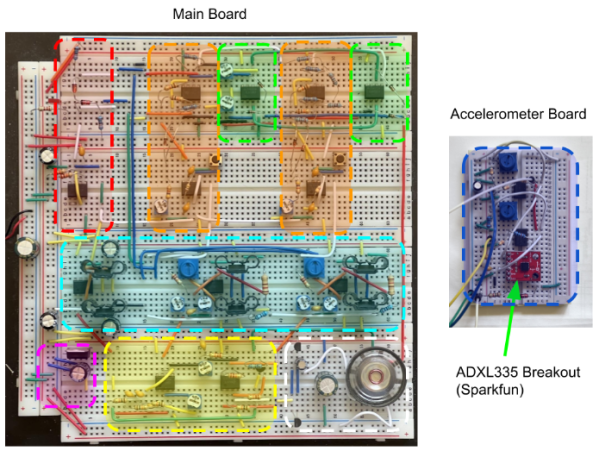

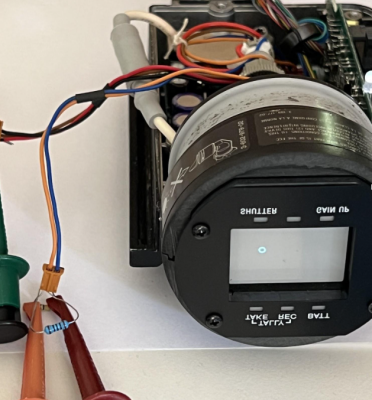

The computer is built around a combined circuit board and patch panel, based on the designs included in three papers in an online library of analogue computer references. The housing around the patch panel took design cues from the Polish AKAT-1 analogue computer, including the two dial voltage indicators and an oscilloscope display, in this case an inexpensive DSO-138. The patch panel uses banana connectors and the jumper wires use stackable connectors, so several wires can be connected to the same socket.

The computer itself has a summing amplifier circuit, a multiplier circuit, an integrator, and square, triangle, and sine wave generators. This small set of building blocks is enough to handle both simple and complex math; for example, [Markus] squared five volts with the multiplier, resulting in 2.5 volts (the multiplier divides the result by ten). A more advanced example is a leaky-integrator model of a neuron, in which the computer solves a differential equation.
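For readers who would rather poke at the math digitally first, here is a rough Python sketch of both examples. The divide-by-ten scaling comes from the description above; everything else is an assumption made for illustration, including the function names, the textbook leaky-integrator form τ·dV/dt = −V + V_in, the time constant, and the step size. None of it is taken from [Markus]’s actual circuit values.

```python
def multiply(x_volts, y_volts, scale=10.0):
    """Idealized analogue multiplier: output is x * y / scale, in volts."""
    return x_volts * y_volts / scale


def leaky_integrator(input_volts, tau=0.01, dt=1e-4, steps=1000, v0=0.0):
    """Forward-Euler solution of tau * dV/dt = -V + input_volts.

    tau, dt, steps, and v0 are illustrative assumptions, not measured values.
    Returns the list of voltages after each time step.
    """
    v = v0
    trace = []
    for _ in range(steps):
        # The "leak" pulls V toward zero, the input pushes it up.
        v += dt * (-v + input_volts) / tau
        trace.append(v)
    return trace


# Squaring five volts: both multiplier inputs are tied to the same signal.
squared = multiply(5.0, 5.0)

# Step response of the leaky integrator to a constant 1 V input.
trace = leaky_integrator(1.0)

print(f"5 V squared by the multiplier: {squared} V")
print(f"Integrator output after one time constant: {trace[99]:.3f} V")
print(f"Integrator output after ten time constants: {trace[-1]:.3f} V")
```

The multiplier line reproduces the 2.5 volt result mentioned above, and the integrator trace rises toward the input voltage along the familiar exponential curve. On the analogue machine the same equation is solved continuously by the integrator and summing amplifier, with no time steps at all.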

We’ve covered a few analogue computers before, as well as a neuron-simulating circuit similar to [Markus]’s demonstration.