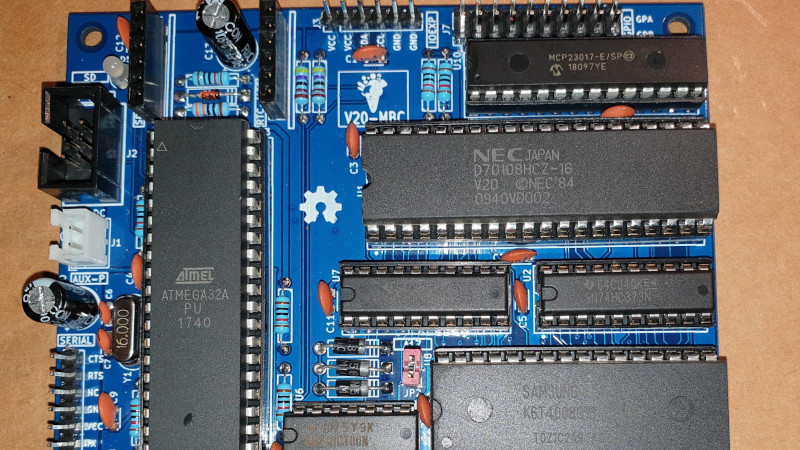

In the days when the best an impoverished student could hope to find in the way of computing was a cast-off 1980s PC clone, one upgrade was to fit an NEC V20 or V30 processor in place of the Intel 8088 or 8086. Whether it offered more than a marginal advantage is debatable, but it’s likely that one of the chip’s features would never have been used. These chips not only supported the 8086 instruction set, but also offered a compatibility mode with the older 8080 processor. It’s a feature that [Just4Fun] has taken advantage of with the V20-MBC, a single board computer that can run both CP/M-86 and CP/M-80.

If this is starting to look a little familiar then it’s because we’ve featured a number of [Just4Fun]’s boards before. The Z80-MBC2 uses the same form factor, and like this V20 version, it has one of the larger ATMega chips taking the place of the acres of 74 chips that would no doubt have performed all the glue logic tasks of the same machine had it been built in the early 1980s. There is a video of the board in action that we’ve placed below the break, showing CP/M in ’80, ’86, and even ’80 emulated in ’86 modes.

The only time a V20 has made it here before, it was in the much more conventional home of a home-made PC.

If you think it took “acres of 74 chips” to make a Z-80 system, you never made a Z-80 system. Most of the chips on early-80s Z-80 systems were there for supporting things like a CRT display and a disk drive. The Z-80 family, like the 6500 family, included dedicated chips for serial and parallel I/O, and for multiple clock/timers, but for most parallel devices, you could cut way down on the board real estate by using 74LS series latches and bidirectional drivers, and MAYBE three or four small chips to do chip enables to implement I/O and memory maps.

Intel/AMD had made an 8155 – 256 bytes of RAM, GPIO port and a timer on a 40-pin chip. This simplified what was needed to build an SBC back in the day.

Nat-Semi had something similar, I can’t remember the exact details off-hand – it was nearly forty years ago :) But I think it had two 8bit ports and 128bytes of static ram in a forty pin package.

I guess that it refers to 8088/8086 machines, not Z-80

The 8088 and 8086 had similar support devices. The 8088 was specifically designed to be compatible with most of the Z80 compatible devices available at the time.

“These chips not only supported the 8086 instruction set, but also offered a compatibility mode with the older 8080 processor.”

Z-80. You got it right everywhere else in the text.

But do these chips copy the bugs in the original Z-80? There’s a retro tech article for you. The original Z-80 had some bugs and some software was written to exploit them. Then Zilog decided they’d fix things and produce a new Z-80. There was hollering and complaining because some of the software that relied on those bugs was something expensive. So Zilog spun another version with the bugs restored, and documented.

I had a PCjr with a V20. I ran 22-nice to activate the Z-80 and boot CP/M. It was quite a bit faster than the Xerox 820-II Information Processor I also had at the time. Both were a lot faster running CP/M than the 12 MHz 286 running 22-nice in Z-80 software emulation mode. Despite that it was still usable. I had 12 megabytes of RAM crammed into that 286, 512K on the motherboard in DIP chips, the rest on three 16 bit ISA Micron RAM cards, split between backfilling the lower 640K, XMS and hardware EMS. It ran Windows 3.11 (not Windows For Workgroups 3.11) *very well*. Windows 3.11 was a short lived OEM only version that still supported Standard Mode.

The problem is that V20 is not compatible with Z80 but the original 8080. The same as 8088 (and 8086) were. They were expressly designed to work like that even though that feature was rarely if ever used (ever wondered why the 64kB segment/offset addressing existed?).

The article never mentions that it is Z80 compatible, Z80 is only mentioned because the author has made a similar previous project with a Z80 CPU.

The 86/88 weren’t compatible the same way the V20 is. The V20 is binary compatible, whereas the 8088 and 8086 were assembly compatible for easier migration of software, not for running it directly.

And I also came here to mention that it was strictly 8080 compatible, not Z-80. In particular it didn’t have the jump relative instructions that Turbo Pascal used, which was popular with CP/M.
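For readers unfamiliar with the missing instructions, here is a minimal sketch of how a Z80 relative jump resolves its target. The opcode table lists the Z80's relative-jump opcodes (JR, its conditional forms, and DJNZ), none of which exist as jumps on the 8080; the function name `jr_target` is made up for this illustration.

```python
# Z80 relative-jump opcodes that have no 8080 equivalent.
Z80_RELATIVE_OPCODES = {
    0x10: "DJNZ e",
    0x18: "JR e",
    0x20: "JR NZ,e",
    0x28: "JR Z,e",
    0x30: "JR NC,e",
    0x38: "JR C,e",
}


def jr_target(pc: int, displacement_byte: int) -> int:
    """Return the 16-bit target of a two-byte Z80 relative jump at `pc`.

    The displacement is a signed 8-bit value added to the address of
    the instruction that follows the jump (pc + 2).
    """
    if not 0 <= displacement_byte <= 0xFF:
        raise ValueError("displacement must fit in one byte")
    signed = displacement_byte - 0x100 if displacement_byte & 0x80 else displacement_byte
    return (pc + 2 + signed) & 0xFFFF


if __name__ == "__main__":
    # 18 FE at 0100h is the classic "jump to self" loop.
    print(hex(jr_target(0x0100, 0xFE)))
```

A program that contains these opcodes, as the comment says Turbo Pascal did, would need a real Z80 or a software emulator rather than the V20's 8080 mode.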

And I had a V30 back in the day, in a Tandy 1000 that used an 8086 (not 8088), but I never tried to use 8080 mode on it. It was a slightly faster 8086, but mostly just because I could.

>The same as 8088 (and 8086) were.

No they weren’t. The only compatibility they had was at the source code level, with an 8080 assembler hacked up to generate equivalent 8086 opcodes. That caused early versions of MS BASIC to have very bad block move code, because LD A,(HL) / LD (DE),A (MOV A,M / STAX D in 8080?) didn’t translate well to the way 8086 memory access worked.

It’s also probably why the 8085 has undocumented opcodes. The theory is that Intel put them in, but didn’t document them because they would have messed up the source code compatibility that was part of the 8086 marketing plan.

The undocumented opcodes probably have nothing to do with the 8086 because the 8086 wasn’t even proposed until the 8085 design was completed. They probably happened for the same reason that other machines of that era have undocumented opcodes: the logic that does the instruction decoding doesn’t have the extra logic required to make the opcodes not do anything. Since the instructions weren’t considered particularly useful or might have limited the ability to extend the CPU’s ISA, they were left undocumented, in hopes that nobody would use them and break backwards compatibility.

Look, the 8086 was just supposed to be a stopgap intended to give Intel something to announce in the 1977/1978 time frame so that there wouldn’t be such a large gap between the announcements of the 8085 in 1976 and the announcement of the iAPX 432, which was then supposed to happen about 1980 or 1981. As such, it was done by the 8085’s designer, who wasn’t involved in the 432 project, and was done in a tearing hurry.

The 64k segments weren’t done to make the chips in some sense 8080 compatible, because they don’t, but were intended to save memory because memory was expected to remain expensive over the entire commercial lifespan of the 8086, which they thought would be 5 years or so. In fact, the picture that they had for the use of 8086 machines was they would either be running tasks with a 64k (or less) footprint on single-user machines with 64k or less of RAM or tasks with a 64k (or less) footprint on multiuser machines with perhaps up to 768k of RAM.
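As a quick illustration of the segment/offset scheme being discussed, here is a minimal sketch of how a real-mode 8086 forms a 20-bit physical address from a 16-bit segment and a 16-bit offset. The function names are invented for this example.

```python
def physical_address(segment: int, offset: int) -> int:
    """Real-mode 8086 address: (segment * 16 + offset), wrapped to 20 bits."""
    if not (0 <= segment <= 0xFFFF and 0 <= offset <= 0xFFFF):
        raise ValueError("segment and offset must be 16-bit values")
    return ((segment << 4) + offset) & 0xFFFFF


def segment_window(segment: int) -> range:
    """The 64 KiB of linear addresses reachable without changing `segment`
    (ignoring wrap-around at the top of the 1 MiB space)."""
    base = segment << 4
    return range(base, base + 0x10000)


if __name__ == "__main__":
    print(hex(physical_address(0x1234, 0x0010)))
    print(len(segment_window(0x1234)))
```

A task that never reloads its segment registers sees only a 64k window, which matches the small-footprint usage picture described above.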

As far as software compatibility between the 8080 and the 8086, there really isn’t any. There is a strong family resemblance, an artifact of the fact that the ISA was done by the same guy and the fact that it was done in a tearing hurry. The only concession to 8080 compatibility that I’m aware of is a hardware compatibility item. There wasn’t time or any inclination to design any support chips for the 8086, so they rigged it up to use the 8080’s vectored interrupt scheme. (As I recall, the 8080’s vectored interrupt scheme has the interrupt controller put a special interrupt instruction and an 8-bit address on the data bus. The 8086’s scheme just ignores the result of the instruction fetch and interprets the 8-bit address as the interrupt number, causing it to begin executing at an address that’s a simple multiple of that number.) That meant that they could use the same peripheral chips being used in 8080/8085 systems.
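To make the "simple multiple" concrete, here is a rough sketch of the 8086 interrupt vector lookup as documented: the interrupt number is multiplied by 4 to index a table at the bottom of memory, and each 4-byte entry holds a far pointer (offset word first, then segment word) rather than code. The helper names and the sample handler address are made up for the example.

```python
import struct


def vector_entry_address(interrupt_number: int) -> int:
    """Linear address of the 4-byte vector table entry for an interrupt."""
    if not 0 <= interrupt_number <= 0xFF:
        raise ValueError("interrupt number must fit in 8 bits")
    return interrupt_number * 4


def read_vector(memory: bytes, interrupt_number: int) -> tuple[int, int]:
    """Return (segment, offset) of the handler stored in the vector table.

    Each entry is two little-endian 16-bit words: offset, then segment.
    """
    offset, segment = struct.unpack_from(
        "<HH", memory, vector_entry_address(interrupt_number)
    )
    return segment, offset


if __name__ == "__main__":
    memory = bytearray(1024)  # 256 vectors * 4 bytes
    # Hypothetical handler at F000:1234 installed for interrupt 21h.
    struct.pack_into("<HH", memory, vector_entry_address(0x21), 0x1234, 0xF000)
    segment, offset = read_vector(memory, 0x21)
    print(f"{segment:04X}:{offset:04X}")
```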

The 80286 better represents what the 8086 was supposed to be and would have been, had there been time. Of course, by the time the 80286 was released, nearly all of the assumptions made at the core of the 8086’s design (limited lifespan, small application memory footprint, etc.) were proven wrong, which meant that the 80386 was a radical departure because it was the first design based upon knowledge of how people actually wanted to use processors in the 8086 family.

Had Intel known what they had in the 8086, they might not have had it, due to the internal politics of both Intel and IBM. The Boca Raton boys were trying to fly under the radar of the suits in NY with the PC design, so choosing an architecture seen as highly capable or the next big thing would then have brought them under scrutiny for potentially fully undermining the mini-computer business market, rather than being a firewall/stopgap to the erosion of the low end of the market by the micros. Maybe the PC team knew what they were doing, leveraging capabilities out of a chip destined to be more of an embedded controller MCU than a CPU of a computer, but also probably felt like they were putting one over on Intel who’d rather have sold them a more premium chip at a more premium price. The same sort of thing chagrined Motorola a bit, when capable computers were built out of the EC versions of their 68k line. Anyhow the 432 turned into a bit of a turkey, since the respun 8086 in the 286 was shown to outperform it in several areas, so they ran with it.

Look at what those 8085 opcodes do: there is functionality that is completely alien to the rest of the design. One of them adds an immediate byte to SP and puts it in DE. No way is that a mere un-decoded side-effect.
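The operation described can be sketched in a couple of lines. This assumes the immediate byte is treated as unsigned and the sum wraps at 16 bits, and it ignores flags entirely; the function name `sp_plus_imm_to_de` is invented for the illustration and is not an official mnemonic.

```python
def sp_plus_imm_to_de(sp: int, imm8: int) -> tuple[int, int]:
    """Model 'DE = SP + immediate byte' and return the new (D, E) pair.

    Assumption: imm8 is unsigned and the result wraps to 16 bits.
    """
    if not (0 <= sp <= 0xFFFF and 0 <= imm8 <= 0xFF):
        raise ValueError("sp must be 16-bit and imm8 must be 8-bit")
    de = (sp + imm8) & 0xFFFF
    return de >> 8, de & 0xFF


if __name__ == "__main__":
    d, e = sp_plus_imm_to_de(0x7FF0, 0x06)
    print(f"D={d:02X} E={e:02X}")
```

An instruction like this is handy for addressing stack-relative data, which is the commenter's point: it looks designed rather than accidental.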

Perhaps they were kept quiet for the same reason as the extra stuff in the 6309, to stick to supporting only the stuff that was compatible with the previous chip. RIM and SIM were needed for new hardware in the 8085, which is why they got documented.

Okay… does it run MSDOS?

Just needs a customized BIOS, same concept as with CP/M. Maybe limited to MSDOS 1.x, as later versions rely heavily on IBM-PC specific features.

Almost no application will run on v1, though.

MS-DOS was fully customizable up to version 2.11 or 3, if memory serves. After this, the “MS-DOS compatibles” pretty much died out thanks to programmers who had no manners in terms of programming. Once it became popular to bypass BIOS and DOS ABIs, there was little reason to keep MS-DOS portable, I think.

PC-98 was one of the exceptions, though. It got MS-DOS 6.20, even.

I would have used a V40 or V5x instead to get some of the standard PC chipset. Would have made DOS compatibility a little easier.

My first “PC” ran an 8 MHz V20. IIRC, it was about 15% faster than a stock 8088. Strangely, I never could find much info from NEC about the 8080 mode. I was particularly interested in instruction timings. Nada.

Given NEC also made the 8080, one assumes the architecture was more-or-less copied, and that timings would be the same… Of course…4 times as fast, or double a Z80.

In the 90s, HP had a series of “palmtop” computers that ran MS-DOS. I had the first model, the 95LX. It was based on the NEC V20 (later models were not). When I found out about the V20 having 8080 compatibility, I downloaded a version of CP/M that had been configured for it and ran it. It worked well for the few programs I tried, but I ultimately didn’t do much with it.

I think you’re confusing 8080 and 8088.

The 8088 is binary compatible with the 8086. The main difference between the two is that the 8088 had an 8 bit data bus and the 8086 had a 16 bit data bus. Internally they still both used “real” 16 bit registers and the ALU operated on these in exactly the same way (you could execute the same machine code on both). The 8088 allowed you to build cheaper (slower) systems.
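The bus-width difference can be sketched as a count of bus transfers needed for a memory access. This is a simplified model that only counts how many bus-width-aligned units an access touches; it ignores prefetch queues and wait states, and the function name `bus_transfers` is made up for the example.

```python
def bus_transfers(address: int, length: int, bus_bytes: int) -> int:
    """Number of bus transfers needed to move `length` bytes at `address`.

    bus_bytes is 1 for an 8088-style 8-bit bus, 2 for an 8086-style
    16-bit bus. Each transfer covers one bus-width-aligned unit.
    """
    if length <= 0 or bus_bytes <= 0:
        raise ValueError("length and bus_bytes must be positive")
    first_unit = address // bus_bytes
    last_unit = (address + length - 1) // bus_bytes
    return last_unit - first_unit + 1


if __name__ == "__main__":
    # A 16-bit word at an even address.
    print(bus_transfers(0x0100, 2, bus_bytes=2))  # 16-bit bus
    print(bus_transfers(0x0100, 2, bus_bytes=1))  # 8-bit bus
```

In this model the same machine code runs on both chips; only the number of trips to memory changes, and a word at an odd address costs the 16-bit bus two transfers as well.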

The 8080 is often described as assembly compatible with the 8086/88.

The NEC V20/V30 were cool, because they had some of the 80186/80286 real-mode instructions. The Windows 3.0 VGA driver did not work on the 808x, but did on the NECs. At VCFED.ORG there is a patch for it, adding 808x compatibility.

Anyway, the NECs are i8080 compatible only.

They do not support Z80 opcodes.

Borland Turbo Pascal for CP/M-80 won’t run on i8080/i8085. So the 8080 emulation mode is rather pointless. A normal CP/M 2.2 emulator for DOS, like Z80MU, is much more useful.

That being said, CP/M-86 could run some CP/M-80 programs via CP/M emulators (which soft emulate a Z80).

DOS Plus 1.2 (8086 OS) also can run CP/M-86 and DOS 2.0 programs..

I forgot to mention that this is no critique on the project.

In fact, I’m really impressed and think it’s neat.

I’m looking forward to further development. :)

Maybe other OSes might find their way onto this platform.

As mentioned earlier, DOS Plus was used on odd platforms such as BBC Master 512 with 80186 CPU.

Some information available here:

http://www.cowsarenotpurple.co.uk/bbccomputer/master512/system.html

https://en.m.wikipedia.org/wiki/DOS_Plus

https://www.seasip.info/Cpm/dosplus.html