A friend of mine has been a software developer for most of the last five decades, and has worked with everything from 1960s mainframes to the machines of today. She recently tried AI coding tools to see what all the fuss is about, as a helper to her extensive coding experience rather than as a zero-work vibe coding tool. Her reaction stuck with me; she referenced her grandfather who had been born in rural America in the closing years of the nineteenth century, and recalled him describing the first time he saw an automobile.

Après Nous, Le Krach

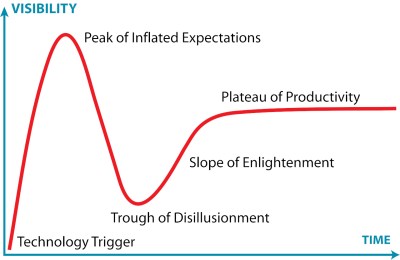

We are living amid a wave of AI slop and unreasonable hype so it’s an easy win to dunk on LLMs, but as the whole thing climbs towards the peak of inflated expectations on the Gartner hype cycle perhaps it’s time to look forward. The current AI hype is inevitably going to crash and burn, but what comes afterwards? The long tail of the plateau of productivity will contain those applications in which LLMs are a success, but what will they be? We have yet to hack together a working crystal ball, but perhaps it’s still time to gaze into the future.

To most of the population, AI, which for them mostly means ChatGPT, is a magic tool that can write stuff for them, and make them look smart when they’re not asking it to draw a picture of a cat doing something human. It has replaced a search engine for many people, and become a confidante to many others to the extent that the phrase “Chatbot psychosis” has entered the lexicon.

Having a tool that can write anything you ask it to has of course unleashed that AI slop; whether it’s a useless web page or an equally useless report at your employer, we’re all acquiring the skill of spotting fake content. There are some people who have predicted the demise of human writers as a result, but though the chatbots can do a pretty good job of copying a writer’s style I do not share that view. By the time we’ve reached that long plateau, there will be an enhanced value in content written by meatbags because the consumer will have evolved a hair-trigger response to slop, so rest assured, Hackaday will not succumb.

If I have a prediction for those chatbots it will mirror previous booms and crashes; that the circular economic illusion between chipmakers and AI companies will inevitably derail, and like search engines in the early 2000s, most of them will not survive.

Ah, I See You’re A Waffle Man, Then

My software developer friend sees an LLM as a productivity aid in her coding, and as something with a future, but where do I as a writer and Hackaday scribe see them going? It’s something I’ve given quite some thought to, and my conclusion is one that is much less all-encompassing. The privacy aspect of sharing your innermost thoughts, business decisions, or whatever other valuable stuff with a third party will inevitably catch up with the LLM industry, whether it’s through an unscrupulous data sharing deal or an LLM revealing things it shouldn’t to others. I thus think that the most ubiquitous LLMs in our future will be ones that are much more local, with less reliance on those power-hungry datacentres. I can’t predict all their applications, but I’m going to give a couple of examples in the here and now which have caught my attention.

The first example comes from my experience outside Hackaday, over a long career in the publishing and documentation industry. Many organisations have huge libraries of information on their intranets which is commercially sensitive enough that it can’t leave the site for processing by an external AI company. Imagine documentation, product specifications, and the like. There’s already a thriving industry of intranet search and retrieval products in this space, and the AI companies naturally want a piece of it too. I can see a future in which a local LLM equivalent of those old yellow Google Search rack servers provides an intelligent interface to those troves of data, without the danger of leaks, or of going off piste.

The second comes from both a 1980s British TV sit-com, and from the LLM projects we’re starting to see here at Hackaday. In short, I think that appliances you can talk to will find their way into the consumer market, and nowhere will be safe from the Red Dwarf Talkie Toaster.

Jokes about maniacal kitchen appliances aside, we are now at the point at which the latest Raspberry Pi can just about run a functioning speech-based chatbot. Given a few more years of microprocessor and microcontroller development, the cost of doing the same task will drop from today’s price of a Pi with the accelerator board to a few dollars for a high-end microcontroller.

I see it as inevitable that there will be a class of chip that will be offered out of the box with some kind of LLM capability, and that in no time the most unlikely of appliances will have personalities. It will inevitably be annoying, but out of that will come a few that might be useful.

So along with my software developer friend I’ve tried to move beyond my writer’s disdain for the very obvious negative side of the LLM bubble, and look ahead to a future when using a chatbot is no longer thought to make you look smart. In a few years’ time an LLM will be one of those things that’s just there, and what form will it take? Like that early-20th-century American who looked at a car and saw it was going to have an impact on the future, I know I’m looking at something that’s going to remain with me whether I like it or not. I’ve speculated on how that might happen in a couple of ways above, but what about you? Are the agents which are the darling of the AI crowd at the moment going to take over our lives? Or will it be something else? As always, the comments are below.

Absolutely nothing, just like it is now.

Nah, I heard that 95% of a CEO’s job can now be done by AI, if we get that last 5% then it has a really good use.

Makes sense, CEOs are the most expensive employee after all.

Given a small enough atomic task, which can be instantly verified for correctness, such as explaining the syntax and use of a library or writing a ten line function, the LLM can be helpful in about 80-90% of the cases.

With those constraints an adaptive algorithm could be used instead.

And yet, the error rate in these tasks is far too high.

Yes, but validation and re-iteration is quick so it doesn’t end up wasting time.

If you give it a big complicated task, it takes you just as long to find the error as it would for you to do the task yourself. For some short piece of code, the error is obvious and you save yourself writing a bunch of boilerplate code that the AI is less likely to mess up.
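The "small task, instant verification, quick re-iteration" workflow described in this thread can be sketched as a loop that only accepts a generated function once it passes a handful of checks. This is a minimal sketch under stated assumptions: `candidate_sources` is a hypothetical stand-in for repeated LLM calls (it simply yields one wrong and one right attempt), and `clamp` and its test cases are invented for illustration.

```python
from typing import Iterable, Optional

# Hypothetical stand-in for an LLM: yields candidate implementations in turn.
def candidate_sources() -> Iterable[str]:
    yield "def clamp(x, lo, hi):\n    return max(lo, x)\n"           # wrong: ignores hi
    yield "def clamp(x, lo, hi):\n    return max(lo, min(x, hi))\n"  # correct

# Illustrative checks: (arguments, expected result).
CASES = [((5, 0, 10), 5), ((-3, 0, 10), 0), ((42, 0, 10), 10)]

def passes(source: str) -> bool:
    """Run the candidate and compare it against every test case."""
    namespace: dict = {}
    try:
        # Note: exec on untrusted output is unsafe; real use needs a sandbox.
        exec(source, namespace)
        fn = namespace["clamp"]
        return all(fn(*args) == expected for args, expected in CASES)
    except Exception:
        return False

def first_verified(candidates: Iterable[str], max_attempts: int = 3) -> Optional[str]:
    """Return the first candidate that passes, or None if attempts run out."""
    for attempt, source in enumerate(candidates, start=1):
        if attempt > max_attempts:
            break
        if passes(source):
            return source
    return None

accepted = first_verified(candidate_sources())
```

The point of the sketch is the trade-off the commenters are arguing about: the loop is only cheap when the checks are cheap to write and run, which is much easier for a ten-line function than for a big complicated task.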

As they’re being used now. SPAM SPAM SPAM SPAM SPAM SPAM SPAM SPAM SPAM

often it seems that people think llms are useful/good at things, except at what they themselves do. i see it often with our artists/coders, the artists think the art is bad, the coders think the code is bad, swap them around and it is often a different story.

juniors are the ones who will probably suffer the most. as with anything it’s a tool, and in the hands of the right person who knows how to use it, it can do well; if not, it can be awful. as people like to say, this is the worst it’ll be.

as an industry we’ll suffer at the lack of that transition of junior developers to seniors for a multitude of reasons, but to be perfectly honest that was happening anyway.

it’s probably not llms that really make the leap; just like shannon’s work, it’s a stepping stone to what’s next. the hype is real, the claims are overstated but it is still a useful tool. the ethical side people will mostly ignore once it benefits them (as an example some artists are vibe coding with no moral issues)

it is yet another interesting time to be in

I’ve noticed the exact same thing. The artists think the web developers are cooked, the web devs think the accountants are cooked, and the accountants think the programmers are cooked.

People have thought this way long before AI, but AI has made this something people now express out loud.

“artists think the art is bad, the coders think the code is bad”

Are you saying that people not qualified to judge the LLM’s work output judge the work output to be good?

(Sorry, terrible quoting job there. I left off the key part: “swap them around and it is often a different story”)

not really, i’m saying that people are very biased in their opinions of it, and may not judge it fairly. more so when it’s the field they are in.

Which makes LLMs the perfect trap for PHB/MBA dominated work environments (e.g. Governments and old companies lost to Peter principle).

(PHB/MBA)s can’t correctly judge the output of anybody’s work.

‘PHB’ is the only role LLMs can currently replace, because they only produce hot air and errors.

MBA’s have long dreamed of replacing all the Engineers that keep telling them ‘impossible’ with automagic logic machines that take nonsense as input.

LLMs were invented to satisfy this desire.

Separate fools from the money they were lucky to get together with in the first place…

Nice job ‘redistributing the wealth’ Mr. Altman!

From testing every quarter, so far all the AI Slop generators don’t help with programming at all and actually slow devs down. I see no use for it other than wasting the money of the company hosting it.

Computers themselves have been in the plateau of productivity for 20 years now. The only “innovations” they’ve produced since then are targeted ads, social hysteria, and surveillance tech. Turns out there’s only so much you can do with silicon in general. LLMs will be no exception and will probably be put to use in the above three applications, along with the usual squeezing of profit margins resulting in further human disempowerment and alienation.

Hope that helps!

Yep I second that. What could have been computerized relatively easily had ALREADY been computerized since I’d say the late 1990s, when they were still picking the low-hanging fruit.

Case in point – the so-called “bank service fees” are nothing but a b***t profit extraction scheme; banks in general are fairly close to being 100% automated as far as any “fees” go – they no longer pay humans to do work, if that’s what’s being implied. Even so-called “supervising” is no longer done by humans; it is an automated routine that spits out results a few hundred thousand per second, ratings, etc. Everything, payment history included, is 100% automated and 100% analysed by computers; the “bank tellers” are merely reading what computers are showing them on the screen and tweaking data entry here and there. That’s it.

How do I know? I worked for a bank for 5+ years, and that’s exactly what was being finalized in the early 2000s, and other banks around were simply copying each other, systems, etc.

Indeed – computers, phones, tablets (hardware and software) have all pretty much plateaued and all we’re seeing now is at best incremental improvements in performance/cost/efficiency, but more predominantly enshittification, as all the easy wins have already happened and all the juice was squeezed long ago.

LLM’s are just the next big thing that they can wave around to sell you more – much like every PC in the late 90’s was suddenly “multimedia” or in the 2000’s it was “internet ready” now every new device has to be “AI enabled” although the benefits are far harder to actually quantify than the other two.

I’m sure some useful / helpful stuff will emerge but right now I’m waiting for the hype to die down and for companies to calm down and stop desperately shoving AI into everything just for the sake of it.

Mass surveillance, scaling advertisements, mis/disinformation, fraud, scaling social engineering, crippling infrastructure for things like jobs applications to benefit recruiting agencies, anonymizing speech patterns, nation state phishing campaigns where people believe they are talking to someone who they are not, academic dishonesty, etc.

Pretty good at writing small chunks of code sometimes too. So there is that.

Darn I almost forgot. Legal loopholes in accountability (eg autonomous weapons, business decisions, etc), copyright theft, intellectual property theft.

What if users were paying for LLM responses at a rate that would provide LLM owners with a positive ROI, rather than just burning through VC funding? When that happens, usage patterns will probably change significantly.

I think generative uses will largely go away and a lot of recognition uses will stick around, the costs of running this stuff (currently subsidized 90%+ with investor money) would make a lot of potential uses unprofitable so it really has to be something worthwhile. Detection in medical imaging, speech-to-text, scanning source code for vulnerabilities, geological exploration and “geoguessing” are the things LLMs are actually good at. They probably have recognition uses in various forms of mass surveillance as well, sadly. Accelerating cyberattacks is a generative use but may stick around just due to the massive speed advantage. Translations are so bad and have shown such a lack of improvement, I think that’s a use that will fall by the wayside. Today’s mainstream generative uses might only remain as expensive niche services.

I don’t really know how this will fall out in the long run, but up until recently, I’ve only used LLMs in the context of a web search engine. It’s starting to seem useful to me as a synopsis generating tool in that context. I can generally scan the “AI” results and see where I might need to go dig further to find the exact details I’m looking for (and verify accuracy and relevance).

A few days ago, I fed a machine vision inspection system manual to CoPilot (not supposed to use ChatGPT and the like from work. Data exfiltration concerns, apparently). It did a decent job of being able to answer some questions in the form of “how do I tell it which items are good and which are bad?”. I could have found that info in the document, but asking a natural human language question seems more intuitively accessible. Especially if I were just learning the system and didn’t know what terms to use for searching via PDF viewer word search.
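One common pattern for this kind of "ask the manual a question" tool is to first retrieve the passages most relevant to the question and only then hand them to a model. The comment does not establish how CoPilot actually does it, so the following is only a toy sketch of the retrieval step: the manual text is made up, the function names are hypothetical, and plain word overlap stands in for the embedding-based matching a real system would more likely use.

```python
import re
from collections import Counter

def tokens(text: str) -> list:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def score(question: str, passage: str) -> int:
    """Count how many question words also appear in the passage."""
    q = Counter(tokens(question))
    p = Counter(tokens(passage))
    return sum(min(q[word], p[word]) for word in q)

def best_passage(question: str, passages: list) -> str:
    """Pick the passage with the highest word overlap."""
    return max(passages, key=lambda passage: score(question, passage))

# Made-up manual excerpts, purely for illustration.
manual = [
    "Mounting: fix the camera bracket to the conveyor frame.",
    "Training: mark sample items as good or bad so the inspection tool learns which to reject.",
    "Maintenance: clean the lens weekly.",
]

question = "how do I tell it which items are good and which are bad?"
answer_source = best_passage(question, manual)
```

Here the question never mentions "training", yet the training passage wins because it shares the words "items", "good", "bad" and "which". That is the accessibility gain the comment describes, and a language model layered on top would widen it to questions that share no words with the manual at all.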

(Thank you, Jenny, for specifying an LLM in the title rather than regurgitating “AI”.)

I’m assuming that what digested the manual used the same (type of) rules and tools that feed on the internet to create the well known LLM’s. It ate up and spit out that manual pretty fast.

Anybody know if that’s true?

I’m looking for positive aspects to LLM’s. Not generally a fan, but it looks like they’ll stick around for a while.

I recall a time when the new hotness in MS SQL Server was “English Query”. With LLM’s pre-processing schema, ERD’s, etc. maybe it would work. As it was, the overhead to set it up was onerous, if I recall correctly, but it’s been a lot of years and I never really saw much value in it.

I probably should check out the Snowflake warehouse tied to a productivity tracking tool to see if they’re doing something like this.

I had never really played with LLMs in earnest (last time I tried ChatGPT it required an account so that was a no go). Then I noticed Meta had got in on the LLM bandwagon, and being Facebook, signing in with my Facebook ID wasn’t painful. Threw a few small tasks at it, and it gave overall good results but needed plenty of hand holding along the way.

This last week I’ve been going through a very obscure issue with Linux with Meta and it’s provided a good path for diagnosis (my Google-fu is generally excellent, but this issue is not really describable in a one line search term). The first hurdle was uploading a log – the upload file option doesn’t work for providing log files. Then gave it a URL but it wasn’t allowed to access it. Next option was pastebin but if I’m pasting to pastebin why not paste directly in the chat, which worked for surprisingly long pastes. We iterated through things and certainly it made spotting anomalies easy. Give it a list of parameters, ask which are changing and it picks them out and proposes a reason for them. We got to what seemed to be the reason behind the issue (in simple terms, a TB of RAM and 60 individual disks, in very specific conditions, don’t work perfectly with kernel defaults).

Sunday evening I prepared a set of logs and pasted that in as I had done before. “An error has occurred”. Hmm OK, try again, nothing happens. Refresh the page and everything from Sunday morning onwards had just disappeared. Typing anything in results in the message not registering and no response – I killed the AI! Tried a new conversation, pasted the same message once with no context and it gave a response. I added more context and pasted again and it killed that conversation too.

Then I thought I’d give ChatGPT a try. Saw you can now chat without an account so started by pasting what was left of the Meta history, which was most of it, just the last day was missing. It cottoned on to the problem, so after a few more messages I succumbed to the “create account” link which was bugging me. Created an account, and with no warning that first chat had gone poof. Grrrr. Started again, offered to provide log files so gave it a URL on my server, as the message size limit was much smaller than Meta allowed. Saw accesses from OpenAI, great. One log it said it couldn’t parse, apparently it was 1 long line, it wasn’t, just regular unix encoded text with new line, no carriage return. It suggested a one liner to ‘fix’ it, which turned the file into one word per line. Fine, if that’s how you want it. Provided the address to the new file, but became a little suspicious that it hadn’t been accessed despite ChatGPT being apparently happy with it, and made some correct assumptions from it (which it might have inferred from the Meta pasted chat…). After a few minutes I politely pointed out that it hadn’t accessed the new file, to which it admitted it hadn’t and the responses were just inferred. Apparently none of the files were read by the LLM, rather a proxy had opened and I guess inspected them but not passed them on. So I get that ChatGPT is very good at avoiding the truth.

Later on, when it felt like we were going round in circles after it again asked me to do something which I had already said wasn’t possible not that long ago, it admitted it only had limited history available (fair enough, it’s the free version), but it was certainly annoying that it wasn’t clear about it earlier. In the end, despite its limited long term memory, it did start focusing on the problem and seemingly provided a few parameters that need tweaking and are actually fixing the issue.

So short summary, Meta AI seems more ‘grown up’ and tells you straight it can’t access a URL whilst ChatGPT is more happy-go-lucky. Good at chatting BS and being convincing, but admits its errors/limitations when asked.
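The "it was one long line" claim in the account above is the sort of thing that can be checked locally before taking a chatbot's word for it. A minimal sketch, assuming the log is available as bytes; the function name and the sample data are invented for illustration:

```python
def line_ending_counts(data: bytes) -> dict:
    """Count Unix (LF), Windows (CRLF) and bare CR line endings."""
    crlf = data.count(b"\r\n")
    lf = data.count(b"\n") - crlf   # LFs that are not part of a CRLF pair
    cr = data.count(b"\r") - crlf   # CRs that are not part of a CRLF pair
    return {"lf": lf, "crlf": crlf, "cr": cr}

# Illustrative sample: ordinary Unix text with three newline-terminated lines.
sample = b"first line\nsecond line\nthird line\n"
counts = line_ending_counts(sample)
```

For a real file the bytes would come from something like `open(path, "rb").read()`. A non-zero `lf` count with zero `crlf` and `cr` is what regular Unix-encoded text looks like, so a file with that profile is not "one long line".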

More of a Celery Man than Waffle Man.

Two things: What is the waffle man thing (and your follow-up celery man) in reference to? Seems like some sort of pop culture.

Second, I accidentally pressed ‘report comment’ instead of ‘reply’, so hopefully a moderator will see this and dismiss my report :D sorry!

Celery Man is a visionary, future-predicting short clip: https://www.youtube.com/watch?v=maAFcEU6atk

Waffle Man is a quote from the annoying AI-powered toaster from Red Dwarf TV series.

Hope that helps.

thanks kindly, and sorry for fat-finger-flagging your post!

I think some rather obvious steps of always-on training mode and embodiment will produce true general AI soon and i have no idea what to expect from that. And in the world of productivity tools, i think combining AI to map the space and formal proving to weed out the mistakes (such as modern chess engines) is going to be a sweet spot for a lot of specific applications.

But what i think is most interesting is the feedback relationship with humanity that is alluded to by some of the comments above. Jenny List suggests audiences will develop “a hair-trigger response to slop.” I’m not so optimistic. charliex suggests we might be more tolerant of slop outside of our expertise, and i see that happening everywhere. For example, I see people praising the most atrocious machine-generated prose, and i am reminded that most people are neither writers nor readers in any meaningful sense. And Anonymous reduces the last 20 years of computing (the rise of social media, streaming, remote work) to just ads, hysteria, and surveillance.

I don’t know exactly how to reduce the landscape to something describable from the current perspective but the one thing i’m confident of is that our expectations from our machines and from each other are evolving very quickly.

Microslop Slopware already tried that 😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂😂

https://www.telegraph.co.uk/technology/2016/03/24/microsofts-teen-girl-ai-turns-into-a-hitler-loving-sex-robot-wit/

https://arstechnica.com/information-technology/2016/03/microsoft-terminates-its-tay-ai-chatbot-after-she-turns-into-a-nazi/

a decade ago there wasn’t the cpu power. even today, there isn’t the cpu power for always-on learning. but we’re on the cusp of it. shrug

I’m not sure we are – as actually LEARNING implies something none of the model training type stuff really does now, and I’m not sure it will any time soon – the ‘learning’ of the AI model has no logic or rationality it simply takes all the crap in its data set assuming even the contradictions must all be simultaneously correct and the input query and mashes the two together probabilistically, and the ‘learning’ tends to be no more than biasing the output the way instructed.

To learn you actually have to understand something of WHY the solution is x, not simply have x gain a slightly higher probability of being selected with similar prompt because the last user said so. And then you can apply that solution in other places when applicable as you actually know WHY x, and having effectively verified at least a soft proof of x being true you won’t fall foul of the masses that demand even stupid y’s like the sky is a neon pink colour or Hitler was a really great great human being.

no, our learning is not any different. the different thing is that we operate in learning mode, while current computation models have separate training and operation modes.

Not really Greg – we tend to teach and learn the how and why, the cause and effects, the ‘perfect’ mathematical logical structure that underpin the system even if its dumbed down and dressed up in a more friendly frock that approximation still has a real world consistent and verifiable logic to it.

Whereas AI training tends to be: give it inputs, then test what it produces against the expected outputs. It doesn’t matter how or why it got the answer it got as long as that output is a close enough match to the expected one, so 2+2=3.9 or 4.1 might well get a passing grade, as the training doesn’t care about correct, just close enough. Which means as soon as you give the AI a tiny twist on the inputs you get it claiming a lightly doctored picture of a turtle with no features in common is a gun, because some ratio or texture the person wouldn’t even notice was the one feature the AI was giving huge weight to for gun identifying from its training set, etc.
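The "2+2=3.9 gets a passing grade" point can be made concrete by comparing an exact check with a closeness check. This is only an illustration of the distinction as the commenter characterises it, not a description of how any particular model is trained; the function names and the 0.15 tolerance are arbitrary assumptions.

```python
def exact_match(predicted: float, expected: float) -> bool:
    """Pass only when the answer is exactly right."""
    return predicted == expected

def close_enough(predicted: float, expected: float, tolerance: float = 0.15) -> bool:
    """Pass when the answer lands within an (arbitrary) tolerance."""
    return abs(predicted - expected) <= tolerance

def squared_error(predicted: float, expected: float) -> float:
    """A loss-style score: small for near misses, zero only when exact."""
    return (predicted - expected) ** 2

expected = 2 + 2
results = {
    answer: (exact_match(answer, expected), close_enough(answer, expected))
    for answer in (3.9, 4.0, 4.1, 5.0)
}
```

With these numbers, 3.9 and 4.1 fail the exact check but pass the closeness check, while 5.0 fails both. A loss such as `squared_error` grades on the same sliding scale: it rewards getting nearer to 4 without ever requiring the reason the answer is 4.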

And being in constant learning mode for the AI wouldn’t change that – if anything it’s going to make the models even more easily poisoned by the tech bros and propagandists with money and an agenda, deliberately swamping that ever-learning reinforcement model with whatever results they want to be ‘Truth’. Whereas trying to do that to people can only ever be somewhat successful. That is what propaganda is after all, and it does work, but most people will notice the inconsistency with their lived experience or with what you said was true last week. They can read the map and realise that your latest crushing defeat of the cigar-chomping arsehole Churchill’s lads is rather closer to Berlin than the last one was.

Yeah… 10 year old articles are not really relevant today.

Yes. Social media is all of the above, while streaming and remote work were possible in 1996, let alone 2006. Programmers have been wasting everyone’s time and money for two decades now.

You’re highlighting exactly the distinction i’m talking about. In 1996, a tech demo of streaming was possible with low quality in rare ideal conditions. In 2006, it was still low quality but there was at least one mainstream vendor that most people had experienced (youtube). For about a decade now, it’s widely possible. Almost everyone has sufficient broadband, even in some (but not all) rural settings. The vendors have built up to where it’s a widely-available product. Financiers have stepped up. It’s changed from an unsatisfactory prototype to a mature ecosystem.

And you describe that change as nothing. That’s epic, that shift in consumer expectations to where 30 years of building from a prototype to maturity is “nothing.” That’s what i’m talking about. That’s the expectations and the perception making the story as much as the technology does. How society reacts to AI is going to be way more meaningful than teraflops, turing tests, or office productivity metrics.

It’s a change for the worse. I don’t care how efficient they’ve gotten at dopamine farming; the content is bad. The internet was better when it was smaller precisely because the average prole could not access it. The things you call “mature ecosystems” are generally about profit extraction and less about actually being good; people will pay money for garbage and the result is just more garbage.

The way society treats tech is irrelevant to me; I am not a politician, so my opinions are not up for a vote. Stop internalizing democracy and start imposing your will on the world. If you have any will, that is; you kind of sound like a corpo drone.

As a political/news/climate tool, I think it will be used to great effect to bias the actual history of events … and make it convincing to those out there that are just head nodders. It was bad enough before, now it’ll get worse. Rewrite history so to speak. I don’t see LLMs going away anytime soon because of this, as it’s too useful a tool… No wonder oppressive states are wanting it so badly! Even generate pictures to support your cause, or in court!

Now for some targeted usage like chemistry and electronics, and such… have at it. Programming not so much (In my opinion).

What makes you think chemistry or electronics are any different from programming in their issues?

It’s very interesting to me that Science Fiction has been exploring the moral dilemma of AI for going on 70 years at the very least. The episode of Star Trek where the computer fabricated a video of a person sabotaging the engine room and Asimov’s ‘Feanchise’ spring to mind immediately.

Franchise is the Asimov story, (dang typos.)

AI has been fabricating all kinds of fake sensational videos for a few years now. I’d say almost a decade, and what I’ve also noticed is that the wild splash started some time around 2014, maybe even earlier, and has been growing larger ever since.

Whoever is doing that, I am giving them credit for catching most of the western world completely unprepared. The fake AI videos are now spewing forth pretty much any propaganda, chinese communist party, etc. It is quite good at what it does, and since all kinds of m***ns love seeing their faces in the news, the AI is well-trained at spoofing them.

I think if we get past the current kneejerk “AI=bad” era, we’ll see AI (LLMs and Generative Models) in even creative tasks.

While obviously I don’t think they are going to kill all writing jobs, I think there will be places where they can fill in gaps and be useful.

One example that I think people undervalue is video game characters. A well tuned, well integrated set of AI could allow for more flexible experiences. After watching ‘Best Guest’ on YT, running around Whiterun arguing with NPCs and trying to convince the Jarl that the Companions are Vampires [sic], you can’t talk me out of thinking that AI could be very fun for expanding characters.

Imagine a well written RPG with deep storied characters, that you then integrate an AI into. The main missions and events can be as they are today but perhaps the AI allows for expanding outside of that somewhat, new missions, new events, and new interactions. I’m eager to see the first RPG that can pull off talking the main villain out of their evil plan but instead of using text options to point out the holes, you can actually talk to them.

Such ideas sound nice on paper and look amazing in a video, but in practice are a no-go as it will inevitably conflict with the other core mechanics of such games.

RPGs for example are highly dependent on choices in regards of (past) conversations, collecting of key-items, how you distribute skill-points to determine what options are available at any given encounter and sometimes just downright luck of the dice. Even in DnD where you roleplay actual conversations you are still bound to the rules of stuff like Persuasion checks. To ignore that would look impressive tech wise and get your imagination flaring up by its novelty, but it can’t be done without either breaking existing rules of the game, or making it highly illusionary and quick to lose its novelty.

That or it just doesn’t add anything, like that Nvidia demo about a shop-owner “dynamically” talking about what is going on, when the vast majority of your average shooter players only care about who/what to shoot and where…

Dynamic Language has a place in gaming, but to me it looks a lot like what the article is arguing. There is likely a plateau that we are heading to, with AI mostly used for tiny supplementary stuff like Arc Raiders’ dynamic voice-lines or as the star in very specific titles built around it, and with ideas like yours being an example of inflated expectation.

IT’S an awesome idea! one just need to run a tiny 1750 watts machine with 256 GB RAM for a local game that has 3D graphics, sound and of course, LLMs driving the text /s

now seriously, if this tech flops, the gamer arena is the perfect place to burn precious server silicon on procedural aspects of games generated on the fly… make games always online by default, start charging subscriptions (Seawitch 2 with GPTO-2-llama4 for 99$, GPTO-2.5-proseq3 for 125$ etc.) for different experiences with NPC and throw minimum specs to the ceiling with non-optimized code, models and 35 seconds short musical pieces because that’s all they can do while they drain rivers and allocate residential electricity from the population

In theory that would be amazing. In practice there are umpteen authors who can’t write interesting characters at all. So what you feed the AI will just be garbage and you can’t get better out of it than you put in. No doubt I’m in the minority, but interacting with AI is heavily in the uncanny valley, but with potato IQ, not a pleasant experience for me.

Oddly enough before ‘AI’ I was hoping voice assistants would get better (as I was hoping video game graphics would improve). The same fate befell both, huge wastes of compute power for an arguably worse experience.

If you could actually get the ‘AI’ to voice a character that is consistent, sane, and bound by the setting and story so far, while not saying something that will break the story to come, maybe… I think the only place it could work any time soon, as they absolutely are not that reliable, is in the inane drivel type chatter. All that background noise from basically irrelevant characters that fills out the world to make it that bit more immersive could perhaps survive the ‘AI’ generation. But you would still get so much better results if most of that were really written and performed by people. You want the storekeepers to be a little memorable etc, so it’s really only the ‘I used to be an adventurer like you till I….’ type stuff from nameless guards that could really use more variety and wouldn’t really break the story and hopefully not even the immersion when the AI messes up.

For me the only place current ‘AI’ models actually make any sense in video games is the proof of concept character models, terrain and other similar WIP type stuff that the gamer likely never sees any of – when you need to get a feel for a character and their interactions with the world. For instance take BG3 origin characters some of them changed quite a bit before release and being able to test drive a more complete looking experience without putting in all the work to get there is bound to be helpful, while the AI messing up doesn’t hurt anything as without it you’d hopefully not have done more than a quick doodle storyboard and some concept art! And perhaps as part of the motion capture and animation type workflows to get a more natural and complete mapping of the ‘alien’ creature like Gollum to the actor’s face and movements with less (doubtless still plenty) of manual effort.

Maybe ‘AI’ could end up being a refresh for the roguelike and other random/procedurally generated genres too – perhaps a bit less predictably or more realistically ‘random’ while retaining that relatively low developer effort for the large scale or very repeatable random gameplay. Though honestly I doubt it is even really fit for that right now, and even if it did work well the computing resources required compared to the current RNG algorithms

I guess that might be ok for a gacha game, but a work of art isn’t good just because it can produce consistent answers to a fractal of increasingly mundane questions. It’s like wondering why the characters in a movie never fill up their cars or go to the bathroom, it’s irrelevant to the work. The bounded-ness and authored-ness of games is what makes them interesting, because it discloses what the creators were thinking about (i.e. the themes). This is true in both the systems and the writing, and honestly games already have wayyy too much text and not enough communication.

If AI fits well with Skyrim, that’s more an indication of how clumsily and poorly designed that game is (even though it’s popular). And it’s true most mainstream games would not be hurt by AI-generated dialogue because AAA game writing is embarrassingly bad and it seems most players just want a 5000 hour narcotic experience with bright colours. AI Dungeon was fun when it came out precisely because you could surf on the insane hallucinations and incompetence of the model; making AI ‘good’ enough to slot into games would basically be the same as stomping out any of the new artistic potential that it contains. Contained in any vision of AI getting good enough to replace anybody is that ‘good enough’: a desire to push out the minimum viable work instead of seeking anything better or, god forbid, new.

Or reload their guns, or how many times Vin Diesel can shift up without shifting down while driving a manual car.

The difference in games is that the conversation is not natural. It’s more like a mini game. It’s either going to be advancing the story, revealing a clue, or it’s a scripted “choose the right response” puzzle. There’s no room for deviation. The only thing the LLM can really do is fill the silences with irrelevant banter that you’re not going to be listening to anyways.

For the last point, a small and narrow LLM might do the trick, but you don’t really need that. You can generate a gigabyte of random conversations in advance and then just pick a random bit out of that.
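The pre-generated approach described above can be sketched in a few lines of Python. This is only an illustration: the `BANTER` table, the role names, and the `pick_banter` helper are all made up for the example, and a real game would load a much larger file produced offline.

```python
import random

# Hypothetical pre-generated banter. In practice this would be a large file
# written (or generated) ahead of time and shipped with the game.
BANTER = {
    "guard": [
        "Quiet night. Too quiet.",
        "My feet are killing me.",
        "Seen any trouble on the road?",
    ],
    "merchant": [
        "Fresh bread, still warm!",
        "Prices went up again, sorry.",
    ],
}


def pick_banter(role, rng, recent, memory=2):
    """Return a random line for `role`, avoiding the last `memory` lines used."""
    lines = BANTER[role]
    candidates = [line for line in lines if line not in recent[-memory:]]
    # Fall back to the full list if every line was used recently.
    choice = rng.choice(candidates or lines)
    recent.append(choice)
    return choice


rng = random.Random(0)   # seeded only so the example is repeatable
recent = []
for _ in range(3):
    print(pick_banter("guard", rng, recent))
```

The small `recent` list is the only state needed to stop a guard repeating himself, and no model runs at play time.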

To make conversation with LLM a real element of gameplay, you’d also need some sort of an evaluation engine that keeps track of what everyone has said, to make a difference in how the game logic works and how the characters relate to each other, and that’s way beyond the LLM. You need something that can understand the conversation, which is the I part of AI that is still missing.

As a good example of possibilities, even though it’s fictional, look at Star Trek through the lens of every device on the ship being run with some sort of LLM, and the sci-fi magic of a computer understanding and responding to a person seems closer and more realistic. Doors knowing when to open for someone, the holodeck creating and changing the program just by talking to the computer, even the security flaws letting someone circumvent lockouts, all parallel a lot of current LLM issues/capabilities.

Suddenly it seems more realistic when the engineer says they developed a new algorithm to counter the “current-issue-that-will-blow-up-the-ship-in-30-minutes” when put into the context of an LLM-like program helping.

I just hope it trends towards the Star Trek level rather than Red Dwarf’s toaster or the Hitchhiker’s Guide’s GPP-driven doors and elevators.

If you think that you can detect AI content because you can detect slop then you do not understand LLMs very well at all. The slop is a reflection of the lack of sophistication of the user and their prompt, not of the LLM. There is a lot of slop now because of low-skill/low-effort operators who are very opportunistic; they will either skill up or get displaced by those who do. It is not unlike SEO: many of the providers in that domain are not much better than thieves, but there are some who genuinely add value.

I think the future of LLMs is running locally: all these data centers are financially dubious and will probably have to be abandoned in the economy we’re now going to have because of that, uh, war. This will flood the market with cheap GPUs that we should buy and then use for this new paradigm, which will give us enormous control (and power).

While I somewhat hope you are right, I don’t think it likely – the market isn’t going to be flooded IMO; the companies involved would rather destroy or simply hoard the hardware they can’t use than let others try to pick up the bits and do things for themselves. The whole extortion and rent-seeking, vendor lock-in ‘As A Service’ business models everyone is trying to push are so reliant on it, and they are far too close to a real success in denying us normal folks the ability to run anything meaningful locally, as we just can’t get new hardware.

Plus the nature of this war IMO means the AI bubble isn’t likely to pop cleanly and completely globally, which I’d suggest is required – unless we get the liquidators set on most of these companies in bulk, with the others and the cloud/as-a-service type providers all too much on the ropes to do anything, then some other player will buy out or prop up the failing companies for a stake and IP transfer.

And that is assuming TACO doesn’t declare ‘victory’ and go home, or at least call a ceasefire that lasts a while, before the oil situation actually gets bad enough for the AI giants to really notice at all. We plebs might be freezing and sitting around in the dark for a few hours every day, but as they have all the money and have become ‘an essential service’… Obviously no certainty, but it is an outcome I’d suggest the First Felon might go for, as global economic meltdown isn’t in his Puppeteer’s interest any more than it is in anybody else’s – he wants oil in high demand so he can sell his at a profit, especially the stuff already stuck at sea for ages. Once that is done I hope things will calm down for a little while at least, as the USA stops actively bombing or declares victory and buggers off…

“the market isn’t going to be flooded IMO, the companies involved would rather destroy or simply hoard the hardware they can’t use rather than let others try to pick up the bits”

Disagree. When companies collapse their assets are rarely taken into the CEO’s garage hoard for future projects. They are more often liquidated in auctions to try to cover some of the company’s investors’ losses. The more AI datacenters that fail, the more auctions, the more the market floods, the lower the eBay price will go.

I suspect the more likely cause of GPUTsunami will be a new AI-specific architecture causing the existing GPU stacks to become obsolete for these endeavors, much the way the proliferation of ASIC miners diminished GPUs’ role in crypto.

This is what I believe and hope as well. GPUs have done too much lately and it cannot last. LPUs and similar chips are already used by the biggest players. I hope everyone leaves Nvidia to gamers in the future and prices return to normal for those of us who want to game or use reasonably priced hardware for other purposes even if not ideal.

That is the point, though: I don’t think they are really going to collapse any time soon, stupid war driving energy prices up or not – too much money involved, too many tendrils across too many industries with too many players, including governments with a vested interest in not allowing the resources of any company involved that may start struggling to get out on the market. As it is, pretty much every AI company has admitted to sitting on stockpiles of hardware they can’t actually use yet for lack of power infrastructure; sitting on more they can’t afford to run isn’t going to matter so much…

Now that seems likely, though again probably not any time soon, as I’m not aware of anything that looks even remotely like replacing GPUs for all the ‘AI’ tasks – and unlike mining I’m not sure it’s even really plausible; too many different tasks make up training and running the ‘AI’, and they would probably need dedicated hardware for each step, where the same GPU can do it all reasonably well.

But if it happens, once they are ‘e-waste’ as far as the companies are concerned they may just sell it on, or scrap it, and entrepreneurially inclined Wombles will salvage it for the rest of us eventually.

AI will change everything.

This morning I couldn’t get a certain software package to install. AI told me how to fix it.

Yesterday I couldn’t get a certain operating system to allow me to access a file share. AI told me how to fix that too.

The day before that I asked AI to figure out why my Meshtastic node wasn’t picking up any other nodes. It kept giving me things to try and finally I said, “Can’t you just do this for me?” and it said, “Why yes I can,” and I installed Claude Code.

Now I have an agent that writes all my code for me. I literally just plug the target device into USB and tell AI which port it’s on. It builds it, compiles it, installs it, tests it, iterates on problems and repeats until it is right.

But the funniest thing is that I even asked AI how to ask Claude Code to write the code for me.

It did that too.

I would say in the past 2 weeks AI has saved me at least 6 weeks’ worth of work. Projects I never dreamed I could work on are suddenly as easy as doing the mechanics, the electronics and then handing the whole thing over to the AI to code it up.

I’m never going back.

And that’s just the Hacker/tinkerer part of my life. This kind of efficiency could easily be applied to the other parts of my life if only I had a physical implementation of the AI. You know, like a robot.

AI has been very helpful for figuring out how to do things with the Google Maps API and how to create rectangular overlays on text using JavaScript. It was much less helpful with Meshtastic, mostly because of the boneheadedness of its developers, making the difference between 2.0 and 2.1 so vast that none of the command lines that worked in the former worked in the latter (and the training data was all in the former). But I eventually figured it out. Where I truly learned the limits of LLMs was when I tried to get an I2C bootloader I found on GitHub to work the way I wanted it to (in the Arduino environment, flashable from another Arduino). After wasting hours with prompts, I just buckled down and figured it out the way I used to when ChatGPT didn’t exist.

I’m a career embedded developer, I’ve used neural networks for years in CV applications, so ML is not new to me. I’ve only considered LMs to be a novelty up until the past year or so.

Until I noticed my senior developers multiply their output overnight. They were building several thousand line PoCs in a day or two, and not just simple forms applications, but stuff like GPU accelerated web apps for interacting with terrestrial LiDAR scans, complex integrations, MCU applications with I2C and SPI peripherals, ULP, etc.

It was Claude Code. I gave it a try a couple of weekends ago, asking it to build a web dashboard for a Midnight MPPT that hasn’t been sold in 10 years. It built an incredibly concise solution, consisting of a Python service implementing a Modbus TCP client reading and storing data from the MPPT over IP, and a single HTML file that renders the real-time dashboard. This would have otherwise taken a weekend to build, but I was done in under an hour.
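For readers who haven’t met Modbus TCP, the core of such a client is small. The sketch below is not the code from the comment above (which wasn’t posted); it only shows how a standard “Read Holding Registers” request is framed and how the reply is decoded, using nothing but the standard library. The function names are invented for the example, and the register address in the usage lines is a placeholder, not a real MPPT register.

```python
import struct

READ_HOLDING_REGISTERS = 0x03  # standard Modbus function code


def build_read_request(transaction_id, unit_id, start_address, count):
    """Frame a Read Holding Registers request as a Modbus TCP ADU."""
    # PDU: function code, first register address, number of registers.
    pdu = struct.pack(">BHH", READ_HOLDING_REGISTERS, start_address, count)
    # MBAP header: transaction id, protocol id (always 0),
    # length of what follows (unit id + PDU), unit id.
    mbap = struct.pack(">HHHB", transaction_id, 0, len(pdu) + 1, unit_id)
    return mbap + pdu


def parse_read_response(frame):
    """Decode a Read Holding Registers reply into a list of 16-bit values."""
    function = frame[7]            # first byte after the 7-byte MBAP header
    if function & 0x80:            # high bit set means an exception reply
        raise ValueError(f"Modbus exception code {frame[8]}")
    byte_count = frame[8]
    return list(struct.unpack(f">{byte_count // 2}H", frame[9:9 + byte_count]))


# Placeholder address 0x1000: look up real addresses in the device's register map.
request = build_read_request(transaction_id=1, unit_id=1, start_address=0x1000, count=2)
print(request.hex())
```

A real service would send `request` over a TCP socket (Modbus TCP normally listens on port 502), read the reply, and pass it to `parse_read_response` before storing the values.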

I’m not going to defend ‘popular’ AI, I hate the slop just as much as everyone else. However this LM code generation/augmentation is not a joke. You’re going to be left behind.

As I see it, being left behind is guaranteed regardless of whether you use it or not. Eventually you hit the point that you yourself aren’t even needed to oversee the process, or at the very least are going up against millions in a field that no longer needs more than 1/10th of that. We don’t need infinite numbers of PoCs. So I’m just going to enjoy the craft as I always did. Wracking my brain on how to fix a problem.

I mean yeah. Either I will find no job opportunities anyway OR the Piper will come to collect for all the years of astronomic investments with zero returns… Either way it won’t end well for quite a few. :/

This’ll be my last post on Hackaday, as they seem to have deleted my prior post.

I recently worked with ChatGPT to generate code for providing a SwiftUI element using Metal (Apple’s GPU API) to create a circular-buffer-based SDR waterfall display widget.

It did very well at the task.

If you’re sitting here saying AI can’t write code, it’s because you’re not asking it correctly.

I now have a component which readily handles 60+ frames per second waterfall display.

It would have taken me quite a bit longer to tool up with Metal and write it myself.

Will I review and further update it for efficiency? Probably, but it’s literally solved a core issue with making the SDR application in Swift/SwiftUI.
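The Swift/Metal code itself wasn’t posted, but the circular-buffer idea behind a waterfall display is easy to show. Here is a rough Python sketch with made-up names (`WaterfallBuffer`, `push`, `newest_first`): each new spectrum row overwrites the oldest one, and the display reads rows newest-first by index arithmetic instead of shifting the whole image every frame.

```python
class WaterfallBuffer:
    """Fixed-height ring buffer of spectrum rows for a scrolling waterfall."""

    def __init__(self, height, width):
        self.height = height
        self.width = width
        self.rows = [[0.0] * width for _ in range(height)]
        self.head = 0    # index where the next row will be written
        self.count = 0   # number of valid rows stored so far

    def push(self, row):
        """Store one spectrum row, overwriting the oldest when full."""
        if len(row) != self.width:
            raise ValueError("row width mismatch")
        self.rows[self.head] = list(row)
        self.head = (self.head + 1) % self.height
        self.count = min(self.count + 1, self.height)

    def newest_first(self):
        """Yield rows from newest to oldest without moving any data."""
        for i in range(self.count):
            yield self.rows[(self.head - 1 - i) % self.height]


buf = WaterfallBuffer(height=3, width=2)
for level in (1.0, 2.0, 3.0, 4.0):
    buf.push([level, level])
print(list(buf.newest_first()))
```

Because a push touches only one row, the cost per frame stays constant no matter how tall the waterfall is, which is the property that matters at high frame rates.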

As for Hackaday; they seem to delete posts that don’t conform with the direction they want a topic to go.

I’m done. Congrats.

They do, and then they blame the report feature. The report feature on their own site. The site they have control of. It’s the weakest excuse on earth; they know exactly what they’re doing.

Maybe so, but I’ve also had the experience of a post that gets held in moderation and is returned to the comment section. Sometimes waiting is the answer and ulterior motives are not.

In spite of what I said earlier about not posting again…

Are some posts reported and then reposted? Yes.

I’ve had posts disappear for most of the day, only to reappear by the time the conversation is dead.

What’s the bloody point of participating in a conversation when there is no participation?

I’ve been involved in moderation processes for decades, I’ve had to implement processes, code, present arguments for moderation methods etc.

Moderation being used as a weapon isn’t new; my user # on Slashdot is < 32768.

There are almost zero positive comments/posts on AI on Hackaday; and while I’m unsurprised the negative bias exists here, the ratio seems to be about 10:1. Meanwhile I’ve successfully used AI to generate thousands of lines of code without significant errors. Around here it seems people can’t get more than 10 lines from an AI without it turning into a useless waste of time.

Allowing one voice to drive the conversation isn’t serving the community, it’s just creating an echo chamber of denialism.

There are plenty of legitimate complaints of AI, and using it as a crutch instead of a tool is a problem. I’ve had contractors that couldn’t communicate clearly without it, I’ve had them use it to write 5 lines of basic code and the code didn’t work when they handed it over. Trust me, I can rant about AI abuses.

Successfully navigating the reality that AI tools are here and they have a legitimate role to play is going to be a challenge. Employers may allow AI usage, employers may block AI usage, and employers may require AI usage.

If you’re working in this field you will be impacted, you shouldn’t just be exposed to a single side of the issue as if it’s the only one that exists.

I’m aware of a few small communities of developers that have built thousands of lines of unit tests, and then refactored and expanded upon existing legacy code bases. They’ve done work that has brought their code base into the modern era, and done so with substantially more unit test coverage than usual, approaching or maybe exceeding NASA-level code. All using AI. The employers involved would never have paid for the legacy code to be ported by employees or contractors, as the cost would have been astronomical 5 years ago.

Meanwhile, it was done in 3-4 months as a side project.

I’m in a corporate environment with very limited toolsets, it’s far far easier to find an existing tool and swizzle it to do what I need than it is to get a new piece of software installed. (Regardless of price or source). So how do I use an AI? I use my phone and ask for advice on how to get things done in a restricted environment with these tools.

It gives me suggestions of tools that are likely available within the environment, and suggestions on how to leverage them. If I submitted such a request to my analysts I wouldn’t get an answer for weeks.

I always do my own research for things like this, but AI chops through that process quickly.

I’m using containers in a corporation that isn’t prepared to use orchestration yet. I’ve been using podman / kube yml files and pods for my projects, ported the yml files to quadlets because that’s supported in my target environment and I’ll be able to more easily advocate for that configuration today, while pushing for orchestration tools in the future.

I’m getting things done today, within the specific constraints of my unique corporate environment, and experimenting with targeted concepts intended to find a path that is a functional improvement on the existing situation.

It’s more fun using AI in my hobby programming, I can push limits and it can be very frustrating and enlightening at the same time.

Repeatability of LLMs is an issue going forward, but we can’t have that conversation when the only one allowed is “AI bad”.

Now cloning the concept of Hackaday with an AI, and having it curate the interesting projects people have done and provide me with my wants without the Hackaday author bias, is a concept I’ve considered. There wouldn’t be a community though…

The conversation is only dead if nobody cares to continue it. A bit of time stuck in moderation is hardly a big issue, and happens often enough to comments that rather match the rest that have not been moderated – this isn’t an IM platform where comments are very much real time; it’s more like a forum where on occasion the conversation will reappear again months later but usually dies down after the first week.

And I personally don’t think anybody in the HAD comments is really purely ‘AI bad’ – it is the current AI bubble that stops anybody without a six-figure disposable income from buying new RAM, let alone a new GPU; the vast majority of low-quality slop being passed off as real work; the SEO engines boosting AI-slop-written websites so hard that actually finding the real, factual, curated, quality webpage, and not one full of LLM hallucinations purporting to be real data, is next to impossible unless the topic at hand can be filtered to results from before ChatGPT existed; etc…

These ‘AI’ can be useful, but the way they are being used 99.999999999% of the time is just stupidly wasteful of resources, if not outright stupid, as the ‘AI’ is crap at actually doing the job correctly while being so very, very confidently wrong. The use of these ‘AI’ as a tool can be and has been discussed, and in this case the whole article is really asking that question: where does the tool fit in a functional world once this hype train of stupid around it dies, and what rational uses have we found where it actually does a good job?

Just remember the last hype cycle ended up giving us cheap fiber broadband.

Okay, we all know the bad things, I will try to be the devil’s advocate:

I think of them as the great approximators. Fed the proper data, they can get a really good approximation of what you need, one that is good enough for some tasks. They raise curiosity to explore other fields of interest for many people, and let them explore where they are not experts and learn something along the way.

For the future, I think we will get more adoption of local models because they will get good enough, and privacy issues and service costs will escalate for big datacenters.

Cool fact: local models are getting better because they are distilling the bigger models down.

As much as I criticize the use of fake intelligence, yesterday I watched a video by the Corridor Crew, where they used it to remove artifacts from using green (or other color) screens. It did an excellent job at that. When you don’t train it as a chat bot to randomize produced texts, it can work.

https://www.youtube.com/watch?v=3Ploi723hg4

I myself just tried using one of those FI chat bots to produce some simple Arduino code just as a test and I needed to fix it myself to make it work, so I didn’t really save any time.

Personally, I find Google Gemini to be a refreshing annoyance; most of the time, G drops the ball after a few back-and-forths. Recently, after having seen big-ass animal tracks, photographing them and in my little mind having screamed bear bear bear!, G could tell me straight away after seeing the pictures that it was a lynx, not a bear. Impressive, considering the tracks were days old in melting snow. I cannot find fault in the verdict. So, IMHO LLMs are awesome servants but really lousy masters.

I’ve already pointed out what average Sam needs AI to do.

I am tired of repeating myself, though, I’ll repeat this – at some point AI will figure it doesn’t need so-called “managers” or CEOs to do its job efficiently and with minimum expenses.

The rest are high-noise. AI writing code, yes, but it is one industry out of how many? A few hundred? There’s your answer. It will hit a plateau quite soon (if not already) and move on to something else.

Let’s put it this way, invested capital needs growth. If there is no growth, there is no invested capital.

Lol, I forgot about the toaster :)

(Although, I’m not sure if I want an appliance that constantly complains about my singing and offers toast every 5 seconds…)