The hobbyists of the early days of the home computer era worked wonders with the comparatively primitive chips of the day, and what couldn’t be accomplished with a Z80 or a 6502 was often relegated to complex designs based on logic chips and discrete components. One wonders what these hackers could have accomplished with the modern components we take for granted.

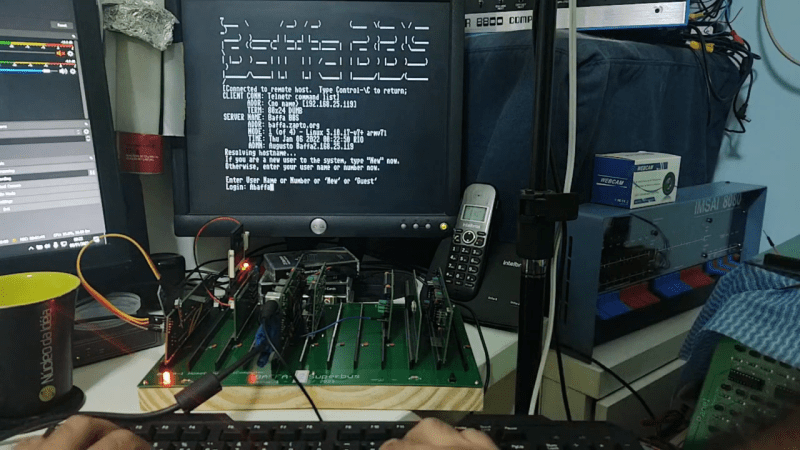

Perhaps it would be something like this minimal serial terminal for the current crop of homebrew retrocomputers. The board is by [Augusto Baffa] and is used in his Baffa-2 homebrew microcomputer, an RC2014-esque Z80 machine that runs CP/M. This terminal board is one of many peripheral boards that plug into the Baffa-2’s backplane, but it’s one of the few that seems to have taken the shortcut of using modern microcontrollers to get its job done. The board sports a pair of ATmega328s; one handles serial communication with the Baffa-2 backplane, while the other takes care of running the VGA interface. The card also has a PS/2 keyboard interface, and supports VT-100 ANSI escapes. The video below shows it in action with a 17″ LCD monitor in the old 4:3 aspect ratio.
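For a sense of what "VT-100 ANSI escapes" means in practice, here is a minimal sketch of the byte sequences a host would send over the serial line to drive such a terminal. The sequences themselves (clear screen, cursor position, text attributes) are the standard ANSI/VT100 ones; which subset the board's firmware actually implements is not stated in the write-up, and the helper names are made up for illustration.

```python
import sys

ESC = "\x1b"          # the ASCII escape character
CSI = ESC + "["       # Control Sequence Introducer used by ANSI/VT100 terminals


def clear_screen() -> str:
    """Erase the entire display (ED, parameter 2)."""
    return CSI + "2J"


def cursor_to(row: int, col: int) -> str:
    """Move the cursor to a 1-based row and column (CUP)."""
    return f"{CSI}{row};{col}H"


def reverse_video(text: str) -> str:
    """Wrap text in reverse-video on / attributes-off (SGR 7 and SGR 0)."""
    return f"{CSI}7m{text}{CSI}0m"


def status_line(text: str, row: int = 24, width: int = 80) -> str:
    """Build a full-width reverse-video status line on the given row."""
    return cursor_to(row, 1) + reverse_video(text[:width].ljust(width))


if __name__ == "__main__":
    # Any ANSI-capable terminal emulator will interpret these bytes too.
    sys.stdout.write(clear_screen() + cursor_to(1, 1) + "A>DIR\r\n")
    sys.stdout.write(status_line("hypothetical status line"))
    sys.stdout.flush()
```

Because the terminal only sees a byte stream, the same strings work whether they come from a CP/M program on the Z80 side or from a modern machine on the other end of a serial cable.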

We like the way this terminal card gets the job done simply and easily, and we really like the look of the Baffa-2 itself. We also spied an IMSAI 8080 and an Altair 8800 in the background of the video. We’d love to know more about those.

Looks like Grant Searle’s circuit but uses VGA instead of composite. Still isn’t “modern”. The ATmega328 is fairly old and it uses PS/2. If you want modern I would look at the Pi Pico VGA Terminal. Or a Pi Zero 2 W so you can use HDMI and USB.

I built a few of Mr. Searle’s designs (original, not the modified USB designs) from scratch and those had composite output; unfortunately, they were not 80×24 (with PAL being somewhat better than NTSC). Without the use of a reduced resolution character box, you just can’t do 80×24 on NTSC. Even with a reduced resolution character box, it takes a really clean circuit (forget about RF modulation) and a very good monitor to do NTSC 80×24 or 25 legibly.

Or let’s just forget about PAL/NTSC and use a pure, crystal clear monochrome signal (VBS)!

That’s what people with analogue green monitors had to work with, after all. ;)

And if we’re clever, we’re using the PAL timings for a higher resolution (625 lines vs 525 lines)..

Or let’s use TTL signals and a modified monochrome monitor.

720×348 was used by Hercules, for example.

Or 800×400, as used by the Sirius 1/Victor 9000.

You can’t do 80×24 on NTSC because it’s way higher than the chroma filter frequency. You can barely even do 40 columns. All monitors that take an NTSC composite input need a low-pass filter to remove the 3.58MHz chroma signal. Almost anything that was sold as an NTSC television, even monochrome, had the chroma filter. Even monochrome NTSC televisions from before color still needed a filter for the 4.5MHz (?) audio subcarrier in the RF signal. You could modify them to take out the filter, but hot-chassis was a thing for a long time, and you don’t want that on your video input connectors!
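A rough back-of-the-envelope check of that claim, as a sketch rather than a measurement. It assumes 8-pixel-wide character cells, text that fills the whole visible part of a scanline (about 52.6 µs for NTSC), and that the worst case is alternating on/off pixels, whose fundamental frequency is half the pixel clock. Real designs leave margins for overscan, which only pushes the numbers higher.

```python
NTSC_ACTIVE_LINE_S = 52.6e-6   # approximate visible portion of one NTSC scanline
NTSC_CHROMA_HZ = 3_579_545     # NTSC color subcarrier frequency
CELL_WIDTH_PX = 8              # assumed character cell width in pixels


def pixel_clock_hz(columns: int,
                   cell_width_px: int = CELL_WIDTH_PX,
                   active_line_s: float = NTSC_ACTIVE_LINE_S) -> float:
    """Pixel rate needed to fit `columns` characters into one visible line."""
    return columns * cell_width_px / active_line_s


def max_luma_frequency_hz(columns: int) -> float:
    """Fundamental of an alternating on/off pixel pattern (half the pixel clock)."""
    return pixel_clock_hz(columns) / 2


if __name__ == "__main__":
    for columns in (40, 80):
        f_max = max_luma_frequency_hz(columns)
        side = "below" if f_max < NTSC_CHROMA_HZ else "above"
        print(f"{columns} columns: pixel clock {pixel_clock_hz(columns) / 1e6:.2f} MHz, "
              f"finest detail {f_max / 1e6:.2f} MHz ({side} the chroma subcarrier)")
```

Under these assumptions, 40 columns needs detail at roughly 3 MHz, just under the 3.58 MHz subcarrier, while 80 columns needs roughly 6 MHz, well above it. That matches the "barely 40 columns" rule of thumb for anything with a chroma filter in the signal path.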

That’s what S-video is for. It’s been around since the early ’80s, first appearing on the C-64. (Atari used it internally, but never exposed it.) The 4-pin connector came in the late ’80s. Basically it gets the chroma signal away from the luma signal, eliminating the filter that limits the resolution. The plain luma signal is fine for 80 columns on a monochrome CRT, many of which were sold with the Apple II. The trouble is with a color CRT you also need a better shadow mask, and most of those were on RGB monitors, which usually didn’t support Y/C color unless they were sold for both the C-64 and Amiga. Or they were VGA monitors, which rarely supported 15 kHz because it was so far from the 30+ kHz VGA scan rates. (Sadly, even LCD monitors rarely support 15 kHz scan for RGB.)

That’s all fine, but I was speaking of monochrome video monitors. Or what used to be “normal” monitors -> green monitors, data displays. As used by tinkerers or geeks in the 1970s. They aren’t affected by NTSC’s lousy color resolution.

They are (were) essentially performing like a Commodore 1702 with its Luma/Chroma inputs for 80 chars, albeit only with Luma being used. Like a 1702 with only Luma input.

Those conventional monitor devices without an RF tuner, I mean. Some of them didn’t even have a built-in speaker.

I don’t mean 90s era portable TVs in a bulky black chassis and with composite inputs as an extra.

– They do have CVBS filters, of course. Albeit not exactly good ones (no real comb filters, good DSPs).

Rather, I meant, some of the traditional video monitors, say from the security sector, which could display up to 800 or 1000 lines. Monitors with knobs for h-sync/v-sync etc etc.

Or let’s just think of France’s 737-line system.

These CRTs are (were) technically not very different from those used in Hercules compatible monitors or classic serial terminals (glass terminals), which did support 80×25 (or 80×24 due to status line).

(I’m not exactly sure how to write down what I mean to express. Perhaps since I’m no native English speaker or maybe because I lack knowledge of living in NA.)

Those monochrome monitors were common in the ’70s and ’80s.

For some reason I cannot paste a link.

Search for, “Apple Monitor III” and you will find one. Amdek and many others made these.

Most will display a PAL or NTSC signal and most will do 600 to 800 lines horizontally. The little 9″ amber one I have does 800 plus.

At the time, some machines would throw up to 132 columns by 25 lines on a non-interlaced signal.

These monitors are quite sharp. 80 columns off a composite line is no real problem for them.

A 1702 isn’t going to work because it’s still a color CRT tube with a color TV resolution shadow mask, and the dots are too big. You can only see 80 column text with a proper monochrome tube. (or a VGA or better color tube, which probably won’t sync to NTSC)

A monochrome 80×25 8×8 characters can be done on composite easily enough. Last time I did that I used a Propeller chip and it worked great with the simple 3 resistor circuit provided. One gets 6 brightness levels at least. Many monochrome sets will display this nicely.

Color sets may have a coarse CRT phosphor mask and that can limit detail. I found inexpensive analog sets to be the worst and more appropriate for 64 columns.

There are some good options for color.

One is to use S-video. Both Propeller chips output it specifically. Older sets won’t have it. Those that do also tend to have finer pitch phosphors and may perform well. Phase alternating signals work best.

On composite, the 8×8 characters need a pixel clock much higher than composite can carry without a lot of artifacts.

Rather than keep the color phase constant, like Apple 2 style graphics, it can really help to phase shift it every line, but keep that phase shift pattern constant across frames. This combination results in no dot crawl and color fringing well distributed along the character rather than to one side or the other and in big patches hard to ignore. On analog sets, the CRT phosphors may be too coarse. On finer pitch tubes and many newer sets, this combination can work great! Recommended for LCD and capture cards. When this works, it works great. Better overall color and detail than almost anything else.
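To make the "shift every line, constant across frames" idea concrete, here is a small sketch that tracks the color subcarrier phase at the start of each scanline. The numbers are assumptions, not taken from the comment: 227.5 subcarrier cycles per line (the standard NTSC ratio, which flips the phase by half a cycle each line), a whole number such as 228 for the constant-phase case, and 262 or 263 lines per non-interlaced frame.

```python
from fractions import Fraction


def line_phases(cycles_per_line: Fraction, lines_per_frame: int, frame: int) -> list:
    """Subcarrier phase (as a fraction of one cycle) at the start of each line."""
    first_line = frame * lines_per_frame
    return [((first_line + line) * cycles_per_line) % 1
            for line in range(lines_per_frame)]


def alternates_every_line(cycles_per_line: Fraction) -> bool:
    """True if consecutive lines start at different subcarrier phases."""
    return cycles_per_line % 1 != 0


def is_stable_across_frames(cycles_per_line: Fraction, lines_per_frame: int) -> bool:
    """True if every frame repeats the same per-line phase pattern (no dot crawl)."""
    return (line_phases(cycles_per_line, lines_per_frame, 0)
            == line_phases(cycles_per_line, lines_per_frame, 1))


if __name__ == "__main__":
    cases = [
        ("constant phase", Fraction(228), 262),
        ("alternating, 262 lines", Fraction(455, 2), 262),
        ("alternating, 263 lines", Fraction(455, 2), 263),
    ]
    for name, cycles, lines in cases:
        print(f"{name}: alternates per line = {alternates_every_line(cycles)}, "
              f"stable across frames = {is_stable_across_frames(cycles, lines)}")
```

With 227.5 cycles per line, an even line count per frame makes the total number of cycles per frame a whole number, so the alternating pattern lands in the same place every frame. An odd line count leaves half a cycle over, the pattern inverts on each frame, and the fringes appear to crawl.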

Or, limit your frame rate to 30 FPS and do a full interlaced signal. This does allow for better character definition.

In all cases moderate color saturation really helps as does avoiding extremes, like violet text on green background.

Regarding Propeller chips specifically, the version 2 design has DAC output and can drive both composite and S-video to near pro grade quality. And the signal is still under software control allowing for the subtle tricks I mention here.

When using devices that are not so software driven, a significant improvement can be had on S-video capable sets and capture cards by sending the composite into both S-video signal inputs. A very small cap on the color input, say 10 pF, really helps and does isolate the signal for the benefit of the display circuits.

Newer HDTV sets may have amazing filters. I have a Samsung plasma display that basically gets 80 column text over composite almost right with only the very worst cases showing fringing. I use the phase alternating, non-interlaced signal described above. The processing in that set, and in some LCD displays I have tried, seems to really perform on that signal combination.

All this is pushing it for NTSC, but good results can be had.

Personally, I’d want instant-on, so the Zero would be out.

I see all these posts about people’s “retro battlestations” but what I really want to put together is a workspace with the *minimum* number of monitors/keyboards/cabling with the most possible options. Somewhere I can plonk down a random machine and hook it up with a minimum of fuss. Still trying to figure out exactly what I want, but a basic serial terminal like this I could patch in is definitely on the list.

4:3 ratio or 5:4?

That might be a really old 1024×768 monitor actually. Not the more common 1280×1024

Looks like the IMSAI 8080 is the replica made by the High Nibble. I have one myself. You can tell because it’s a much thinner case.

https://thehighnibble.com/imsai8080/

Yep, it’s an IMSAI 8080esp from The High Nibble. I also have an Altairduino https://www.altairduino.com/ and other replicas – uKenbak-1, PiDP-8 and PiDP-11

I would be more interested if someone could explain why so many younger people are still interested in CP/M these days. I am from the first generation of kids that grew up with computers. My first hardware (when I was 15) was an EPROM burner for the ZX Spectrum. Later I built some cards for a ][+ running CP/M most of the time (for example, a flasher for the MCS-48). So I think I know the era, especially considering that this was before the internet, so we had to develop things ourselves rather than copy everything like people do these days. But it is absolutely beyond my understanding why someone should be interested in CP/M these days.

There are much more interesting operating systems. (RTOS/UH or UCSD/p for example) But CP/M?

I don’t get it either. I wanted an Apple II, had to make do with an OSI Superboard II in 1981.

By the time I was given an Apple II about 1990, I just had no interest in spending the time learning, not when I had better computers. When I was given a broken Mac Plus in 1993, that gave me a “mainstream” computer for the first time, but it was overall a step down from my Radio Shack Color Computer III, running Microware OS-9. The better specs of the Mac were used to run the GUI, not give me better performance.

For a long time, I was running behind, not for nostalgia but from not spending money on new computers.

So I have old computers, bought when they were new, or cheap when nobody wanted them, but I have no interest in using them.

I fear there’s a subset of anti-technology.

>I fear there’s a subset of anti-technology.

Jeez, dramatic much?

For all its warts, CP/M has a couple desirable properties.

First off, it was designed to be highly portable and is extensively documented, so it’s pretty simple to get it running on anything with an 8080 or Z80.

Second, there’s a large collection of software already out there and ready to use. Other simple OS’ might be nicer, but it’s hard to beat the convenience of literal decades of existing software for CP/M.

Now, you might ask why these old systems are so popular? Well, for me it’s the fact that they’re extremely simple compared to modern computers. Nothing is hidden under hundreds of layers of abstraction, you can interact directly with the hardware, and the service manuals that came with these old computers often explain how everything on the computer works in great detail, from the glue logic on the board to the implementation of its software. The simplicity of it all and easy mod-ability are quite attractive. Sure, not everyone is into that, but as a computer engineer I enjoy toying with them.

“Nothing is hidden under hundreds of layers of abstraction, you can interact directly with the hardware,” – exactly. Pure metal. Clarity. Simplicity.

Yep. There’s a sense of … pride? … accomplishment? … comfort? in being able to understand a system bottom to top, especially in one you’ve built yourself, better yet *designed*. With the Z80 and 6502 being so common and well-documented, most people choose one of them. CP/M is pretty terrible (PIP? Really??), but usable and with a bunch of software.

Hopefully something like FUZIX will continue to grow and provide a decent alternative.

You’re right and Fuzix is in my plans :)

You’re right and Fuzix is already in my plans. But it’s necessary to increase the clock, and for now I’m using 8 MHz. For the future, I’ll try a 33 MHz Z80… but other CPUs like the 8088 are also in my future plans… I’ll try to reach a 386.

Agree. It’s like studying dinosaurs, or the Roman Empire. Long gone and probably not super useful in today’s world, but they present a complete potted slice of biology/history/technology that can be simple enough so that dissection and operation is in itself a useful and often enjoyable learning process.

I think CP/M is probably popular for homebrew computers because it’s easy to implement as long as you have a Z80, there’s a pretty big software base, and it can provide an option for saving programs. It also provides an easily available interface over a serial interface, as opposed to writing a bunch of software to work with a video chip. Importantly, it gets a bunch of BASIC versions and other programming languages for free, so you don’t have to port Microsoft BASIC to your homebrew Z80 system yourself.

Because it is essentially the ancestor of DOS and people love stuff they are familiar with. On top CP/M is relatively easy to understand and modify to your needs. It runs on affordable retro-hardware, see the MBC2 for a cheap platform, and it has a vast library of software to explore.

CP/M was so popular that even the 8086 MS-DOS and related operating systems supported its API. It’s one of the first microcomputer operating systems that supports files. There was a lot of stuff written for CP/M, even if you don’t count things that just made life with CP/M suck less. It also makes no assumptions about video hardware. Even full-screen stuff like Wordstar was designed to work with terminal control codes. Having memory-mapped video just made things run faster.

But back in the day I had a TRS-80, and having ROM at 0000 that couldn’t be remapped was like being an exile. You basically can’t run CP/M if you can’t have a large RAM space starting at 0000. I recently had a look at the Z180 specs, and IIRC its simple memory mapper didn’t even support replacing 0000 with RAM. If I was to throw together a Z80 and some parts, I’m not sure that I’d want to run CP/M other than it being the 8-bit equivalent of running Doom. Also, using the sysgen process to merge it with a BIOS looks like a pain. These days it would be easier to just disassemble it and change the ORG statement.
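The reason for that constraint is CP/M's fixed low-memory layout: on a standard CP/M 2.2 system the warm-boot jump sits at 0000h, the BDOS entry jump at 0005h, and programs load into the transient program area (TPA) at 0100h. A minimal sketch of the check follows. The two memory maps are simplified illustrations (the TRS-80-style one is approximate), and `can_host_standard_cpm` with its 16 KB minimum is a hypothetical helper, not anything from a real tool.

```python
# Fixed addresses used by standard CP/M 2.2 on 8080/Z80 machines.
WARM_BOOT_VECTOR = 0x0000   # JP to the BIOS warm-boot routine
BDOS_ENTRY = 0x0005         # JP to BDOS; programs CALL 5 for system services
TPA_START = 0x0100          # transient programs (.COM files) load and run here


def ram_covers(ram_regions, start: int, end: int) -> bool:
    """True if the half-open range [start, end) lies inside one RAM region."""
    return any(lo <= start and end <= hi for lo, hi in ram_regions)


def can_host_standard_cpm(ram_regions, min_tpa_bytes: int = 0x4000) -> bool:
    """Check for writable page zero plus a contiguous TPA of a given size.

    min_tpa_bytes is an arbitrary illustrative threshold, not a CP/M rule.
    """
    return ram_covers(ram_regions, WARM_BOOT_VECTOR, TPA_START + min_tpa_bytes)


if __name__ == "__main__":
    # Hypothetical homebrew Z80 board: all 64 KB is RAM once the boot ROM pages out.
    homebrew = [(0x0000, 0x10000)]
    # Approximate TRS-80 Model I style map: ROM and I/O low, RAM from 4000h upward.
    rom_at_zero = [(0x4000, 0x10000)]

    print("homebrew board:", can_host_standard_cpm(homebrew))
    print("ROM at 0000h:  ", can_host_standard_cpm(rom_at_zero))
```

Machines with ROM fixed at 0000h needed either a hardware modification or a relocated, nonstandard CP/M build, which in turn broke compatibility with software assembled for a 0100h load address.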

Even for writing new code I wouldn’t want to write it on such a system. Back in the days, pros would use cross-assemblers on a minicomputer, and modern computers are so much more powerful than that. I already have much better tools than I could ever run on vintage hardware. There’s no good reason not to use a “make run” step in your makefile to start an emulator, and just check it on real hardware every now and then.

The better question is why they would care about “more interesting” operating systems like those you mention.

RTOS/UH is specifically designed for process automation and UCSD/p is Pascal centric, based on a quick Google search. Why would anyone be interested in them instead of a more general purpose OS?

Why the interest in CP/M? I’d say it’s similar to restoring/hotrodding an old car; you can work on it, develop it, and have a good understanding of what’s under the hood. Back when CP/M was current, I built a couple of systems and ran Wordstar on them, and wrote a few magazine articles before moving to DOS. I recently built a “retro” Z80180-based system with static RAM, SD card storage etc. It wasn’t for using, it was for fun (I’ve got Zork, Wordstar, C, Basic, and other old programs running on it). Like restoring an old car but a lot cheaper. Except you can drive a car around; CP/M is too slow for current practical use. A CP/M system is a pride of accomplishment thing. That’s my opinion, anyway.