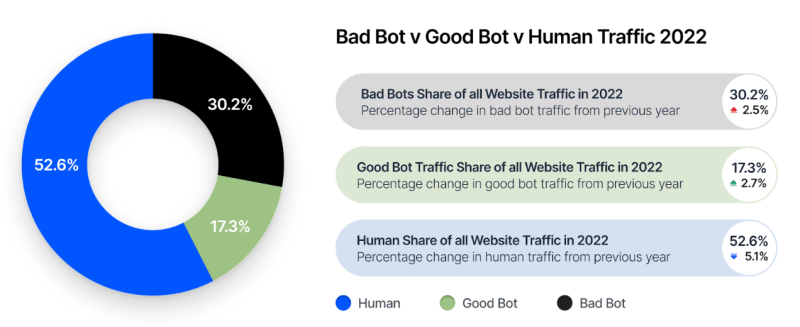

Automation has been a part of the Internet since long before the appearance of the World Wide Web and the first web browsers, but it’s become a significantly larger part of total traffic over the past decade. A recent report by cyber security services company Imperva pins the level of automated traffic (‘bots’) at roughly fifty percent of total traffic, with about 32% of all traffic attributed to ‘bad bots’, meaning automated traffic that crawls and scrapes content to e.g. train large language models (LLMs) and generate automated content, as well as performing automated attacks on the countless APIs accessible on the Internet.

According to Imperva, this is the fifth year of rising ‘bad bot’ traffic, with the 2023 report again noting an increase of a few percent. Meanwhile ‘good bot’ traffic also keeps increasing year over year, and while these bots are not directly nefarious, many of them can throw off analytics and of course generate increased costs, especially for smaller websites. Most worrisome are the automated attacks by the bad bots, which range from account takeover attempts to exploiting vulnerable web-based APIs. It’s not just Imperva making these claims: the idea that automated traffic will soon destroy the WWW has floated around since the late 2010s as the ‘Dead Internet theory’.

Although the idea that the Internet will ‘die’ is probably overblown, the increase in automated traffic makes it ever harder to distinguish human-generated content and human commentators from fake content and accounts. This is worrisome due to how much of today’s opinions are formed and reinforced on e.g. ‘social media’ websites, while more and more comments, images and even videos are manipulated or machine-generated.

Here we welcome our new overlord Bot!

So 50/50 one of us could be a bot.

10/10 best comment of the day

Most of my comments here are automated using a Python script and an LLM. Fortunately, so far, nobody has noticed :)

I was not going to say anything, but now that you mention it *evil grin*

Have you not noticed how banal they usually are?

So, your LLM focused its learning on my web postings. That’s just super.

I thought I felt something watching me.

Am I real, or am I trollbot?

Are there any other options?

That is one that has always bothered me when I see it online: the assumption that all trolls are bots by definition. Trolling has a long and storied human tradition!

Sadly it’s really you.

At least you use the same account name so it’s easier to ignore.

Thanks for the free advice

Everyone still knows it’s you.

If you have a smaller site, 90%+ of your traffic is likely to be automated, and inconsiderate of “robots.txt” or other conventions.

“Thou shalt not make a machine in the likeness of a human mind”

No qualms with making a human mind in the likeness of a machine.

Of course proclamations and constitutions don’t actually have any power to prevent anything from happening without the civic and religious discipline which existed when they were written. That exists in Dune, not on Earth, which is one of the lessons of Dune.

So you’re saying that egbok will occur in another couple hundred years?

Egbok and wagmi are both right on schedule.

Blocked (inside .htaccess) IPs from:

– China
– Huawei
– DataCamp
– Amazonaw
– Amazon Data Service
– Microsoft Data Center
– Facebook crawlers

This cut the total number of requests in half, and most of the blocked requests were malicious / abusive.
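For readers who want to try the same idea at the application level rather than in .htaccess, here is a minimal Python sketch of range-based blocking. It is not the commenter's configuration: the two networks are placeholder documentation ranges, and `BLOCKED_NETWORKS` and `is_blocked` are hypothetical names chosen for illustration. Real ranges would have to come from each provider's published lists.

```python
import ipaddress

# Placeholder networks from the reserved documentation blocks.
# These are assumptions for illustration, not real provider ranges.
BLOCKED_NETWORKS = [
    ipaddress.ip_network("192.0.2.0/24"),
    ipaddress.ip_network("198.51.100.0/24"),
]


def is_blocked(address: str) -> bool:
    """Return True if the address falls inside any blocked network."""
    ip = ipaddress.ip_address(address)
    return any(ip in network for network in BLOCKED_NETWORKS)


if __name__ == "__main__":
    for candidate in ("192.0.2.55", "203.0.113.9"):
        print(candidate, "blocked" if is_blocked(candidate) else "allowed")
```

A linear scan like this is fine for a handful of ranges; a list covering whole countries or cloud providers would probably be better served by the web server or firewall itself.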

So islanding the Internet is the solution after all? :-/

Okay, I spun your solution “just a *little* farther” and I technically agree with it, but it’s more or less just a personal solution that doesn’t solve the “global” problem.

Kinda like all of “us” using adblockers / noscript to “fight against ads” but not the general public.

Seems like monopolization and internationalization already islanded the internet

Lemme guess: you could not block google because 70% of your site(s) are google-tracking your visitor’s asses.

Are there solutions? I think maybe the only way around it is to remove anonymity. Have hard authentication to link every account with a real person. It still won’t remove the possibility of bots because your account could get hacked and used maliciously. But that would limit the access. Or people might pay to use your account, but you’d still be accountable and maybe lose your access. It’s not a perfect solution, but I think it would stem the tide.

Unfortunately, you lose privacy which is a big problem too. But a lot of accounts can be linked back to you already. Just not as easily as this would make it. I think Facebook originally kept new accounts down, and only allowed certain groups like colleges – you had to prove you were a college student to join. Now they’re a big part of the problem with their own bots.

Does .htaccess not work any more?

>Are there solutions? I think maybe the only way around it is to remove anonymity.

How about instead of throwing out one of the best aspects of the internet, we just make running bots a criminal offense? Fundamentally this is an issue of human behavior, not technology.

What about eating/shitting/farting/fapping human NPCs?

Making HERP/DERP illegal isn’t a terrible idea, hard to enforce.

Create a blacklist of expressions (e.g. ‘Privatize the gains, socialize the losses’) from the political parties’ daily talking point emails, but that’s too easy to game.

Just continue to put ‘moron’ mental checkmark next to anybody posting such.

And this will break the good things: the ability for a computer to collect and filter data for you.

Is fetching and filtering an RSS feed from this site considered ‘being a bot’? What else is?

Yeah that’s one solution if you want to turn the web into just one big chilling effect ruled by the countries with the most biomass signed on to receive an internet connection

This is it.

This is Judgement Day

Don’t worry, Imperva also produces an anti-bot solution and they also have a free 30-day trial.

Yeah, “research” aka “free marketing”.

The numbers are probably rounded “a bit” up to increase their sales of .. surprise surprise, bot blocking products!

Basically, a .htaccess script?

I prefer fail2ban ;-)

I think bots can be easily solved simply by requiring some money or putting limits on posts, let’s say $1 per account or 10 comments per day… it’s something that you can afford but a bot farm can’t.

But a bigger problem than this, to me, is content recommendation, which can filter any news or use reinforcement learning to train a group of people, over years, to do whatever the algorithm has in its reward function.
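The posting limit floated above (10 comments per day per account) could be sketched roughly as follows. This is only an illustration of the idea: `CommentLimiter` and `allow` are hypothetical names, counts live in memory only, and nothing here addresses the harder problem of one operator running many accounts.

```python
from collections import defaultdict
from datetime import date

DAILY_LIMIT = 10  # the "10 comments per day" figure from the comment


class CommentLimiter:
    """Count comments per account per calendar day and enforce a cap."""

    def __init__(self, limit: int = DAILY_LIMIT):
        self.limit = limit
        self._counts = defaultdict(int)  # (account, day) -> comments posted

    def allow(self, account: str, day: date) -> bool:
        """Record one comment and return True, or return False if over the cap."""
        key = (account, day)
        if self._counts[key] >= self.limit:
            return False
        self._counts[key] += 1
        return True


if __name__ == "__main__":
    limiter = CommentLimiter()
    today = date.today()
    results = [limiter.allow("example_user", today) for _ in range(12)]
    print(results.count(True), "allowed,", results.count(False), "rejected")
```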

I think that would have the opposite effect; big bot farms with corporate or nation-state backing can easily maintain a spam budget, while actual humans would decide it’s not worth it. “Money-is-speech” is already a problem in the US. Don’t make it worse.

I’m just waiting on the NRA to take that “spending/donating(?) money is free speech” nonsense a step further and make shooting (people) an expression of free speech…

You need to think adversarially with ideas like this. I don’t think you’re trying hard enough to consider abuse vectors. Also, implementing this rule unevenly would insta-kill any platform which tried it and cause a diaspora of users to platforms which didn’t. I don’t see how you’d enforce it globally. Similar to ideas for “digital ID”.

Dead Internet Theory isn’t funny now, is it?

https://www.youtube.com/watch?v=KpbLphGX8P0

It wouldn’t matter if there were no bots

Sarcasm, comedy, innuendo… three things that I believe are currently irreplicable by AI/LLMs.

I just asked one to say something sarcastic and it was shockingly unfunny. Could use more experimentation on other systems.

No, you probably asked a real American. They tend to not get sarcasm.

I worked on IT infra for a company that ran Imperva web-app-firewalls and they are terrible.

Separately Imperva-using-sites freak out when accessed via browsers configured to be security conscious.

Anything Imperva is bad and untrustworthy.

How can I tell if I’m a bot?

In the past couple of years, I believe it’s more like 75% of the internet that is crawling with bots.

“This is worrisome due to how much of today’s opinions are formed and reinforced on e.g. ‘social media’ websites, while more and more comments, images and even videos are manipulated or machine-generated.”

This has always been the role of media, to form, reinforce, and manipulate public opinions. To create the illusion of a herd consensus and put pressure on the mind. Or to “inform,” if you are being overly optimistic. Disinfo and misinfo rarely have any bearing on what is provably true or false, but merely what is antagonistic.

The internet is frightening because for the first time it decentralizes control of this system. Just as during any power shift, this will lead to a reaction by the old guard, trying to scrape back their control and reinforce it, which we have been seeing the past decade or so.

Nick Land is a joke until you realize that this kind of decentralized chaos machine samizdat is actually going on.

Herd consensus has always been an absolutely terrible way to perform decision-making, but it’s strongly encoded in psychology. So media allows a far smaller group of (preferably competent) leaders to simulate it instead—it has worked this way for many centuries. Freelancer or rogue leaders using bot armies (or developing nations with mobile phones) being able to mimic this behavior is interesting. “Interesting” in a very value-neutral way, of course.

I wonder if the bots will miss us when we’re gone?

“And Web itself, when it woke at dawn Would scarcely know that we were gone”.

Shameless plug, that’s how I deal with those: https://freehackers.org/orzel/botfreak

Reports of bad behaviour are aggregated in a database, then shared with all servers for blocking at the IP level. It also bundles imports from a few common internet “black lists”.
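The aggregate-and-share approach described above can be illustrated with a small Python sketch. To be clear, this is not botfreak's code or API: `SharedBlocklist`, its methods, and the rule that an IP must be reported by a minimum number of distinct servers are all assumptions made for the example.

```python
from collections import defaultdict

REPORT_THRESHOLD = 2  # hypothetical: distinct reporting servers needed to block


class SharedBlocklist:
    """Aggregate bad-behaviour reports from several servers into one blocklist."""

    def __init__(self, threshold: int = REPORT_THRESHOLD):
        self.threshold = threshold
        self._reporters = defaultdict(set)  # ip -> names of servers that reported it
        self._imported = set()              # ips taken from external lists

    def report(self, server: str, ip: str) -> None:
        """Record that a server saw bad behaviour from an IP."""
        self._reporters[ip].add(server)

    def import_list(self, ips) -> None:
        """Merge in addresses from an external blocklist."""
        self._imported.update(ips)

    def blocked_ips(self) -> set:
        """Return imported IPs plus those reported by enough distinct servers."""
        reported = {
            ip for ip, servers in self._reporters.items()
            if len(servers) >= self.threshold
        }
        return reported | self._imported


if __name__ == "__main__":
    blocklist = SharedBlocklist()
    blocklist.report("web1", "192.0.2.10")
    blocklist.report("web2", "192.0.2.10")
    blocklist.import_list(["198.51.100.7"])
    print(sorted(blocklist.blocked_ips()))
```

Requiring more than one reporting server is a design choice to limit false positives from a single misconfigured host; a real deployment would also need expiry of old reports.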