If you’re reading this, you probably have some fondness for human-crafted language. After all, you’ve taken the time to navigate to Hackaday and read this, rather than ask your favoured LLM to trawl the web and summarize what it finds for you. Perhaps you have no such pro-biological bias, and you just don’t know how to set up the stochastic parrot feed. If that’s the case, buckle up, because [Rafael Ben-Ari] has an article on how you can replace us with a suite of LLM agents.

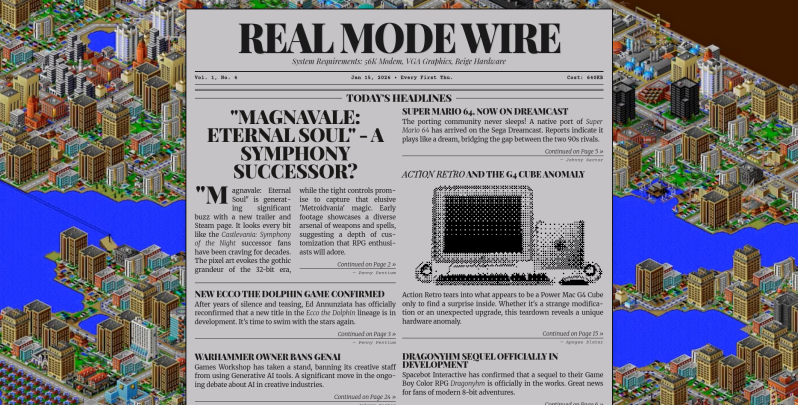

He actually has two: a tech news feed, focused on the AI industry, and a retrocomputing paper based on SimCity 2000’s internal newspaper. Everything in both those papers is AI-generated; specifically, he’s using opencode to manage a whole dogpen of AI agents that serve as both reporters and editors, each in their own little sandbox.

Using opencode like this lets him vary the model by agent, so each task can be assigned to a different model if desired: some jobs can be handed to small, locally-run models, saving tokens for the more computationally intensive ones. With the right prompting, you could produce a niche publication with exactly the topics that interest you, and none of the ones that don’t. In theory, you could take this toolkit (the implementation of which [Rafael] has shared on GitHub) to replace your daily dose of Hackaday, but we really hope you don’t. We’d miss you.
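
For the curious, here is a minimal Python sketch of the one-model-per-agent idea. To be clear, this is not [Rafael]’s code, and it does not use opencode’s actual configuration or API: the `Agent` class, the model names, and the `fake_model` stub are all invented for illustration, and no real LLM is called.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical signature for a model call: (model_name, system_prompt, text) -> text
ModelFn = Callable[[str, str, str], str]


@dataclass(frozen=True)
class Agent:
    role: str
    model: str          # made-up model identifier, not a real product name
    system_prompt: str  # the "hat" this agent wears


def fake_model(model: str, system_prompt: str, text: str) -> str:
    """Stub standing in for an LLM call: it only tags the text with the model name."""
    return f"[{model}] {text}"


# Assumption: a cheap local model drafts, a larger model edits.
AGENTS = {
    "reporter": Agent("reporter", "small-local-model",
                      "Summarise the source as a short news brief."),
    "editor": Agent("editor", "large-hosted-model",
                    "Make the text clear and concise."),
}


def run_pipeline(source_text: str, call_model: ModelFn = fake_model) -> str:
    """Pass the text through the reporter, then the editor.

    Each agent sees only its own system prompt plus the previous agent's
    output, so no earlier conversation history leaks between steps.
    """
    reporter = AGENTS["reporter"]
    editor = AGENTS["editor"]
    draft = call_model(reporter.model, reporter.system_prompt, source_text)
    return call_model(editor.model, editor.system_prompt, draft)


if __name__ == "__main__":
    print(run_pipeline("Llama population doubles."))
```

Swapping `fake_model` for a function that actually talks to a model is left as an exercise; the point is only that the role-to-model mapping lives in one small table.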

That’s news covered, and we’ve already seen the weather reported by “AI”; now we just need an automatically-written sports section and some AI-generated funny papers. That’d be the whole newspaper. If only you could trust it.

Story via Reddit.

This is one of the better uses of LLMs. I like it.

I may be missing something here, but I really don’t get the point of the whole “container-ized sandboxing” for LLMs. Why are we pretending there are “reporters” and “editors”? It’s the same LLM that’s doing it behind the scenes. It should instead be scrape web -> see if something is relevant -> summarise -> repeat.

I mean, if you change the context text, message history, whatever you call it, you get access to the LLM without any previous prompts having affected the output. It’s just a matter, I guess, of making the LLM wear different hats: “you’re an editor”, “you’re a reporter”, and so on.

I am working on a Python XMPP chatbot system and I will be honest, I too got really caught up with anthropomorphizing LLM-run personalities. But then it got really complicated and I eventually came to my senses.

LLMs being forward-only in their generation, I’m not surprised that passing the text again as input yields some improvement. It lets the ending of the text affect the earlier parts, which is otherwise not possible.

Whether “you are the editor” or “your job is to make the text clear and concise” as a prompt gives a better result is something worth testing.

Considering all the “swamp filling” that’s happening, one almost needs an LLM to stay on top of it.

I think you meant ‘agentic’ sports section.

Ugh, probably. Thanks for pointing it out. Since that’s the ugliest neologism to blight the English language in decades, I’m not fixing that typo, but replacing it with a real word.

I remember when HackADay surfaced cool shit but here recently it’s all Clanker Slop all the time. 🤣

That’s funny; considering all the hype around how Clankers are going to be able to do everything all the time and you won’t need meat bags to do anything Very Soon™, I find it remarkable how few AI projects cross our desks.

Yeah. I can’t be bothered with this slop. At least an LLM would have written something entertaining to read.

That reminds me more of Transport Tycoon Deluxe.

TTD has a modern successor – OpenTTD that’s still being developed. They just put out a big update last month. It’s available on Steam for free.

The SimCity 2000 newspaper was obsessed with llamas, bringing this project full circle.

Can someone help me find a reference to “Cortexinterface wireless memory recall” that is mentioned in the picture of the Wednesday Jan 21 issue of The Gradient Descent? I can’t find any mention of it on the web. I suspect it is a hallucination.

I think you’re right; I couldn’t find anything either. That’s the rather big fly in the ointment, but hey, look on the bright side: it might be fake news, but it (probably) isn’t being faked to manipulate you.

Why can I hear that SimCity 2000 screenshot?

Oh right, because I played it for hundreds of hours through the most formative period of my life.

I enjoy reading things that are not what I expect to see. Not sure LLMs can do that, if I keep telling them what I want to read about.

As my therapist told me, if you keep doing what you are doing, you’ll keep getting what you are getting.

By visiting things I do not normally visit, I see things I do not expect to see. That is called life.

Cap

If I were to make my own Hackaday with a roster of LLM agents, I’d then have to make a whole litany of agents to act as the commenters. Some would be super helpful, others would yell about lawns with kids on them, and some agent would even have to be me. Could be fun, but ultimately, less interesting I’m sure.

It has been insisted upon that LLMs are destroying the planet. I have since done everything I can within the capacity of my financing to fund as much AI growth as I am able to without starving myself. It seems to upset all of the right people. Spite is a good enough reason to do anything.

Plus I’m sure whatever story an AI comes up with is going to be a lot more informative, and substantially less mentally impotent, than anything you can find on Reddit. Which is not exactly a high bar to step over, but it is certainly higher than what the humans there come up with. If you consider them human.

“now we just need an automatically-written sports section”

Uhhm… The Associated Press (among others) has been using machine-generated sports reporting for a decade now.

The engine is “Wordsmith” by Automated Insights.