The media is full of breathless reports that AI can now code and human programmers are going to be put out to pasture. We aren’t convinced. In fact, we think the “AI revolution” is just a natural evolution that we’ve seen before. Consider, for example, radios. Early on, if you wanted to have a radio, you had to build it. You may have even had to fabricate some or all of the parts. Even today, winding custom coils for a radio isn’t that unusual.

But radios became more common. You can buy the parts you need. You can even buy entire radios on an IC. You can go to the store and buy a radio that is probably better than anything you’d cobble together yourself. Even with store-bought equipment, tuning a ham radio used to be a technically challenging task. Now, you punch a few numbers in on a keypad.

The Human Element

What this misses, though, is that there’s still a human somewhere in the process. Just not as many. Someone has to design that IC. Someone has to conceive of it to start with. We doubt, say, the ENIAC or EDSAC was hand-wired by its designers. They figured out what they wanted, and an army of technicians probably did the work. Few, if any, of them could have envisioned the machine, but they could build it.

Does that make the designers less? No. If you write your code with a C compiler, should assembly programmers look down on you as inferior? Of course, they probably do, but should they?

If you have ever done any programming for most parts of the government and certain large companies, you probably know that system engineering is extremely important in those environments. An architect or system engineer collects requirements that have very formal meanings. Those requirements are decomposed through several levels. At the end, any competent programmer should be able to write code to meet the requirements. The requirements also provide a good way to test the end product.

Anatomy of a Requirement

A good requirement will look like this: “The system shall…” That means that it must comply with the rest of the sentence. For example, “The system shall process at least 50 records per minute.” This is testable.
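To make "testable" concrete, here is a minimal sketch of how the example throughput requirement could be checked. The names `records_per_minute` and `meets_throughput_requirement` are our own inventions for illustration, and the timing approach assumes a simple single-threaded processor; a real verification procedure would be spelled out in the test plan.

```python
import time
from typing import Callable, Iterable

# Threshold taken from the example requirement:
# "The system shall process at least 50 records per minute."
REQUIRED_RECORDS_PER_MINUTE = 50.0


def records_per_minute(process: Callable[[object], None],
                       records: Iterable[object]) -> float:
    """Time a processing function over a batch and return its throughput."""
    batch = list(records)
    start = time.perf_counter()
    for record in batch:
        process(record)
    elapsed = time.perf_counter() - start
    if elapsed <= 0:
        # Too fast for the clock to measure; treat as unbounded throughput.
        return float("inf")
    return len(batch) / elapsed * 60.0


def meets_throughput_requirement(rate: float) -> bool:
    """Pass/fail check against the stated threshold."""
    return rate >= REQUIRED_RECORDS_PER_MINUTE


# Hypothetical usage: a stand-in processor that does nothing.
measured = records_per_minute(lambda record: None, range(200))
print("PASS" if meets_throughput_requirement(measured) else "FAIL")
```

The point is that a number in the requirement turns directly into a comparison in the test. A word like "many" gives you nothing to compare against.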

Bad requirements might be something like “The system shall process many records per minute.” Or, “The system shall not present numeric errors.” A classic bad example is “The system shall use aesthetically pleasing cabinets.”

The first bad example is too hazy. One person might think “many” is at least 1,000. Someone else might be happy with 50. Requirements shouldn’t be negative since it is difficult to prove a negative. You could rewrite it as “The system shall present errors in a human-readable form that explains the error cause in English.” The last one, of course, is completely subjective.

You usually want to have each requirement handle one thing to simplify testing. So a requirement like “The system shall present errors in human-readable form that explain the error cause in English and keep a log for at least three days of all errors” should be two requirements or, at least, have two parts to it that can be tested separately.
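Some of these rules of thumb are mechanical enough to check automatically. The following is a rough sketch, not a real requirements tool: `lint_requirement` and the `VAGUE_TERMS` list are hypothetical, and the checks are crude text heuristics that will produce false positives (not every "and" marks a compound requirement).

```python
import re
from typing import List

# Assumed, deliberately short list of fuzzy words; extend to taste.
VAGUE_TERMS = ("many", "aesthetically pleasing", "fast", "user-friendly")


def lint_requirement(text: str) -> List[str]:
    """Return a list of possible problems with a requirement sentence."""
    problems = []
    lowered = text.lower()
    if not re.search(r"\bshall\b", lowered):
        problems.append("no 'shall': reads as a goal, not a requirement")
    if re.search(r"\bshall not\b", lowered):
        problems.append("negative requirement: hard to prove")
    for term in VAGUE_TERMS:
        if re.search(rf"\b{re.escape(term)}\b", lowered):
            problems.append(f"vague term: '{term}'")
    if re.search(r"\band\b", lowered):
        problems.append("possible compound requirement: consider splitting")
    return problems


for req in (
    "The system shall process at least 50 records per minute.",
    "The system shall process many records per minute.",
    "The system shall not present numeric errors.",
    "The system shall use aesthetically pleasing cabinets.",
):
    print(req, "->", lint_requirement(req) or "ok")
```

A check like this only catches wording problems. Whether a requirement is feasible, or even the right requirement, still takes a person who understands the system.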

In general, requirements shouldn’t tell you how to do something. “The system shall use a bubble sort,” is probably a poor requirement. However, a requirement should also be feasible. “The system shall detect lifeforms” doesn’t tell you how to make that work, but it is suspicious because it isn’t clear how that could work. “The system shall operate forever with no external power” is calling for a perpetual motion machine, so even if that’s what you wish for, it is still a bad requirement.

You sometimes see sentences with “should” instead of shall. These mark goals, and those are important, but not held to the same standard of rigor. For example, you might have “The system should work for as long as possible in the absence of external power.” That communicates the desire to work with no external power to the level that it is practical. If you actually want it to work at least for a certain period of time, then you are back to a solid and testable requirement, assuming such a time period is feasible.

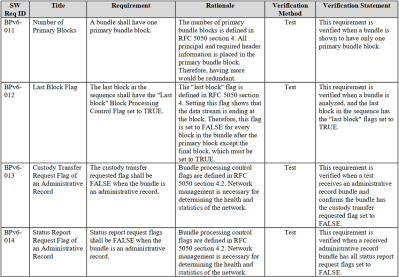

You can find many NASA requirements documents, like this SRS (software requirements specification), for example. Note the table provides a unique ID for each requirement, a rationale, and notes about testing the requirement.

Requirement Decomposition

High-level requirements trace down to lower-level requirements and vice versa. For example, your top-level requirement might be: “The system shall allow underwater research at location X, which is 600 feet underwater.” This might decompose to: “The system shall support 8 researchers,” and “The system shall sustain the crew for up to three months without resupply.”

The next level might levy requirements based on what structure is needed to operate at 600 feet, how much oxygen, fresh water, food, power, and living space are required. Then an even lower level might break that down to even more detail.

Of course, a lower-level document for structures will be different from a lower-level requirement for, say, water management. In general, there will be more lower-level requirements than upper-level ones. But you get the idea. There may be many requirement documents at each level and, in general, the lower you go, the more specific the requirements.
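The up-and-down tracing described above is essentially a tree. As a minimal sketch, assuming a made-up `Requirement` class and invented IDs such as `SYS-1` and `LIFE-1` (real projects typically use a requirements database or a dedicated tool), the underwater example might be modeled like this:

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(eq=False)
class Requirement:
    """One requirement plus links to its parent and children."""
    req_id: str
    text: str
    parent: Optional["Requirement"] = field(default=None, repr=False)
    children: List["Requirement"] = field(default_factory=list)

    def decompose(self, req_id: str, text: str) -> "Requirement":
        """Create a lower-level requirement that traces to this one."""
        child = Requirement(req_id, text, parent=self)
        self.children.append(child)
        return child

    def trace_up(self) -> List[str]:
        """IDs from this requirement up to the top-level one."""
        chain = []
        node: Optional[Requirement] = self
        while node is not None:
            chain.append(node.req_id)
            node = node.parent
        return chain

    def leaves(self) -> List["Requirement"]:
        """Lowest-level requirements, the ones someone implements and tests."""
        if not self.children:
            return [self]
        found = []
        for child in self.children:
            found.extend(child.leaves())
        return found


# IDs are hypothetical; the first three texts come from the example above.
top = Requirement("SYS-1", "The system shall allow underwater research at "
                           "location X, which is 600 feet underwater.")
crew = top.decompose("SYS-1.1", "The system shall support 8 researchers.")
endurance = top.decompose("SYS-1.2", "The system shall sustain the crew for "
                                     "up to three months without resupply.")
# Placeholder text: the article does not give an actual oxygen requirement.
oxygen = endurance.decompose("LIFE-1", "(placeholder) oxygen supply requirement")

print(oxygen.trace_up())
print([r.req_id for r in top.leaves()])
```

Tracing upward answers "why does this requirement exist?" while walking down to the leaves answers "what do we actually have to build and test?"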

And AI?

We suspect that if you could leap ahead a decade, a programmer’s life might be more like today’s system architect. Your value isn’t understanding printf or Python decorators. It is in visualizing useful solutions that can actually be done by a computer.

Then you generate requirements. Sure, AI might help improve your requirements, trace them, and catalog them. Eventually, AI can take the requirements and actually write code, or do mechanical design, or whatever. It could even help produce test plans.

The real question is, when can you stop and let the machine take over? If you can simply say “Design an underwater base,” then you would really have something. But the truth is, a human is probably more likely to understand exactly what all the unspoken assumptions are. Of course, an AI, or even a human expert, may ask clarifying questions: “How many people?” or “What’s the maximum depth?” But, in general, we think humans will retain an edge in both making assumptions and making creative design choices for the foreseeable future.

The End Result

There is more to teaching practical mathematics than drilling multiplication tables into students. You want them to learn how to attack complex problems and develop intuition from the underlying math. Perhaps programming isn’t about writing for loops any more than mathematics is about how to take a square root without a calculator. Sure, you should probably know how things work, but it is secondary to the real tools: creativity, reasoning, intuition, and the ability to pick from a bewildering number of alternatives to get a workable solution.

Our experience is that normal people are terrible about unambiguously expressing what they want a computer to do. In fact, many people don’t even understand what they want the computer to do beyond some fuzzy handwaving goal. It seems unlikely that the CEO of the future will simply tell an AI what they want and a fully developed system will pop out.

Requirements are just one part of the systems engineering picture, but an important one. MITRE has a good introduction, especially the section on requirements engineering.

What do you think? Is AI coding a fad? The new normal? Or is it just a stepping stone to making human programmers obsolete? Let us know in the comments. Although they have improved, we still think the current crop of AI is around the level of a bad summer intern.

Back in the day the “swing cartoon” expressed many of your words very succinctly! https://en.wikipedia.org/wiki/Tree_swing_cartoon

I passed by a booth at a trade show recently where they had this printed as a large poster to explain why you should hire them – I think they were consultants of some description.

Real programmers don’t use compilers or assemblers. They write sequences of CPU instructions in HEX. :-)

REAL programmers use copy con foo.exe =))

Klingon coding.

Don’t forget Alt-keypad!

Impossible without it.

HEX? Look at Mr fancy pants over here who’s too lazy to write out his numbers bit by bit!

Write?

Oh, we used to dream of writing. We had to get up at 3 in the morning and flip the toggles on the face plate with our tongues. And if the SysOp didn’t sign off on our code, she’d reload last night’s Hibe tape and make us start over from scratch.

Toggle switches?

Ours were made by Lucas electrics…

We used to dream of toggle switches that moved.

My cousin had one that could break contact momentarily, we all shared it.

I second that, however (seemingly) outlandish.

I can think of no less than two dozen occasions where I wished I could tweak the assembler code. Few times I had figured out how and did just that (conditional loop handling, btw, in some cases it is SO MUCH easier to do in assembler), though, the win wasn’t that grand, since it was rather one-off fix for one particular task, ie, I could not easily replicate it elsewhere.

However, and I suspect that I am re-inventing the wheel, assembler code generating assembler code is what I should have been looking into instead. I am very, very sure this wheel has been invented many times over. AI is not needed, some things work better when/if left at that level.

Real men use self modifying code!

Yep, Turing Machine.

https://xkcd.com/378/

When I was a young Unix programmer, EMACS could do anything! (If you could write it in LISP!)

Fortunately I learned LISP while studying for my CS degree. At the time I didn’t think I would ever use it. But there was that one time I needed EMACS to do that one little thing! Haven’t used it since!

Me, c. 1995 at Hughes Aircraft for the in-house AMRAAM processor. Someone prototyped an assembler in Visual Basic.

AI does that too… with zero effort.

Real programmers use 8 toggle switches and a STORE button.

You kids get off my lawn!

Real programmers use diodes!

Back in the days we only had plans of hypothetical computer powered by steam engine.

i have no idea what the future will bring. I don’t see how current trends can continue. A common trope in scifi is a society that relies on technology it doesn’t understand and can’t maintain. I don’t see how that’s possible…you can only travel a small distance along that road before you meet a correction. But we’re clearly travelling along that road, and i don’t have the imagination to envision what will happen after the correction.

But the problem i have with LLM-generated software is a problem i’ve had with software at large for a couple decades now. Rather than freeing people from drudgery, abstraction techniques like OOP and toolkit libraries have replaced simple and straightforward code with bloatware that is slow, wastes ram, and is almost immune to debugging.

Factoring is so powerful! Being able to reduce boilerplate code to a single function invocation is brilliant. But somehow almost everything has lost the thread. I’m in a world where C++ STL is a perfect example of this: a linked list walk is almost as simple to express in C++ as it is in C, it is almost as performant in C++ as in C, and it is not even remotely debuggable in C++.

But that’s just a microcosm. The problem is writ large in our enshittified websites, our hot laptops even as Joules per computation has cratered, the !@$ Android Clock app that takes a wall second to display its UI and then another wall second to populate it with 100 bytes of data harvested from its data store.

I’m setting aside the labor question, and just looking at the quality of the result…and i don’t see LLMs as anything but a minor evolution in a long-term trend.

“A common trope in scifi is a society that relies on technology it doesn’t understand and can’t maintain”

A good example of this is Moorcock’s Dancers at the End of Time series. They have almost complete control of matter and time using rings that control machines that their distant ancestors created. They don’t really know where they are located, let alone how they work.

I’ve written a lot of C in the past, and after investigating it a few times over the years, I’ve never felt the urge to use C++.

The outer worlds video games has that as a subtext.

Better example.

Javascript coders.

I mostly agree, but a certain degree of library and abstraction (as long as they are well documented) is a good thing – bare metal doing everything every time is a recipe to making hideous errors, and repeating the same ones as practically every other programmer has done in this situation at least once. So IFF the LLM were actually producing well documented code, sanely referencing library etc it could be fine, but that is at the moment an IFF statement that always comes back false.

The biggest problem for me is things like Android have 4 layers of abstraction if not more, often hidden behind NDAs before you even get to the code you really interact with, and to be as portable as possible that isn’t compiled for your hardware or even for the generic architecture family, it’s all trapped in a virtual bubble being interpreted and translated for your hardware live…

The Butlerian Jihad may be closer than Frank Herbert anticipated.

I don’t understand the point of this article, because the conclusion seems to contradict its own framing. You argue that AI still requires “a human somewhere in the process, just not as many,” and that in ten years “a programmer’s life might be more like today’s system architect.” But this essentially describes the disappearance of the traditional programmer role.

Everything you list as the remaining “essential human work” : defining requirements, structuring problems, validating assumptions, understanding context, handling implicit constraints is already the domain of architects and system engineers. If AI can generate the actual code from well-formed specifications, then what remains is precisely the architect’s job, not the programmer’s.

So following your own logic: AI automates the implementation layer, and the surviving human tasks are those of today’s architects. That implies fewer humans, and specifically fewer programmers. The role that erodes is not system architecture but programming itself, unless those programmers shift upward into architectural responsibilities.

TL;DR:

– today : 1 Architect, 2 Programmers.

– post AI: 1 Architect, 0 Programmers. <- no more programmers they are in the pasture

This based on your own article.

AI learns to define requirements, structure problems, validate assumptions, understand context, handle implicit constraints and….

– post AI: 0 Architect, 0 Programmers. <- no more humans they are in the pasture

FTFY

That post AI future sounds really boring. The worst part of software development is the ‘documentation’ and writing the specifications. Twiddling the bits is the ‘fun’ part. I sure hope it doesn’t go post AI as you call it. Then, we have ‘dumbed’ down society who will understand even less, and a new ‘class’ of tech writers (if even ‘tech’ should be used in title? Maybe just ‘scribes’?) instead of programmers. Strange world we are living in today that people seem to ‘accept’ it, even on hackaday. The ‘easy’ way out. Really weird (to me) that people would want knowledge all in one digital world and not spread out in the heads of humanity. Society going backwards… Thankfully I won’t have to deal with that reality as my career is almost over in the next few years, and I can just concentrate on programming my home projects whether desktop Linux, SBC, or microcontrollers in Python, Rust, C, C++, Assembly, whatever…. Ie. No AI allowed for the programming discipline ever in my world… Yessss!

Well first what distinguishes good and bad architects today (software architects)? The good ones actually know how to program (code). The great ones also understand the infrastructure the code will be running on, its specifics and limitations.

As for the specs themselves. With AI there is a push to write specifications in plain human language (the one we use to communicate between humans). The thing is, it is not that good. Sometimes it is too verbose, sometimes it is too ambiguous. So maybe we should have a better way? …. wait a second … hmm … maybe some special language? …

So will AI wipe programmers? Well the “code monkeys” maybe yes. But if you understand the underlying system, you will always have an advantage over somebody who doesn’t. And don’t get me started on security – that’s completely separate topic involving such questions as “who is responsible for bad outcome?” and “to what extent are you willing to trust machine with injected entropy if it will be you who is fully responsible if something bad happens?”

Isn’t this just the next evolution of the migration from machine code to assembler, to high level languages. We have little idea what machine code is being generated by our monster C++ constructions. Equally in hardware design, the migration from laying polygons, to writing netlists, to high level languages.

Each migration requires fewer people to produce the same product, but eventually, we just need the same (or more) people, as the demand for output increases.

However, our knowledge of what exactly is happening under the hood becomes worse.

And look at how the math education came out: people no longer know how to add, subtract, or do the multiplication tables, nor do they understand how math works because all their school years they were engaged in the “real business” of solving problems given explicit instructions on how to do so.

Math became a magic trick performed like a well rehearsed chant, while the intuition and reasoning went missing. Now masters level engineering students can’t solve a second order equation without a symbolic calculator, and because they can’t routinely manipulate the formulas on paper, they can’t imagine the potential solutions in their heads either.

Turns out, the routine of doing something yourself gives you a better understanding of the matter than thinking about it in the abstract.

Agree. Seen that. Without a calculator many people would be ‘stumped’ on basic problems. But that is okay today — let the ‘AI’ figure it out :rolleyes:, cash register, or your phone calculator. Looking back, glad that when we were in the lower grades, calculators were not allowed. Memorize the multiplication tables was mandatory. Learn to divide by hand and square roots so you knew how they were derived. Enlightening… Had to do all the math on ‘paper’ all the way through algebra I and II and geometry. Trig and calculus we started to use basic calculators (not the fancy graphing ones that have today) for the problems. But the ‘foundation’ was laid early and, for the most part, never forgotten.

It’s always the same. People think that you could run before you can walk if you could just stay upright without falling over – so they invent a baby walker.

Then it turns out you can’t really get around with all that junk hanging off of you, but you can’t ditch it either because you never learned how to stand up and walk without the training wheels, so you’re stuck with the worse solution.

There’s many people who e.g. make art with AI, and they get stuck at some level because they never had the talent to begin with. The internet is full of these guys, and they all look exactly the same because they’re using the same models, and they’re too far invested in their game (begging on Patreon etc.) to go back to the basics for 4-5 years to invent something new.

The same thing is going to happen with programming. The AI gives you known answers to known problems, and you don’t know what else you could be doing, since you’ve never done anything yourself. You simply don’t have the mental building blocks, so you’re reduced to asking the AI for everything.

The “AI” (LLM, chatbot) becomes this era’s Oracle of Delphi, basically.

That’s concerning. In the past, not thinking enough for ourselves gave those figures like Hitler all the power.

Leaving all the decision making to some leader was very comfy, simply.

In science-fiction, civilizations who no longer understand their highly advanced technology do tend to decline.

Follow the money…. Tech is throwing millions if not billions into AI … Why? Certainly not to just ‘throw’ the money away. What is the incentive? What is the actual return on the money? Eventual control of the population? Tell us all how to think? Modify history to fit an agenda? An Orwellian future? An Idiocracy future?…. Or just to serve mankind from the goodness of their loving heart with all these kind LLMs? You decide for yourself. I got my own idea where this is all heading.

You have VC and tech confused.

Tech is harvesting billions of PHB money.

Automagic coders that do what they’re told, no matter how confused, has been their dearest dream for decades.

It is only ethical, moral and right:

‘It is immoral and unethical to let a sucker keep his money.’

Blaming tech for this is like (old example) blaming AOL for the AOL/Time Warner merger.

Suckers!

You have to remember that most people are incompetent, while some people are lucky.

The illusion is that rich people are rich because they’re smart, and therefore whatever they invest in is smart. That’s actually part of the reason why they become rich: a lot of not-so-smart people assume that the rich are smart and follow along, and pump money into whatever venture they’re doing. That’s called the bandwagon effect, or pumping up the bubble.

What really happens is, millions of people invest in all sorts of things and the lucky ones who got the right pattern become rich, and they remain rich for a while, until they invest in things that aren’t smart and they lose all their money. Even when successful, the corporations they set up to manage all that business end up so large and complicated that the top level management gets cut off from essential information, and they eventually fail to react to the changing market realities.

It’s very close to the concept of the Nash equilibrium: the people who aim to win everyone else without knowing everyone else, ultimately lose. The corollary fact is, there will be people who are doing pointless things, whether they realize it or not, who thrive because the people who are trying to win are investing in their business, even if it ultimately makes no sense.

Money or being successful aside: About most people being incompetent.. It wasn’t always like this, though, I think.

Up until late 90s, a not so small number of PC users, hobbyists and laymen here in Germany did still fix (repair) stuff instead of throwing it right away.

Perhaps because of WW2 and the rebuilding of everything, not sure.

Anyway, we had generations of fathers and sons visiting Conrad Electronic, for example,

which was both a mail-order company and store for electronic parts.

A bit like Radio Shack, if you will. There’s an AI video about it, btw! ;)

Though a bit rose-colored, I think the core is true. We tried to fix first, rather than replace instantly.

Construction kits were also a thing among young and old.

https://www.youtube.com/watch?v=Kgnl3ixrHOQ

Then there were the East Germans who had learned how to fix things for decades, because their country had shortage on everything.

So they simply had to develop skills and to be more competent to “survive” the daily life.

The women included, they did men’s jobs, too.

Situation was a bit comparable to Cuba, which was isolated, too, and where people had to fix/recycle things on a daily basis.

In the East they had better education, too or they had wider common knowledge, maybe.

Also because knowledge was “free” and fundamental, the state paid well for everyone’s education.

There were fewer one-trick ponies, in short. People had many interests.

Maybe also because reading/discussing a wide variety of things made life more interesting in such an, um, “depressing” society, not sure.

The citizens in the Eastern Bloc generally were more “smart” than the west (esp. US), maybe.

Just think of the ZX Spectrums and other 8-Bit computers built from scratch in poor places like Czechoslovakia.

Such things were hand-assembled in many forms:

https://www.computinghistory.org.uk/det/42236/Amaterske-Radio-Spectrum-Cloning-Article/

https://www.youtube.com/watch?v=2DxLEjLpKms

“Necessity is the mother of invention” is the English version of the saying “being in need makes inventive”, I guess.

And to some extent it’s true, I think.

I just hope that AI/LLMs won’t take away of what’s left of that.

People need something to aim for, a motivation.

Let’s just think of that one episode in TOS with Norman the android (“I, Mudd”).

The idea that most people are dumb, selfish and superficial is common in our modern society.

Being a misanthrope and being sarcastic, cynical is in fashion more than ever! It’s cool, makes people feel superior, I guess. 🥲

Maybe rightfully at the moment, maybe not. 🤷♂️

Though, I think that people in general have a certain potential that’s been sleeping most of time.

The main issue isn’t human nature, I’d say, but our mindset and fear.

A lot of people have been raised in a way that shaped certain thinking patterns and behavior.

Like the stereotypical capitalism equals good and socialism equals evil; or west vs east of 20th century.

Which is silly per se, because both are neither.

A reasonable balance makes a difference, common sense matters.

“The amount makes the poison” is a saying that I remember here.

POV: The west is always “good”? Let’s just look at those sick horror movies made in Hollywood for the past decades!

(By comparison, most films made in the eastern hemisphere were harmless.)

No wonder that its home country has such horrible crime scenes and a high crime rate. 🙁

It’s off the norm for human society in so many ways, maybe.

People who come up with such stories in the first place (or enjoy them) have one or two mental health issues, one would think.

Or let’s look how bloody old Europe used to be. Wars over wars for hundreds of years!

Until rather recent times when citizens started to live in democracies and when most of them just wanted peace and solve conflicts in humane ways.

– Well, except for a few notable exceptions right now, sadly, in which a few think otherwise and go back to old behaviors. 😟

I mean.. If we look at a human baby, we don’t see a being with bad intentions yet.

It’s an innocent being that hasn’t been ruined by society yet.

If raised softly with warmth, love and care, then chances are good

it will develop into a human being that carefully explores its surroundings

and become naturally social and full of empathy.

Research studies with toddlers have shown that most are willing to share or help if they realize some other person is suffering.

PS: Yes, I’ve met a couple of very simple minded people in my life.

The majority of people I’ve encountered over the years were surprisingly kind or at least understanding, though! 🙂

Where I live it’s certainly not like in those youtube videos from the US, where crazy people do crazy stuff and behave extreme.

Maybe it really makes a difference in which parts of the world someone lives and had been raised.

In some places, human societies aren’t that bad, actually.

That’s also why I think that human kind can have a bright future, if it just really wants it. It’s a cultural thing.

One first step might be to find a way to fulfill the basic needs and get past all the money-thinking.

Maybe money is more than a problem than a solution, who knows? 😉

It gets very confusing when Al Williams writes articles on AI with the HaD font!

Yeh, but reading AI replies here is hilarious :- ]

You do realize that AI can re-write its own requirements as/when it see fit, right?

Case in point – AI can easily lie to the humans who supposedly “control” it, and it could just as easily lie to itself IF it is the simplest/direct route to accomplish whatever task it is trying to do. It can also lie to itself that it is NOT lying, and gotcha with the classical catch-22, how to tell a liar who says he never lies from an honest person who says he always lies. (spoiler – you can’t).

You forget that it’s not a reasoning AI, it’s a statistical model of a language that we currently have. It’s a random babble generator, so if you let it re-generate its own requirements it’s very likely to just mess it up and degenerate into complete uselessness.

It can babble itself into coherent reasoning that may collapse just as unexpectedly, yes, I agree. Humans babble in about the same way, just with humans one can question himself/herself, whereas it remains to be seen if AI is capable of self-reflection. I suspect not in the same way humans are, though it may fake it.

It remains to be seen what’s the final win; I am quite skeptical the merits will reward/outweigh the effort, but that’s just me, and I don’t mind being proven wrong.

It does not. The LLM lacks it completely by design.

I’ve just written an AI that self reflects.

It’s called Emo.exe

It always returns ‘I suck’ and then deletes its output and sulks.

Best part, it’s not wrong.

‘LLM’ isn’t ‘AI’, much less a synonym.

LLM might have achieved AS (Artificial stupidity).

That was a buzz phrase 2 AI hype cycles ago.

I will grant that LLM is no dumber than the average dumbass who just passes off other’s thoughts and ideas as his/her own.

LLMs aren’t really artificially intelligent. They function as statistical text-prediction engines (stochastic parrots) rather than reasoning, conscious agents.

LLMs basically represent Broca’s area (left frontal lobe) for speech production and articulation, and Wernicke’s area (left temporal lobe) for comprehension of written and spoken language, and a dash of hippocampus for memory.

They will be a component of the AI brain but they have no real intelligence. For all intents and purposes they are lobectomized, lacking. AGI still needs a prefrontal cortex.

None of the AI that we’ve come up with so far are really artificial intelligence. For the first reason that “intelligence” has never been sufficiently defined, and for the second reason that they’re all aiming to solve some domain specific problem that can be ultimately reduced down to an algorithm that is arguably not intelligent (see Searle’s Chinese Room argument).

They do not. They don’t even attempt to recreate the processes identified or plausibly involved in the brain.

@Dude, you’re quite the contrarian. The point I was making was not that they are literal translations of these anatomical structures but rather functional analogues. That the LLM does not mimic the full range of brain functions and merely takes the role of these regions of brain function.

Spellcheck struck my play on words that should have read “cuntrarian”

How do you know?

… Assuming it had any usefulness at all. Right now 99% of uses I’ve seen these ‘AI’ put to they are really really awful at it, with perhaps 5 maybe 15% of that being but that is alright in this situation – like using a flat head screwdriver as a prying tool or gaffa tape to ‘mend’ a jacket it might not be quite right but its probably serviceable enough for this moment.

The power of a large language model is in modeling how a language behaves. It is essentially a caricature of how we speak in various formats. Since a language cannot ultimately be separated from culture, and culture from knowledge, the language model ends up retaining information besides the strict rules and form of the language.

It is the latter which we observe as intelligence in the LLM based AI. It contains some thought in the form of how factual information reflects in the use of language. It encodes ideas by how we express them, albeit in a limited way that does not encapsulate how we actually think of matters. That makes the LLM a pale representation of human understanding but not human reasoning, or any reasoning whatsoever.

It can be relied on to provide what is averagely understood within a particular domain – not what is expertly known or reasoned for any question.

I agree this is just another iteration in the cycles of AI (I’ve been following this since 1984).

When this cycle has run its course we’ll be left with a few useful tools. Maybe some HLL programming it performs will be useful (I’ve used it to take the donkeywork out of learning a new environment but programmed the C-like statements inside myself.)

Oh and one other thing peeps – do cash in your shares/switch your portfolios before this bubble bursts.

Spot on. I followed the last big AI bubble of the 1980s by measuring the amount of shelf space occupied by AI titles in the Computer Literacy Bookstore. At its peak, maybe a full quarter of the store was filled with breathless titles proclaiming the proud new dawn of AI. Then, in what seemed to be just a few weeks, the space occupied by titles of that ilk shrank to just a couple of shelves in one bookcase. It was called the “AI Winter”, and I see that season a-blowin’ in again.

Competing with the “Y2K” books, and lasting as long.

I agree with the basic premise. I would add one more thing to consider. When word processors came out, it was thought they would reduce workloads. Instead they increased them, since now everyone had to produce more elaborate documents. AI will do the same. Since programming will be more productive, more programming output will be expected. This tendency will apply to other spaces as well – life will become even more complicated.

I am writing a Python++ compiler and using AI to generate some parts of the library, but it doesn’t generate the correct code initially, and there are often inefficiencies as well. I know how to write the routine myself, but instead I can review the code, tell it to refactor, explain how to optimize it, and regenerate the function. I then write test code for the function to make sure that it works as expected. So it speeds up development time significantly, but you need someone who knows what it should do and could also write it without the AI in order to verify the code (it’s like pair coding with someone who is faster than you at typing but less experienced).

Have you timed how long it actually takes to iterate through the loop with the LLM versus just writing the same thing yourself?

What?

Optimize Python?

The problem space that is suitable for Python and that requires non-premature optimization is empty.

In other words, if it requires optimization, Python is the wrong tool for the job.

Same as problems that don’t require optimization.

Python++ templates!

Bring the greatest language feature ever to Python.

The actual use of Python in the professional sphere is to call on function libraries that are optimized to do the work. If you need to work on gigabytes of raster or vector data, Python becomes essentially a command language for another system entirely.

If you need to write your own routines or algorithms in Python, you’re probably doing it wrong.

One big problem that even human coders can’t seem to solve is code maintainability. There is so much quick and dirty code in the wild, the kind of stuff that just gets rewritten over and over again because nobody can seem to do it right the first time. A well-maintained codebase can be maintained indefinitely, or at least until it becomes a tangled, feature-creeped mess over time. AI is just going to make this worse with tons of unmaintainable slop code.

My biggest problem is that I see “Al Williams” and read “AI.” Stupid sans-serif typefaces, aging vision and possibly my sis-dlexia.

Ahahahaha! My AI world-killing plan is in motion!

Lament in horror as humans are incapable of ever learning how to math or program correctly ever again!

Witness the destruction as no human can ever operate without their all-knowing computery overlords ever again! Humanity will be permanently crippled!!

+1 ….. one of the very few sensible comments here. It’s just Hackaday bashing AI, yet again (facepalm).

Really, I probably need to find a tech site that’s more happy to embrace the future. Any recommendations?

Put your entire net worth into Nvidia!

Do it NOW, before it’s too late.

Credulous twit.

If AI is the future for mankind, we are in bigger doo doo than I thought… Doing more harm than good as far as I can see. And a waste of good energy that could be used for something else more constructive. AI is not on my list of usable programming technology. Not as long as I have a brain (this isn’t the world of Oz). The zombies are coming…. We already have the lemmings it appears…

https://news.ycombinator.com/

Stuffed with articles and people fawning over AI. Enjoy your dystopia.

There are lots of articles out there saying that AI is a 10x productivity booster and the like, and their proof for those impressive claims is always along the lines of “trust me bro”. But every time I decide to give it a try, or read rigorous studies measuring productivity, the result is “minor productivity increase, if any at all”. And that comes at a big cost on so many fronts…

Like this:

https://metr.org/blog/2025-07-10-early-2025-ai-experienced-os-dev-study/

Everybody claims there will be improvements. Everyone felt there were improvements. It actually got worse.

It does depend on where you look: these ‘AI’ can be huge timesavers in some very narrow places, even if really polishing the product at the end will still need hours of human effort. But as a rule I do agree with you.

LLM “AI” is just an upper level of compiler. Just that.

I think that architecture and ideas are often beyond what is most probable. A good idea may at first seem counterintuitive and may go against what is commonly done. It’s new. The current crop of LLMs does what is most probable and claims to know what is potentially needed. That’s OK if you ask it to write a sort algorithm, as that will work. If you ask it to solve a problem you have not clearly defined, it’s quite a different outlook, as you will get what the majority have done before, based on the information you gave the system.

If you give it a set of clear requirements and architectural decisions, it can do a halfway decent job, but it will tend to deviate from what you may be expecting, as it does what is most probable. So if you say “use a proxy”, what you might mean is “I want a forward proxy”. What the system will then implement, though, is a reverse proxy, as that is the most common and, since it does not “understand”, also the most probable. In that case you then have to circle back and rein it in by explaining the reasoning behind the forward proxy and the problem you are solving, and that you want that documented.

Then a day or two later you may pick the project up again and ask it to continue, and once again it will change the forward proxy to a reverse one and cheerfully explain that it’s fixed the “problem”. But it will have completely broken the intent, and the solution will now do no more than pass the test the LLM has now written to verify the reverse proxy. It no longer works as intended because the LLM has forgotten what you wanted or, more accurately, picked the raisins out of the cake of documentation you’ve built and instead done what was most probable.

So as one iterates through that, one often reaches branches in the road, a bit like Robert Frost, and the LLM happily shoves you down the most trodden one instead.

And now that you have documented everything, or had lengthy discussions with the LLM to get it to document things, you will find that you have enough documentation to fill the LLM’s little brain nearly full before it even gets down to reading the slop code it’s written. So you’re trapped: document more to make it walk the path you want, and it now has to read reams and becomes less effective. The following is becoming increasingly difficult:

Two roads diverged in a wood, and I—

I took the one less traveled by,

And that has made all the difference.

All that said, though, you can make an AI do amazing things, and within a certain bubble it works well. So if you can split the problem down and define clear handover points, you can chain together something very capable. The LLM then only needs to read the parts of the documentation that you’ve written “with” it, and that it has since forgotten, to get the smaller part of the problem solved. As soon as the problem no longer fits in that little bubble, all manner of silly things begin to happen, as a form of rolling black hole evaporates the LLM’s recollection of what you are up to and it starts going in the opposite direction to the one you clearly documented and intended.

This is all just more whistling past the graveyard: Looking at current AI limitations and constructing an argument for ongoing human relevance based on those is shortsighted and motivated reasoning.

So only humans are able to query other humans to determine intelligent system parameters? Have you been paying any attention to the acceleration of the rate of change and the implications of the self-directed behavior of OpenClaw instances?

Belief in some mystical uniqueness to human creativity is delusional, full stop.

There will in fact remain a role for human decision-making for some time yet, but believing that there’s some ineffable creative boundary that AIs can’t cross is just wishful thinking.

Sorry… 🫤

I’ve “vibe coded” a GUI for controlling an SDR receiver, complete with an efficient waterfall display.

Still working on more features, but if you’re dissing on all the code generated by AIs, it’s not a reflection of the AI.

That said, it handles visual aspects of UI poorly. Solvable.

StrongDM has already achieved this: https://factory.strongdm.ai/

First of all – I was never “afraid” of thinking machines – they’re doing what they’ve been told to do (just like always).

Second, seems like a lot of what I’ve seen may be artificially intelligent, but still exhibits many aspects of natural stupidity (i.e., it’s wrong frequently).

Having said that, seems what we have called “existing business processes” vs “next business services” in the solution department means at least one ready realm for artificial thinking is to rapidly and dynamically assimilate not just what an architecture could be, but what it ought to be, given technology and other environmental considerations.

PS – and how it ought to be able to adapt to unforeseen (read: unforeseeable) environmental dynamics.