Hackaday retro edition: Now optimized for embedded devices!!

Even in a field you think you know intimately, the Internet still has the power to surprise. Sound cards of the 1990s might not be everyone’s specialist subject, but since the CD-ROM business provided formative employment where this is being written, it’s safe to say that a lot of tech from that era is familiar. It’s a surprise then when along comes [DOS Storm] with a new one. The IBM Mwave was the computer giant’s offering back in the days when they were still pushing forward in the PC space, and sadly for them it turned out to be a commercial disaster.

The king of the sound cards in the ’90s was the SoundBlaster 16, which other manufacturers cloned directly. Not IBM of course, who brought their own Mwave DSP chip to the card, using it as both the sound card and the engine behind an on-board dial-up modem. This appears to have been its undoing, because aside from its notoriously flaky drivers, using both sound and modem at the same time just wasn’t a pleasant experience. To compound the problem, Big Blue resorted to trying to bury the problem with NDAs rather than releasing better drivers, so unsurprisingly it faded from view. Perhaps the reason it was unfamiliar here had something to do with it not being sold in Europe, but given that the chipset found its way into ’90s ThinkPads, we’d have expected to have seen something of it.

In the video below the break he introduces the card, and with quite some trouble gets it working. There are several demos of period games which sound a little scratchy, but we can’t judge from this whether they’d have sounded better on the Creative card. If you’d like to immerse yourself in the folly of ’90s multimedia, have a little bit of Hackaday scribe reminiscing.

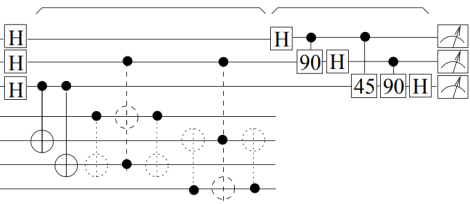

If you are to believe the glossy marketing campaigns about ‘quantum computing’, then we are on the cusp of a computing revolution, yet back in the real world things look a lot less dire. At least if you’re worried about quantum computers (QCs) breaking every single conventional encryption algorithm in use today, because at this point they cannot even factor 21 yet without cheating.

In the article by [Craig Gidney] the basic problem is explained, which comes down to simple exponentials. Specifically, the number of quantum gates required to perform factoring increases exponentially: QCs managed to factor 15 in 2001 with a total of 21 two-qubit entangling gates, but extrapolating from that circuit, factoring 21 would require 2,405 gates, or about 115 times more.
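As a quick sanity check on that ratio, the two gate counts quoted above can simply be divided. This is only arithmetic on the article's figures; the variable names are ours.

```python
# Gate counts as quoted above from [Craig Gidney]'s article.
gates_factor_15 = 21      # two-qubit entangling gates used to factor 15 in 2001
gates_factor_21 = 2405    # extrapolated gate count for factoring 21

ratio = gates_factor_21 / gates_factor_15
print(f"Factoring 21 needs about {ratio:.1f}x the gates of factoring 15")
```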

The article explains that this is due to how Shor’s algorithm works, along with the overhead of quantum error correction. Obviously this puts a bit of a damper on the concept of an imminent post-quantum cryptography world, with a recent paper by [Dennis Willsch] et al. laying out the issues that both analog QCs (e.g. D-Wave) and digital QCs will have to solve before they can effectively perform factorization, such as a digital QC needing several million physical qubits to factor 2048-bit RSA integers.

Although it can be hard to imagine in today’s semiconductor-powered, digital world, there was electrical technology around before the widespread adoption of the transistor in the latter half of the 1900s that could do more than provide lighting. People figured out clever ways to send information around analog systems, whether that was a telegraph or a telephone. These systems are almost completely obsolete these days thanks to digital technology, leaving a large number of rotary phones and other communications systems relegated to the dustbin of history. [Attoparsec] brought a few of these old machines back to life anyway, setting up a local intercom system with technology faithful to this pre-digital era.

These phones date to well before the rotary phone that some of us may be familiar with, to a time when landline phones had batteries installed in them to provide current to the analog voice circuit. A transformer isolated the DC out of the line and amplified the voice signal. A generator was included in parallel which, when operated by hand, could ring the other phones on the line. The challenge with this build was keeping everything period-appropriate, with a few compromises made for the batteries, which are D-cells in a recreation case. [Attoparsec] even found cloth wiring meant for guitars to keep the insides looking like they’re still 100 years old. Beyond that, a few plastic parts needed to be fabricated to make sure the circuit was working properly, but for such a simple machine the repairs were fairly straightforward.

The other key to getting an intercom set up in a house is exterior to the phones themselves. There needs to be some sort of wiring connecting the phones, and [Attoparsec] had a number of existing phone wiring options already available in his house. He only needed to run a few extra wires to get the phones located in his preferred spots. After everything is hooked up, the phones work just as they would have when they were new, although their actual utility is limited by the availability of things like smartphones. But, if you have enough of these antiques, you can always build your own analog phone network from the ground up to support them all.

Although iPads generally tend to keep their resale value, there are a few exceptions, such as when you find yourself burdened with iCloud-locked devices. Instead of tossing these out as e-waste, you can still give them a new, arguably better purpose in life: an external display, with touchscreen functionality if you’re persistent enough. That persistence was supplied by [Tucker Osman], who spent the past months making the touchscreen functionality play nice in Windows and Linux.

While newer iPads are easy enough to upcycle as an external display because they use eDP (Embedded DisplayPort), the touch controller relies on a number of chips that are normally initialized and controlled by the CPU. Most of the time was thus spent on reverse-engineering this process, though rather than fully reverse-engineering it, the initialization data stream was simply recorded and played back.

This approach requires that the iPad can still boot into iOS, but as demonstrated in the video it’s good enough to turn iCloud-locked e-waste into a multi-touch display. The SPI data stream that would normally go to the iPad’s SoC is instead intercepted by a Raspberry Pi Pico board which pretends to be a USB HID peripheral to the PC.

If you feel like giving it a shot yourself, there’s the GitHub repository with details.

Thanks to [come2] for the tip.

Due to historical engineering decisions made many decades ago, a great many irrigation systems rely on solenoid valves that operate on 24 volts AC. This can be inconvenient if you’re trying to integrate those valves with a modern smart home control system. [Johan] had read that there were ways to convert these valves to more convenient DC operation, and dived into the task himself.

As [Johan] found, simply wiring these valves up to DC voltage doesn’t go well. You tend to have to lower the voltage to avoid overheating, since the inductance effect used to limit the AC current doesn’t work at DC. However, even at as low as 12 volts, you might still overheat the solenoids, or you might not have enough current to activate the solenoid properly.

The workaround involves wiring up a current-limiting resistor in series with the solenoid, with a large capacitor in parallel with the resistor. When firing 12 volts down the line to a solenoid valve, the resistor acts as a current limiter, while the parallel cap is initially a short circuit. This allows a high current initially, which tails off to the limited value as the capacitor reaches full charge. This ensures the solenoid valve switches hard as required, but keeps the current level lower over the long term to avoid overheating. According to [Johan], this allows running 24V AC solenoid valves with a 12V DC supply and some simple off-the-shelf relay boards.
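To get a feel for how the resistor and capacitor shape the current, here is a minimal sketch of the circuit described above: a 12 V supply, a series resistor with a capacitor across it, and the solenoid coil modeled as a plain resistance. The component values are assumptions for illustration and are not taken from [Johan]'s build, and the coil's inductance is ignored to keep the math simple.

```python
import math

# All component values below are assumptions for illustration only;
# they are not taken from [Johan]'s build.
SUPPLY_V = 12.0        # DC supply voltage (volts)
COIL_OHMS = 30.0       # assumed solenoid coil resistance (ohms)
LIMIT_OHMS = 30.0      # assumed series current-limiting resistor (ohms)
CAP_FARADS = 2200e-6   # assumed capacitor across the resistor (farads)


def solenoid_current(t_seconds: float) -> float:
    """Coil current at time t after switch-on, ignoring coil inductance."""
    # The capacitor sees the resistor in parallel with the coil.
    tau = CAP_FARADS * (LIMIT_OHMS * COIL_OHMS) / (LIMIT_OHMS + COIL_OHMS)
    # Voltage the capacitor (and resistor) settle to once fully charged.
    v_final = SUPPLY_V * LIMIT_OHMS / (LIMIT_OHMS + COIL_OHMS)
    v_cap = v_final * (1.0 - math.exp(-t_seconds / tau))
    # Whatever voltage is not dropped across the capacitor is across the coil.
    return (SUPPLY_V - v_cap) / COIL_OHMS


for t in (0.0, 0.033, 0.1, 0.5):
    print(f"t = {t:5.3f} s  ->  {solenoid_current(t) * 1000:6.1f} mA")
```

With these assumed values the coil starts at the full supply-over-coil current and settles to half of that, since the resistor and coil resistance are equal. A real build would pick the resistor from the coil's measured resistance and its safe holding current.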

We’ve seen similar work before, which was applied to great effect. Sometimes doing a little hack work on your own can net you great hardware to work with. If you’ve found your own way to irrigate your garden as cheaply and effectively as possible, don’t hesitate to notify the tipsline!

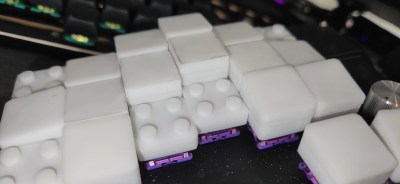

Now, we can’t call these LEGO key caps for obvious reasons, but also because they don’t actually work with standard LEGO. But that’s just fine and dandy, because they’re height-adjustable key caps that use the building block principle.

In the caption of the gallery, [paper5963] mentions foam. As far as I’ve studied the pictures, it seems to be all 3D-printed material. If they were foam, they would likely be porous and would attract and hold all kinds of nastiness. Right?

[paper5963] says that there are various parts that add on to these, not just flat tops. There are slopes and curves, too. They are also designing these for narrow pitch, and say they are planning to release the files. Exciting!

In the early days of AI, a common example program was the hexapawn game. This extremely simplified version of a chess program learned to play with your help. When the computer made a bad move, you’d punish it. However, people quickly realized they could punish good moves to ensure they always won against the computer. Large language models (LLMs) seem to know “everything,” but everything is whatever happens to be on the Internet, seahorse emojis and all. That got [Hayk Grigorian] thinking, so he built TimeCapsule LLM to have AI with only historical data.

Sure, you could tell a modern chatbot to pretend it was in, say, 1875 London and answer accordingly. However, you have to remember that chatbots are statistical in nature, so they could easily slip in modern knowledge. Since TimeCapsule only knows data from 1875 and earlier, it will be happy to tell you that travel to the moon is impossible, for example. If you ask a traditional LLM to roleplay, it will often hint at things you know to be true, but would not have been known by anyone of that particular time period.

Chatting with ChatGPT and telling it that it was a person living in Glasgow in 1200 limited its knowledge somewhat. Yet it was also able to hint about North America and the existence of the atom. Granted, the Norse apparently found North America around the year 1000, and Democritus wrote about indivisible matter in the fifth century BC. But that knowledge would not have been widespread among common people in the year 1200. Training on period texts would surely give a better representation of a historical person.

The model uses texts from 1800 to 1875 published in London. In total, there is about 90 GB of text files in the training corpus. Is this practical? There is academic interest in recreating period-accurate models to study history. Some also see it as a way to track the biases of the period and contrast them with biases found in data today. Of course, unlike the Internet, surviving documents from the 1800s are less likely to have trivialities in them, so it isn’t clear just how accurate a model like this would be for that sort of purpose.
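The selection rule described above (London publications from 1800 to 1875) amounts to a metadata filter on the corpus. A minimal sketch, assuming each document carries a publication year and place; the `Document` record and its field names are hypothetical and not taken from the TimeCapsule LLM project:

```python
from dataclasses import dataclass


@dataclass
class Document:
    # Hypothetical metadata record; the real corpus layout is not described here.
    title: str
    year: int
    place: str


def in_time_capsule(doc: Document, first_year: int = 1800, last_year: int = 1875) -> bool:
    """Keep only London publications inside the inclusive year range."""
    return doc.place.strip().lower() == "london" and first_year <= doc.year <= last_year


# Made-up catalogue entries, purely to exercise the filter.
catalogue = [
    Document("Example pamphlet A", 1851, "London"),
    Document("Example pamphlet B", 1899, "London"),
    Document("Example pamphlet C", 1840, "Edinburgh"),
]
kept = [doc.title for doc in catalogue if in_time_capsule(doc)]
print(kept)
```

The point of filtering before training, rather than prompting afterwards, is that anything excluded here can never leak into the model's answers.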

Instead of reading the news, LLMs can write it. Just remember that the statistical nature of LLMs makes them easy to manipulate during training, too.

Featured Art: Royal Courts of Justice in London about 1870, Public Domain

The BBC recently published an exposé revealing that some Chinese subscription sites charge for access to their network of hundreds of hidden cameras in hotel rooms. Of course, this is presumably without the consent of the hotel management, and it probably isn’t a problem specific to China. After all, cameras can now be very tiny, so it is extremely easy to rent a hotel room or a vacation rental and bug it. This is illegal: China has laws against spy cameras, and hotels are required to check for them, the BBC notes. However, there is a problem: at least one camera found didn’t show up on conventional camera detectors. So we wanted to ask you, Hackaday: How do you detect hidden cameras?

Commercial detectors typically use one of two techniques. It is easy to scan for RF signals, and if the camera is emitting WiFi or another frequency you expect cameras to use, that works. But it also misses plenty. A camera might be hardwired, for example. Or store data on an SD card for later. If you have a camera that transmits on a strange frequency, you won’t find it. Or you could hide the camera near something else that transmits. So if your scanner shows a lot of RF around a WiFi router, you won’t be able to figure out that it is actually the router and a small camera.

Sometimes you just know that you have the best ever idea for a hardware product, to the point that you’re willing to quit your job and make said product a reality. If only you can get the product and its brilliance to people, it would really brighten up their lives. This was the starry-eyed vision that [Simon Berens] started out with in January of 2025, when he set up a Kickstarter campaign for the World’s Brightest Lamp.

At 50,000 lumens this LED-based lamp would indeed bring the Sun into one’s home, and crowdfunding money poured in, leaving [Simon] scrambling to get the first five-hundred units manufactured. Since it was ‘just a lamp’, how hard could it possibly be? As it turns out, ‘design for manufacturing’ isn’t just a catchy phrase, but the harsh reality of where countless well-intended designs go to die.

The first scramble was to raise the lumen output from the prototype’s 39K to a slight overshoot at 60K, after which a Chinese manufacturer was handed the design files. This manufacturer had to create, among other things, the die casting molds for the heatsinks before production could even commence. Along with the horror show of massive US import taxes suddenly appearing in April, [Simon] noticed during his visit to the Chinese factory that due to miscommunication the heatsink was completely wrong.

Months of communication and repeated trips to the factory followed, and then the first units shipped out, only for users to start reporting issues with the control knobs ‘scraping’. This was due to tolerances not being marked in the CNC drawings. Fortunately the factory was able to rework this within a few days, only for users to then report issues with the internal cable length, which also had not been specified explicitly.

All of these issues are very common in manufacturing, and as [Simon] learned the hard way, it’s crucial to do as much planning and communication with the manufacturer and suppliers beforehand. It’s also crucial to specify every single part of the design, down to the last millimeter of length, thickness, diameter, tolerance and powder coating layers, along with colors, materials, etc. ad nauseam. It’s hard to add too many details to design files, but very easy to specify too little.

Ultimately a lot of things did go right for [Simon], making it a successful crowdfunding campaign, but there were many things that, done differently, could have saved him a lot of time, effort, lost sleep, and general stress.

Thanks to [Nevyn] for the tip.

As anyone who extrudes plastic noodles knows, the glass transition temperature of a material is a bit misleading; polymers gradually transition between a glass and a liquid across a range of temperatures, and calling any particular point in that range the glass transition temperature is a bit arbitrary. As a general rule, the shorter the glass transition range is, the weaker the material is in the glassy state, and vice versa. A surprising exception to this rule is provided by compleximers, a class of polymers recently discovered by researchers from Wageningen University, and the first organic polymers known to form strong ionic glasses (open-access article).

When a material transforms from a glass — a hard, non-ordered solid — to a liquid, it goes through various relaxation processes. Alpha relaxations are molecular rearrangements, and are the main relaxation process involved in melting. The progress of alpha relaxation can be described by the Kohlrausch-Williams-Watts equation, which can be exponential or non-exponential. The closer the formula for a given material is to being exponential, the more uniformly its molecules relax, which leads to a gradual glass transition and a strong glass. In this case, however, the ionic compleximers were highly non-exponential, but nevertheless had long transition ranges and formed strong glasses.
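For reference, the Kohlrausch-Williams-Watts relaxation function is usually written as a stretched exponential, exp(-(t/τ)^β), where β = 1 gives a plain exponential and smaller β means a broader, less uniform relaxation. A small sketch with made-up values of τ and β (none of these numbers come from the compleximer paper):

```python
import math


def kww_relaxation(t: float, tau: float, beta: float) -> float:
    """Stretched-exponential (KWW) relaxation: exp(-(t / tau) ** beta)."""
    return math.exp(-((t / tau) ** beta))


TAU = 1.0  # illustrative relaxation time, arbitrary units
for beta in (1.0, 0.5):  # 1.0 = purely exponential, 0.5 = strongly stretched
    values = [kww_relaxation(t, TAU, beta) for t in (0.1, 1.0, 10.0)]
    print(f"beta = {beta}: " + ", ".join(f"{v:.4f}" for v in values))
```

Both curves pass through 1/e at t = τ, but the stretched one drops faster at short times and lingers much longer at long times, which is what "non-exponential" relaxation looks like in practice.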

The compleximers themselves are based on acrylate and methacrylate backbones modified with ionic groups. To prevent water from infiltrating the structure and altering its properties, it was also modified with hydrophobic groups. The final glass was solvent-resistant and easy to process, with a glass transition range of more than 60 °C, but was still strong at room temperature. As the researchers demonstrated, it can be softened with a hot air gun and reshaped, after which it cools into a hard, non-malleable solid.

The authors note that these are the first known organic molecules to form strong glasses stabilized by ionic interactions, and it’s still not clear what uses there may be for such materials, though they hope that compleximers could be used to make more easily-repairable objects. The interesting glass-transition process of compleximers makes us wonder whether their material aging may be reversible.