Today, Nvidia released their next generation of small but powerful modules for embedded AI. It’s the Nvidia Jetson Nano, and it’s smaller, cheaper, and more maker-friendly than anything they’ve put out before.

The Jetson Nano follows the Jetson TX1, the TX2, and the Jetson AGX Xavier, all very capable platforms, but just out of reach in physical size, price, and cost of implementation for many product designers and nearly all hobbyist embedded enthusiasts.

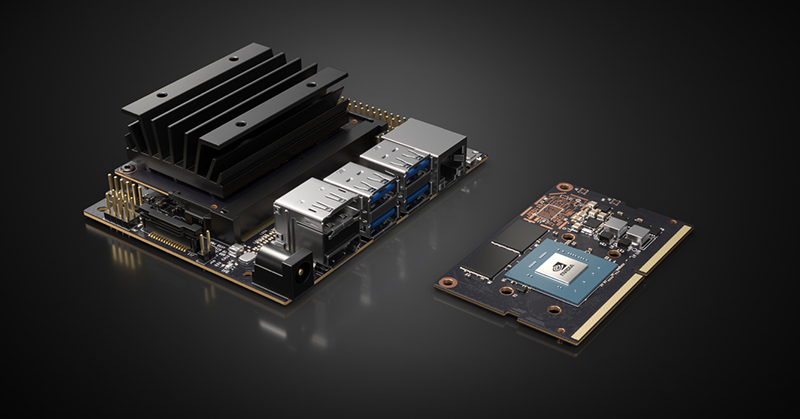

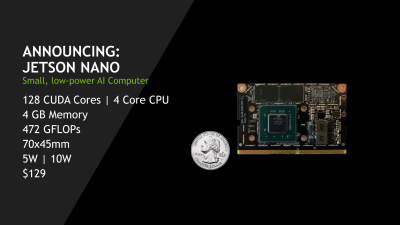

The Nvidia Jetson Nano Developer Kit clocks in at $99 USD, available right now, while the production-ready module will be available in June for $129. It’s the size of a stick of laptop RAM, and it only needs five Watts. Let’s take a closer look with a hands-on review of the hardware.

The Nvidia Jetson is something we’ve seen before, first in 2015 as the Jetson TX1 and again in 2017 as the Jetson TX2. Both of these modules were designed as a platform for ‘AI at the edge’, an idea that’s full of buzzwords, but does make sense from an embedded development standpoint. The idea behind this ‘edge’ is to build and train all your models on racks of GPU, then bring that model over to a small computer for the inference. This small computer doesn’t need to be connected to the Internet, and the power budget doesn’t need to be huge.

This ‘AI at the edge’ paradigm isn’t new (we had dedicated AI chips in the 1980s, even if the Internet of Things hadn’t been invented yet), and Google recently released Coral, a board loaded up with an Edge TPU, a custom ASIC, in the same form factor and power budget as a Raspberry Pi. Intel has a Neural Compute Stick that’s designed to plug into a single board computer. Again, this is proof, rendered in silicon, we are in the second AI renaissance. The Jetson Nano is the latest board that fits into this market, and its main selling points are its portability and its design as a module that can go into your product.

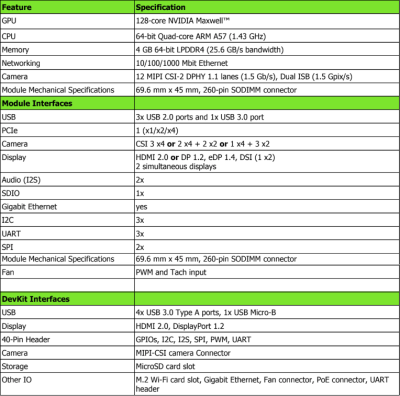

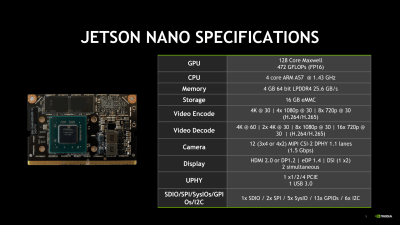

Here are the Nvidia spec sheets published along with this launch:

Specs and Hands-on

The Jetson Nano comes with a quad-core ARM A57 CPU running at 1.4GHz, and since this is Nvidia, you’ve also got a Maxwell GPU with 128 CUDA cores. Memory is 4 GB of LPDDR4, and there is support for Ethernet and MIPI CSI cameras.

There are two versions of the Jetson Nano, and two options for storage. The Developer’s Kit, including a carrier board with DisplayPort, HDMI, four USB ports, a CSI camera connector, Ethernet, an M.2 WiFi card slot, and a bunch of GPIOs, uses an SD card. The Developer’s kit costs $99. The Jetson Nano — not the developer’s kit — comes without a carrier board (and would require building your own carrier board) but includes 16 GB of eMMC Flash. Yes, they could have made this naming scheme easier.

These specs put the Jetson Nano in a class a bit above the Raspberry Pi 3, which is to be expected because it costs more. The review unit I was sent runs a standard Ubuntu desktop at a peppy pace, and with Internet this is a computer that performs as you would expect.

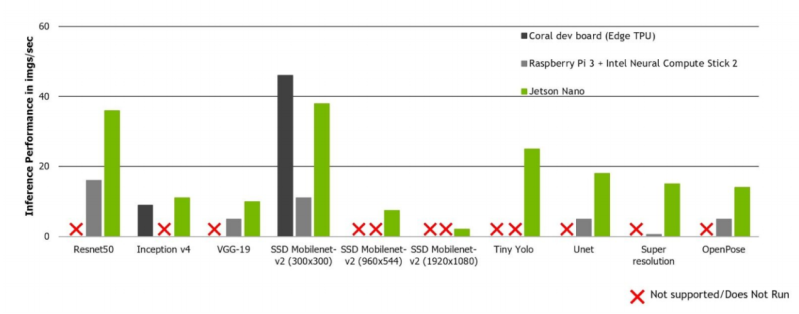

But the Jetson isn’t designed to run a GameCube emulator, even though it probably could (and someone should). The entire point of the Jetson Nano is to do inference. You train your model on a big computer, or use one of the many models available for free, and you run them on the Jetson. Here, the Jetson Nano is vastly more capable than the Google / Coral dev board recently launched, or a Pi with an Intel compute stick. You also have a CUDA GPU, and support for, ‘all the popular frameworks’ of deep learning software.
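To make that train-there, run-here split concrete, here is a minimal sketch in plain Python. It is not how any particular deep learning framework does it; the function names `export_model` and `predict`, the JSON format, and the toy weights are all assumptions made up for illustration. The "big computer" side serializes a set of learned parameters, and the "edge" side only loads them and runs the forward pass.

```python
import json
import math


def export_model(weights, bias):
    """Training side: serialize learned parameters (hypothetical JSON format)."""
    return json.dumps({"weights": weights, "bias": bias})


def predict(blob, features):
    """Edge side: load frozen parameters and run only the forward pass."""
    model = json.loads(blob)
    # Weighted sum of the inputs plus bias, squashed by a logistic function.
    z = sum(w * x for w, x in zip(model["weights"], features)) + model["bias"]
    return 1.0 / (1.0 + math.exp(-z))


# Toy parameters standing in for a model trained elsewhere.
blob = export_model([1.0, -1.0], 0.0)
print(predict(blob, [3.0, 0.0]))
```

Nothing on the edge side knows how the weights were produced, which is the whole point: the expensive part happens once, somewhere else.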

A Robot, For The Makers

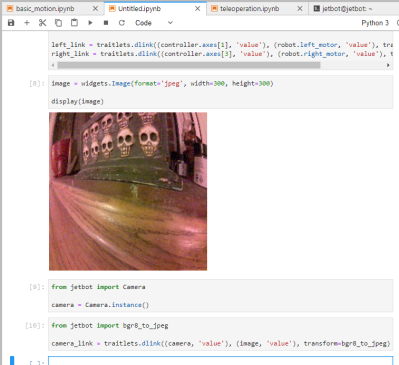

With the release of the Jetson Nano, Nvidia is focusing on the maker market, whatever that may be, and they’re releasing tutorials, examples, documentation, and the killer app of all single board computers, a 3D printed robot. The bill of materials for the Jetbot includes a 3D printed robot chassis, a Raspberry Pi camera, a WiFi card (an Intel Wireless-AC 8265), gear motors, a motor driver, and a 10000 mAh USB power bank. Add in a few screws, and you have a functioning Linux 3D printed robot.

Writing code for the Jetbot first requires connecting it to the local WiFi network, but after that it’s as simple as pointing Chrome to an IP address and opening up a Jupyter notebook. All the code in the examples is in Python, and in minutes, a kid can drive a car around with an Xbox controller, with live video coming back to the browser. This is what robotics education is.
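The gamepad-to-wheels step in a teleoperation demo like this boils down to mixing two joystick axes into two motor speeds. The sketch below is a generic differential-drive mixer, not the code from Nvidia’s notebooks; the `mix_arcade` name and its axis conventions are hypothetical.

```python
from typing import Tuple


def mix_arcade(throttle: float, steering: float) -> Tuple[float, float]:
    """Map joystick axes in [-1, 1] to (left, right) motor speeds in [-1, 1].

    Assumed convention: positive throttle drives forward, positive
    steering turns right by speeding up the left wheel.
    """
    left = throttle + steering
    right = throttle - steering
    # Scale both commands down together so neither exceeds full speed.
    scale = max(1.0, abs(left), abs(right))
    return left / scale, right / scale


print(mix_arcade(0.5, 0.25))  # gentle right turn while driving forward
```

The resulting pair would then be handed to whatever motor driver interface the robot exposes.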

The tutorials and examples for the Jetson Nano start with basic teleoperation, continue to collision avoidance by training a neural net, and finish with object following. This is a setup that gives you a capable machine learning platform and a two-wheeled robot chassis. Can you use this to solve a Rubik’s cube? Yes, if you build the software. You can build a self-driving car, and it gets kids interested in STEM.
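As a rough illustration of the collision avoidance step, the decision logic that sits on top of a trained classifier can be tiny. This sketch assumes a two-class network whose raw outputs are ordered (blocked, free); that ordering, the 0.5 threshold, and the `choose_action` name are assumptions, not details taken from Nvidia’s examples.

```python
import math


def softmax(logits):
    """Turn raw network outputs into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def choose_action(logits, threshold=0.5):
    """Pick a drive command from (blocked, free) classifier outputs."""
    prob_blocked = softmax(logits)[0]
    return "turn_left" if prob_blocked >= threshold else "forward"


print(choose_action([2.0, 0.0]))  # classifier leans towards "blocked"
print(choose_action([0.0, 2.0]))  # classifier leans towards "free"
```

Run in a loop against fresh camera frames, a rule like this is enough to keep a small robot from driving into walls; the hard part is the training data, not the control code.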

There’s a learned lesson at work here. Providing examples that can get users up and running fast was the missing element that doomed Intel’s Maker Movement efforts, and it is the key to the success of the Raspberry Pi and Arduino. If you don’t produce documentation and examples, the product will fail. Here, Nvidia has done a remarkable job bringing a GPU on a small Linux module to educators and students.

The Future of the Jetson Platform

This is not Nvidia’s first Jetson product. In 2015, Nvidia released the Jetson TX1, a credit-card sized brick of a module that was intended to be the future of AI ‘at the edge’. Despite the buzzwords, this is a viable use-case for very fast embedded processors; you can train your model on all the GPUs Amazon owns, then put your model on a small embedded device. It’s AI without the cloud or a connection to the Internet. The Jetson TX2 followed in 2017, again with a credit card-sized brick of a module attached to a MiniITX motherboard. This was the module you wanted for selfie-snapping drones, or machine learning for a self-driving car.

The Nvidia Jetson Nano is a break from previous form factors. The Nano, like the Raspberry Pi Compute Module, fits entirely on a standard laptop SO-DIMM connector. This is a module designed for the same application as the Pi Compute Module; you need to build a carrier board that handles all your I/O, and this tiny little module will handle all your data. It’s a viable engineering strategy, even if it’s not for people who want to stuff modern electronics in an old Game Boy shell and run emulators. People do real work with electronics, you know.

While the Jetson Nano is the first in its form factor, there are some hints that Nvidia is going to be developing this platform over the long term. One of the biggest engineering constraints on a project at this scale is the power budget, and the Jetson Nano ships with a carrier board that includes a micro USB power input (there is an additional barrel jack adapter, rated at 5V, 4A). This micro USB power input is limited to 5V, 2A, or 10 Watts. There’s only so much computing you can do per Watt, and if you want more, you have two options: use a smaller process for your silicon or use more power. Nvidia has come up with an ingenious way to save some engineering time on the next version of their carrier board. They’re stacking footprints for USB connectors. The carrier board also supports a USB-C connector:

Sure, it’s just a tiny detail that would go unnoticed by most, but the carrier board is already designed for a USB-C connector and the increased power that can deliver. Nvidia is clearly planning for a future with the Jetson, and with a significantly more convenient form factor we might just see it.
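The power numbers above are simple arithmetic, sketched here in Python. The 5 W module figure is the advertised one; treating everything left over as headroom for peripherals is a simplifying assumption, and the names are made up for this example.

```python
def watts(volts: float, amps: float) -> float:
    """Electrical power in watts from voltage and current."""
    return volts * amps


MODULE_W = 5.0  # advertised draw of the module, assumed constant here

SUPPLIES = {
    "micro USB": watts(5.0, 2.0),    # 5 V at 2 A
    "barrel jack": watts(5.0, 4.0),  # 5 V at 4 A
}


def headroom(supply_w: float, module_w: float = MODULE_W) -> float:
    """Power left over for the carrier board and peripherals."""
    return supply_w - module_w


for name, supply_w in SUPPLIES.items():
    print(f"{name}: {supply_w:.0f} W in, {headroom(supply_w):.0f} W left over")
```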

Love to see the inclusion of a dual USB-C and Micro-B footprint! Strange that the copper seems to be unplated…

It’s probably protected with organic solderability preservative: https://en.wikipedia.org/wiki/Organic_solderability_preservative

Looks like an ENIG finish post selective solder. Sometimes the carriers get dirty with cooked flux and it gets on the boards. This one doesn’t look cleaned post solder.

And of course, no analog I/O – the usual product of purely digital designers who were told by their bosses to make something for “makers.”

This is a module. You are supposed to build your own carrier board. The carrier board that comes with the Nano is just for prototyping.

Create an external board with a 12-bit 1.45Msps ADC like the MSPM0L1105 (Or multiplex two dozen of them) and access them over SPI ?

https://hackaday.com/2023/03/21/new-part-day-ti-jumps-in-to-the-cheap-mcu-market/

So it’s basically a shrunk down Shield TV that’s also a bit cheaper but with half the compute power? While useful for some projects, I would like to see a higher end module (that’s still reasonably priced) with more compute power.

Compared to the TX1, this looks like the GPU is cut in half and probably underclocked. It’s still a quad core ARM though it’s underclocked too.

I guess all the work with the Switch and Shield TV helped push the cost down on this chip. The faster TX2 seems like it never really caught on.

I’ll wait and see who wins out in this edge computing battle.

JENSEN NEED MORE LEATHER JACKET!

Or you could buy an Arduino Mega for about a third of the price at $38.50 USD and get a whopping 256 KB of flash memory (of which 8 KB is used by the bootloader), 4 KB of EEPROM, and a 16 MHz clock speed.

Not sure that the Arduino Mega is only a third the specifications here though.

We are going to get faster clock speed Arduinos some day, right?

I wouldn’t count on it. The market for faster chips seems fully occupied by an army of ARM alternatives and it’s not unlikely the design of the AVR chips needs significant rejigging before it’ll work at higher clock speeds.

There are quite a few stm based arduinos, those run at 80mhz

and also esp32 arduinos at 240Mhz

Esp32….

Esp8266….

Arduino due….

Stm32….

M0….

Teensy….

And so on. The avr architecture is a single instruction/cycle with no pipeline, so not too much opportunity to speed it up without breaking compatibility with existing code that relies on precise timing. Of course if you want that, china made an avr clone that can go at higher clocks, has a pipeline, and is binary compatible.

The Arduino Mega is an 8-bit CPU. One does not use an 8-bit CPU when they care about speed. But for $2.50 you can buy a board that is 10X faster and still runs on the Arduino IDE. If you need a Mega-like product that runs at 72 MHz and is 32 bits, search eBay for “STM32F103C8T6 minimum system”. This is a 32-bit ARM microcontroller that works just fine in the Arduino IDE. Most new Arduinos are ARM-based now.

Faster Arduinos are here. The official Arduino web site has one. It is based on a 32-bit CPU running at 84 MHz.

https://store.arduino.cc/usa/due

But the above “STM32F103C8T6 minimum system” works well at 1/10th the price if you are willing to learn how to install the bootloader

While the AVR is always quoted as “only 8 bit”, it has a whole range of instructions that operate on 16 bits. Which makes it quite a bit faster at more complex tasks than you would initially guess.

It’s still slow though, even with the fastest 20 MHz versions.

However, its core redeeming quality that people tend to overlook is that they are pretty indestructible. The IO pins can take much more abuse over their “absolute maximum rating” than most ARM controllers. Which makes them very nice for people that just tinker around with a bit of electronics.

” It’s the size of a stick of laptop RAM, and it only needs five Watts.”

Seems bigger than that.

“Again, this is proof, rendered in silicon, we are in the second AI renaissance. ”

The third will be the singularity.

“You also have a CUDA GPU, and support for, ‘all the popular frameworks’ of deep learning software.”

Thing is how much of all this “deep learning” is cross-platform or tied to a particular one?

Why would you want anything other than Linux?

Most of these cross platform frameworks work with either a GPU (Nvidia) or any generic CPU.

“deep learning” is actually something that is done in the lab. The network is trained using a “ton” of data then the trained network is moved to a device like this. It need not be cross-platform because you don’t deploy this onto someone else’s computer. You put it on the Jetson then put the Jetson inside your product then sell the product.

“cross-platform” is only needed for software that gets deployed to random end-user computers that might be Macs or Windows or Linux or even a phone. THIS software is always sold with hardware so the developer controls the platform.

Price on Nvidia’s website says 99 USD.

good write up brian, we got BLINKA running on it, so that means 130+ libraries work with it … post is here: https://blog.adafruit.com/2019/03/18/adafruit-blinka-support-for-the-nvidia-jetson-series-nvidia-gtc19-nvidiaembedded/

So it could be used running my coded simulation also?

Sorta reminds me of a Pentium 2 style mounting. I wonder if that hunk of heat sink and board will stress the lock in socket designed for flat wafers covered over. Shock and awe for G forces, and for disturbing the eventual baked condition of the heat sink dope in any app that moves like robots.

Higher computing power at a cheaper cost and smaller size.

The TK1 was available in 2014.

And how quick everyone is to forget the awesome little board. I’ve just installed Debian on mine, and loaded up asterisk to have some fun with.

Two things to note:

To quote hackster: “NVIDIA recommend that the Jetson Nano Dev Kit should be powered using a 5V/2A to 3.5A micro-USB power supply, with a further recommendation to use a 5V/4A power supply if you “…are running benchmarks or heavy workload.””

So you’re supposed to use the barrel jack, and that’s also the reason why you need that big heat sink.

The second thing, is that this doesn’t come with WiFi or BT built in.

Cool board, and good on nVidia to finally enter the SBC market. And as an aside, it’ll be interesting to see where the newly announced http://beagleboard.org/ai will slot in price wise in comparison to this; with eMMC, WiFi, lower power envelope, real time compute and analog IO … but with far less grunt of course.

Caught my eye as well… “recommendation to use a 5V/4A power supply” – how does it correspond with the advertised 5W power draw?

5w idle, 20w doing anything else. Typical NV stuff.

Doesn’t seem to be the case, I replied to milord above, but essentially, 20W input is for powering external peripherals. 5W of which is for the Jetson Nano.

After talking with John from NVIDIA that was on the Hack Chat this week, he said that the Jetson Nano really is only 5W, and the 20W recommendation is for powering external peripherals such as the camera and USB3 devices. He also mentioned there is a command line tool for tuning power consumption if that suits you.

Interesting that the Odroid XU4 uses a barrel jack to connect to a 5V/4A PSU as well.

Interesting indeed – so interesting, if you have an XU4, that PSU works great with the Nano; an easy way to save $$$ not having to buy another adapter or being forced to use mUSB 5W mode.

It defaults to 10W mode; I think many will be reporting the board crashes because I don’t believe it will auto enter into 5W mode…(?). Could be wrong on that though…

As soon as they announced a barrel jack option & a 5V/4A PSU for optimum performance, my mind went straight to the XU4 as well.

Dat heatsink, though.

Pretty darn big heatsink for 5 watts.

5v@4a = 20w

Really good to see this gadget released today!

Apart from the specs, it should be mentioned that previous members of the Jetson family are solid, functional devices that actually work as they should do …… and most importantly …… You can deploy your own custom models on it. Try deploying custom models on the Intel NCS2 setup …… they don’t work properly. Whether Intel will fix this, who knows? Have a look at Google TPU, no training of custom models available and, strangely, no community forum.

The great thing with Nvidia is that the community support is very active and quite often you can talk directly with the top developers. I’m going to use the Nano for my garden slug detector:

https://hackaday.io/project/164137-fugslucks-2019

….. Since, generally, slugs don’t move too quickly. For moving machines, the Tx2 or Xavier.

Hopefully there will be an AI category in the Hackaday 2019 prize?

The Jetson boards have great Hardware – but if NVIDIA did continue with their software dependency…

For the Tx1 you HAD TO USE one Ubuntu version.

This would still be fine if it were RHOS compatible. If it’s not, I hope you know how to compile working custom kernels, ’cause I don’t…

I would love to see an open project where you suck IP camera streams, annotate motion and object detection, and then republish the IP cameras for your home NVR/NAS that has all the info you need that most systems cannot do. The republished IP camera stream would have re-rendered video and have the data channel with the extra data for standard NVR/NAS systems.

Blue Iris or Blue Cherry?

They each run their own NVR, but at least with blue Iris I think that it’s compatible with some of the most popular NVRs… But I believe that the functionality is in the other direction- taking video from an NVR and recoding it to push to the web or mobile devices.

Any guesses on what the empty component group up in the top right of the module was aimed to be?

Looks dense, and pads for a shielding can. Maybe wireless?

https://developer.nvidia.com/sites/default/files/akamai/embedded/images/jetsonNano/JetsonNano_Module_Beauty_Standing_trimmed.jpg

Yeah, look at J1 and J2. Seem to be antenna tracks with footprints for SMA type connectors

Well J1 and J2 on that right-hand edge do look like UFL-type landing pads so evidently some form of RF port

Maybe that’s what the $129 module comes with. ie Wifi and Bluetooth

But can it run Crysis

4GB…I want 12+++ at least. 24GB preferably for my 4kDCGAN. If even that is enough.

So can I play Tetris on it?

I realize you’re kidding, but you can actually compile MAME for it and play any arcade game you want.

Any affordable CSI-2 camera for it? Can I use raspberry pi camera with it? e-consystem cameras are expensive when bought in low quantity :(

My dream camera is a camera with a good resolution/latency (even if the FPS is not that high, don’t need more than 1/latency FPS) and a big plus if it can be synchronized to an external source or at least provide the capture timestamp, and under 100$, but I didn’t find anything better than the raspberry pi with raspberry camera yet

Nvidia actually sells a camera for about $30 that is designed to work with Jetson and it does what you want. It is basically a “Pi Camera” These (and the Pi camera) are a standard type. There is a company called “arducam” that sells on Amazon and they have a huge selection of these as well as better quality lenses with lower geometric distortion.

is it pin compatible with raspberry pi compute module? /raspberry pi pin headers,

it looks similar but probably isnt

Looks like a perfect motherboard for 4k 15″ laptop.

“Nvidia is clearly planning for a future with the Jetson,”

So, there is a microphone hidden on it?

(Disabled, of course)

B^)

The Jetson Nano was the winner of an edge device benchmark report we recently published. We compared it to Coral Dev Board and Intel Neural Sticks, among others, via an image classification task.

You might find the performance outcomes interesting. You can see them here if you like:

https://tryolabs.com/blog/machine-learning-on-edge-devices-benchmark-report/