Many of us have been asking for some time now, “where are our robot servants?” We were promised a dream life of leisure and luxury, but we’re still waiting. Modern life is a very wasteful one, with items delivered to our doors at the click of a mouse, but disposing of the packaging is still a manual affair. Wouldn’t it be great to be able to summon a robot to take the rubbish to the recycling, and ideally have it fetch a beer at the same time? [James Bruton] shares this dream, and with his extensive robotics skillset, came up with the perfect solution: behold the Binbot 9000. (Video, embedded below the break)

Nvidia Jetson Nano: 6 Articles

Open Source Kitchen Helps You Watch What You Eat

Every appliance business wants to be the one that invents the patented, license-able, and profitable standard that all the other companies have to use. Open Source Kitchen wants to beat them to it.

Every beginning standard needs a test case, and OSK’s is a simple one. A bowl that tracks what you eat. While a simple concept, the way in which the data is shared, tracked, logged, and communicated is the real goal.

The current demo uses an Nvidia Jetson Nano as its processing center. This $100 US board packs a bit of a punch in its weight class. It processes the video from a camera held above the bowl of fruit, which hangs from a scale in a squirrel-shaped hanger, to determine the calories in and calories out.
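As a rough illustration of how a camera and a scale could combine into a calorie figure, here is a minimal sketch. It is not OSK's code: the function name, the sign convention, and the calorie densities are all assumptions for illustration, and it presumes the vision model has already named the food in the bowl.

```python
# Approximate energy densities in kcal per gram. Illustrative values only,
# not taken from the project.
KCAL_PER_GRAM = {"apple": 0.52, "banana": 0.89}


def calories_for_change(food: str, grams_before: float, grams_after: float) -> float:
    """Convert a change in bowl weight into calories for the recognized food.

    A negative result means food left the bowl; a positive one means food
    was added. This sign convention is an assumption of the sketch.
    """
    if food not in KCAL_PER_GRAM:
        raise KeyError(f"no calorie density known for {food!r}")
    return (grams_after - grams_before) * KCAL_PER_GRAM[food]
```

In this sketch the camera's only job is to supply the `food` label, while the scale supplies the two weight readings.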

It’s an interesting idea. One wonders how the IoT boom might have played out if there had been a widespread standard ready to go before people started walling their gardens.

STEP Up Your Jetson Nano Game With These Printable Accessories

Found yourself with a shiny new NVIDIA Jetson Nano but tired of having it slide around your desk whenever cables get yanked? You need a stand! If only there was a convenient repository of options that anyone could print out to attach this hefty single-board computer to nearly anything. But wait, there is! [Madeline Gannon]’s accurately named jetson-nano-accessories repository supports a wider range of mounting options than you might expect, with modular interconnect-ability to boot!

A device like the Jetson Nano is a pretty incredible little System On Module (SOM), more so when you consider that it can be powered by a boring USB battery. Mounted to NVIDIA’s default carrier board, the entire assembly is quite a bit bigger than something like a Raspberry Pi. With a huge amount of computing power and an obvious proclivity for real-time computer vision, the Nano is a device that wants to go out into the world! Enter these accessories.

At their core is an easily printable slot-and-tab modular interlock system which facilitates a wide range of attachments. Some bolt the carrier board to a backplate (like the gardening spike). Others incorporate clips to hold everything together and hang onto a battery and bicycle. And yes, there are boring mounts for desks, tripods, and more. Have we mentioned we love good documentation? Click into any of the mount types to find more detailed descriptions, assembly directions, and even dimensioned drawings. This is a seriously professional collection of useful kit.

Home Automation At A Glance Using AI Glasses

There was a time when you had to get up from the couch to change the channel on your TV. But then came the remote control, which saved us from having to move our legs. Later still we got electronic assistants from the likes of Amazon and Google which allowed us to command our home electronics with nothing more than our voice, so now we don’t even have to pick up the remote. Ushering in the next era of consumer gelification, [Nick Bild] has created ShAIdes: a pair of AI-enabled glasses that allow you to control devices by looking at them.

Of course on a more serious note, vision-based home automation could be a hugely beneficial assistive technology for those with limited mobility. By simply looking at the device you want to control and waving in its direction, the system knows which appliance to activate. In the video after the break, you can see [Nick] control lamps and his speakers with such ease that it almost looks like magic; a defining trait of any sufficiently advanced technology.

So how does it work? A Raspberry Pi camera module mounted to a pair of sunglasses captures video which is sent down to an NVIDIA Jetson Nano. Here, two separate image classification Convolutional Neural Network (CNN) models are used: one identifies controllable objects in the background, the other identifies hand gestures in the foreground. When there’s a match for both, the system can fire off the appropriate signal to turn the device on or off. Between the Nano, the camera, and the battery pack to make it all mobile, [Nick] says the hardware cost about $150 to put together.
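The "match for both" step can be sketched in a few lines. This is only an illustration of the idea, not [Nick]’s code: the `Prediction` type, the `"wave"` and `"none"` labels, and the 0.8 confidence threshold are all hypothetical, and the two CNNs are assumed to have already produced a label and confidence for the current frame.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class Prediction:
    label: str         # class name reported by a model (hypothetical labels)
    confidence: float  # score in the range 0.0 to 1.0


def decide_action(obj: Prediction, gesture: Prediction,
                  threshold: float = 0.8) -> Optional[str]:
    """Return the device to toggle, or None if the two models do not both match."""
    if obj.label == "none" or gesture.label != "wave":
        return None
    if obj.confidence < threshold or gesture.confidence < threshold:
        return None
    return obj.label


def toggle(states: Dict[str, bool], device: str) -> bool:
    """Flip the stored on/off state for a device and return the new state."""
    states[device] = not states.get(device, False)
    return states[device]
```

Requiring both models to agree above a threshold is one way to keep a stray glance, or a wave at nothing in particular, from switching anything.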

But really, the hardware is only one small piece of the puzzle in a project like this, which is why we’re happy to see [Nick] go into such detail about how the software functions and, crucially, how he trained the system. The gesture recognition model alone was trained on nearly 20K images so it could reliably detect an arm extended into the frame.

If controlling your home with a glance and wave isn’t quite mystical enough, you could always add an infrared wand to the mix for that authentic Harry Potter experience.

Nvidia Jetson Robots Get A Head Start With Isaac Software Tools

We live in an exciting time of machine intelligence. Over the past few months, several products have been launched offering neural network processors at a price within hobbyist reach. But as exciting as the hardware might be, it still needs software to be useful. Nvidia was not content to rest on their impressive Jetson hardware and has created a software framework to accelerate building robots around it. Anyone willing to create an Nvidia developer account may now play with the Isaac Robot Engine framework.

Isaac initially launched about a year ago as part of a bundle with Jetson Xavier hardware. But the $1,299 developer kit price tag pushed it out of reach for many of us. Now we can buy a Jetson Nano for about a hundred bucks. For those familiar with Robot Operating System (ROS), Isaac will look very familiar. They both aim to make robotic software as easy as connecting common modules together. Many of these modules, called GEMS in Isaac, were tailored to the strengths of Nvidia Jetson hardware. In addition to those modules and ways for them to work together, Isaac also includes a simulator for testing robot code in a virtual world, similar to Gazebo for ROS.

While Isaac can run on any robot with an Nvidia Jetson brain, there are two reference robot designs. Carter is the more expensive and powerful commercially built machine, rolling on Segway motors and equipped with LIDAR environmental sensors and a Jetson Xavier. More interesting to us is the Kaya (pictured), a 3D-printed DIY robot rolling on Dynamixel serial bus servos. Kaya senses the environment with an Intel RealSense D435 depth camera and has a Jetson Nano for a brain. Taken together, the hardware and software offerings are a capable and functional package for exploring intelligent autonomous robots.

It is somewhat disappointing that Nvidia decided to create their own proprietary software framework, reinventing many wheels, instead of contributing to ROS. While there are some very appealing features like WebSight (a browser-based inspect and debug tool), at first glance Isaac doesn’t seem fundamentally different from ROS. The open source community has already started creating ROS nodes for Jetson hardware, but people who work exclusively in the Nvidia ecosystem or face a time-to-market deadline would appreciate having the option of a pre-packaged solution like Isaac.

Hands-On: New Nvidia Jetson Nano Is More Power In A Smaller Form Factor

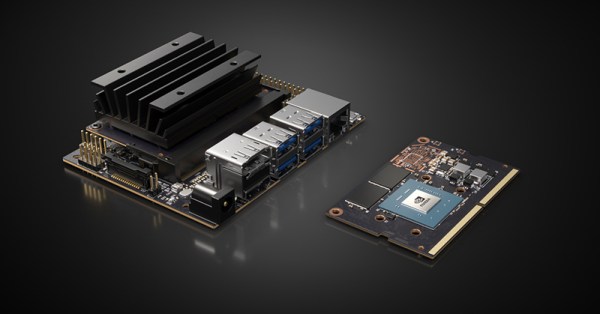

Today, Nvidia released their next generation of small but powerful modules for embedded AI. It’s the Nvidia Jetson Nano, and it’s smaller, cheaper, and more maker-friendly than anything they’ve put out before.

The Jetson Nano follows the Jetson TX1, the TX2, and the Jetson AGX Xavier, all very capable platforms, but just out of reach in physical size, price, and cost of implementation for many product designers and nearly all hobbyist embedded enthusiasts.

The Nvidia Jetson Nano Developer Kit clocks in at $99 USD and is available right now, while the production-ready module will be available in June for $129. It’s the size of a stick of laptop RAM, and it only needs five watts. Let’s take a closer look with a hands-on review of the hardware.
