The “Marauder’s Map” is a magical artifact from the Harry Potter franchise. That sort of magic isn’t real, but as Arthur C. Clarke famously pointed out, it doesn’t need to be: we have technology, and we can make our own magic now. Or, rather, [Dave] of the YouTube channel Dave’s Armoury can make it.

[Dave]’s hardware store might be in a rough neighborhood, since it has 50 cameras’ worth of CCTV coverage. In this case, the stockman’s loss is the hacker’s gain, as [Dave] has talked his way into accessing all of those various camera feeds and is using machine vision to track every single human in the store.

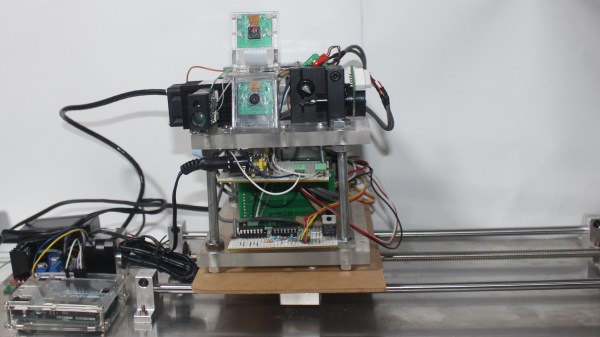

Of course, locating individuals in a video feed is the easy part; to locate them in space from that feed, one first needs an accurate map. To get one, [Dave] 3D scans the entire store with a rover. The scan is in full 3D, and it’s no small amount of data. On the rover, a Jetson AGX is required to handle it; on the bench, a beefy HP Z8 Fury workstation crunches the point cloud into a map. Luckily it came with 500 GB of RAM, since just opening the mesh file generated from that point cloud needs 126 GB. The mesh is then processed into a simple 2D floor plan. While the workflow is impressive, we can’t help but wonder if there was an easier way. (Maybe a tape measure?)
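For a feel of what “processed into a simple 2D floor plan” can mean, here is a minimal sketch, not [Dave]’s actual workflow: it assumes the scan is available as an N×3 array of points in metres with Z pointing up, keeps only points in a height band above the floor, and bins them into a top-down occupancy grid. The function name and all parameters (`cell_size`, `z_min`, `z_max`, `min_hits`) are made up for illustration.

```python
import numpy as np


def floor_plan_from_points(points, cell_size=0.05, z_min=0.2, z_max=2.0, min_hits=3):
    """Flatten an (N, 3) point cloud into a 2D boolean occupancy grid.

    Assumptions (not from the original project): units are metres, Z is up,
    and anything between z_min and z_max counts as a wall or shelf.
    Returns (grid, origin), where grid[row, col] is True for occupied cells
    and origin is the (x, y) position of cell (0, 0).
    """
    points = np.asarray(points, dtype=float)

    # Drop the floor and the ceiling: keep only the chosen height band.
    band = points[(points[:, 2] >= z_min) & (points[:, 2] <= z_max)]
    if band.size == 0:
        return np.zeros((0, 0), dtype=bool), np.zeros(2)

    # Shift so the smallest x/y lands in cell (0, 0), then quantise.
    origin = band[:, :2].min(axis=0)
    idx = np.floor((band[:, :2] - origin) / cell_size).astype(int)

    # Count how many points fall in each cell (rows follow y, columns follow x).
    cols, rows = idx.max(axis=0) + 1
    counts = np.zeros((rows, cols), dtype=int)
    np.add.at(counts, (idx[:, 1], idx[:, 0]), 1)

    # Require a few hits per cell so stray points don't become walls.
    return counts >= min_hits, origin
```

A real scan of this size would not fit comfortably in a single NumPy array, so treat this as the idea in miniature rather than something to point at a 126 GB mesh.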

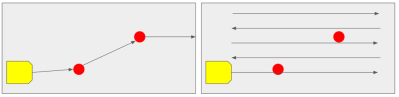

Once an accurate map has been generated, it turns out NVIDIA already has a turnkey solution for mapping video feeds to a 2D spatial map. When processing this much data (remember, there are 50 camera feeds in the store), it’s not ideal to be passing the image data from RAM to GPU and back again, but NVIDIA’s DeepStream pipeline will do object detection and tracking, including between different video streams, all on the GPU. There’s also pose estimation right in there, which locates where a person is standing more accurately than just “inside this red box”. With 50 cameras, it’s all a bit much for one card, but luckily [Dave]’s workstation has two GPUs.
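The geometry behind putting a camera detection on a floor plan can be sketched without any NVIDIA tooling. The following is not the DeepStream API and not necessarily how [Dave]’s setup is calibrated; it is a plain NumPy illustration that assumes a flat floor and at least four hand-picked reference points per camera whose pixel and floor-plan coordinates are both known. From those it fits a planar homography and maps the bottom-centre of a detection box, roughly where the feet are, onto the map. Both function names are hypothetical.

```python
import numpy as np


def fit_homography(pixels, floor):
    """Fit a 3x3 homography mapping image pixels to floor-plan coordinates.

    Uses the direct linear transform on >= 4 point pairs. Assumes the
    reference points all lie on the (flat) floor and are not collinear.
    """
    rows = []
    for (u, v), (x, y) in zip(np.asarray(pixels, float), np.asarray(floor, float)):
        rows.append([-u, -v, -1, 0, 0, 0, x * u, x * v, x])
        rows.append([0, 0, 0, -u, -v, -1, y * u, y * v, y])

    # The homography is the null vector of this system: the right singular
    # vector belonging to the smallest singular value.
    _, _, vt = np.linalg.svd(np.asarray(rows))
    h = vt[-1].reshape(3, 3)
    return h / h[2, 2]


def box_to_floor(h, box):
    """Map the bottom-centre of a (left, top, right, bottom) box to the floor plan."""
    left, _top, right, bottom = box
    foot = np.array([(left + right) / 2.0, bottom, 1.0])
    x, y, w = h @ foot
    return x / w, y / w
```

With one homography per camera, every detection ends up in the same map coordinates, which is what makes tracking a person from one feed to the next tractable. Pose estimation improves on the bottom-of-the-box guess by locating the feet directly.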

Once the coordinates are spat out of the neural networks, it’s relatively simple to put footprints on the map in true Harry Potter fashion. It really is magic, in the Clarkian sense, what you can do if you throw enough computing power at it.

Unfortunately for show-accuracy (or fortunately, if you prefer to avoid gross privacy violations), it doesn’t track every individual by name, but it does demonstrate the possibility with [Dave] and his robot. If you want a map of something… else… maybe check out this backyard project.


To give the game a bit more of a physical feel, [piles.of.spam] made an actual crossbow for the player to wield. Its handle was cut from a scrap piece of wood, using a band saw for the general shape and a CNC machine for the delicate cut-outs that hold a laser pointer, an ESP32 and a microswitch-based trigger. The laser shines onto the game screen, while the ESP32 sends out a data packet over WiFi when the trigger is pulled.
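The write-up only says the ESP32 “sends out a data packet over WiFi”, so the details here are guesses: this sketch assumes a single UDP datagram per trigger pull, with a made-up port number and payload. It is written in desktop Python to show both ends of the exchange; the real crossbow firmware would be doing the sending from the ESP32.

```python
import socket

TRIGGER_PORT = 5005        # hypothetical port, not from the original project
TRIGGER_PAYLOAD = b"FIRE"  # hypothetical payload


def open_listener(host="0.0.0.0", port=TRIGGER_PORT, timeout=1.0):
    """Game side: open a UDP socket that waits for trigger packets."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.bind((host, port))
    sock.settimeout(timeout)
    return sock


def send_trigger(host, port=TRIGGER_PORT):
    """Crossbow side: fire off one datagram when the microswitch closes."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(TRIGGER_PAYLOAD, (host, port))


def wait_for_trigger(sock):
    """Return True if a valid trigger packet arrives before the timeout."""
    try:
        data, _addr = sock.recvfrom(64)
    except socket.timeout:
        return False
    return data == TRIGGER_PAYLOAD
```

UDP suits this kind of input because a trigger pull is a tiny, one-off event where low latency matters more than guaranteed delivery. The packet only says that a shot was fired; working out where the laser dot landed on the screen is a separate problem.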

The computing hardware consists of a pair of Jetson Nano boards, which sport quad-core ARM A57 CPUs as well as powerful graphics hardware to generate the game’s visuals. The end result is impressive, especially given the fact that all of this was designed and built in just three weeks. It was apparently a great hit with its intended audience, as visitors queued to try their hand at shooting the hungry zombies.