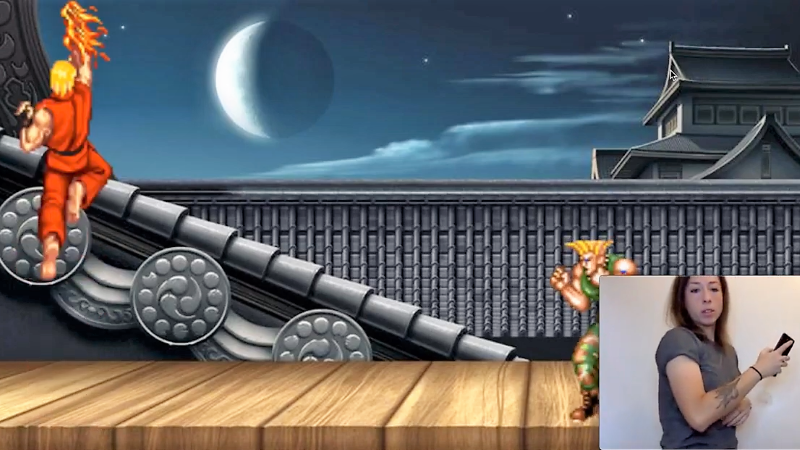

A question: if you’re controlling the classic video game Street Fighter with gestures, aren’t you just, you know, street fighting?

That’s a question [Charlie Gerard] is going to have to tackle should her AI gesture-recognition controller experiments take off. [Charlie] put together the game controller to learn more about the dark arts of machine learning in a fun and engaging way.

The controller consists of a battery-powered Arduino MKR1000 with WiFi and an MPU6050 accelerometer. Held in the hand, the controller streams accelerometer data to an external PC, capturing the characteristics of the motion. [Charlie] trained three different moves – a punch, an uppercut, and the dreaded Hadouken – and captured hundreds of examples of each. The raw data was massaged, converted to Tensors, and used to train a model for the three moves. Initial tests seem to work well. [Charlie] also made an online version that captures motion from your smartphone. The demo is explained in the video below; sadly, we couldn’t get more than three Hadoukens in before crashing it.
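To make the "massaged, converted to Tensors" step a little more concrete, here is a minimal Python sketch of how labeled accelerometer recordings could be turned into fixed-length feature vectors and one-hot labels before training. This is not [Charlie]'s actual code: the window length, function names, and data layout are all assumptions made for illustration.

```python
from typing import Dict, List, Sequence, Tuple

# The three moves named in the article.
GESTURES = ["punch", "uppercut", "hadouken"]

# Hypothetical window length: readings kept per recorded gesture.
SAMPLES_PER_GESTURE = 4

# One accelerometer reading: (x, y, z).
Reading = Tuple[float, float, float]


def to_feature_vector(
    recording: Sequence[Reading], length: int = SAMPLES_PER_GESTURE
) -> List[float]:
    """Truncate or zero-pad a recording to `length` readings, then flatten it."""
    trimmed = list(recording[:length])
    trimmed += [(0.0, 0.0, 0.0)] * (length - len(trimmed))
    return [axis for reading in trimmed for axis in reading]


def one_hot(label: str) -> List[int]:
    """Encode a gesture name as a one-hot vector over GESTURES."""
    index = GESTURES.index(label)
    return [1 if i == index else 0 for i in range(len(GESTURES))]


def build_dataset(
    recordings: Dict[str, List[Sequence[Reading]]]
) -> Tuple[List[List[float]], List[List[int]]]:
    """Pair every recording's feature vector with its one-hot label."""
    features: List[List[float]] = []
    labels: List[List[int]] = []
    for label, examples in recordings.items():
        for recording in examples:
            features.append(to_feature_vector(recording))
            labels.append(one_hot(label))
    return features, labels
```

The resulting lists of equal-length rows are the kind of rectangular data that can be handed to a tensor library and fed to a small three-class classifier.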

With most machine learning projects seeming to concentrate on telling cats from dogs, this is a refreshing change. We’re seeing lots of offbeat machine learning projects these days, from cryptocurrency wallet attacks to a semi-creepy workout-monitoring gym camera.

Thanks to [baldpower] for the tip!

I like the idea, sort of like BeatSaber is getting people to do ‘stealth’ exercise in VR.

What’s the deal with that weird flash version of Street Fighter though? It looks… off.

Very nice !

[ following is to the author of the project, at least mainly ;) ]

Have you already tried using TensorFlow on the hardware side ? ( that is, training your model while it performs with the firmata firmware then embedding it on capable uC ? ex: replacing the phone by a TensorFlow-capable one that’d then send “simple”/translated gamepad inputs to the actual game controller ? ) -> could be quite neat also ;)

I’ll have a deep look at your article on dev.to to better grasp how you made the magic happen, being quite curious about how TensorFlow does ML. ( For anyone looking for such a tool, I had some fun with a quite simple lib ( in usage ) named “brainjs” ( kudos to the author ;) ) )

Also, did you try using ML with the emotiv hardware or related ? this’d be quite interesting ( ex: training a model with mood feedback from inputs given to the user, .. )

This being said, I recently revived an old gamecube controller ( replacing the onboard chip by an arduino mini pro* ) & I’d very much enjoy giving friends the ability to smashbros irl ( could end up quite messy .. )

Also, I’d love to see some ML algo that handles playing Mario 64 ( & winning the never-ending battle with the game’s camera, via computer vision … )

*nothing new there ( aside from a little trick on D13 to share it between a button & the indicator led ) but I’ll post a repo link if anyone has some interest in that ( also kudos to Nicohood for the Nintendo lib )