When we see RGB LEDs used in a project, they’re often used more for aesthetic purposes than as a practical source of light. It’s an easy way to throw some color around, but certainly not the sort of thing you’d try to light up anything larger than a desk with. Apparently nobody explained the rules to [Brian Harms] before he built Light[s]well.

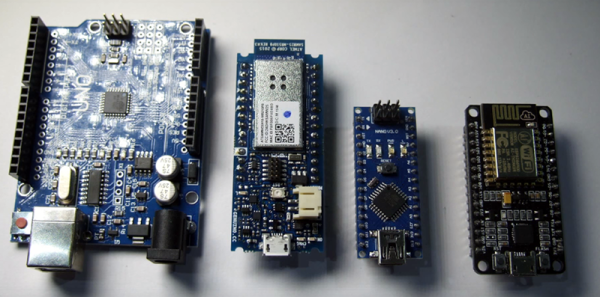

Believe it or not, this supersized light installation doesn’t use any exotic hardware you aren’t already familiar with. Fundamentally, what we’re looking at is a WiFi-enabled Arduino MKR1000 driving strips of NeoPixel LEDs. It’s just on a far larger scale than we’re used to, with a massive 4 x 8 aluminum extrusion frame suspended over the living room.

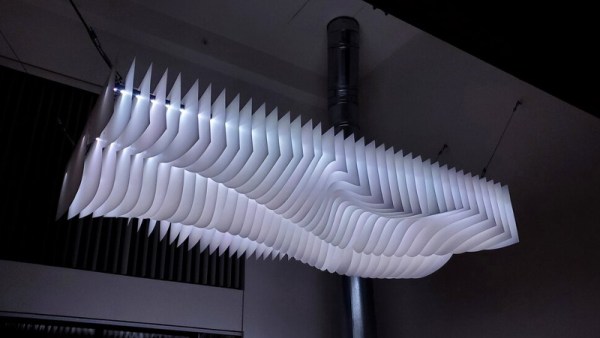

Onto that frame, [Brian] has mounted an undulating diffuser made of 74 pieces of laser-cut cardstock. Evoking waves or clouds, the light looks like it’s of natural or even biological origin while at the same time having a distinctly otherworldly quality to it.

The effect is even more pronounced when the RGB LEDs kick in, thanks to the smooth transitions between colors. In the video after the break, you can see Light[s]well work its way from bright white to an animated rainbow. As a final touch, [Brian] added Alexa voice control through Arduino’s IoT Cloud service.
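
For anyone curious how a white-to-rainbow fade like this might be computed, here is a minimal sketch of the color math in plain Python. To be clear, this is not [Brian]’s firmware, which we haven’t seen; the function names (`rainbow_frame`, `blend`, `white_to_rainbow`), the pixel count, and the step count are all hypothetical, chosen only for illustration. The idea is simply to spread hues evenly along the strip and linearly interpolate each pixel from white toward its target color.

```python
import colorsys


def rainbow_frame(num_pixels, offset):
    """Return one frame: an (r, g, b) tuple per pixel, each channel 0-255.

    Hues are spread evenly along the strip; `offset` (0.0 to 1.0) shifts
    the whole pattern, so stepping it over time animates the rainbow.
    """
    frame = []
    for i in range(num_pixels):
        hue = (i / num_pixels + offset) % 1.0
        r, g, b = colorsys.hsv_to_rgb(hue, 1.0, 1.0)
        frame.append((round(r * 255), round(g * 255), round(b * 255)))
    return frame


def blend(color_a, color_b, t):
    """Linearly interpolate between two RGB colors; t runs from 0.0 to 1.0."""
    return tuple(round(a + (b - a) * t) for a, b in zip(color_a, color_b))


def white_to_rainbow(num_pixels, steps):
    """Yield steps + 1 frames fading from solid white into the rainbow."""
    white = (255, 255, 255)
    target = rainbow_frame(num_pixels, 0.0)
    for step in range(steps + 1):
        t = step / steps
        yield [blend(white, color, t) for color in target]


if __name__ == "__main__":
    # Hypothetical strip of 8 pixels, faded over 4 steps.
    for frame in white_to_rainbow(8, 4):
        print(frame)
```

On real hardware, each frame would be pushed out to the strip by whatever LED library the microcontroller is running; more steps per fade means a smoother transition.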

While LED home lighting is increasingly becoming the norm, projects like Light[s]well remind us that we aren’t really embracing the possibilities offered by the technology. The industry has tried so hard to make LEDs fit into the traditional role of incandescent bulbs, but perhaps it’s time to rethink things.