For just about any task you care to name, a Linux-based desktop computer can get the job done using applications that rival or exceed those found on other platforms. However, that doesn’t mean it’s always easy to get it working, and speech recognition is just one of those difficult setups.

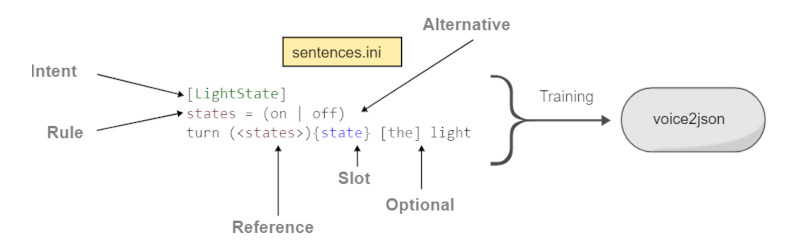

A project called Voice2JSON is trying to simplify the use of voice workflows. While it doesn’t provide the actual voice recognition, it does make it easier to get things going and then use speech in a natural way.

The software can integrate with several backends to do offline speech recognition including CMU’s pocketsphinx, Dan Povey’s Kaldi, Mozilla’s DeepSpeech 0.9, and Kyoto University’s Julius. However, the code is more than just a thin wrapper around these tools. The fast training process produces both a speech recognizer and an intent recognizer. So not only do you know there is a garage door, but you gain an understanding of the opening and closing of the garage door.
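To make the intent idea concrete, here is a minimal Python sketch of what a downstream program might do with a recognized intent. It assumes each recognition result arrives as one JSON object per line with a `text` field and an `intent.name` field; those field names, the handler functions, and the sample input are illustrative assumptions, so check the project's documentation for the real output format.

```python
import io
import json


def garage_door(event):
    # Hypothetical handler: pick the action from the recognized words.
    words = event.get("text", "").split()
    return "garage door: " + ("open" if "open" in words else "close")


def light_state(event):
    # Hypothetical handler: "on" or "off" for the lamp.
    words = event.get("text", "").split()
    return "living room lamp: " + ("on" if "on" in words else "off")


# Map intent names (assumed to match the section names in the config) to handlers.
HANDLERS = {"GarageDoor": garage_door, "LightState": light_state}


def handle_event(line, handlers=HANDLERS):
    """Parse one JSON line and call the handler registered for its intent."""
    event = json.loads(line)
    name = (event.get("intent") or {}).get("name", "")
    handler = handlers.get(name)
    return handler(event) if handler else None


def run(stream, handlers=HANDLERS):
    """Yield one result per non-empty input line."""
    for line in stream:
        if line.strip():
            yield handle_event(line, handlers)


# Stand-in for recognizer output; a real program would read sys.stdin instead.
sample = io.StringIO(
    '{"text": "open the garage door", "intent": {"name": "GarageDoor"}}\n'
    '{"text": "turn on the living room lamp", "intent": {"name": "LightState"}}\n'
)
for result in run(sample):
    print(result)
```

Because the handler only looks at the intent name and the recognized text, it does not care which speech backend produced the result.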

In addition, the tools are all made to work in Unix-style pipelines, which is refreshing. Here’s an example configuration from the project’s website:

    [GarageDoor]
    open the garage door
    close the garage door

    [LightState]
    turn on the living room lamp
    turn off the living room lamp

There are templating features so you can specify optional words and alternative words in a single rule. Other features include mapping a spoken phrase such as “living room lamp” to something more computer-friendly.
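As a rough illustration of what such templating buys you, the Python sketch below expands one rule into every sentence it covers. It assumes square brackets mark optional words and parenthesized groups separated by `|` mark alternatives; that is my reading of the project's template syntax, and the `expand` function is a hypothetical helper, not how Voice2JSON itself processes rules. It ignores tags and substitutions and does not validate unbalanced brackets.

```python
import re

# Brackets and "|" are single tokens; anything else non-blank is a word.
TOKEN = re.compile(r"[\[\]()|]|[^\s\[\]()|]+")


def expand(template):
    """Return every plain sentence matched by a template rule."""
    tokens = TOKEN.findall(template)
    pos = 0

    def sequence():
        # Parse words and groups until a closing bracket, "|", or the end.
        nonlocal pos
        phrases = [[]]
        while pos < len(tokens) and tokens[pos] not in ("]", ")", "|"):
            tok = tokens[pos]
            pos += 1
            if tok in ("[", "("):
                choices = alternatives()
                pos += 1  # skip the closing bracket
                if tok == "[":
                    choices.append([])  # optional: allow leaving it out
            else:
                choices = [[tok]]
            # Combine every phrase so far with every choice for this item.
            phrases = [p + c for p in phrases for c in choices]
        return phrases

    def alternatives():
        # Parse one or more sequences separated by "|".
        nonlocal pos
        choices = sequence()
        while pos < len(tokens) and tokens[pos] == "|":
            pos += 1
            choices += sequence()
        return choices

    return [" ".join(p) for p in alternatives()]


for sentence in expand("turn (on | off) [the] living room lamp"):
    print(sentence)
```

One rule here stands in for four spoken variants, which is the point of the feature: the sentence list stays short while the recognizer still accepts natural phrasing.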

Overall, this looks like a fun tool to have in your kit. If you do something interesting with it, be sure to drop us a tip so we can cover it. Meanwhile, we’ve been watching Linux speech for quite a while. Of course, what we really want is speech commands like the USS Enterprise, and we have to admit it is getting closer.

Can anybody recommend a good offline speech recognition engine that has pretrained models for German?

Try Vosk (https://alphacephei.com/vosk/), which for unknown reasons is missing from the list above. German is one of the languages it supports. So far I have only tried the English model, but I had good results with it:

https://hackaday.io/project/25406-wild-thumper-based-ros-robot/log/190631-higher-accuracy-speech-recognition-in-ros-with-vosk

@Martin said: “Can anybody recommend a good offline speech recognition engine that has pretrained models for German?”

Maybe…

—-[Mozilla DeepSpeech & German]—-

* Search for: “Mozilla DeepSpeech German” without the ” “s:

https://duckduckgo.com/?q=Mozilla+DeepSpeech+German&t=ffab&ia=web

* DeepSpeech for German Language

https://discourse.mozilla.org/t/deepspeech-for-german-language/36527/14

* German End-to-end Speech Recognition based on DeepSpeech

https://www.researchgate.net/publication/336532830_German_End-to-end_Speech_Recognition_based_on_DeepSpeech

* AASHISHAG / deepspeech-german

https://github.com/AASHISHAG/deepspeech-german

* ynop / deepspeech-german

—-[Mozilla DeepSpeech in-General]—-

* mozilla / DeepSpeech

https://github.com/mozilla/DeepSpeech

* Welcome to DeepSpeech’s documentation!

https://deepspeech.readthedocs.io/en/r0.9/?badge=latest

For speech recognition with an easy approach in Python, you can use the Vosk API, which uses Kaldi. It’s offline and it works great.

And for dictation, the best I’ve found is nerd-dictation, which builds on Vosk.

The best thing for building assistants I’ve found so far is Rhasspy. I plan on doing a proper writeup of setting up my instance once I’m finished. I still have to make respeakerd work on recent Debian (without DOA, its performance is sad) and figure out wakeword training.

“Illuminate”

“deluminate”

I always wonder why this epic voice command wasn’t included with Alexa or Google Home.

What about Simon & Blather? Haven’t tried them yet but Simon, for instance, seems pretty good and simple enough to use. Although, I’m not sure it’s still developed. The last version I could find was 0.4x, released in 2017 but it was supposed to lead to v0.5, which I can’t find.

I have been wanting to dive into a voice recognition project for home use for many years now. However, the only thing I can find that seems to work uses a third party for the transcription. What I would like is something that is considered private (NO GOOGLE). I have been using Linux for several years now and had lots of fun learning from it, so I am not exactly a beginner, but after several hours of trying to understand how to install and set up such a machine, it seems to be quite difficult. I have found nothing on the internet explaining the steps needed to set up such a machine; the guides always end with or start with creating a Google account. I am not worried about how complex the setup might be, but more about finding basic instructions that do not require a degree in rocket science. If someone were able to write a human-readable tutorial on any one of the popular open source projects, I am sure that project would become quite popular overall, as I have tried to read and understand the jargon (quickly getting lost in web searches). It seems there is definitely a need to simplify the installation process.