Itanium was once meant to be the next step in computing, to compete with the likes of IBM, Sun and DEC, but also for Intel to have an architecture that couldn’t be taken from it, as the PC was from IBM by its clones. Today, however, Itanium is a relic of the past. [Asianometry] tells us the story of Itanium.

By the ’90s, servers were an established market dominated by RISC architectures and Unix-like operating systems. Intel wanted to compete in this market, due in part to worries about losing control over x86. So, when Hewlett Packard came to Intel in late ’93, Intel eventually agreed to collaborate on a new architecture built around EPIC (Explicitly Parallel Instruction Computing).

The project, initially called PA-WW (later IA-64 and Itanium), was also a radical approach to ILP (Instruction-Level Parallelism). HP engineers expected RISC architectures to hit performance limits eventually, and their idea was a compromise between fully compiler-driven VLIW and fully hardware-driven superscalar, out-of-order designs.

The collaboration between Intel and HP did not go without problems, however. Internal politics were an early sign of trouble: HP and Intel disagreed about design choices, and Intel’s Itanium and x86 teams competed internally over who would build the next big product. The x86 team’s work eventually became the Pentium Pro, which started catching up with the fastest RISC architectures.

In the meantime, Itanium had been delayed again and again, due to Intel underestimating the true scale of the project and the fabrication technology required. The mounting delays pushed the release to 2001, years behind schedule. And the competition wasn’t waiting in the meantime. New RISC chips were still being released year after year, eating into what would have been Itanium’s performance advantage.

In an ironic twist, Itanium’s attempt to dislodge x86 actually solidified it. AMD realized that Intel had made a mistake; software developers would not want to recompile for a completely different architecture. And so, yet more competition began in the form of AMD’s 64-bit extension to x86, the specification written by the legendary Jim Keller. And, while sales numbers were lower than projected, AMD had still won; more AMD64 chips were being sold than Itanium ones.

In the end, Itanium died a slow death due to delays and increasing competition. Along the way, AMD made a major change to x86, putting Intel on the back foot in the x86 race for the first time and eventually leading Intel to adopt AMD64 (now called x86-64) with some minor changes. By the time Itanium 2 launched, the writing was on the wall: Itanium had failed to capture the market.

History often rhymes, and the story of Itanium rhymes with that of VLIW: an architecture perhaps too ambitious for its own good.

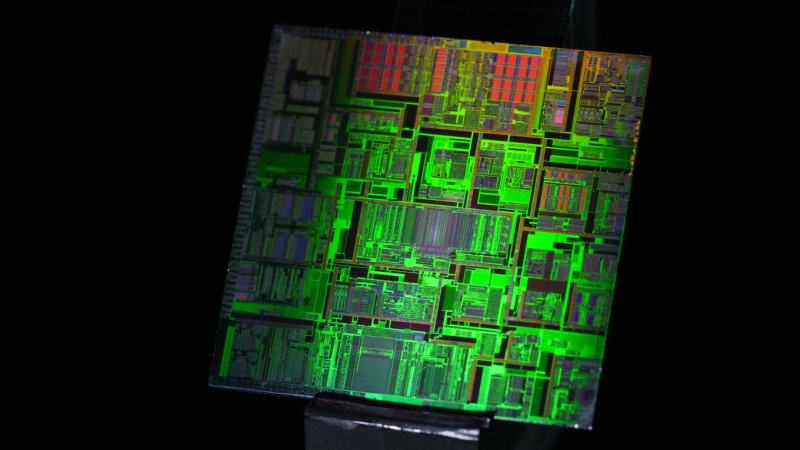

Die shots of an Intel Itanium processor courtesy of [der8auer].

not another youtube video summary…

Oh FFS, so much this…

Title is a little close to “great replacement theory” for comfort.

A concise summary of a 45 minute long YouTube video I can read in two minutes? Yes please!

Indeed!

+42

+84

That particular channel produces good videos. Computer history is always interesting especially when exploring what could have been. e.g. Lisp machines, OS/2, Amiga, etc.

Honestly these are preferable to an article that doesn’t even tell me what’s in the video but instead just alludes to how interesting it is.

If I want to watch the video I can, but if I just want the key points I can read the article – I don’t see this as a problem, given the world has shifted to video whether we like it or not.

I think the complaint was that there have been too many articles that are just recapping someone else’s story, that isn’t even coming from the hacker/maker community (i.e. “not a hack”) but from youtube content farmers.

If my YouTube backlog wasn’t presently 100 videos deep, I’d probably have seen this one already.

There is a typo in the title, one spells it “itanic”

Interesting that the POWER architecture survives, albeit in much smaller quantities – IBM i on POWER AKA AS400, and others.

There was a 586/PPC hybrid chip that could have changed history.

https://en.wikipedia.org/wiki/PowerPC_600#PowerPC_615

Oh wow, I haven’t heard about the PPC615 in years.

I remember when I bought my PowerMac 6100 (60MHz PPC601!) the 615 rumours were all over the place, and how we might not need bridgeboards to run DOS & Windows.

That era, the late 90s, was a wild time.

Itanic was a faulty design. Its concept of executing multiple things in parallel per instruction did not work out; it was a slow and energy wasting behemoth, broken by design beyond repair. Intel did not listen to the warnings of experts and had to face a disaster. It is a pity that this bad decision killed the funding of the Alpha platform by HP joining Intel’s mistake.

The theory was attractive and Itanium had some clever engineering going on, sadly the reality is fixed instruction scheduling (or semi-fixed I guess in Itanium) can’t realistically handle normal code well.
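To make the point about fixed scheduling concrete, here is a minimal toy model in Python. It is only a sketch under loud assumptions: a single-issue machine, made-up latencies (`HIT` and `MISS`), and two hypothetical loads that each feed a serial chain of one-cycle operations. None of the names come from Itanium itself. The compiler has to commit to one instruction order before it knows which load will miss the cache, while a dynamically scheduled core picks whatever is ready each cycle.

```python
HIT, MISS = 2, 10  # assumed load latencies in cycles (illustrative only)

def make_program(lat_a, lat_b, chain_len=4, first="A"):
    """Two loads, each feeding a serial chain of 1-cycle ops.
    Each op is (latency, [indices of ops it depends on])."""
    ops = [(lat_a, []), (lat_b, [])]  # op 0 = load A, op 1 = load B
    order = (0, 1) if first == "A" else (1, 0)
    for load_idx in order:
        prev = load_idx
        for _ in range(chain_len):
            ops.append((1, [prev]))
            prev = len(ops) - 1
    return ops

def run_static(ops):
    """In-order issue: an op that is not ready blocks everything behind it."""
    done = []
    cycle = 0
    for latency, deps in ops:
        start = max([cycle] + [done[d] for d in deps])
        done.append(start + latency)
        cycle = start + 1
    return max(done)

def run_dynamic(ops):
    """Idealized dynamic issue: each cycle, issue the oldest op whose inputs are ready."""
    done = {}
    pending = list(range(len(ops)))
    cycle = 0
    while pending:
        for i in pending:
            _, deps = ops[i]
            if all(d in done and done[d] <= cycle for d in deps):
                done[i] = cycle + ops[i][0]
                pending.remove(i)
                break  # single issue: at most one op per cycle
        cycle += 1
    return max(done.values())

if __name__ == "__main__":
    for first in ("A", "B"):
        for lat_a, lat_b, label in ((MISS, HIT, "A misses"), (HIT, MISS, "B misses")):
            prog = make_program(lat_a, lat_b, first=first)
            print(f"order {first}-first, {label}: "
                  f"static={run_static(prog)} dynamic={run_dynamic(prog)}")
```

In this toy model each fixed order is fine for one miss pattern and poor for the other, while the dynamic scheduler matches the better fixed order in both cases. Real compilers and real cores are far more sophisticated, so treat this as an illustration of the argument rather than a measurement.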

For a subset of scientific workloads Itanium was nice.

Itanium was an HP undertaking that Intel joined in, in hopes of killing off the x86 market and making AMD’s license useless. The only reason Itanium was in production for as long as it was is that Intel was contractually obligated to produce it for HP.

When Compaq acquired DEC, the Alpha architecture was outdated, and Compaq decided against maintaining it, because it was much easier to just buy whatever Intel was making. If Itanium wasn’t around, they’d still have picked something they could buy from a third party. They weren’t in a position to design high-performance processors.

Ahhh.. nearly here and gone are the days of seeing Software that listed system requirements that sometimes would list itanium or ia64 as CPU requirements…

Still waiting to see this whole RISC/arm explosion in the server market they have been talking about..

Changes… Lots of them over 20 years of development in the computer world it’s amazing.

Wait no longer because a HUGE number of amazon machines are ARM64. As for RISC, x86-64 chips implement their ISA using microcode using an underlying RISC system. It’s not that things don’t happen, it’s that you are ignorant of the fact that they have happened.

I liked the video. Would never have remembered the names woi.

Summary Itanic became Titanic.

Thank goodness for AMD and grumpy gray beards resisting change.

the problem with VLIW is not that the compilers are too complicated, but actually the opposite…the compilers are too simple, and therefore you can put them inside the CPU. Since the CPU will keep changing over time, that’s the best place to put extremely specific optimizations. So obvious in hindsight.

And so you have invented the Transmeta Crusoe, another failed CPU architecture.

https://en.wikipedia.org/wiki/Transmeta_Crusoe

Discontinued in 2007.

Thin clients used the Crusoe. Such as HP t57xx line.

Today they make for nice Windows 98SE retro gaming PCs.

Though Windows Me is even better, because it uses less 16-Bit code.

Using NUSB or unofficial Service Pack for Windows 98 has a similar effect (uses Win Me system files).

https://www.parkytowers.me.uk/thin/hp/t5700/

It was generally perceived to be underpowered even for the time and not as power efficient as promised.

The main idea was that it could run JAVA bytecode “natively”, which was needed for – as you mention – thin clients, for reasons nobody could ever really explain properly.

These were machines intended for things like web cafes and libraries, but because they were just glorified and super slow web terminals, they kinda missed what people needed computers for. The software and the hardware were borderline useless. I remember trying to research some topic in a public library using one of these things, and the experience was somewhere along the lines of an early generation smart TV with a broken web browser.

Yes, that may well be true, especially Windows CE wasn’t a great performer I think (lightweight, but not “accelerated”).

As for the processor.. My HP thin client runs Windows 98 at acceptable performance, that’s all I can say.

Not worse than, say, a Pentium 133 or a Pentium MMX.

The Crusoe reminds me of NexGen Nx586, Cyrix MediaGX, AMD Geode, Via C3 or Intel Atom in terms of performance (in relative comparison to the more common CPUs of each time, I mean).

Not exactly stellar here, but okay if the surrounding chips on motherboard do assist and help reducing CPU overhead.

Such as a graphics card with 2D acceleration, an efficient NIC comparable to Etherlink III, a PCI IDE controller with bus mastering/UDMA or a soundcard with its own hardware mixing and PCM decoding capabilities (AWE32, GUS and similar cards).

I’m mentioning this because Linux or Windows CE used on these embedded systems/thin clients may run on generic standard drivers rather than the intended device drivers written by the manufacturer.

Windows 2000 or 98SE have an advantage here, because the chips used in thin clients are fully supported by these lightweight OSes.

Speaking of the thin clients, I got the impression that they were made as the corporate equivalent to the physical X11 terminals once used on Unix.

The Windows CE based thin clients I got from eBay did have a very crude feature set, I think.

Essentially just centered around a remote desktop software compatible with Windows Server or something.

And the GUI was sluggish, making me think the graphics ran on unaccelerated VBE drivers rather than proper, accelerated GDI drivers.

Seriously, I think even Windows 95 on an average 386 with a late ISA graphics card had smoother graphics than this.

Which confused me, because such a thin client was literally made to draw remote graphics from the LAN.

What’s kind of funny is that superscalar is a lot like VLIW in practice. If you want to max it out, you want to find the ILP and group the instructions so they get dispatched in parallel. OoO execution only has so large a window. So effectively, superscalar does VLIW, or not, and when it does, it does it better.
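The "find the ILP and group the instructions" step can be sketched in a few lines of Python. This is a hypothetical, heavily simplified model, not how any real compiler or core does it: instructions are just a destination register plus source registers, the issue `width` is an assumed parameter, and a group is closed as soon as the next instruction conflicts with one already in it (loosely similar in spirit to a stop between instruction groups).

```python
from typing import List, NamedTuple, Tuple

class Instr(NamedTuple):
    dest: str               # register written
    srcs: Tuple[str, ...]   # registers read

def bundle_in_order(program: List[Instr], width: int = 3) -> List[List[Instr]]:
    """Greedily pack consecutive independent instructions into groups of
    at most `width`, starting a new group on any register hazard."""
    bundles: List[List[Instr]] = []
    current: List[Instr] = []
    written, read = set(), set()
    for ins in program:
        raw = any(s in written for s in ins.srcs)  # reads a value produced in this group
        waw = ins.dest in written                  # overwrites a result from this group
        war = ins.dest in read                     # overwrites an input used in this group
        if current and (len(current) == width or raw or waw or war):
            bundles.append(current)
            current, written, read = [], set(), set()
        current.append(ins)
        written.add(ins.dest)
        read.update(ins.srcs)
    if current:
        bundles.append(current)
    return bundles

if __name__ == "__main__":
    program = [
        Instr("a", ("x", "y")),  # a = x + y
        Instr("b", ("x", "y")),  # b = x - y
        Instr("c", ("a", "b")),  # c = a * b  (needs a and b)
        Instr("d", ("x",)),      # d = x + 1
    ]
    for group in bundle_in_order(program):
        print([i.dest for i in group])
```

With the sample program the first two instructions share a group, the multiply has to wait for the next one, and the last instruction rides along with it. A VLIW or EPIC compiler makes this kind of grouping decision ahead of time, while a superscalar core rediscovers it in hardware on every run.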

The itanic had too many major flaws, but it succeeded in one respect.

Flaws:

1) conceived by hardware people, who traditionally presume software can fix up complex problems. Unfortunately software people had long tried to extract sufficient parallelism, and failed. They failed again.

2) enormous amount of non-architectural context (i.e. all the speculative registers) had to be saved during context switches, which made them very slow.

3) heat dissipation was becoming a major problem in processors (and in computing centres for that matter). Itanic chose to exacerbate that by doing many speculative computations that were ultimately discarded.

Success: it persuaded customers to stay with HPUX/HP-PARISC systems, since there was an evolution path. That worked for a decade or so.

Can we please kick support for video off this site? The quality is 0 for just about all of these articles.

No I’m not interested in marketing here!

I actually own a quad socket Itanium development platform with 2 x 733MHz Itaniums and 8GB RAM. It’s massive (6U chassis) and is about as computationally efficient as a space heater, but it’s cool seeing it run. One of the SCSI drives died and it is now inoperable (OS was on a RAID-0 for speed and fun). I figured it wouldn’t matter if it died, I could just reload it. Now, I can’t find the installation media and product key I used. I got an ISO of an install disk but can’t find a good key now….