A computer does one thing at a time, even if it feels like it’s doing multiple things at once. In reality, it’s just switching between tasks very quickly. But a VLIW (Very Long Instruction Word) computer is different. Today, [Asianometry] tells us about VLIW computing and its history.

Processors have multiple functional units; for example, you might have separate units for addition, multiplication, and division. But because the processor runs one instruction at a time, these units spend much of their time idle. VLIW aims to address this inefficiency by reinventing what an instruction means. Instead of telling the whole processor what to do, a VLIW instruction tells every functional unit what to do at once. Sounds good, right? Well, that was the easy part.

The hard part? Compiling a program for a VLIW computer so that it actually makes use of all the functional units at once; after all, the efficiency promise is that the higher activity makes up for the larger instruction words that must be fetched. That is the compiler’s job: VLIW compilers try to reschedule the operations in a program, turning sequential code into independent operations that can run in parallel and are then packed into the titular very long instruction words.
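To make the scheduling problem concrete, here is a minimal sketch of a greedy scheduler that packs operations into bundles with one slot per functional unit. It is only an illustration, not how any real VLIW compiler works: the `Op` class, the `schedule` function, the three unit names, and the assumption that every operation finishes in one cycle are all invented for this example.

```python
from dataclasses import dataclass


@dataclass
class Op:
    name: str          # value this operation produces (hypothetical naming)
    unit: str          # functional unit it needs: "add", "mul" or "div"
    deps: tuple = ()   # names of values that must be computed first


def schedule(ops, units=("add", "mul", "div")):
    """Greedily pack ops into bundles, one slot per functional unit.

    Assumes every operation completes in one cycle, so a result is
    usable by the bundle after the one that produced it.
    """
    for op in ops:
        if op.unit not in units:
            raise ValueError(f"unknown functional unit: {op.unit}")
    done = set()            # values produced by earlier bundles
    pending = list(ops)     # operations not yet placed, in program order
    bundles = []
    while pending:
        bundle = {}
        for op in pending:
            ready = all(d in done for d in op.deps)
            if ready and op.unit not in bundle:
                bundle[op.unit] = op
        if not bundle:
            raise ValueError("operations depend on values never produced")
        for op in bundle.values():
            pending.remove(op)
        done.update(op.name for op in bundle.values())
        # Unused slots become explicit no-ops, which is where the
        # "larger instruction words" cost comes from.
        bundles.append(tuple(bundle[u].name if u in bundle else "nop"
                             for u in units))
    return bundles


# a = x + y; b = x * y; c = a + b; d = x / y; e = c * d
program = [
    Op("a", "add"),
    Op("b", "mul"),
    Op("c", "add", ("a", "b")),
    Op("d", "div"),
    Op("e", "mul", ("c", "d")),
]
bundles = schedule(program)
for slots in bundles:
    print(slots)
# ('a', 'b', 'd')
# ('c', 'nop', 'nop')
# ('nop', 'e', 'nop')
```

Five operations fit into three instruction words, but four of the nine slots are no-ops. That is the tradeoff in miniature: the win depends on how much independent work the compiler can find.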

[Asianometry] goes into detail about this, the history, and more in the video after the break.

P.S.: For the sake of the video and article, we’re ignoring the existence of the modern concept of out-of-order CPUs; they did not exist in the time period which [Asianometry] is talking about.

Modern CPUs do run instructions in parallel: instead of using a VLIW architecture, they use micro-ops with schedulers for more flexible execution.

Okay, I obviously have missed that postscript..

You missed nothing.

It’s a statement of fact in a current article; the fact is just fundamentally wrong.

It should have set proper context, instead it caused confusion.

I’m not sure you quite have it right, but you aren’t referencing the more obvious multicore parallelism.

Superscalar. It’s been around forever (in computer terms), yet few actually understand it and just pretend like it’s nothing. https://en.wikipedia.org/wiki/Superscalar_processor

Your CPU, in a single core executing a single thread, has multiple load/store units, multiple ALUs, multiple vector processors, and multiple floating point processors. These can all be run in parallel as long as there are no dependencies between the instructions and the instruction ordering is favorable. This is the biggest bang for the buck when hand-optimizing assembly code (that and abuse of registers to alleviate register pressure), as long as you aren’t selecting “slow” instructions. (Unless those slow instructions do exactly what you want, in which case they’re faster than chaining fast operations together… fine, it’s an art :D) The OoO processing attempts to find this sort of thing when the human/compiler doesn’t fix it, but it can only do so much (instructions have to be “relatively close” – where relatively is defined by the processor).

Anyhow, the whole point is we have had single core processors capable of issuing multiple instructions per clock (superscalar) for some time now, yet most people writing code in any form have no clue it exists.
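The dependency and “relatively close” points can be illustrated with a toy model. The sketch below does not model any real CPU: `cycles_needed`, its `width` and `window` parameters, and the one-cycle latency are assumptions made purely for illustration. Each cycle the model looks at only the oldest `window` unissued instructions and issues up to `width` of them whose inputs are already complete.

```python
def cycles_needed(deps, width=2, window=4):
    """Count cycles needed to issue every instruction in a toy core.

    deps[i] lists the earlier instructions that instruction i waits on.
    Assumes every instruction completes in one cycle.
    """
    finished = set()
    waiting = list(range(len(deps)))   # unissued instructions, oldest first
    cycles = 0
    while waiting:
        # Only the oldest `window` instructions are visible to the scheduler.
        ready = [i for i in waiting[:window]
                 if all(d in finished for d in deps[i])]
        issued = ready[:width]
        if not issued:
            raise ValueError("an instruction depends on a later one")
        finished.update(issued)
        waiting = [i for i in waiting if i not in issued]
        cycles += 1
    return cycles


# One long dependency chain: each instruction needs the previous result.
chain = [[]] + [[i - 1] for i in range(1, 8)]
# Two independent chains, interleaved instruction by instruction.
interleaved = [[], []] + [[i - 2] for i in range(2, 8)]
# The same two chains written one after the other.
back_to_back = [[], [0], [1], [2], [], [4], [5], [6]]

print(cycles_needed(chain))                   # 8
print(cycles_needed(interleaved))             # 4
print(cycles_needed(back_to_back))            # 5
print(cycles_needed(back_to_back, window=2))  # 7
```

All four runs execute eight instructions. The single chain gets no benefit from the second issue slot, the interleaved ordering uses it every cycle, and the back-to-back ordering only recovers most of the parallelism when the window is large enough to see the second chain.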

To be fair, most of the code most people write couldn’t be ordered any differently to make a dent in that execution time. To really play into that order, you would have to write something fairly low level or even straight-up assembly.

When speaking of execution units, it pretty much implies assembly, or working on optimizing compilers. The former is what I usually do when I need the speed. The latter? I don’t understand why the compilers make me do it in assembly, since they ought to be able to determine instruction dependence and dependency chains to make better use of reservation stations, figure out an optimal instruction ordering, and actually meet the claim that “you can’t beat the compiler when optimizing”. That and use all the registers even for unintended purposes (if they can hold a value, all values are just values!). To be fair, I haven’t tried the paid-for optimizing compilers like the ones from Intel, but I have used MSVC, GCC/G++, and LLVM. None of them seem to understand what a superscalar processor is.

VLIW architectures still exist. Xilinx uses one for the “AI Engines” included in some of their FPGAs, which are just DSPs with lots of vector instructions. Looking at disassembled code makes it clear that the compiler has a tough time optimizing for this.

AMD Ryzen CPUs with “AI” branding also have the same AI Engines in them, rebranded as the “XDNA” NPU.

Pretty sure that Qualcomm Hexagon is also VLIW-based: VLIW is pretty common on DSP cores, where things are less complex. Still, as you have observed, the compiled results are not always great..

AMD’s old TeraScale GPU architecture was VLIW as well.

DSP seems to be a good niche for VLIW. Sophie Wilson’s Broadcom FirePath powered tons of DSL modems.

to wit, out-of-order execution was around in the mid 60’s, which predates the 1970’s paper; perhaps the distinction is in the ‘modern concept’, though I don’t know what that is.

@34:42 “The low end i860 ran at 25 Hertz…The TRACE ran at 8 Hertz…” I think I found their performance problem.

“A computer does one thing at a time, even if it feels like it’s doing multiple things at once. In reality, it’s just switching between tasks very quickly.” – this is, by a wide margin, the biggest bullshit anyone has ever written in a Hackaday article. Anyone even remotely knowledgeable about CPUs knows that by definition, everything is happening all at once inside. The clock makes it synchronized, but all internal components like ALU, bus interface, control logic – it’s all running massively in parallel. And that’s not even looking at all the “modern” techniques with multiple pipelines and out-of-order processing.

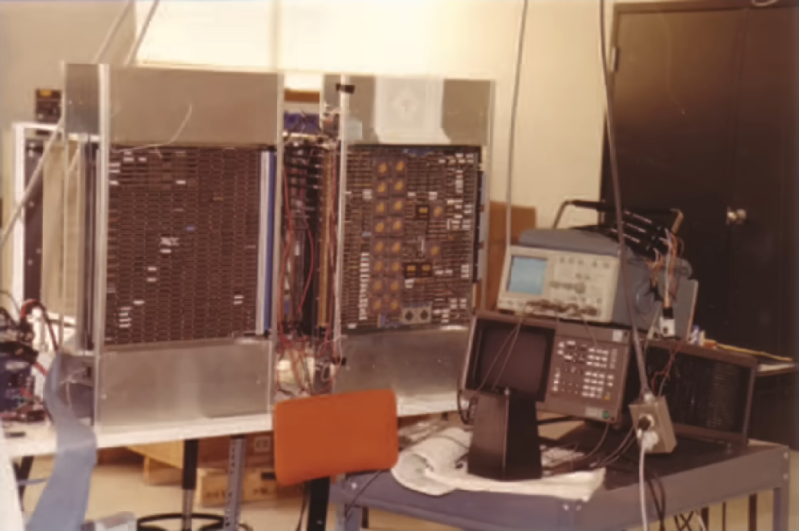

I have written lots of bit-slice code back in the 8800 days, 128 bits wide at that time. 40 MHz on wire wrap was fast, and bit slice or VLIW made it faster. We made the first laser printer for pcn layout on plastic; this was shrunk for IC masking. Fun, fun.

I played with this concept a bit – my “VLIW” is only 16 bits wide but can engage 5 CPU units simultaneously, in a rather orthogonal fashion. Each instruction takes exactly 2 cycles (fetch and execute), but theoretically 5 operations can be done in each execute, so the maximum possible throughput is 2.5 operations per clock cycle. I only have a monitor (sort of) written for it, and the parallelism was achieved by manually combining operations into instructions. https://hackaday.io/project/173996-sifp-single-instruction-format-processor