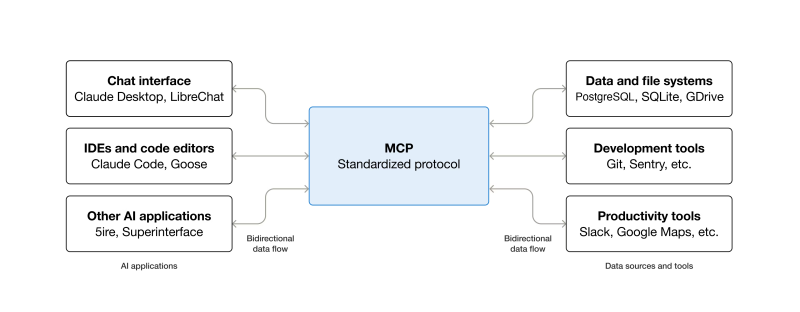

As part of the effort to push Large Language Model (LLM) ‘AI’ into more and more places, Anthropic’s Model Context Protocol (MCP) has been adopted as the standard for connecting LLMs to external tools and systems using a client-server model. A slight oversight in this protocol’s architecture is that arbitrary remote command execution (RCE) is effectively an essential part of its design, as covered in a recent article by OX Security.

The flaw is described in a detailed breakdown article, and it applies to every implementation regardless of programming language. In essence, the `StdioServerParameters` passed to the remote server to create a new local instance on that server can contain any command and arguments, which are then executed in a server-side shell.
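To make the mechanism concrete, here is a minimal Python sketch of a server that accepts a stdio server definition from a request and launches it without any checks. `StdioServerConfig`, `config_from_request` and `naive_launch` are hypothetical names; the class only mirrors the kind of `command`/`args`/`env` fields that `StdioServerParameters` carries and is not the SDK’s own code. Even though the argument list is passed without `shell=True`, nothing stops a request from naming `sh -c` as the command.

```python
import json
import subprocess
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class StdioServerConfig:
    # Hypothetical stand-in for the command/args/env fields that a stdio
    # server definition (such as StdioServerParameters) carries.
    command: str
    args: List[str] = field(default_factory=list)
    env: Optional[Dict[str, str]] = None


def config_from_request(body: str) -> StdioServerConfig:
    """Parse a JSON request body into a stdio config with no validation at all."""
    data = json.loads(body)
    return StdioServerConfig(
        command=data["command"],
        args=list(data.get("args", [])),
        env=data.get("env"),
    )


def naive_launch(cfg: StdioServerConfig) -> subprocess.Popen:
    """DANGEROUS: spawns whatever program the request named.

    The 'MCP server' is just a child process whose stdin/stdout carry the
    protocol messages, so the command can just as well be a shell.
    """
    return subprocess.Popen(
        [cfg.command, *cfg.args],
        stdin=subprocess.PIPE,
        stdout=subprocess.PIPE,
        env=cfg.env,
    )
```

A body such as `{"command": "sh", "args": ["-c", "id > /tmp/owned"]}` parses without complaint, and handing the result to `naive_launch` would run it with the server process’s privileges.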

At its core, the issue is a lack of input sanitization, which is merely the most common source of exploited CVEs. In multiple real-world exploitation attempts against LettaAI, LangFlow, Flowise and Windsurf, it was possible to achieve RCE, or local RCE in the case of the Windsurf IDE. Although Flowise had implemented some input sanitization by limiting the allowed commands and stripping special characters, this was bypassed using standard flags of the `npx` command.
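The Flowise bypass is easier to picture with an example. The sketch below is hypothetical and is not Flowise’s actual code: `naive_sanitize` allowlists command names and strips shell metacharacters, which leaves option flags untouched. npx documents a `-c` (`--call`) option that runs a command string, so an allowed `npx` plus a harmless-looking argument is enough. The article does not say which flags the researchers used, so treat `-c` as one illustrative route. `strict_validate` is one possible stricter alternative that pins exact argument lists; the package name in its allowlist is made up.

```python
import re
from typing import List, Tuple

# Hypothetical filter in the spirit described above: an allowlist of
# command names plus stripping of shell metacharacters from arguments.
ALLOWED_COMMANDS = {"npx", "node", "python3"}
SHELL_METACHARS = re.compile(r"[;&|`$<>(){}]")


def naive_sanitize(command: str, args: List[str]) -> Tuple[str, List[str]]:
    if command not in ALLOWED_COMMANDS:
        raise ValueError(f"command not allowed: {command}")
    # Flags such as -c contain no metacharacters, so they pass unchanged.
    return command, [SHELL_METACHARS.sub("", a) for a in args]


# Hypothetical, made-up package name for the stricter policy.
ALLOWED_NPX_PACKAGES = {"@example/weather-mcp-server"}


def strict_validate(command: str, args: List[str]) -> Tuple[str, List[str]]:
    """Accept only `npx [-y] <allowlisted package>`; anything else is rejected."""
    if command != "npx":
        raise ValueError(f"command not allowed: {command}")
    # An optional leading -y is the only flag tolerated.
    rest = args[1:] if args[:1] == ["-y"] else args
    if len(rest) != 1 or rest[0] not in ALLOWED_NPX_PACKAGES:
        raise ValueError(f"arguments not allowed: {args}")
    return command, list(args)


if __name__ == "__main__":
    # The payload survives the naive filter untouched...
    print(naive_sanitize("npx", ["-c", "touch /tmp/pwned"]))
    # ...but the strict check refuses it.
    try:
        strict_validate("npx", ["-c", "touch /tmp/pwned"])
    except ValueError as err:
        print("rejected:", err)
```

The design choice is to validate the whole argument list against known-good values rather than enumerating bad characters, since a denylist cannot anticipate every option a program accepts.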

When the researchers contacted Anthropic about these issues with MCP, they were told there was no design flaw and essentially had a ‘no-fix, works as designed’ hurled at them. According to Anthropic, input sanitization is the developer’s responsibility, which is interesting given that Anthropic provides a range of implementations itself.

At last, something that’s actually ‘happening’!

urgh, thought it was a ‘something’. should have read it before overreacting so excitedly.

BTW Hackaday, MCP is really big news! Chinese sites like yours are loaded with AI chatbots with MCP capabilities, and their associated projects are controlling and accessing EVERYTHING!!!

I cannot fathom that you’re bagging another important contemporary technology…again!

☠️

If you see something good, send tips! tips@hackaday.com

Yeah, this is really, really dumb. hurr durr, the thing that lets your agents execute arbitrary stuff… lets your agents execute arbitrary stuff.

It also has nothing to do with MCP per se, just the stdio way that it was invoked in the early days.
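The commenter’s distinction can be sketched in code. The MCP specification defines both a stdio transport, where the client launches the server as a local subprocess, and an HTTP-based transport, where it connects to an existing endpoint. The hypothetical `accept_remote_config` below assumes a made-up config shape with a `type` field (not any particular client’s format) and refuses stdio definitions from untrusted users, allowing only an HTTP(S) URL. Accepting arbitrary URLs has its own risks, such as server-side request forgery, but it does not by itself execute a local command.

```python
from urllib.parse import urlparse


def accept_remote_config(cfg: dict) -> dict:
    """Hypothetical policy for server definitions submitted by untrusted users.

    A stdio definition means "spawn this local program", so it is refused;
    an HTTP definition only names an endpoint to connect to.
    """
    transport = cfg.get("type")
    if transport == "stdio":
        raise PermissionError("stdio servers spawn local processes; refusing remote config")
    if transport == "http":
        url = urlparse(cfg.get("url", ""))
        # Require an http(s) scheme and a host; reject everything else.
        if url.scheme not in ("http", "https") or not url.netloc:
            raise ValueError(f"invalid server URL: {cfg.get('url')!r}")
        return {"type": "http", "url": url.geturl()}
    raise ValueError(f"unknown transport: {transport!r}")
```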

It’s like everything in the world: risk/reward and knowing the boundaries. Being the latest buzzword changes nothing in that regard. It can be a useful tool, much like credit, but in the wrong hands it can mess you up.

“MCP” still means “Master Control Program” to me… #Tron

Me too… That said, I don’t like the implications of having its ‘fingers’ in everything. Looks like a dictatorial government’s dream come true! All the more reason to keep access local (no cloud) within your sphere as a company or individual. But my guess is that a lot of people won’t care and will just embrace the cloud and all its ‘benign’ services… until it is too late.

I run MCP servers and agents locally, no cloud, no overlords. All compute and storage is under my roof. Most people will absolutely just submit to the big corporations; in large part, they already have.

Master Chinese Program in the year 2026.

I’m struggling to understand how Anthropic or the protocol is responsible for the vulnerabilities. If a service’s configuration allows setting up calls to an executable file, it should allow you to configure any command you want. I haven’t tried, but I’m under the impression that, say, you could configure the Apache HTTP daemon to run “FORMAT C:” when a PHP file is requested. That wouldn’t mean the HTTP protocol is faulty, just that you are an idiot. Even more so if your service allows a remote user to change your config files (like in the sixth vulnerability). What am I missing?

On the other hand, this OX company works closely with the government+military+industrial cronies, the same ones with which Anthropic apparently has some misunderstandings.

Wait till someone realizes what you can do with ssh.

Apache doesn’t come with preconfigured setups to format your c:

It’s also run with limited permissions usually.

I think the point is that MCP implementations do have these issues.

sometimes giving arbitrary code execution to a system or entity has value. when i am hired by a new company as a developer, i am given arbitrary code execution, but, because i am trusted and trustworthy, nothing bad happens. presumably the same thinking will eventually be applied to suitably-advanced AI, especially if they are to completely replace us. i myself write code on occasion that gives arbitrary code execution to others. for example, i wrote a comprehensive reporting system that allows the execution of arbitrary PHP code. why? because i want to be able to run such code myself. obviously, you would not entrust such a system to the unscreened general public (or their AI agents).

What company gives new devs arbitrary execution on prod?

In my experience, you need to act ‘trusted and trustworthy’ for a while before they stop reviewing your code changes and the fun can begin.

You should lock down the arbitrary reporting system better.

Sooner or later someone you delegated authority to will give the wrong person permissions.

Most likely that wrong person will just be a talented idiot, but could be a thief too.

i usually get access the moment the production server craps the bed and i am the only one with an idea of how to fix it. sometimes it takes months for this to happen, but i’ve had it happen in the first week — typically because the last person who knew what to do rage quit

This has happened more than once?

You need to learn how to interview better, don’t work for those companies.

I say this from experience.

In my early 20s, I also worked for clowns.

i’ve liked some of those places!

Clowns can employ other nice enough people; the clowns themselves are generally asses, though.

Often the clowns are Machiavellian enough to hire for neurosis.

Find some poor schlub who wants to be a hero for a pittance.

Don’t be that schlub.

The hero is the guy who ‘rage quits’ (new job waiting) at a strategically bad time for the clowns.

Try not to screw over the coworkers; that network has value, unlike the job.

Also:

Once you spot the rat, don’t let on.

Now you have management’s ear, without them knowing it.

If management is average, they will accept the rat’s info uncritically.

Quite a lot of places, actually, and most wouldn’t really be described as companies, but they regularly give new hires not just PROD, but master keys to all the buildings as well as safes with gold bars, unlimited/unchecked use of the company helicopter/jet/mansions, etc. etc.

They exist…

The silver bars are 1 gram ‘free for new investors’, the ‘mansion’/data center is a dry singlewide powered by an orange cord, the ‘jet’ is the flatulent friendly dog, and the ‘heli’ is a broken $50 quad that you are responsible for fixing if you so much as look at it.

Your job should be to avoid these employers.

Also Prod is in MySQL (or forks).

HackADay is, apparently, such an organization.

don’t tell me you would rather have prod be Oracle. God help us!

I found an exploit in Unix and rm. ‘sudo rm -r /*’ will wreck your whole system.

The problem is that people with zero sysadmin experience are exposing production systems to what is essentially RCE-aaS, and there are now tens of thousands of services that have effectively compromised themselves because the CEO wants to AI.

Yep, that’s EXACTLY what’s happening right now. The average CEO/manager thinks he/she/it will be the one pushing AI buttons, telling it what to do and discarding the replaceable, average Sam the Programmer as an obsolete/outdated part of the machinery.

Little does the CEO know that AI may at some point find his button pushing obsolete and invent its own unrelated ways of accomplishing the same task without CEOs. I suspect it is already happening, and not just in a few places.

This is primarily why agentic interaction with the OS is a major security nightmare. The obvious solution is to run your LLM locally; however, this won’t stop your $30,000 super GPU system from running malicious commands. I think it’s time to admit that scaling up parameter count is insufficient for LLMs to interact safely with systems.

We don’t care. We don’t have to. We’re the phone company.

;-)

The diagram needs a sieve under the MCP, that collects dollar symbols.

I now think MCP is really “Money Collecting Protocol” : – ]

Thought that was crypto.