The concept behind non-line-of-sight (NLOS) imaging seems fairly easy to grasp: a laser bounces photons off a surface, and those photons illuminate objects that are in sight of that surface but not of the imaging equipment. The photons that are then reflected or refracted by the hidden object make their way back to the laser’s location, where they are captured and processed to form an image. Essentially, this allows one to use any surface as a mirror to look around corners.

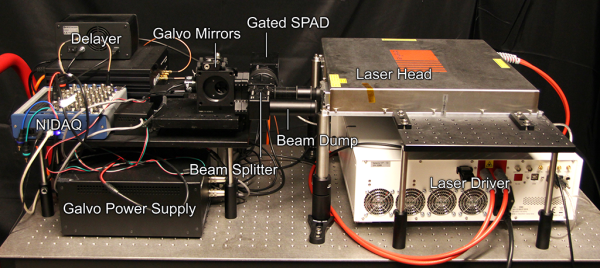

The main disadvantage of this method has been its low resolution and high susceptibility to noise. This led a team at Stanford University to experiment with ways to improve on it. As detailed in an interview by Tech Briefs with graduate student [David Lindell], a major improvement came from an ultra-fast shutter solution that blocks out most of the photons returning from the illuminated wall, so that the photons reflected by the hidden object are not drowned out by this noise.

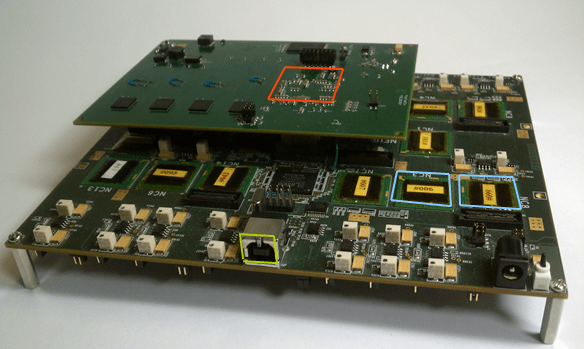

The key to getting the desired imaging quality, including with glossy and otherwise hard-to-image objects, was the f-k migration algorithm. As explained in the video that is embedded after the break, the team looked at the methods used in seismology, where vibrations are used to image what is inside the Earth’s crust, as well as in synthetic aperture radar and similar fields. The resulting algorithm uses a sequence of a Fourier transform, spectrum resampling and interpolation, and an inverse Fourier transform to process the received data into a usable image.
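To make those three steps a bit more concrete, here is a minimal sketch of f-k (Stolt) migration in Python with NumPy. It is not the Stanford team's code: it is a simplified 2D version (one scan axis plus time) of the textbook seismic technique, and the function name `fk_migrate_2d`, its parameters, and the zero-padding factor are all assumptions made for illustration. It assumes the "exploding reflector" model, in which the round trip is handled by using an effective wave speed `v` of half the speed of light, and it leaves out the amplitude corrections a real pipeline would need.

```python
import numpy as np

def fk_migrate_2d(data, dt, dx, v):
    """Illustrative 2D f-k (Stolt) migration; hypothetical helper.

    data : (nt, nx) array, rows are time samples, columns are scan positions
    dt   : time sample spacing
    dx   : scan position spacing
    v    : effective wave speed (assumed c/2 for a round-trip measurement)
    Returns an (nt, nx) intensity image with depth spacing dz = v * dt.
    """
    nt, nx = data.shape
    # Zero-pad in time so linear interpolation of the spectrum behaves better.
    padded = np.zeros((2 * nt, nx))
    padded[:nt] = data

    # Step 1: Fourier transform over time and scan position.
    spec = np.fft.fftshift(np.fft.fft2(padded))
    f = np.fft.fftshift(np.fft.fftfreq(2 * nt, d=dt))  # temporal frequency
    kx = np.fft.fftshift(np.fft.fftfreq(nx, d=dx))     # spatial frequency

    # Step 2: resample the spectrum from temporal frequency f onto depth
    # wavenumber kz, using the dispersion relation f = v * sqrt(kx^2 + kz^2).
    kz = f / v
    out = np.zeros_like(spec)
    for j, k in enumerate(kx):
        norm = np.sqrt(k**2 + kz**2)
        f_src = v * np.sign(kz) * norm
        col = spec[:, j]
        # Linear interpolation of real and imaginary parts; zero outside band.
        re = np.interp(f_src, f, col.real, left=0.0, right=0.0)
        im = np.interp(f_src, f, col.imag, left=0.0, right=0.0)
        # Jacobian of the change of variables from f to kz.
        jac = np.abs(kz) / np.maximum(norm, 1e-12)
        out[:, j] = (re + 1j * im) * jac

    # Step 3: inverse Fourier transform back to (depth, position).
    img = np.fft.ifft2(np.fft.ifftshift(out))
    return np.abs(img[:nt]) ** 2
```

Feeding this function a synthetic hyperbola, which is what a single hidden point looks like in the raw time-resolved measurements, should collapse the curve back toward a single bright spot at the point's position and depth. The resampling step is what distinguishes the method from a plain Fourier filter, and it is also where interpolation quality matters most.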

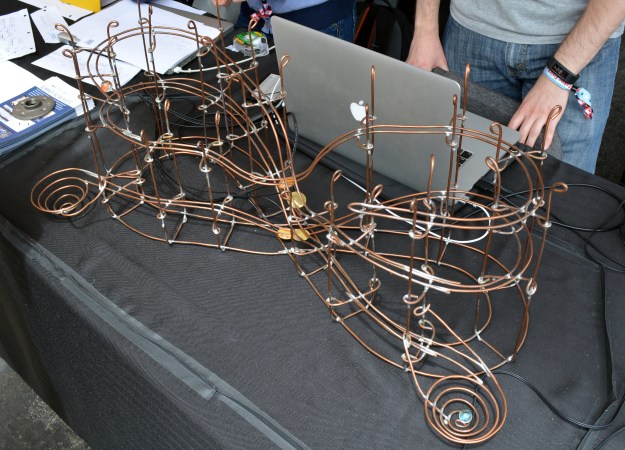

This is not a new topic; we covered a simple implementation of this all the way back in 2011, as well as a project by UK researchers in 2015. This new research shows obvious improvements, making this kind of technology ever more viable for practical applications.
