Getting into FPGA design isn’t a monolithic experience. You have to figure out a toolchain, learn how to think in hardware during the design, and translate that into working Verilog. The end goal is getting your work onto an actual piece of hardware, and that’s what this post is all about.

In the previous pair of installments in this series, you built a simple Verilog demonstration consisting of an adder and a few flip flop-based circuits. The simulations work, so now it is time to put the design into a real FPGA and see if it works in the real world. The FPGA board we’ll use is the Lattice iCEstick, an inexpensive ($22) board that fits into a USB socket.

Like most vendors, Lattice lets you download free tools that will work with the iCEstick. I had planned to use them. I didn’t. If you don’t want to hear me rant about the tools, feel free to skip down to the next heading.

Hiccups with Lattice’s Toolchain

Still here? I’m not one of these guys that hates to sign up for accounts. So signing up for a Lattice account didn’t bother me the way it does some people. What did bother me is that I never got the e-mail confirmation and was unable to get to a screen to ask it to resend it. Strike one.

I had tried on the weekend and a few days later I got a note from Lattice saying they knew I’d tried to sign up and they had a problem so I’d have to sign up again. I did, and it worked like I expected. Not convenient, but I know everyone has problems from time to time. If only that were the end of the story.

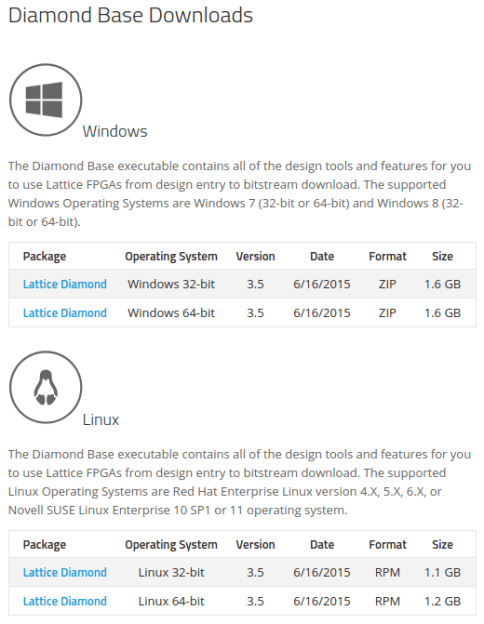

I was impressed that Lattice does support Linux. I downloaded what appeared to be a tgz file. I say appeared because tar would not touch it. I examined the file and it looked like it was gzipped, so I managed to unzip it to a bare file with a tar extension. There was one problem: the file wasn’t a tar file, it was an executable. Strike two. I made it executable (yes, I’m daring) and I ran it. Sure enough, the Lattice installer came up.

Of course, the installer wants a license key, and that’s another trip to the Web site to share your network card’s MAC address with them. At least it did send me the key back fairly quickly. The install went without incident. Good sign, right?

I went to fire up the new software. It can’t find my network card. A little Internet searching revealed the software will only look for the eth0 card. I don’t have an eth0 card, but I do have an eth3 card. Not good enough. I really hated to redo my udev rules to force an eth0 into the system, so instead I created a fake network card, gave it a MAC and had to go get another license. Strike 3.

I decided to keep soldiering (as opposed to soldering) on. The tool itself wasn’t too bad, although it is really just a simple workflow wrapper around some other tools. I loaded their example Verilog and tried to download it. Oh. The software doesn’t download to the FPGA. That’s another piece of software you have to get from their web site. Does it support Linux? Yes, but it is packaged in an RPM file. Strike 4. Granted, I can manually unpack an RPM or use alien to get a deb file that might work. Of course, that’s assuming it was really an RPM file. Instead, I decided to stop and do what I should have done to start with: use the iCEStorm open source tools.

TL;DR: It was so hard to download, install, and license the tool under Linux that I gave up.

Programming an FPGA Step-by-Step

Whatever tools you use, the workflow for any FPGA is basically the same, although the details and even the names vary a bit from tool to tool. Although you write code in Verilog, the FPGA is built from blocks (not all vendors call them blocks) that each have certain functions and certain ways they can connect to one another. Not all blocks have to be the same, either. For example, some FPGAs have blocks that are essentially look up tables. Suppose you have a look up table with one bit of output and 16 rows. That table could generate any combinatorial logic function with 4 inputs and one output. Other blocks on the same FPGA might be set up to be used as memory, DSP calculations, or even clock generation.
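To make the look up table idea concrete, here is a minimal Python sketch (a software model only, not how any particular vendor's block is wired). The names `make_lut4` and `read_lut4` are made up for illustration. It fills a 16-row table from any 4-input function and then "evaluates" the logic by using the inputs as a row address:

```python
from itertools import product

def make_lut4(func):
    """Fill in the 16 rows of a 4-input, 1-output look up table."""
    # product() counts 0000, 0001, ... 1111, so row index = a*8 + b*4 + c*2 + d
    return [func(a, b, c, d) & 1 for a, b, c, d in product((0, 1), repeat=4)]

def read_lut4(table, a, b, c, d):
    """Use the four inputs as a row address into the table."""
    return table[(a << 3) | (b << 2) | (c << 1) | d]

# Two tables are enough for a one-bit full adder (the fourth input is unused).
SUM_LUT = make_lut4(lambda a, b, cin, _unused: a ^ b ^ cin)
CARRY_LUT = make_lut4(lambda a, b, cin, _unused: (a & b) | (a & cin) | (b & cin))

if __name__ == "__main__":
    for a, b, cin in product((0, 1), repeat=3):
        s = read_lut4(SUM_LUT, a, b, cin, 0)
        cout = read_lut4(CARRY_LUT, a, b, cin, 0)
        print(f"a={a} b={b} cin={cin} -> sum={s} carry={cout}")
```

Changing the logic function only changes the 16 stored bits, never the lookup itself, which is roughly why a bitstream can turn the same block into any small piece of combinatorial logic.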

Some FPGAs use cells based on multiplexers instead of look up tables, and most combine some combinatorial logic with a configurable flip flop of some kind. The good news is that unless you are trying to squeeze every bit of performance out of an FPGA, you probably don’t care about any of this. You write Verilog and the tools create a bitstream that you download into the FPGA or a configuration device (more on that in a minute).

The general steps to any FPGA development (assuming you’ve already written the Verilog) are:

- Synthesize – convert Verilog into a simplified logic circuit

- Map – Identify parts of the synthesized design and map them to the blocks inside the FPGA

- Place – Allocate specific blocks inside the FPGA for the design

- Route – Make the connections between blocks required to form the circuits

- Configure – Send the bitstream to either the FPGA or a configuration device

The place and route step is usually done as one step, because it is like autorouting a PC board. The router may have to move things around to get an efficient routing. Advanced FPGA designers may give hints to the different tools, but for most simple projects, the tools do fine.

Constraints For Hardware Connected to Specific Pins (or: How does it know where the LED is?)

There is one other important point about placing. Did you wonder how the Verilog ports like LED1 would get mapped to the right pins on the FPGA? The place and route step can do that, but it requires you to constrain it. Depending on your tools, there may be many kinds of constraints possible, but the one we are interested in is a way to force the place and route step to put an I/O pin in a certain place. Without that constraint it will assign the I/O to whatever pin it likes, and that won’t work for a ready-made PCB like the iCEstick.

For the tools we’ll use, you put your constraints in a PCF file. I made a PCF file that defines all the useful pins on the iCEstick (not many, as many of you noted in earlier comments) and it is available on Github. Until recently, the tools would throw an error if you had something in the PCF file that did not appear in your Verilog. I asked for a change, and got it, but I haven’t updated the PCF file yet. So for now, everything is commented out except the lines you use.

Here are a few lines from the constraint file:

    set_io LED3 97 # red
    set_io LED4 96 # red
    set_io LED5 95 # green

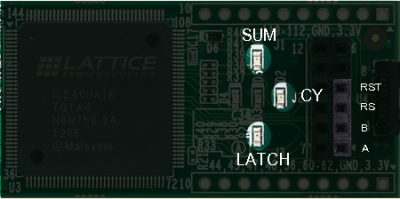

Every external pin (including the clock) you plan to use must be defined in the constraint file. Errors in the file can be bad too. Routing an output to a pin that is connected, for example, directly to ground could damage the FPGA, so be careful! The picture to the right shows the configuration with my PCF file (the center LED and the one closest to the FPGA chip just blink and are not marked in the picture). Keep in mind, though, you could reroute signals any way that suited you. That is, just because LED1 in the Verilog is mapped to D1 on the board, doesn’t mean you couldn’t change your mind and route it to one of the pins on the PMOD connector instead. The name wouldn’t change, just the pin number in the PCF file.
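Since a bad constraint file can do real harm, it can be worth sanity checking it before you build. The following Python sketch is not part of the IceStorm tools; `parse_pcf` and `missing_constraints` are hypothetical helpers, and the parser only understands the plain `set_io NAME PIN` form shown above (anything else is rejected instead of guessed at):

```python
def parse_pcf(text):
    """Return {port_name: pin} for plain `set_io NAME PIN` lines."""
    pins = {}
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop trailing comments
        if not line:
            continue  # blank or fully commented-out line
        fields = line.split()
        if fields[0] != "set_io" or len(fields) != 3:
            raise ValueError(f"unsupported constraint: {raw!r}")
        _, name, pin = fields
        if name in pins:
            raise ValueError(f"{name} is constrained twice")
        if pin in pins.values():
            raise ValueError(f"pin {pin} is assigned to two ports")
        pins[name] = pin  # keep pins as strings; some packages use names like A1
    return pins

def missing_constraints(ports, pins):
    """List the Verilog port names that have no pin assigned."""
    return [port for port in ports if port not in pins]

EXAMPLE = """\
set_io LED3 97 # red
set_io LED4 96 # red
set_io LED5 95 # green
"""

if __name__ == "__main__":
    pins = parse_pcf(EXAMPLE)
    print(pins)
    print("unconstrained:", missing_constraints(["LED3", "LED4", "LED5", "clk"], pins))
```

The duplicate-pin check catches the easy mistake of pointing two ports at one pin. It cannot know what is actually wired to a pin on your board, so the warning above about driving a grounded pin still applies.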

Configuring an FPGA

Different FPGAs use different technology bases and that may affect how you program them. But it all starts with a bitstream (just a fancy name for a binary configuration file). For example, some devices have what amounts to nonvolatile memory and you program the chip like you might program an Arduino. Usually, the devices are reprogrammable, but sometimes they aren’t. Besides being simpler, devices with their own memory usually start up faster.

However, many FPGAs use a RAM-like memory structure. That means on each power cycle, something has to load the bitstream into the FPGA. This takes a little time. During development it is common to just load the FPGA directly using, for example, JTAG. However, for deployment a microprocessor or a serial EEPROM may feed the device (the FPGA usually has a special provision for reading the EEPROM).

The FPGA on the iCEstick is a bit odd. It is RAM-based. That means its look up tables and interconnections are lost when you power down. The chip can read an SPI configuration EEPROM or it can be an SPI slave. However, the chip also has a Non Volatile Configuration Memory (NVCM) inside. This serves the same purpose as an external EEPROM but it is only programmable once. Unless you want to dedicate your iCEstick to a single well-tested program, you don’t want to use the NVCM.

The USB interface on the board allows you to program the configuration memory on the iCEstick, so, again, you don’t really care about these details unless you plan to try to build the device into something. But it is still good to understand the workflow: Verilog ➔ bitstream ➔ configuration EEPROM ➔ FPGA.

IceStorm

Since I was frustrated with the official tools, I downloaded the IceStorm tools and the related software. In particular, the tools you need are:

- Yosys – Synthesizes Verilog

- Arachne-pnr – Place and Route

- IceStorm – Several tools to actually work with bitstreams, including downloading to the board; also provides the database Arachne-pnr needs to understand the chip

You should follow the instructions on the IceStorm page to install things. I found some of the tools in my repositories, but they were not new enough, so save time and just do the steps to build the latest copies.

There are four command lines you’ll need to program your design into the iCEstick. I’m assuming you have the file demo.v and you’ve changed the simulation-only numbers back to the proper numbers (we talked about this last time). The arachne-pnr tool generates an ASCII bitstream so there’s an extra step to convert it to a binary bitstream. Here are the four steps:

- Synthesis:

    yosys -p "synth_ice40 -blif demo.blif" demo.v

- Place and route:

    arachne-pnr -d 1k -p icestick.pcf demo.blif -o demo.txt

- Convert to binary:

    icepack demo.txt demo.bin

- Configure on-board EEPROM:

    iceprog demo.bin

Simple, right? You do need the icestick.pcf constraint file. All of the files, including the constraint file, the demo.v file, and a script that automates these four steps are on Github. To use the script just enter:

./build.sh demo

This will do all the same steps using demo.v, demo.txt, and so on.
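If you would rather see the automation spelled out, here is a rough Python sketch of the same four steps. It is not the build.sh from the repository (that script isn't reproduced here); `build_commands` and `run_build` are names I made up, and the command lines are simply the four shown above with the base name substituted in:

```python
import shlex
import subprocess

def build_commands(name, pcf="icestick.pcf", device="1k"):
    """Return the four tool invocations for a design called <name>.v."""
    return [
        ["yosys", "-p", f"synth_ice40 -blif {name}.blif", f"{name}.v"],
        ["arachne-pnr", "-d", device, "-p", pcf, f"{name}.blif", "-o", f"{name}.txt"],
        ["icepack", f"{name}.txt", f"{name}.bin"],
        ["iceprog", f"{name}.bin"],
    ]

def run_build(name, dry_run=True):
    """Print each step; run it too unless dry_run is set."""
    for cmd in build_commands(name):
        print(shlex.join(cmd))
        if not dry_run:
            # check=True stops at the first failing step, so a synthesis
            # error never reaches place and route or the board
            subprocess.run(cmd, check=True)
```

Calling `run_build("demo")` only prints the commands, which is handy for checking them. `run_build("demo", dry_run=False)` actually runs them, assuming the tools are installed and on your path.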

Try It Out

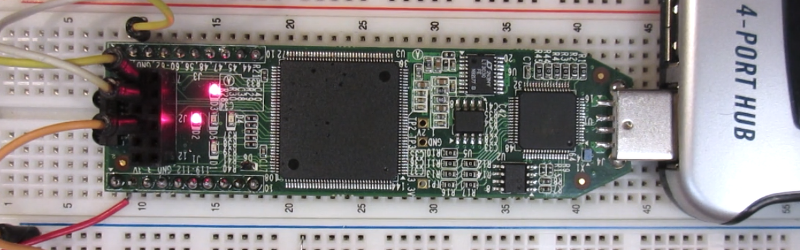

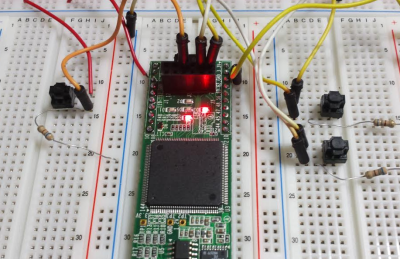

Once the board programs, it will immediately start operating. Remember, this isn’t a program. Once the circuitry is configured it will start doing what you meant it to do (if you are lucky, of course). In the picture below (and the video), you can see my board going through the paces. I have some buttons for the two adder inputs on one side and a reset button on the other. I also soldered some headers to the edge of the board.

If you are lazy, you can just use a switch or a jumper wire to connect the input pins to 3.3V or ground. It looks like the device pins will normally be low if you don’t connect anything to them (but I wouldn’t count on that in a real project). However, I did notice that without a resistor to pull them down (or a switch that positively connected to ground) there was a bit of delay as the pin’s voltage drooped. So in the picture, you’ll see I put the switches to +3.3V and some pull down resistors to ground. The value shouldn’t be critical and I just grabbed some 680 ohm resistors from a nearby breadboard, but that’s way overkill. A 10K would have been smarter and even higher would probably work.
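To put numbers on "overkill", a quick Ohm's law check shows how much current each resistor choice burns while a switch is closed. This is a back-of-the-envelope sketch that ignores the FPGA pin's own (tiny) input current; `pulldown_current_ma` is just an illustrative helper:

```python
def pulldown_current_ma(supply_volts, resistor_ohms):
    """Current through the pull down while the switch ties the pin high."""
    return supply_volts / resistor_ohms * 1000.0  # I = V / R, in milliamps

if __name__ == "__main__":
    for ohms in (680, 10_000, 100_000):
        print(f"{ohms:>7} ohm: {pulldown_current_ma(3.3, ohms):.3f} mA")
```

At 3.3V the 680 ohm resistor draws a little under 5 mA per pressed switch, while a 10K draws about a third of a milliamp.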

If it works, congratulations! You’ve configured an FPGA using Verilog. There’s still a lot of details to learn, and certainly this is one of the simplest designs ever. However, sometimes just taking that first step into a new technology is the hardest and you’ve already got that behind you.

Although you can do just about anything with an FPGA, it isn’t always the best choice for a given project. Development tends to be harder than microcontroller development (duplicating this project on an Arduino would be trivial, for example). Also, most FPGAs are pretty power hungry (although the one we used is quite low power compared to some). Where FPGAs excel is when you need lots of parallel logic executing at once instead of the serial processing inherent in a CPU.

FPGAs get used a lot in digital signal processing and other number crunching (like Bitcoin mining) because being able to do many tasks all at once is attractive in those applications. Of course, it is possible to build an entire CPU out of an FPGA, and I personally enjoy exploring unusual CPU architectures with FPGAs.

Then again, just because you can make a radio with an IC doesn’t mean there isn’t some entertainment and educational value to building a radio with transistors, tubes, or even a galena crystal. So if you want to make your next robot use an FPGA instead of a CPU, don’t let me talk you out of it.

Why is it so hard to get a good, all-encompassing tool for FPGA development? When I want to develop firmware on MSP430s, I just download Code Composer Studio from TI and I don’t need to do ANYTHING ELSE; just start debugging. This seemingly obfuscated environment surrounding FPGAs is a major reason people only approach the subject and never embrace it.

Agreed. Setting up the toolchain is a pain for a lot of uC development, but these FPGA toolchains are far worse. Of course I haven’t tried any of the super-expensive software that targets professional development. My guess is there’s no money in making FPGA development available to a wider audience. These companies are trying to land a whale that will order tens of thousands and establish a legacy need for the parts.

True and for the whales I’ll send someone out to hold your hand and/or train you.

I actually think it’s a bit stranger than that.

I think there’s money in making “decent” FPGA development available to major manufacturers. I think there’s actually disincentive to make high-performance FPGA development available *at all*. Why? Because if you do “decent” development, and end up resource or performance limited, you spend more money and use faster chips.

Imagine if Intel was the only x86 compiler out there – it’d be like them providing a “good but not great” compiler (while showing off performance from an in-house group using hand-tuned assembly).

If you look at Xilinx’s tools, for instance, they look nicely integrated, they look like they do a good job synthesizing/implementing things, and modern FPGAs are *so big* that honestly, what the tools do looks like magic.

But then go and open the FPGA editor, and look at the design carefully – and in a *lot* of cases, the tool is just monumentally stupid for what you’re trying to do: because *there’s no way to tell it* what you’re trying to do. There aren’t any attributes, or constraints, or macros available to pass that information along.

You can actually *still fix this* yourself, by implementing the FPGA directly in the editor as a hard macro, and using that hard macro. But Xilinx’s FPGA editor hasn’t been updated in forever – it’s ungodly buggy, and it takes a ton of time to do even simple things. It can’t even handle the fact that the FPGA has symmetry – so you have to build “left-hand side” macros, and “right-hand side” macros, even though you’re trying to say “use the thing closest to this other thing.”

We’re not even talking about super-advanced things here – even something as simple as “use the carry bit from the adder of a 12-bit counter as the comparator for ‘count == 0xFFF'” requires you to know a trick.
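The arithmetic behind that last example can be checked in a few lines of Python. This is only a sketch of the identity, assuming a 12-bit counter that increments by one; it says nothing about how to persuade a given vendor's tools to use the carry chain, and `increment` is a made-up helper:

```python
WIDTH = 12
MASK = (1 << WIDTH) - 1  # 0xFFF

def increment(count):
    """Return (next_count, carry_out) for a WIDTH-bit counter."""
    total = count + 1
    return total & MASK, total >> WIDTH

# The carry out of the incrementer is 1 exactly when count == 0xFFF,
# so no separate 12-bit comparator is needed to detect that value.
assert all(increment(c)[1] == int(c == MASK) for c in range(1 << WIDTH))
```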

Usually designs are limited by one or two slow paths (where there is nested logic that must complete in a single clock cycle).

As long as those critical paths meet timing, then others can be placed and routed almost randomly, as doing a super job on these paths won’t improve the performance of the overall design. In some cases it is actually better to use what looks like really bad routing, leaving the nice clean routing paths for other more critical signals.

This is why you can improve max clock speed of an FPGA design by increasing the clock speed constraint. If you build a design to run at 200 MHz you will get much tighter placing and routing than if you target 20 MHz.

“This is why you can improve max clock speed of an FPGA design by increasing the clock speed constraint.”

I would argue yes and no.

It is possible to completely confuse synthesis tools by deliberately over-constraining; they spend far too long trying to achieve the impossible (otherwise all constraints would be 1 GHz with zero area). I normally use +5-10% when developing ASIC designs because results vary. When you want 20 MHz, it’s usually a good idea to design for, say, 25 MHz so you don’t get surprises if you modify the design slightly and congestion increases (which may increase path delays), or if the tool changes and you suddenly fail your target.

However, generally, if your design will achieve 200MHz when you were only (originally) targeting 20MHz then I would argue something was wrong – either your original constraint was wrong or your design is using more registers than it needs (you have little logic between register stages).

Setting up your constraints when doing synthesis (ASICs or FPGAs) is surprisingly important and, in my experience, often overlooked until a bit too late. Correctly defining the timing constraints you need to achieve (input/output delays along with internal clock frequencies) determines what logic/primitives you should be considering and helps get the right answers (normally) out of the tool. If you care about a path achieving a specific result, make sure it’s got a suitable constraint; if you don’t care, you can always set it as a ‘false-path’.

If you achieve your constraints then why care about the placement? (don’t forget to check the report logs to make sure your constraints are applied!)
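For anyone following the margin arithmetic in this comment, here is a small Python sketch of what a frequency margin means as a clock period, which is the form timing constraints are usually expressed in. The helper names are illustrative only and do not belong to any tool:

```python
def period_ns(freq_mhz):
    """Clock period in nanoseconds for a frequency in MHz."""
    return 1000.0 / freq_mhz

def constraint_with_margin(target_mhz, margin):
    """Frequency to constrain for, given a fractional margin (0.10 = +10%)."""
    return target_mhz * (1.0 + margin)

if __name__ == "__main__":
    target = 20.0
    for mhz in (target, constraint_with_margin(target, 0.10), 25.0, 200.0):
        print(f"{mhz:6.1f} MHz -> {period_ns(mhz):6.2f} ns per cycle")
```

A 20 MHz target gives every register-to-register path 50 ns. Constraining for 25 MHz cuts that to 40 ns, and 200 MHz leaves only 5 ns, which is why an aggressive constraint forces tighter placement.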

“As long as those critical paths meet timing, then others can be placed and routed almost randomly, as doing a super job on these paths won’t improve the performance of the overall design. In some cases it is actually better to use what looks like really bad routing, leaving the nice clean routing paths for other more critical signals.”

Yes. But that’s part of the problem. The only constraint you can tell it is “you have time X to get from point A to point B.” And that’s *all it cares about*. You can’t tell it “look, this is going to be a high-toggle rate line, so I want you to put them as close together as possible, because otherwise the power consumption goes through the roof.” There’s no “do your best” constraint.

You can’t just put an arbitrarily low time constraint, and hope that it meets it. (For one thing, that screws up the algorithm that determines what’s best – it might make other portions of the design not actually *meet* the timing they need). So your best bet is to dig into the FPGA editor, and try to do it yourself using relative location constraints. Except those might not work, because the FPGA isn’t regular enough.

That being said – that’s not even all I’m talking about. There are obvious optimizations that can be made by the *synthesizer*, but never are, that you don’t see unless you stare at the actual implemented design. And there are features that are happily available in the hardware itself that you can’t even get access to without the editor, either. Some of those the manufacturers hide behind “this is closed IP, you can only use this with our designs”, but others are just not exposed, for … basically no reason.

“If you achieve your constraints then why care about the placement? (don’t forget to check the report logs to make sure your constraints are applied!)”

Power.

The actual power cost of the flipflops toggling in an FPGA is really tiny, because, well, they’re built on a super-fancy process with ultra-low voltages. If you try to push a bunch of data to a bunch of different places, the interconnect rapidly becomes the dominant power cost.

And if the most optimal placement/routing is *obvious*, you’d really, really like the stupid tools to be able to do that.

Well, the Xilinx and Altera tools appear integrated. The Lattice tools a little less so, but still not bad. IceStorm is in its infancy and requires a few projects to work together. Maybe someone will write a unifying GUI like my shell script to streamline the process.

The Xilinx and Altera tools are much better integrated and easier to install, though they are HUGE (Altera Quartus is a 1.2 GIGABYTE download). This seems outrageous but the article above kinda explains it: it might seem pretty easy to just put a LED blinker or a full adder into an FPGA, but you’re really doing a lot of the same things as what you would do to design a circuit board.

Electronics design is a difficult problem to solve, that’s why most electronics design packages such as Eagle are so expensive. The full version of Altera Quartus is also expensive, but fortunately we live in a great time where we can just go to eBay or Arrow or whatever and buy an FPGA board for a few dollars, and use free tools to play with them.

I just recently said in another post we live in the golden age of free EDA or something like that. What really kills me about the huge tools is that once I have a production design potted I really need to baseline the tools with my code since what I built with version 19.2 may not be the same result as I’m going to get with 20.3. So my repositories get very large very fast. What’s worse is you know a lot of it is probably support for things you don’t care about. I guess if you installed very minimally, but I usually install anything I think I might need and then wind up archiving all of it.

Quite right. One problem is getting a minimal install to work properly. We just run it in a VM box and archive the whole drive for every project. That way you can reinstate the virtual machine and off you go.

As a rule we do not update the FPGA tools during a project. You start with one, stick with it till you are done, and archive the lot.

This right here is exactly why the entire concept of “shared libraries” is worthy of reconsideration at this point IMHO. When it is simpler to virtualize an entire computer than it is to resolve dependency version problems it raises the question: “is the juice worth the squeeze?”

Do we in development actually get sufficient value from shared libraries to justify the dependency rats nest it creates?

FPGA tools are doing much more complicated work than C/C++ compilers.

You are right. But the real problem for the FOSS community is not the synthesizer complexity, but the lack of documentation from manufacturers. If they would release low level documentation for their parts, I’m sure there would be a lot better FOSS toolchains.

I doubt it. The market for these tools is much smaller than for C/C++ compilers, and the implementation much more complicated. It would take a huge investment for little return.

That doesn’t mean there aren’t people willing to make this kind of thing. In fact, huge investments with little material return is what open source runs on. Unless you’re talking about it being a huge investment for the company, in which case, it would take little to no effort to release documentation they already have.

I think part of it is to do with the fact that the software is so valuable. In many ways the synthesis and place and route algorithms are remarkable (far more so than an optimising compiler, afaik) and the devs simply don’t want to let it go easily! You end up with complex software with DRM for something they give away for free, which is some very advanced tech, and you end up with a picky tool chain.

I suspect developing good synthesis and p&r software is far more expensive and time consuming than dev & fab of the IC.

Yes, a tgz file is a gzipped tar file. You seem to be very inexperienced for ranting about something so obviously correct.

Sorry, I accidentally submitted; the sarcastic part was about to come:

… obviously correct. Now, packing an executable into a gzipped file is obviously correct, too. Now, it’s not as correct as Xilinx packaging 10 (no joke) 10 Java Runtime executables with their Vivado suite, but still very correct.

Asking for eth0’s MAC address is industry standard. Matlab does it, Xilinx does it, seemingly Lattice does it, so there’s nothing wrong with it. I also wouldn’t complain if I were you: this means free floating licenses, since writing a script that moves the “real” eth0, if there is one on your system, out of the way and builds an eth0 with an easy-to-remember 00:DE:AD:BE:EF:00 MAC address will give you such an Uber-License.

Then the industry standard is wrong. Plain and simple. Especially with the modern versions of udev:

    RC=0 stuartl@vk4msl-mb ~ $ ip link show
    1: lo: mtu 65536 qdisc noqueue state UNKNOWN mode DEFAULT group default
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
    2: enp0s10: mtu 1500 qdisc pfifo_fast state DOWN mode DEFAULT group default qlen 1000
        link/ether 00:23:32:ce:05:08 brd ff:ff:ff:ff:ff:ff
    3: sit0@NONE: mtu 1480 qdisc noop state DOWN mode DEFAULT group default
        link/sit 0.0.0.0 brd 0.0.0.0
    4: wlan0: mtu 1280 qdisc mq state UP mode DORMANT group default qlen 1000
        link/ether 00:23:6c:83:b6:b7 brd ff:ff:ff:ff:ff:ff

Ohh look, ma, no eth0.

Years ago I recall confronting Altera Quartus, and could literally not make it work. I remember jumping through all their hoops that assumed you worked for some company (this was for a University), getting the tools (for Windows) installing them on a Windows XP machine, and trying to nut it out.

I gave up in the end, just couldn’t make it work.

Actually, I’ve used Unix since the 7th edition. The problem is it wasn’t a tgz file. It was an executable gzipped. No tar at all.

And yes, I’ve used node locked licenses before and I did make a fake eth0 although I like the dead beef MAC address. I usually use AA:55:AA:55:AA….

The tgz extension when there is no tar file anywhere in the chain is incorrect. I did file a ticket with Lattice, so maybe they’ll fix it.

A “tgz” file extension implies “Tar GZip”. The file was not a TAR but an executable, so should have just had a .gz extension. Not a difficult problem to solve but still annoying.

Agree and it was solved. It was the combination of hurdles that killed me. I’d have probably kept going through any one or two of them.

Hi all,

So I bought one.

Maybe it will be useful?

John

I’ll have to dig out my notes, but the best way I’ve found to install the Lattice Tools (at least for Debian based systems) is to use alien and convert the RPM to a deb. IIRC, all you need to do is run it with the --scripts option and then the deb should install normally.

Yeah I started to use alien for Diamond although that didn’t help the bogus tgz file. I was out of patience and thought it would be cool to use the open source tools anyway. If you have notes on the whole install, consider posting them on hackaday.io for posterity!

Great article! Too bad you had so much trouble with the tools.

For those who want to get serious with FPGA’s, I recommend looking around for other FPGA evaluation boards too. If you’re willing to spend $50 for e.g. a BeMicroCV, $80 for a DE0-Nano or $150 for a BeMicroCVA9 you will get a much more capable FPGA (those are all Altera). In the Xilinx camp, there are evaluation boards such as the Pipistrello ($160) and the Nexys ($270). And if you’re a student, you can get crazy discounts on some of these.

===Jac

I agree the iCEstick is a gateway drug. I have found the Digilent boards good for Xilinx and, as you say, crazy discounts for students (which I sadly haven’t qualified for in a number of years). When getting a board, though, it pays to look at what you want to do with it if you are laying down a few hundred bucks. For example, some of the boards have DRAM and mass storage that are good for embedding a processor but maybe harder to work with if you just want to do simple logic (unless, of course, you just ignore it). Other boards will have static RAM and simple peripherals. For example, the Digilent Spartan 3 board is cheap (and, sadly, I think no longer available) and falls into the latter class. I have several of them and while the S3 isn’t the latest game in town it is cheap and as you say way more capable than this. The CMOD S6 has a newer Spartan 6 on it, costs about 3X what the icestick does, and doesn’t have much of any extras, but would be another good choice for a “logic” starter board.

Well, given the community here, I will say that there’s a lot of merit in actually building a low-end FPGA board yourself. If you’re willing to spend $50-$100, you can spin a small TQFP package FPGA board yourself for that much. Heck, I have one (with JTAG programmer + USB comms all in one package, woo hoo!) that I really should put up on Hackaday.io, now that I think about it…

FPGAs and hardware are fairly integrated, so a lot of times coming at it *just* from an evaluation board misses a lot of the actual capabilities of the device. And with 4-layer boards so cheap now…

If you know one end of a soldering iron from the other a great starting board is the Papilio One 250 (US$40) – it is a little long in the tooth (Spartan 3E), but very well documented and hardware hacker-friendly. Makes a great first board.

Yes that is a good board. Avnet had a 3e board with goodies like a psoc but probably out of production by now.

If you want to try a cheap, open source CPLD board instead:

https://www.tindie.com/products/area0x33/areacpld/

If your design fits on a CPLD it’ll be much much easier (and cheaper) to use it on a project than a FPGA.

Do you have an example of a project that can be completed with this hardware? It’s not immediately obvious, to me, what “80 logic elements” means in terms of potential. I have a good grasp of RAM & FLASH parameters of microcontrollers and how complex a design can be done with, say 256B of RAM vs 32KB, etc…

How many 32-bit timers might I be able to implement with this hardware? Obviously, there isn’t enough IO for parallel interfaces on more than 2 (2×32=64 IO just to output the count and there are 79 IO), so some means to get the values serially is necessary. To me, 80 “logic elements” doesn’t sound like much, but I really don’t know what that is quantifying in terms of circuit design.

Have a look at the datasheet (search for 5M1270ZF256C4.pdf) for exact definitions, but on a quick read looks like each LE can either be a one-bit full adder, and/or perform a boolean operation on any four variables, and/or act as a 1-bit register.

So 80 LEs should be enough for two 32 bit timers and a bit of control logic.
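The estimate above can be written out as a tiny Python calculation. It assumes, following the reading of the datasheet in this comment, that one LE can provide both the adder bit and the register bit for one bit of a counter; real fitting results will differ once control logic and routing are involved. `counter_le_budget` is an illustrative name:

```python
def counter_le_budget(total_les, counters, width):
    """Return (LEs used, LEs left), assuming one LE per counter bit."""
    used = counters * width
    return used, total_les - used

if __name__ == "__main__":
    used, left = counter_le_budget(total_les=80, counters=2, width=32)
    print(f"two 32-bit timers: {used} LEs used, {left} left for control logic")
```

Under that assumption two 32-bit timers take 64 of the 80 LEs, leaving 16 for everything else, so a third 32-bit timer would not fit.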

Lattice Diamond is not used to program iCE40 FPGAs. You want iCEcube2 – also a free download from LatticeSemi. And programming the FPGA is not complex, you just program a SPI FLASH through a FTDI USB controller IC. Lots of tools available for doing this on Linux. It is the most common setup I’ve observed on third party / open source developer boards.

I did get iCEcube2 installed (it was the one with the bad tgz extension). But unless I did something wrong, when I wanted to actually burn the flash, it directed me to download another tool–that was the one in the RPM file. In addition to the flash you can program the onboard NV, but that’s a one time deal so that’s probably not a good idea on this board.

This is an interesting article but I think there are much better alternative starter boards. The education boards from Digilent (I have no affiliation with them) use the free version of the Xilinx software (called Webpack) and have a lot more connections and builtin peripherals on board. They start at $89 ($69 educational discount) for a 100K gate board. They even have a lot of free project info and some cheap books of projects too. This is what I started with and use to teach FPGA basics and hacking. I would also point out that there are cheap Altera boards that work with the free version of their software. Both of these allow you to create bitstreams for the FPGAs in Verilog or VHDL and program the boards via USB. Both work on Windows and Linux too.

No doubt there are a lot of options. This is a neat and cheap package, though, and I think it will help people make the leap and then they may choose to move on to bigger boards.

I’ve just started getting into FPGA programming, with my journey looking somewhat like: QBasic/VB -> MFC -> Qt -> GCC -> MSP430 -> Xilinx.

Admittedly the tools are daunting, especially not coming from an EE background. I’ve written very little VHDL, however Xilinx has block diagrams to stitch components and signals together, provided tons of cores, and also allows usage of High-Level-Synthesis through C code and pragmas. From what I hear from colleagues doing FPGA design 10 years ago, the tools have improved tremendously.

Great work! I was searching for free (as in freedom) FPGA tools some months ago, but ended up using the Xilinx toolchain, because there is no free synthesizer for VHDL (only Verilog and only for Lattice parts AFAIK). There is a VHDL simulator though…

Consider Terasic DE0-Nano. Available from Digikey for $87. Comes with Altera Quartus, web edition, on disk. Has on-board programmer, ram, etc. Have personally used it with Beaglebone. Makes a powerful system.

Hi,

Perhaps a little bit off topic here, but can somebody explain the difference between Verilog and VHDL in the practical sense of this demo?

Do these tools also support VHDL? What would the same demo look like in VHDL?

Kristoff

Just two different Hardware Description Languages. VHDL is based on Ada and Verilog is (kind of) similar to C in syntax. They are both languages that describe hardware (and have some other abilities when simulating hardware). VHDL is a bigger language with some more powerful features, but it tends to be more verbose and “strict” (which can be a good or bad thing depending on your perspective). Both languages can do pretty much the same things in describing hardware (and a design can be re-written in VHDL or Verilog). Verilog tends to be more popular in the US and VHDL elsewhere (but the US DOD uses VHDL and they are both commonly used).

For comparison, here is a (semi-random) link to a similar LED blinker written in VHDL http://www.armadeus.com/wiki/index.php?title=Simple_blinking_LED
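To read against the VHDL at that link, a bare-bones Verilog blinker might look like the sketch below. This is not the code from the article; the module name, port names, and the 24-bit divider width are assumptions chosen for illustration. Assuming a 12 MHz board clock, the LED period would be 2^24 clock cycles, or roughly 1.4 seconds.

```verilog
// Hypothetical sketch of an LED blinker; names and WIDTH are illustrative.
module blinker #(
    parameter WIDTH = 24            // assumed divider width; LED period = 2^WIDTH clocks
) (
    input  wire clk,                // board clock
    output wire led                 // drives one LED
);
    // Free-running counter; the initial value assumes the toolchain
    // supports register initialization at power-up.
    reg [WIDTH-1:0] counter = 0;

    always @(posedge clk)
        counter <= counter + 1'b1;

    // The top counter bit toggles every 2^(WIDTH-1) clocks.
    assign led = counter[WIDTH-1];
endmodule
```

The VHDL version describes the same hardware (a counter and a tap on its top bit), just with explicit library, entity, and architecture declarations around it.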

I’d argue Verilog used to be more popular in the US and VHDL elsewhere, but I believe Verilog has since gained broader usage with the rise of fabless companies producing synthesisable designs, most of which are Verilog-based (and a lot of the larger companies, such as ARM and Samsung, are based outside the US).

Also, VHDL used to be the bigger language but since the advent of Verilog-2001 and SystemVerilog, the tide has turned a bit (all the major synthesis tools support those three languages and even allow mixed-language simulation and synthesis – even in their free licenses).

Xilinx only partially supports SystemVerilog in Vivado, and that only works for 7-series and newer devices. Spartan-3s, Spartan-6s, etc. all have to use ISE, which doesn’t support SystemVerilog at all (which *sucks*, because all I really want are interfaces, and they’re not much more than syntax sugar).

They do support mixed-language simulation/synthesis, so you can get some SystemVerilog-like behavior (like assertions) by using VHDL helper modules to generate the assertions. It’s ugly, but it works. Interfaces, however, need complicated macros to replicate their behavior, since complex types can’t be passed around.

What’s an FPGA?

I wrote a tutorial for beginners who want to learn FPGA: https://eliademy.com/catalog/basic-of-the-cx-language.html I hope it will help :-)

I followed the same steps you did at the beginning and then used alien to install the RPM package for the programmer. The software all seems to work. I just ordered a dev kit, so I haven’t been able to actually test the tools yet. I was interested in the iCE40 because of how low-power it is. I was thinking of combining it with a low-power Cortex-M4 micro to see if it could be used on some kind of IoT wearable platform in the future.