One of the nicest amenities of interpreted programming languages is that you can test out the code that you’re developing in a shell, one line at a time, and see the results instantly. No matter how quickly your write-compile-flash cycle has gotten on the microcontroller of your choice, it’s still less fun than writing blink_led() and having it do so right then and there. Why don’t we have that experience yet?

If you’ve used any modern scripting language on your big computer, it comes with a shell, a read-eval-print loop (REPL) in which you can interactively try out your code just about as fast as you can type it. It’s great for interactive or exploratory programming, and it’s great for newbies who can test and learn things step by step. A good REPL lets you test out your ideas line by line, essentially running a little test of your code every time you hit enter.

The obvious tradeoff for ease of development is speed. Compiled languages are almost always faster, and this is especially relevant in the constrained world of microcontrollers. Or maybe it used to be. I learned to program in an interpreted language — BASIC — on computers that were not much more powerful than a $5 microcontroller these days, and there’s a BASIC for most every micro out there. I write in Forth, which is faster and less resource intensive than BASIC, and has a very comprehensive REPL, but is admittedly an acquired taste. MicroPython has been ported over to a number of micros, and is probably a lot more familiar.

But still, developing MicroPython for your microcontroller isn’t developing on your microcontroller, and if you follow any of the guides out there, you’ll end up editing a file on your computer, uploading it to the microcontroller, and running it from within the REPL. This creates a flow that’s just about as awkward as the write-compile-flash cycle of C.

What’s missing? A good editor (or IDE?) running on the microcontroller that would let you do both your exploratory coding and record its history into a more permanent form. Imagine, for instance, a web-based MicroPython IDE served off of an ESP32, which provided both a shell for experiments and a way to copy the line you just typed into the shell into the file you’re working on. We’re very close to this being a viable idea, and it would reduce the introductory hurdles for newbies to almost nothing, while letting experienced programmers play.
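To make the "shell plus a way to keep the line you just typed" idea concrete, here is a minimal sketch in plain Python. It is not taken from any existing MicroPython IDE; the class name `RecordingShell` and its methods are made up for illustration, and a real on-device version would write to a file on the board's filesystem rather than to a list.

```python
class RecordingShell:
    """Hypothetical shell: run lines one at a time, keep the ones you like."""

    def __init__(self):
        self.namespace = {}    # variables defined at the prompt live here
        self.last_line = None  # most recent line that ran without error
        self.program = []      # lines promoted into the "file" being written

    def run(self, line):
        """Evaluate one line; remember it only if it ran cleanly."""
        try:
            # "single" mode echoes expression results, like a real REPL
            exec(compile(line, "<shell>", "single"), self.namespace)
        except Exception as err:
            print("error:", err)
            self.last_line = None
            return False
        self.last_line = line
        return True

    def keep(self):
        """Copy the last successful line into the program being built."""
        if self.last_line is not None:
            self.program.append(self.last_line)

    def source(self):
        """Return the kept lines as source text."""
        return "\n".join(self.program) + "\n"


shell = RecordingShell()
shell.run("x = 21")
shell.keep()            # worth keeping
shell.run("x * 2")      # just an experiment, not kept
shell.run("y = x +")    # syntax error, nothing to keep
shell.keep()
print(shell.source())
```

The design choice here is that only a line that ran without error can be promoted, so the saved program is built from code that has already been tried at the prompt.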

Or has someone done this already? Why isn’t an interpreted introduction to microcontrollers the standard?

“… a web-based MicroPython IDE served off of an ESP32 …” that’s a shell of a great idea!

Great article as always, Elliot. Thank you.

MicroPython has WebREPL that works with ESP32/8266 once you get it online over WiFi. https://micropython.org/webrepl/

But it’s of the get-a-file, edit locally, send-a-file type, which adds that extra step that’s fundamentally similar to flashing.

I guess I want something like the old BASIC experience, where the shell is the editor, but with a better editor, and running on the micro.

Elliot,

Thank you for initiating such an interesting discussion.

Clearly there is not a “one size fits all” solution.

Some coders seek the smallest, fastest solution that runs on the bare metal.

Others are looking for a familiar “retrocomputing” environment, where the editor and language are self hosted.

Many programmers are processor-agnostic and put their faith in commercial tool-chains to get the job done.

Between these broad camps, there are bound to be numerous splinter groups.

I fall somewhere between the first 2 camps. I like the challenge of assembly language, I have a soft spot for resource limited machines, I like the idea of self-hosted environments, and I have dabbled with Forth over the last 40 years.

The 8-bit machines of the early 1980s were designed to provide a self-hosted environment that would create a video signal suitable for a TV or monitor. They were cost engineered, and in the early machines, the overhead of producing a video display clearly consumed the majority of CPU cycles.

Today’s microcontrollers are tens if not hundreds of times faster than the early 8-bitters and have many times their address space, and the need to directly generate a video signal is not often a priority, apart perhaps from the retrocomputing scene. The serial UART interface is almost ubiquitous even on the smallest of microcontrollers.

Whilst most MCUs have a Harvard architecture, a simple virtual machine that executes user code out of RAM provides a ready solution for a self-hosting environment.

Other advances such as uSD Flash, FRAM (MSP430 series) and NVSRAM provide economical ways to store the user’s source code, either on the MCU or local to it on the PCB.

I’m with you on that, but it means you need a microcontroller that can handle a display. The STM32F429ZIT6 can do that. This is the chip used on their STM32F429I-DISC1 Discovery board, which includes a 320×240 TFT LCD. Single core, though, which means you may end up with something like the Sinclair ZX80, which couldn’t display anything on your monitor while running a program. But I guess you just use an I2C display instead, which just means you have to write some terminal code to talk to the MicroPython (or whatever) REPL.

Today’s microcontrollers are far more powerful than the PDP-11s or early PCs many of us programmed on. And those systems had text editors for writing programs. There’s no reason an embedded system can’t have that. You wouldn’t ship a final product with it, but for development or just hobby work, it makes sense.

So, Edlin for Arduino? (I’m only kidding! Please lower those torches and pitchforks!)

Not Edlin. TECO. :-)

Yes, I’d go with teco. Back in the 80s I wrote a teco script that parsed a metalanguage into perfect fortran, saved me hundreds of thousands of keystrokes while writing that application.

Sounds like mortran (used for the EGS Monte Carlo Code).

FIGnition had a full-screen scrolling text editor in about 1Kb.

Microcontrollers in general are limited when it comes to self-programming and memory mapping. They can’t do some things microprocessors can…

Harvard architecture seems somewhat problematic in this regard.

That’s really the bottom line, which makes interpreted languages the only option for untethered program development.

But “interpreted” covers a wide range. If you put a really efficient virtual machine in the Flash, you can “execute” compiled vm code from RAM.

Most uCs are more potent than an Atari 520ST which had even Turbo C. So it is surely possible to have an IDE running on a uC. It only needs to be done 😏

I assume you only compare the raw processing power. Such all-in-one-computers have a lot of peripherals, that you won’t have on a normal microcontroller. No graphics card for one.

Most of those old systems could simply output to the screen by writing to a special memory location. Here you would have to implement some remote console system first. That’s a lot more overhead.

You are comparing two completely different things. Old 8-bit microcomputers used microprocessors which could, and often had to, execute programs from RAM. Actually they had address and data buses and some control signals and didn’t care if those buses were connected to RAM, ROM or a set of special function registers. Microcontrollers can’t do that. The code can be executed only from internal flash memory, RAM can only hold data, and without a memory management unit it’s hard to expand them. 8-bit micros suffer the most from this limitation, as 16- and 32-bit ones usually are better-suited for self-programming. Check out the Maximite project…

There is no problem with that on modern Cortex-M microcontrollers, because they contain a memory controller that allows you to execute code from internal SRAM, FLASH or even from external devices that can communicate over SPI, without any problem or any boilerplate code.

It’s also not a problem with CortexMs b/c they can write to flash from within your program/shell. I do it all the time in Mecrisp Forth, for instance.

Play around with the function in RAM for a while, then write to flash when I’m relatively sure it’s done. Overwriting flash segments is possible, but dangerous. With the sizes of modern flash, though, it’s not much of a concern to just keep on writing new versions and update pointers until an eventual full re-flash can “defragment” the space.

Of course, at some point, I’m still stuck copy/pasting the code over to my laptop b/c I need to keep the source somewhere. I’m not sure I see the way around this yet…

Getting started guide for ‘This is your development environment ‘ please

Yay, let’s squeeze a fat, resource-heavy hog of a scripting language onto a resource-limited micro. We can always use a bigger one, the way the same fat, resource-heavy scripting language is used on big servers to bring them to a crawl because people are too stupid to learn programming properly.

So you, as a “proper programmer” write in pure assembly?

No direct machine code native instructions

No, only amateurs use ones and zeroes — Real programmers use only zeroes (a classic Dilbert joke).

I use a butterfly to direct particles of cosmic radiation to flip the bits in flash memory of a micro. But first I program and compile in C so I have a hex file to work with…

‘Course, there’s an EMACS command to do that…

https://xkcd.com/378/

Is anyone watching emacs for signs of self-awareness? Idk, this might be something to be concerned about

IDK set up some monkeys hammering on keyboards into EMACS and if you start getting new Shakespeare plays before you’ve even got a thousand going, concern is warranted.

Just compile on the PC side and dynamically load the commands into ram, linking them against support libraries in flash dynamically. I’m sure dynamic linking could be extremely performant with a fixed and well-known set of base libraries at fixed memory locations. The average ESP32 program is mostly just a few kb plus a ton of support code for wifi and RTOS.

No need to actually interpret or compile on the MCU.

Many microcontrollers can only execute code from flash, not from RAM. (Basically anything with a Harvard architecture.)

In theory you can write a program that would move data to the flash memory of the micro; that’s how bootloaders are done. Some micros even include a separate memory space for the bootloader to facilitate self-programming via USART or USB. I’ve seen only one project where a microcontroller, after connecting to USB, appeared as a mass storage device, where a programmer could drag and drop a hex file to program it…

…and wear out the program flash on your micro in no time.

uPython ain’t so fat. It is mostly C and you can write very fast code with it and even in assembler. https://www.youtube.com/watch?v=hHec4qL00x0

And Forth is very small and plenty fast. 4K RAM and 16K PROM gave editor and disk block editor on 6502. Today that “file system” will be even simpler with Flash.

Almost entirely, I’d agree, but a disk block editor on Flash needs a Flash Translation Layer.

You would know :-) I still have an AIM-65 and the nice Forth book that Rockwell included with the Forth ROM ends with a “complete disk controller” in 1 block of Forth code (1K of text for the rest of you). It implements the usual 3 blocks or screens in RAM. I think it was to interface to Shugart standard drives.

This is why the author says he programs in Forth, because a Forth development environment can be squeezed into just a few Kb. FIGnition, for example, was/is an AVR-based self-hosted Forth SBC, with composite video out and a semi-chordal keyboard for programming. Its entire OS + Language + editor + USB upgrader fitted into 16Kb (external SPI RAM and external SPI Flash were used for user programs and source code storage).

Not to mention Forth in itself is the programming equivalent of psychedelics. A mind expanding, perspective shattering experience from the 1960s… :-)

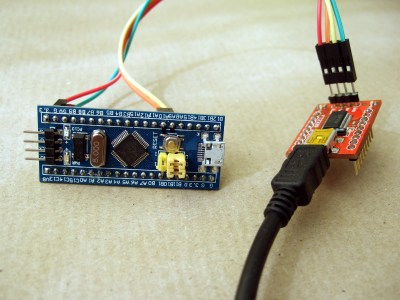

For those who may be content with a tiny BASIC there is https://github.com/Picatout/stm8_tbi

There is a REPL and programs can be saved. All is done on the board and the source text is editable. All in 80’s style. The PC is only used as a terminal. At this time it only runs on the NUCLEO-8S208RB board.

(Beware: I’m plugging my own project.)

If you are interested in a more powerful language, you can look at my adaptation of eForth (original by C.H. Ting) to stm8 and stm32

https://github.com/Picatout/stm8_eForth

https://github.com/Picatout/stm32-eforth (now on blue-pill and black-pill)

Also ESP8266 BASIC: http://www.esp8266basic.com/ which even does the web thing.

You can now go with ARM processors for your Arduinos and use J-Link programmers and debuggers to delve deeper into the hardware / software debugging processes in real time. Original Atmel Arduinos for many years didn’t allow this as they lacked the physical hardware interface although you could step through things a bit in some of their software IDEs at least.

Cortex M3 and M4 chips are running at 120 MHz with the Cortex M7 such as on the Teensy 4.1 clocking in at 600 MHz. It’s been really neat to see the impressive functionality that has been added to such devices.

What’s different about the old microcomputers is that they were ram-heavy and could load programs at run time. The modern microcontrollers are designed to run a fixed program from ROM. If you want to load programs on the fly, your choices are very limited — most 8-bitters are Harvard architecture and can’t do it at all, and the machines based on Von Neumann architectures have tiny amounts of ram compared to their ROM. The only real VN 16-bitter is the MSP430, and even then you have to use the FRAM variant to get enough RAM (I got Fuzix running on one of these once). The only reasonable single-chip architecture I’ve seen for this is the ESP8266, which still has way more flash than RAM but at least it’s a decent amount of RAM.

The bigger STM32s have plenty of RAM, especially the STM32F7xx series.

https://sites.google.com/site/annexwifi/home

“RAM heavy” eyeballs ZX-80, ZX-81, Kim-1, COSMAC Elf, VIC 20 ….

The STM32F7xx series has some parts with a lot of internal RAM.

Smaller Infineon XMC 1X00 series ARMs are RAM heavy too, with more RAM than ROM

One trick is to create a virtual Von Neumann machine on top of a Harvard architecture MCU.

This is what Marcel van Kervinck did with his Gigatron TTL computer’s vCPU and what most implementations of Forth do on modern mcus.

Marcel’s Gigatron successfully emulates 6502 instructions by this technique, which allowed him to port Microsoft BASIC to it.

It’s just one layer of abstraction on top of another. Programming becomes easier but at the expense of performance.

You first have to define a number of primitive instructions for the Virtual Machine, usually based around a 16-bit wordsize and memory space. Often fewer than 32 primitives are required.

With these primitives executed out of ROM, but the virtual machine instructions fetched from RAM, you have a machine that is more flexible and easier to program.
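As a rough illustration of that split, here is a toy stack machine in Python. It is only a sketch under my own assumptions, not the Gigatron vCPU or any particular Forth: the five primitives, their opcode numbers, and the names `VM` and `PRIMITIVES` are invented for the example. The primitive table stands in for routines fixed in ROM, while the program is just numbers in a rewritable `ram` list.

```python
MASK = 0xFFFF  # 16-bit cells, matching the word size suggested above


class VM:
    def __init__(self, ram_cells=64):
        self.ram = [0] * ram_cells  # user program lives here and can be rewritten
        self.stack = []
        self.pc = 0
        self.running = False

    def load(self, program, at=0):
        """Copy virtual-machine instructions into RAM."""
        self.ram[at:at + len(program)] = program

    def run(self, start=0):
        """Fetch opcodes from RAM, dispatch each through the fixed table."""
        self.pc = start
        self.running = True
        while self.running:
            opcode = self.ram[self.pc]
            self.pc += 1
            PRIMITIVES[opcode](self)
        return self.stack


# The primitives: on a real MCU these would be routines in flash/ROM.
def op_halt(vm):
    vm.running = False

def op_push(vm):
    vm.stack.append(vm.ram[vm.pc] & MASK)  # the next cell is a literal
    vm.pc += 1

def op_add(vm):
    b = vm.stack.pop()
    a = vm.stack.pop()
    vm.stack.append((a + b) & MASK)

def op_mul(vm):
    b = vm.stack.pop()
    a = vm.stack.pop()
    vm.stack.append((a * b) & MASK)

def op_dup(vm):
    vm.stack.append(vm.stack[-1])


PRIMITIVES = (op_halt, op_push, op_add, op_mul, op_dup)
HALT, PUSH, ADD, MUL, DUP = range(5)

vm = VM()
vm.load([PUSH, 6, DUP, MUL, PUSH, 6, ADD, HALT])  # 6 * 6 + 6
print(vm.run())  # prints [42]
```

Changing the program means writing different numbers into `ram`; the primitive table never changes, which is the property that lets the fixed part live in flash.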

What about Forth?

It’s mentioned in the article

My first thought is that any of the tiny LISPs ought to work nicely. “Write a LISP Interpreter” is a junior-year undergrad assignment in CS departments that use an ACM-like curriculum. It’s a first year assignment in SICP-based programs*, albeit written in Scheme, which is kind of like cheating. :-)

* See what I did there?

ulisp just works fine on many microcontrollers and has for many years.

It’s not terribly fast. Not going to do video over SPI using it. Then again most uC projects don’t do video over SPI so who cares if the uC is 99.99% idle with C code or merely 99% idle running ulisp.

uLisp is a nice own ecosystem with support for lots of different critters.

And where you have Lua (Whitecatboard?), you can add Fennel too (if you insist on Lisp), so I expect to see more on the LuaVM someday. Maybe someday it’ll be a “small Mono” with lots of languages being interoperable. I’ll keep an eye on it…

Forth suffers a lot from the “If you know one Forth, you know one Forth.” problem, especially when it comes to boiled down minimal versions of it. The way to think in Forth stays the same, but you’ll see different dialects a lot. You typically won’t need/meet many of them as you typically won’t play with every MCU on the market, so this diversity isn’t as bad as it sounds at first, but many docs about Forths may need to be reinterpreted for the particular dialects. Some Forths can even be self-sufficient, and that may be a huge plus!

If your patience allows, learn Forth.

If you want results fast, use Lua or Lisp.

Lua is a strong contender. Embeds well in C, has reasonably wide support.

What Lua interpreter for micros projects are out there? Any recommendations?

I only know NodeMCU, which is some strange love-child of Javascript and Lua. I.e. to blink an LED you instantiate a timer object with your blink function as a callback. Works for me, but tough on newbies who are significantly happier with “on, delay, off, delay”. And the REPL works, but I wouldn’t call it friendly.

Given that most micros today have far more processing power and memory than what us greyhair types learned coding on, there is no reason not to have a text editor and BASIC (or Python) directly hosted on the micro. No need to complicate the heck out of it with a server and web based tools.

And what about Nuttx?

Nuttx has NSH, a programmable shell:

https://v01d.github.io/incubator-nuttx/docs/components/nsh/index.html

Cool shell demo on a small screen:

https://www.youtube.com/watch?v=37idnAVtlJE

I wanted to port JTAGenum.sh to it.

About your bluepill, if you flash the right bootloader which waits enough time, you could flash the stm32 directly through USB without using another USB-serial converter.

Last time I had to patch the bootloader to wait 10s because the Arduino IDE integration would not catch the bootloader:

http://www.zoobab.com/bluepill-arduinoide

My thoughts exactly. Is ESP32s2 USB OTG working yet?

If only there was a CMOS 6502 in a breadboard friendly 40 pin DIP .. wait for it .. with a full 64K static RAM in the package. One external pin for “RAM select” to put that RAM on the bus, internal to the chip, whenever your external decoder logic wants it. One could go nutty and stick a 64K flash in there, too. Let it clock anywhere from DC to heck idk 100MHz.

I can dream can’t I?

We basically have that, it’s called an FPGA and an OpenCores account.

I’ve done my own 6502 cores in vhdl for spartan6, so that is definitely one option. I’m planning on connecting a 65c02 with a spartan6 so as to free the fpga for peripherals. (Also to remove any little worries about latent bugs in my cpu implementation). But the memory devices make for either big ugly chunks of vhdl or big ugly tangles of wire wrap wire or annoying tracks of bus traces on a pcb. My point is that memory is like AIR – you can’t do without it, so wouldn’t it be nice if, like air, you didn’t even have to think about it?

You could use a $20 600MHz Teensy 4.0 to simulate the 6502, or virtually any cpu and memory sub-system from that era.

Use a Teensy 4.1 and you get a uSD card socket, USB host for a keyboard, and Ethernet.

Not 5V compatible, but it’s a cheap way to play with old processors.

Yeah .. if I were banging around with a Teensy I’d probably try something more ambitious than turning it into a 6502 simulation. Layers of abstraction ARE amusing, in an academic sort of way, but when the urge to play around with old processors strikes, simulators don’t really scratch that itch. Which is probably why I have a 65c02 on a breadboard right now. (And being CMOS, 3.3V logic shouldn’t be a problem)

This looks useful: https://github.com/robinhedwards/ArduinoBASIC

This exists, micropython has a REPL on the serial port and via websockets (webrepl). Works very well on the ESP microcontrollers!

https://www.espruino.com has a nice JavaScript REPL (and IDE, libs). Works surprisingly well and was very helpful in getting started. You can flash it on practically any old esp8266 AFAIR.

Then there is http://www.ulisp.com/ which I keep wanting to try.

There is Espruino espruino.com with WebIDE https://www.espruino.com/ide/ , you can develop and debug javascript directly on microcontroller (over usb, serial or BLE). Also it is event based so you still have REPL console available while your code runs.

Runs best on nrf52 and stm32 boards. Also esp32 and esp8266.

Indeed: Espruino is very useful to quickly test out / mess with stuff already ‘flashed’ to the uC. Loading ‘modules’ is also very easy ( I used to use it a lot, on different uCs & to implement HID stuff ), and if needing some more speed, stuff can be done using ‘inline C’ ( or even adding C/C++ fcns to the interpreter & other stuff ), but I didn’t dig into that yet ;)

It also runs on couple of hackable nrf52 based smartwatches (apart from official Bangle.js one of course), currently best ones to try are P8 and DK08 ($16, $12 on aliexpress), as for inline C I have even implemented whole display driver in it – P8 details here https://github.com/fanoush/ds-d6/tree/master/espruino/DFU/P8 or see what others did on top of that https://github.com/enaon/eucWatch https://github.com/jeffmer/DK08Apps

Cool. I’ll absolutely have to check Espruino out. I’ve known about it forever, but shied away b/c of my distaste for JS, which is probably stupid on my part. :)

I have a handful of Arduino Nano clones with an ‘FF’ label on them, meaning I loaded them with Fast Forth, and a bunch of STM32 and ESP32 boards labelled ‘uP’ to mean MicroPython is installed. MicroPython has WebREPL that works with ESP32/8266 once you get it online over wifi. https://micropython.org/webrepl/

But yes. There is much to be desired and much that is frustrating. It is all still much harder than using a good Forth on a 6502 in 1980. I had an AIM-65 with FIG-Forth and a PROM burner to save code that was easier to use. In fact at the moment I am a bit stuck trying to use VSCode and a terminal program to work with MicroPython and STM32 in a reasonably painless way. (I’m not a VSCode guy, so that might be why, but all the tutorial type stuff out there stops short of being useful.)

hi!

AFAIR with LUA and the esp8266 (ye olde nodemcu) you can program via web, save, run and even edit and set to run on startup modifying a base file.

https://www.instructables.com/NodeMCU-WebIDE/ as example

The trouble is “Yay, let’s squeeze a fat, resource-heavy hog of a scripting language” – that most of the languages mentioned above ARE fat and bloaty for a microcontroller.. Python, javascript, lua, are all way more than you need.. Forth I get, but I just hate the language…

RPN takes getting used to.

FIGnition was / is an AVR-based Forth SBC where code was developed on the device (using an 8-key chordal keyboard) and where output went to composite video. Its firmware contained its OS + editor + Forth, and the board also carried 8Kb (or 32Kb) of SPI SRAM + 0.4Mb (or 0.9Mb) of SPI Flash storage (with a Flash Translation Layer) for saving source code. So, this kind of thing does happen.

1000 FIGnitions were sold between 2011 and 2014.

https://sites.google.com/site/libby8dev/fignition

There have been at least 3 fairly recent projects to show that a minimal interpreter “shell” can be squeezed into resource limited mcus – such as ATmega, MSP430, ARM M0 etc.

One was inspired by the Hackaday “1024 byte coding challenge” a few years ago.

They set up a minimalist serial interface, and often use single ascii characters to represent a series of bytecode instructions. A bytecode interpreter can be as simple as a look up table contained within a while loop. It uses the ascii value of the input character to index into the table where it finds the starting address of the routine to be executed. On an MSP430, this can be implemented in about 300 bytes of code.

If the interpreter encounters numerical characters, it translates them into a 16 bit integer and places that on the stack. It’s a bit like Forth, but lacks the dictionary and the complexity of command parsing to do a dictionary search.

These shell interpreters are easy to write and quick to use. I have used them for sending positioning commands to experimental X-Y positioning systems.

For example the serial input 100 X 200 Y would be interpreted to move the carriage 100 steps (or mm) in the X direction and 200 steps in the Y direction. Or these can be used as absolute co-ordinates relative to some origin. These commands can be put into a text file and sent to the mcu one line at a time using a terminal programme. A bit like G-Code.
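Here is roughly what that loop looks like, sketched in Python rather than C or assembly so it is easy to try on a desktop. This is not the SIMPL source: the `Carriage` class, the `TABLE` layout, and the choice to treat `X` and `Y` as relative moves are assumptions made for the example.

```python
class Carriage:
    """Hypothetical X-Y stage: a data stack plus a position."""

    def __init__(self):
        self.stack = []
        self.x = 0
        self.y = 0


def move_x(c):
    c.x += c.stack.pop()  # relative move; absolute would assign instead


def move_y(c):
    c.y += c.stack.pop()


# One slot per 7-bit ASCII code; None means "no instruction, skip it".
TABLE = [None] * 128
TABLE[ord("X")] = move_x
TABLE[ord("Y")] = move_y


def interpret(line, carriage):
    """Run one line of single-character commands against the carriage."""
    i = 0
    while i < len(line):
        ch = line[i]
        if "0" <= ch <= "9":
            # Gather a run of digits into one 16-bit integer and push it.
            value = 0
            while i < len(line) and "0" <= line[i] <= "9":
                value = (value * 10 + ord(line[i]) - ord("0")) & 0xFFFF
                i += 1
            carriage.stack.append(value)
            continue
        code = ord(ch)
        handler = TABLE[code] if code < 128 else None
        if handler is not None:
            handler(carriage)  # the character's code indexes the table
        i += 1
    return carriage


stage = interpret("100 X 200 Y", Carriage())
print(stage.x, stage.y)  # prints 100 200
```

Adding an instruction means filling one more slot in `TABLE`, which is what keeps the core loop so small. Spaces have no table entry, so they simply separate numbers.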

With 96 printable ascii characters to use as “instructions” you have a lot of scope for creating a micro-language that is uniquely tailored to your own application. The language can be made flexible, extensible and self-hosting in under 2Kbytes of ROM. You do not need vast RAM resources, I have run with only 512bytes on an MSP430.

I have implemented it on ATmega, MSP430, ARM M0, M4, M7 and custom DIY cpus. I often use it as a start-up language to prove that the hardware is functional.

The source code can be written in C, but for further compaction re-writing in assembly language is preferable.

More information here: https://github.com/monsonite/SIMPL

Thank you. Your post is very clear, and thought provoking in a good way. It is comments like yours that make Hackaday ten times better.

Miroslav – thank you for your reply.

My current interests lie in creating extremely simple cpus, with very limited resources.

My inspirations are the very early microprocessors (8080, 6502) and the minicomputers that preceded them, such as the PDP-8 and PDP-11.

Unlike in the 1960s and 1970s, when these machines ran at about 1MHz, these architectures can now run at over 100MHz when implemented in modern FPGAs.

With any new mcu, I try to implement the SIMPL serial shell. This gives me a familiar working environment with which to explore the new architecture.

The SIMPL shell can be implemented in C, but where no C compiler exists, it can be quickly coded in assembly language because it only has about 6 basic routines.

Porting SIMPL to a new processor is a great way to learn its ISA!

SIMPL is an extendable toolkit for exploring new architectures and it is easily adapted to suit whatever application.

I consider it to be a more human-friendly language, one level above assembly, but without the extra complexity needed by Forth.

Much of my work this year has involved simulation of old cpus using a 600MHz Teensy 4.

Fun! Will have to flash this around.

I’m currently playing with mmBasic on the Colour Maximite 2, featured on Hackaday back in June 2020: https://hackaday.com/2020/06/23/boot-to-basic-box-packs-a-killer-graphics-engine/

This is based on the STM32H743IIT6 32-bit RISC-core microcontroller with embedded graphics accelerator that executes something like 500,000 mmBasic instructions per second. This is orders of magnitude beyond my first BASIC experience on an HP2100-series minicomputer, and very, very powerful. It has a built-in editor / IDE. Other mmBasic hosts include more “industrial” versions of hardware, as well as a “hosted” version on Windows or Linux.

There is a very active and convivial developer / user community on “The BackShed Forum”

Not the same, for sure, as an Arduino (or squeezing Arduino code into an ATTiny), which does require a separate IDE, but the appropriate tool for the appropriate challenge, right?

Having written significant image processing code in Forth, I, too, can attest to its speed. Yet, the language should be called “Backth” because of its RPN form of calculations. That said, I can also unequivocally state that someone that writes in Forth has a twisted mind.

Keep up the good work.

Funny? I have no urge to take over the world.

You’re looking for a Raspberry Pi. I don’t want an OS on my uC. I want it to start instantly and do one thing. Platform.io is a great idea and if you want experimental and productive, check out Thonny.

The notion that BASIC is “interpreted” is wrong, at least as far as MS-BASIC is concerned…it is actually converted to what is known as “p-code” aka pseudo code. Yes, it’s not pure machine code, but the runtime engine is not parsing out the text that you type in…MS-BASIC is a table-driven language; the more one studies it, the better one understands it…what you see on the screen when you type “list” is decoded from the p-code.
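One simple form of this idea, keyword tokenising, can be sketched in a few lines of Python. The token values below are made up for illustration and are not the real MS-BASIC token table; the sketch also assumes plain ASCII source so that bytes from 0x80 up are free to stand for keywords.

```python
# Hypothetical keyword table; real BASICs have their own token values.
TOKENS = {"PRINT": 0x80, "GOTO": 0x81, "IF": 0x82, "THEN": 0x83}
KEYWORDS = {token: word for word, token in TOKENS.items()}


def tokenize(line):
    """Store a typed line with each keyword squeezed into one byte."""
    out = bytearray()
    i = 0
    in_string = False
    while i < len(line):
        ch = line[i]
        if ch == '"':
            in_string = not in_string
        if not in_string:
            for word, token in TOKENS.items():
                if line.startswith(word, i):
                    out.append(token)
                    i += len(word)
                    break
            else:
                out.append(ord(ch))  # not a keyword, store as-is
                i += 1
        else:
            out.append(ord(ch))      # text inside quotes is never tokenised
            i += 1
    return bytes(out)


def detokenize(data):
    """What LIST would do: expand token bytes back into keywords."""
    return "".join(KEYWORDS.get(b, chr(b)) for b in data)


line = '10 IF A THEN PRINT "GOTO"'
stored = tokenize(line)
print(len(line), len(stored))
print(detokenize(stored) == line)
```

The stored form is shorter than the typed line, and the runtime can dispatch on a single byte instead of matching keyword text each time the line runs.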

I noticed how fast people could develop an app with Micropython badges at SHA2017.

Way faster than with other tools.

Yup! Had this experience too, at different events. Twice with BASIC (Hackaday Belgrade 2018, Supercon 2018), and more with Python — basically all of the badge.team badges, and CCCamp last summer.

The hands-on immediacy of a shell onboard seems to really matter. Not only for, but especially for, newbies.

But with the Python REPL, there was still the whole edit-save-upload-run cycle between laptop and device that I felt got in the way of the “purest” experience. You may say I’m a dreamer…

… but can’t the save/upload/run step be automated or scripted away to a one-key ‘test’ step?

Yes, certainly. At least, that’s how it can be done on “real”, i.e., von Neumann architecture computers. With Harvard architectures, you can only run compiled code from the flash memory, which in microcontrollers has a limited number of writes possible. So this would be an option for ARM but not AVR CPUs.

http://www.bipes.net.br tries to solve such problems. It relies on a board with micropython. You have an interactive shell with WebREPL, can send and manage scripts on the board, and even edit, run, adjust and run programs again. Moreover, no software install is needed. Just a board with standard python or micropython and bipes!

Yeah! That’s looking like what I was thinking. I’ll have to dig in further.

I got those ESP32 boards (LOLIN32 lite), they came preflashed with micropython:

https://diyprojects.io/unpacking-wemos-esp32-lolin32-lite-testing-firmware-micropython-raspberry-pi-3/

For 4EUR shipped.

But I think they discontinued that model; now it is a few EUR more expensive.

For everybody in the “interpreted code is easier” camp, I have this: XDS Sigma 7 BASIC from around 1970. This was a BASIC compiler with built-in editor. You edited as usual, then typed “run”. Anytime it encountered a “stop” instruction, it would bounce back into the editor/shell, which would allow you to type in BASIC commands, which it would compile and execute immediately. You could type “continue” to resume execution. If you typed “run” with no program in memory, you could type commands (without line numbers), and they would be executed immediately as well. Starting a line with a number returned it to editor mode. It was quite similar to the environment for Python and other modern interpreters, but it ran your programs at compiled speeds.

I repeat, this was in 1970.

On my list of Things To Do When I Get Around To It is a Logo for microcontrollers. My kid is into a graphic novel series called “Secret Coders” which teaches Logo, and, while there are online Logo interpreters, it would be awesome to bridge to hardware hacking as well. MicroPython is great and all, but I’m a functional programmer at heart.

Same with lisp

The language does not need to be of the “interpreted language” family to have a REPL (read-eval-print loop, aka “interpreter”). Most compiled functional languages, like Haskell, OCaml, Scala, do have a REPL. This is a misconception, like the one that says if a language is typed, you have to make the code more verbose by spelling out the types. A lot of languages have forward type inference now, but ML-style functional languages (SML, OCaml, Haskell) have bidirectional (Hindley-Milner) type inference, which allows you to not spell out any types at all (most of the time).

Source: I am a programming language researcher.
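To illustrate the point that a REPL is just read, compile, execute, print, here is a minimal sketch in Python. It relies only on the built-in compile(), eval() and exec(); the function name compiled_repl_step is made up for this example. Note the assumption: Python compiles to bytecode rather than native code, so this shows the shape of a compile-then-run REPL step, not compiled-language speed.

```python
def compiled_repl_step(line, env):
    """Compile one line, run the resulting code object, return its value.

    Hypothetical helper for illustration. Expressions return their value;
    statements (such as assignments) return None.
    """
    try:
        # First try to compile the line as an expression.
        code_obj = compile(line, "<repl>", "eval")
    except SyntaxError:
        # Not an expression: compile it as a statement and execute it.
        code_obj = compile(line, "<repl>", "exec")
        exec(code_obj, env)
        return None
    # The line was compiled before anything ran; now execute the result.
    return eval(code_obj, env)


if __name__ == "__main__":
    env = {}
    for line in ["x = 6 * 7", "x + 1"]:
        result = compiled_repl_step(line, env)
        if result is not None:
            print(result)
```

Each line is fully compiled before it runs, and the environment dictionary carries state from one line to the next, which is all a shell needs.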

I’ll take your word for that, but to me that’s just laziness, which is a result of having more and faster cores than you usually need. Other modular compilers split the task into two jobs, with a front end translating source code into an intermediate language, usually stack-based to make it scale better, and optimized to eliminate things like results that are never used, and a back end to translate that intermediate language (aka “virtual machine”) into optimized object code for the target architecture. Of course it’s up to the implementer, whether the intermediate language gets translated at compile time, or interpreted at runtime, so yeah, you could easily be running interpreted C without knowing it.
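The front end / intermediate language / back end split described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the "source language" is a tiny subset of Python assignments parsed with the standard ast module, the stack-based instruction names (push, load, add, sub, mul) are invented for this example, and the back end shown is the "interpret at runtime" option rather than a native code generator.

```python
import ast
import operator

# Hypothetical IR opcode names, chosen for this sketch only.
OPS = {ast.Add: "add", ast.Sub: "sub", ast.Mult: "mul"}
RUN = {"add": operator.add, "sub": operator.sub, "mul": operator.mul}


def front_end(source):
    """Translate 'name = expr' lines into (target, stack_code) pairs."""
    def emit(node, code):
        if isinstance(node, ast.Constant):
            code.append(("push", node.value))
        elif isinstance(node, ast.Name):
            code.append(("load", node.id))
        elif isinstance(node, ast.BinOp) and type(node.op) in OPS:
            emit(node.left, code)    # operands first, operator last:
            emit(node.right, code)   # this ordering is what makes it stack code
            code.append((OPS[type(node.op)], None))
        else:
            raise ValueError("unsupported construct")

    chunks = []
    for stmt in ast.parse(source).body:
        if not (isinstance(stmt, ast.Assign) and len(stmt.targets) == 1
                and isinstance(stmt.targets[0], ast.Name)):
            raise ValueError("only simple assignments are supported")
        code = []
        emit(stmt.value, code)
        chunks.append((stmt.targets[0].id, code))
    return chunks


def eliminate_unused(chunks, wanted):
    """Drop assignments whose results never reach a wanted name."""
    live = set(wanted)
    kept = []
    for target, code in reversed(chunks):
        if target in live:
            live.discard(target)
            live.update(arg for op, arg in code if op == "load")
            kept.append((target, code))
    kept.reverse()
    return kept


def interpret(chunks):
    """Back end, runtime-interpreted flavour: run the stack code directly."""
    env = {}
    for target, code in chunks:
        stack = []
        for op, arg in code:
            if op == "push":
                stack.append(arg)
            elif op == "load":
                stack.append(env[arg])
            else:
                right = stack.pop()
                left = stack.pop()
                stack.append(RUN[op](left, right))
        env[target] = stack.pop()
    return env


if __name__ == "__main__":
    program = "a = 2 + 3\nunused = a * 100\nb = a * 4\n"
    ir = eliminate_unused(front_end(program), {"b"})
    print(interpret(ir))
```

Swapping interpret() for a function that emits machine instructions from the same pairs would give the "translated at compile time" variant; the front end and the optimisation pass would not need to change.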

I’ve been using uPyCraft https://github.com/DFRobot/uPyCraft for this, and while it’s not beautiful, and it has its quirks, it is a lot better than loading manually every time you try a new version of your code. It also facilitates erasing and burning firmware, watching the console, etc. No affiliation, just a happy user :)

Some Forth/ARM tools helped bring up a GAO-approved XScale ARM secure mobile phone back in the day, with the QUIT loop affording more-than-OK interactive hardware access. The cool thing was that the kernel words arrived in C, so that the existing cross-development tools from the hardware (i Inside) and OS (Redmond licensee) vendors made the Forth environment accessible in both bare-metal and OS bootstrap environments, and Forth was easier than shifting between two different assemblers, JTAG debuggers, etc. As the Forth tools contributor Firmworks (creators of Open Firmware) observed, the hardest part of using Forth was explaining why and getting approval. Hardware folks didn’t really care, as long as it helped get the system running at relatively low cost in money and time. Since then (and even before), Forth Inc, MPE, and Mecrisp have made Forth relatively accessible for a wide range of MCUs, including RISC-V. Explanations and approvals probably remain the same.

Elliot wrote:

“No matter how quickly your write-compile-flash cycle has gotten on the microcontroller of your choice, it’s still less fun than writing blink_led() and having it do so right then and there. Why don’t we have that experience yet?”

blink_led() contains so little information.

Some obvious questions…….

What pin is the LED connected to?

How many times do you want it to flash?

What is the on time?

Is it different to the off time?

Do you want to blink a LED, generate an audio tone or a multi-MHz clock frequency?

SIMPL is an interactive shell that provides all of this and much more.

13d10{h100ml200m} 17 characters plus newline

Pin 13 LED, 10 flashes, HIGH for 100 ms, LOW for 200 ms

8d1000{h500ul500u}

Pin 8, 1000 cycles, HIGH for 500 µs, LOW for 500 µs – a 1 second duration of 1 kHz tone

I am currently putting SIMPL up on Hackaday.IO as a project

https://hackaday.io/project/176794-simpl

Interesting project. Having read through your hackaday.io notes, I have a couple of questions:

– Can I write a multi-line program in SIMPL?

– If I specify a ‘loop count’ of zero (ie: 0{stuff} ), does it do no loops, or loop infinitely?

I could see SIMPL being used as a very cut-down BASIC or an easy-to-implement/small-interpreter-size forth.

0{anything here}

“anything here” can be a comment, as the code in the brackets is iterated zero times, not parsed, thus ignored

Fred,

SIMPL has a similar structure to Forth. Rather than “lines of code” you create new functional blocks by combining primitive functions together and assigning them to a new user definition.

For example, the LED flashing routine 13d10{h100ml200m}

You can choose to allocate this code snippet to the user definition F, for “Flash”

:F13d10{h100ml200m}

Every time the interpreter reads F it will execute that block of code. Whilst this is a trivial example, you have almost all of the uppercase and lowercase alpha characters to choose from for your user definitions.

However, SIMPL was never intended to be used for creating large applications. It’s a quick and easy hack primarily intended for the initial exercising of microcontrollers.

Regarding the empty loop 0{Flash LED} F: the text inside the loop will be skipped, and the interpreter will proceed to execute the F definition.

One trick is to replace the 0{ idiom with the \ character. Any comment following the \ character will not be executed, provided that the comment is at the end of the line and terminates in a newline character.

Further updates will be on my Hackaday.IO project page:

https://hackaday.io/project/176794-simpl
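For readers who want to see how the SIMPL examples in this thread decode, here is a rough Python sketch of an interpreter for just the commands mentioned above: a number followed by d (select pin), h and l (drive high/low), m and u (delay in ms/µs), n{...} loops, :X definitions and \ comments. It is not the SIMPL source and makes assumptions throughout: the function names (new_state, find_close, run, total_delay_us) are invented, and instead of touching hardware it records a trace of events with delays in microseconds.

```python
def new_state():
    """Fresh interpreter state: selected pin, user definitions, event trace."""
    return {"pin": None, "defs": {}, "trace": []}


def find_close(src, open_idx):
    """Return the index of the '}' matching the '{' at open_idx."""
    depth = 0
    for i in range(open_idx, len(src)):
        if src[i] == "{":
            depth += 1
        elif src[i] == "}":
            depth -= 1
            if depth == 0:
                return i
    raise ValueError("unbalanced braces")


def run(src, state):
    """Execute one line of SIMPL-like text, appending events to the trace."""
    i, num = 0, 0
    trace = state["trace"]
    while i < len(src):
        ch = src[i]
        if ch.isdigit():
            j = i
            while j < len(src) and src[j].isdigit():
                j += 1
            num = int(src[i:j])          # most recent number typed
            i = j
        elif ch == "\\":
            break                        # comment: ignore the rest of the line
        elif ch == ":" and i + 1 < len(src):
            # ":F..." stores the rest of the line under the letter F
            state["defs"][src[i + 1]] = src[i + 2:]
            break
        elif ch == "{":
            end = find_close(src, i)
            for _ in range(num):         # zero iterations: body is never parsed
                run(src[i + 1:end], state)
            i = end + 1
        else:
            if ch == "d":
                state["pin"] = num
            elif ch == "h":
                trace.append(("high", state["pin"]))
            elif ch == "l":
                trace.append(("low", state["pin"]))
            elif ch == "m":
                trace.append(("delay_us", num * 1000))
            elif ch == "u":
                trace.append(("delay_us", num))
            elif ch in state["defs"]:
                run(state["defs"][ch], state)
            i += 1                       # anything else (e.g. spaces) is skipped
    return state


def total_delay_us(state):
    """Sum of all recorded delays, in microseconds."""
    return sum(v for action, v in state["trace"] if action == "delay_us")


if __name__ == "__main__":
    tone = run("8d1000{h500ul500u}", new_state())
    print(total_delay_us(tone), "us")
```

Running the 1 kHz example through this sketch gives a total of 1,000,000 µs of delay, matching the "1 second" figure quoted above, and feeding it :F13d10{h100ml200m} followed by 0{Flash LED} F skips the braces and then replays the stored definition.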

https://microide.com/

Is this what you’re looking for? Looks like it hasn’t been updated since July 6, 2020.