For those who have never played the hit video games Undertale and Deltarune, the games are known in part for their interesting characters, many of whom have eerie, surreal, and expressive designs. One of the more memorable characters from Deltarune is Tenna, a game show host of sorts whose distinguishing features are an old television for a head and a colorful suit. As a result, he’s been the subject of a number of recreations by various cosplayers and makers like [BigRig Creates].

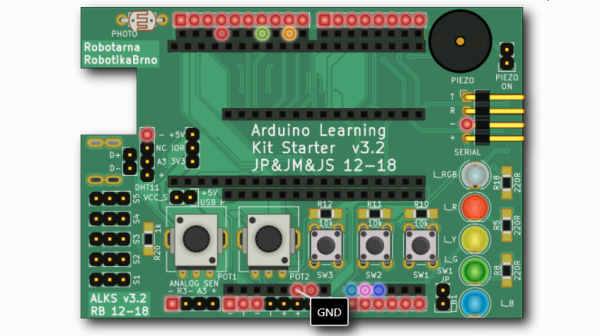

This version of the character was actually inspired by a previous build by [BunnyBii] which used an iPad as the interactive screen/face. The human inside the television, though, found that design to be front-heavy and limiting in how it could be used interactively, especially since the only way to see the outside world was with a small endoscope and screen. [BigRig Creates]’s version builds on this idea but swaps out the iPad for a Raspberry Pi, allowing for much more customization, and uses a pair of Xreal glasses instead of a screen for the view of the outside world from inside the television.

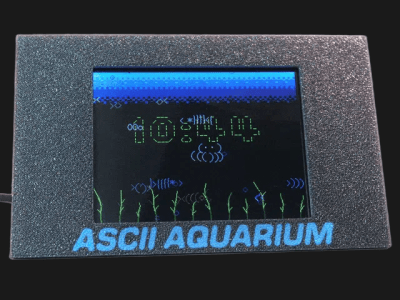

To get the whole costume together, the head is 3D printed with all of the electronics inside, and a game controller integrated into a handheld microphone controls the animations shown on the screen. A vibrant, custom-tailored suit with white gloves rounds out the ensemble, along with a pair of 3D-printed shoe covers since actual yellow shoes were a bit pricey. There were some interesting problems to solve along the way, specifically with regard to power management for all the electronics, but in the end it all seems to have come together quite well. [BigRig Creates] is no stranger to builds with unusual displays, though; one of our favorites was the world’s largest Nintendo 3DS.