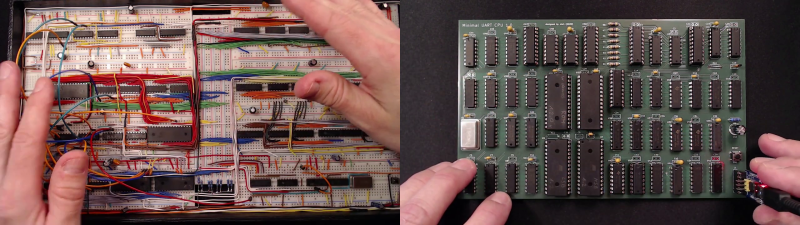

[Carsten] spent over a year developing a small CPU system, implementing his own minimalist instruction set entirely in TTL logic. The system uses a serial terminal interface for all I/O, hence the term UART in the title. [Carsten] began building this computer on multiple breadboards, which quickly got out of hand.

He moved the design over to a PCB, but he was still restless. This latest revision replaces the EEPROM with cheaper and easier-to-use CMOS Flash chips, and the OS gains a small file system manager. As he says in the video, his enemy is feature creep.

In addition to designing this CPU project, [Carsten] built an assembler and wrote a substantial operating system and various demo programs and games. He not only learned KiCAD to make this board, but also taught himself to use an auto-router. The KiCAD design, Gerbers, and BOM are all provided in his repository above. ROM images and source code are provided, as well as a Windows cross-assembler. But wait – there’s more. He also wrote a cycle-exact emulator of the CPU, which, as he rightfully brags, comes in at under 250 lines of C++ code. This whole project is an amazing undertaking and represents a lot of good work. We hope he will eventually release the assembler project as well, in case others want to take on the challenge of building it to run under Linux or macOS. That gap aside, the documentation of the Minimal UART Computer is excellent.

[Carsten] claims the project has finally passed the finish line of his design requirements, but we wonder, will he really stop here? Do check out his YouTube channel for further informative videos. And thanks to [Bruce] for sending in the tip.

Sweeeeeet!

Impressive, nice!

I have for a while been thinking of designing and building a TTL-based computer, but the desire to build something more “useful” tends to sway the design into the territory of being too immense to build in practice (or at least within a reasonable budget).

Somehow, it is easier to design a larger, more feature-rich architecture than it is to build something that doesn’t use hundreds of logic chips.

Though, the design showcased here has 47 chips, a fair bit of logic. A fair bit more than some other projects I have seen before.

Iirc there were something like 110 MSI parts on the Apple 2+ motherboard, and that had a ‘VLSI’ chip for a CPU. That did not include serial or disk i/o, just video, DRAM control, and the keyboard interface.

If this project does what it seems to with a mere 47 parts *without* an integrated microprocessor, that would be truly impressive.

Well, the Gigatron project has 38 logic chips including its memory.

So 47 chips is actually not all that few.

And even the Gigatron is still on the “chip heavy” side as far as TTL computers go.

I have seen some designs before with 10-15 chips, but these tend to push most things into memory instead of implementing functionality in hardware.

Building TTL computers is a bit like code golf. Get something that works with as few parts as possible.

Half of that was ROM and RAM, you replace that with single chip flash and modern SRAM and you’re down to half. Get rid of all the address decoding and buffering for the expansion slots, because we wanna compare apples to apples right? and we’re probably down to 15 or less.

Idk, 48K of 4116s is only 24 parts, and idk how many ROMs but would be surprised if it were north of 8. The temptation to discount the DRAM address mux overlooks the fact that the video address generation *was* the DRAM refresh. The more I think about it though, the more I wonder why the heck all those parts were needed. Possibly at the time there were only those 6 bit parts (like the TRS80 mod 1 was stuffed with), or the 8 bit ones cost too much. But I guess it’s time to dig up that schematic..

When the Apple II was designed, not much choice. Dynamic RAM was denser than static, but not by much. If 64Kbit DRAM was available, it was really expensive. ROM was limited to 2K, but at least that was bytes. If there were video controller ICs, there wasn’t much.

It was a big thing when 64K x 1 DRAM appeared, making things like the Commodore 64 viable. Or an improved Apple II with fewer ICs. At some point there was 2K by 8 static RAM in 24 pins like ROM, and that was a big breakthrough, though by then DRAM was mostly what got used.

The Apple II maybe could have been simpler, but if so, it would have been more expensive. TTL was cheap.

Nice project, but…

“UART interface (115.2kbps) for terminal display, keyboard input and file I/O”

Why?!? No old-school terminal out there can go beyond 33.6 and you should stick to 19.2 for proper operation. If I were to use this, I would want to be taken back to 1979, even if that is before I was born, to experience computing from back then, not sit in front of my big Ryzen running PuTTY to connect to such a board. The RC2014 is guilty of the same crime… ;.;
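To put the two rates in that comment side by side, here is a rough back-of-the-envelope sketch. It assumes 8N1 framing (one start bit, eight data bits, one stop bit), which the project description quoted above does not state, and the helper names are made up for illustration.

```cpp
#include <cstdint>

// Bits on the wire per byte for 8N1 framing (an assumption, not taken
// from the project): 1 start bit + 8 data bits + 1 stop bit.
constexpr std::uint64_t kBitsPerFrame = 10;

// Payload bytes per second at a given baud rate.
constexpr std::uint64_t bytes_per_second(std::uint64_t baud) {
    return baud / kBitsPerFrame;
}

// Milliseconds needed to send `count` bytes at `baud`, rounded down.
constexpr std::uint64_t millis_to_send(std::uint64_t count, std::uint64_t baud) {
    return count * 1000u * kBitsPerFrame / baud;
}
```

Under that assumption, repainting a full 80x24 screen (1920 characters) takes about a second at 19.2 kbps and roughly a sixth of a second at 115.2 kbps.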

Get a copy of Don Lancaster’s “TV Typewriter Cookbook” and build your own from scratch.

https://upload.wikimedia.org/wikipedia/en/e/ec/TV_Typewriter_Cookbook.jpg

Assuming you can find an X-ray display that will accept NTSC or RS-170. Because it has to be a CRT, if you want that authentic 1979 experience. I haven’t even seen a CRT monitor/TV on the curb in 10 years.

Check Craigslist

B^)

Don kindly makes his older books available on his Guru’s Lair website… https://www.tinaja.com/

Well, that’s certainly kind of Don. I didn’t appreciate how many books he wrote. And indeed the TV Typewriter Cookbook is among those he is making available.

https://www.tinaja.com/ebooks/tvtcb.pdf

Radio Shack even sold it, just a different cover.

But the book came out in 1975, complete with excerpts in the early issues of Byte, so it really is a snapshot of what could be done before microprocessors. The original TV Typewriter was more for putting characters on a tv set, no means of computer control. There were additions, but still primitive. And the book teaches a lot, but not really a construction article.

His Cheap Video Cookbook is again mostly ideas, and about the technique rather than implementation. Son of Cheap Video is more concrete, as I recall. But you’d have to write software.

It’s been suggested that his TV Typewriter was a launching pad, something almost within reach, and building one primed things for microcomputers.

I’m on the flip side of this comment. I want to see retro technology pushed far past what it should be capable of doing. :) Or mixed with newer technology in ways that weren’t possible when it was state of the art.

I like how you think. True hacker spirit maxes out things past and present.

I’m in the same boat. I’m a huge fan of “old technology, but its flaws fixed and gaining some new benefits from new processes”. Like what would happen if we had stuck with 1980s technology and only made incremental improvements in the form of things like die-shrinks, improved materials, etc. In my opinion, we could have much better computers if we spent the 1990s and later decades just fixing flaws and making minor changes like die shrinks and improvements like implementing flash and related memory locations.

It still boggles my mind how so many of my daily tasks are still easily done on my beloved old Sun 3/80. I do my email, my word processing, some web browsing, and all my system administration on it. I’m even in the early phases of building a “revisited” version that basically implements the system, but with some tweaks to take advantage of modern improvements. Like:

*Shrinking the CPU process to reduce power needs and bump up its speed

*Moving the FPU onto the die and bumping up its clock to match the CPU

*Adding some accelerator engines for crypto and image processing

*Using some very high-speed SRAM so that requested memory appears on the bus on the next cycle (If the CPU is run at 250 MHz)

*Using modern flash memory for permanent storage where reads and writes only take like 10 cycles to complete

*Adding in a USB controller.

*Adding a 100 Mbps network interface (Maybe even add a TCP / UDP offload engine to the chip)

*Dropping in a micro-controller to handle miscellaneous tasks like setting some fuses on the CPU, acting as a watchdog, handling power management, blinking some status LEDs, etc.

I have most of the chip implemented and running on an off-the-shelf FPGA dev kit. So far my progress has been getting the CPU to start pulling instructions from flash and running them, and it’s been able to calculate an accurate CRC from the first 2k of flash. There are still some bugs in some of the more complex instructions.

You can throw one of these together…

http://searle.x10host.com/MonitorKeyboard/index.html

(Main site is searle dot wales, in case he shuffles hosting again.)

For those who are also wondering: “Which CPU?”

Neither 6502 nor Z80, it is implemented in various TTL ICs.

I wondered that too.

Thanks

It’s a gate implementation of the 74181. AND, OR, XOR, ADD, SUB
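For anyone wondering what those five operations amount to, here is a minimal software sketch. It is not [Carsten]’s design: the 8-bit width, the carry convention, and all the names are assumptions made for illustration, and the real thing is built from gates, not C++.

```cpp
#include <cstdint>

// Hypothetical operation set, taken from the list in the comment above.
enum class Op { And, Or, Xor, Add, Sub };

struct AluResult {
    std::uint8_t value;  // low 8 bits of the result
    bool carry;          // carry out of bit 7 (for Sub: true means no borrow)
};

// 8-bit ALU sketch. Sub is computed as a + ~b + 1, the usual
// two's-complement trick that lets one adder serve both operations.
AluResult alu(Op op, std::uint8_t a, std::uint8_t b) {
    unsigned wide = 0;
    switch (op) {
        case Op::And: wide = a & b; break;
        case Op::Or:  wide = a | b; break;
        case Op::Xor: wide = a ^ b; break;
        case Op::Add: wide = unsigned(a) + unsigned(b); break;
        case Op::Sub: wide = unsigned(a) + (unsigned(b) ^ 0xFFu) + 1u; break;
    }
    return { static_cast<std::uint8_t>(wide & 0xFFu), (wide & 0x100u) != 0 };
}
```

In this sketch `alu(Op::Add, 200, 100)` wraps around to 44 with the carry set, and `alu(Op::Sub, 3, 5)` gives 254 with the carry clear, signalling a borrow.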

Life is too short… the 8085 was created decades ago, and OKI made a CMOS version… depending on the clock, it can easily do 4800 bps on a lowly 4 MHz through the SIO port.

Very nice. I recently finished the Ben Eater 8 Bit Computer and was wondering what to do next. I wondered if I should step up one layer of abstraction and do maybe a 6502 project or something, or if I should jump all the way up and do a high level project for a change. Now, this project seems like a natural next step from the 8 bit computer. Might very well do this next.

The more current version of the emulator is at

https://github.com/slu4coder/Minimal-UART-CPU-System