When you want to read what is being said on a television program, movie, or video, you turn on the captions. Looking under the hood to see how this text is delivered reveals a fascinating story that started with a technology called Closed Captions and extended into another called Subtitles (which is arguably the older technology).

I covered the difference between the two, and their backstory, in my previous article on the analog era of closed captions. Today I want to jump into another fascinating chapter of the story: what happened to closed captions as the digital age took over? From peculiar implementations on disc media to esoteric decoding hardware and a baffling quirk of HDMI, it’s a fantastic story.

There were some great questions in the comments section last time, and hopefully I have answered most of them here. Let’s start with some of the off-label uses of closed captioning and Vertical Blanking Interval (VBI) data.

Unintended Uses of VBI Data

While I was immersed in the world of VBI data and closed captions for several years, I kept discovering applications that were not related to the intended use.

Newsroom channel monitors

Back in the day, I discovered a small company called SoftTouch, Inc., “innovators in the obsolete” according to owner Doug Byrd. They made various niche market CC products, and in fact, I still have several of his products today. Most prized are two CCEPlus line 21 generator cards, the only full-length ISA cards I’ve ever owned, which have given me an excuse to keep a fully working Gateway 2000 486DX2-66V in my lab for over 15 years.

I enjoyed my phone calls with Doug over the years. He is quite a character and a great storyteller, and I learned a lot about the captioning industry from him. While his niche products were used in all kinds of systems, one that sticks in my mind was in newsrooms. Imagine a system designed to monitor a wall of televisions (or just tuners) equipped with CC decoders, feeding the RS-232 text to a computer which is programmed to look for various keywords and alert the staff when one is found. In fact, we wrote about a similar project on Hackaday about ten years ago.
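To make the idea concrete, here is a minimal sketch of the keyword-watching part in Python. It is not how any real newsroom system was written: the function name, the sample caption lines, and the keyword list are all made up for illustration, and an in-memory text stream stands in for the decoder's RS-232 output.

```python
import io
import re
from typing import Iterable, Iterator, Tuple


def watch_for_keywords(lines: Iterable[str],
                       keywords: Iterable[str]) -> Iterator[Tuple[str, str]]:
    """Yield (keyword, line) for every caption line that mentions a keyword."""
    # Captions are usually upper case, so match case-insensitively,
    # and use word boundaries so "fire" does not match "fired".
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(k) for k in keywords) + r")\b",
        re.IGNORECASE,
    )
    for line in lines:
        for match in pattern.finditer(line):
            yield match.group(1).lower(), line.strip()


# Hypothetical stand-in for the text arriving from one CC decoder.
decoder_output = io.StringIO(
    "GOOD EVENING, I'M YOUR ANCHOR.\n"
    "A TORNADO WARNING HAS BEEN ISSUED.\n"
    "IN SPORTS TONIGHT...\n"
)

alerts = list(watch_for_keywords(decoder_output, ["tornado", "earthquake"]))
for keyword, line in alerts:
    print(f"ALERT [{keyword}]: {line}")
```

In a real installation each tuner would have its own stream, and the loop would run continuously rather than over a fixed block of text.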

Real-time translating into Spanish

One surprising application I stumbled upon was a small set top box designed to translate the English dialogue for Spanish speakers who liked to watch US daytime soap operas. This was an interesting project on two fronts. Researchers Fred Popowich and Paul McFetridge at Simon Fraser University developed a “shake and bake” machine translation algorithm which could be implemented in hardware of the day. It was about 80% accurate, but they discovered something interesting. Eighty percent worked just fine for people who only spoke Spanish, but bilingual speakers were annoyed by the mistakes.

Dictionary lookups from DVD captioning

I was discussing closed captioning and DVD subtitles with some Korean engineer friends one night over soju, and learned there was a Korean company who made a specialized DVD player. What made this player special was it had a built-in OCR engine to read the subtitles (not the captions) and offer translations and definitions to the user — it was intended as a language educational product. It did not do real-time OCR translations, instead doing the OCR when the user pressed PAUSE in order to query the machine for help.

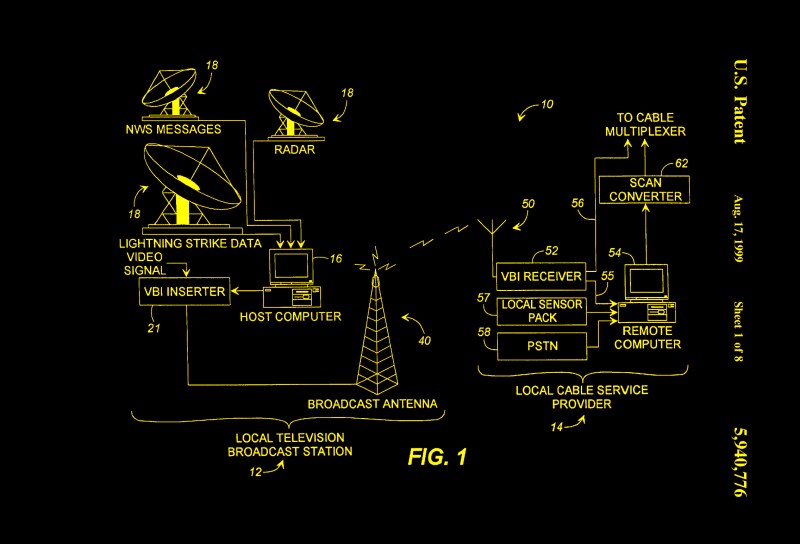

Weather radar data distribution

While teletext was primarily used in Europe, the WST standard included a variation for NTSC countries — The North American Broadcast Teletext Specification (NABTS) known also as EIA-516. Both CBS and NBC experimented with teletext service, but it wasn’t popular. However, the data broadcasting ability did find some traction in other ways. I discovered one surprising application while learning about VBI signals back in the early 2000s. I was talking to an engineer at a local weather radar company in the US, and found out they were broadcasting weather data and radar images over the VBI for the benefit of various emergency preparedness groups. He even lent me an NABTS receiver which I installed in my PC and could monitor the real time data from my desk. To my surprise, he told me that television stations were renting out the VBI lines. In crowded markets like New York City, for example, there might not even be any empty lines available.

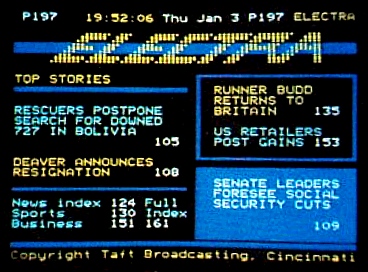

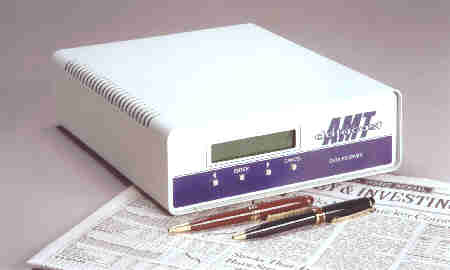

Financial Data

It wasn’t just the weather radar data folks using the VBI, either. A number of datacasting services sprang up which used the VBI to distribute financial information such as stock and commodity prices. Several networks were in operation, some since the late 1970s. They included DTN Real Time, Electra, and Tempo Text. By the mid 1990s, all these services had shut down, the internet having taken over the role of time-sensitive datacaster.

Extended Data Services

The closed caption standard was eventually amended to include what’s called extended data services, or XDS. This auxiliary data was carried in the field 2 VBI and included information like time of day, V-chip rating, station ID, and basic programming information. Some early electronic program guides (EPGs) like Guide Plus were sent over the VBI. There was also an emergency alerts portion of the XDS, which could announce all kinds of weather and other emergencies, down to the state and county region, and for a specified duration.

Making a Line 21 Decoder Today

As I mentioned in my article on analog closed captions, making an analog closed caption decoder in the 21st century is best done without relying on specialized all-in-one ICs. There are three functions required in such a receiver:

- Syncing to the video signal

- Slicing / thresholding the data

- Processing the protocol
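
As a small taste of the third step, here is a sketch of the very first thing the protocol processing has to do. Each line 21 character is seven data bits plus an odd parity bit, so every received byte can be checked before it is used. The function names are my own for this illustration, and a real decoder does a great deal more after this point.

```python
from typing import Optional, Tuple


def has_odd_parity(byte: int) -> bool:
    """True if the 8-bit value contains an odd number of 1 bits."""
    return bin(byte & 0xFF).count("1") % 2 == 1


def add_parity(char7: int) -> int:
    """Set bit 7 when needed so the transmitted byte has odd parity."""
    return char7 | 0x80 if not has_odd_parity(char7) else char7


def strip_parity(pair: Tuple[int, int]) -> Tuple[Optional[int], Optional[int]]:
    """Return the two 7-bit characters, or None for a byte that fails parity."""
    return tuple((b & 0x7F) if has_odd_parity(b) else None for b in pair)


# 'A' (0x41) has two 1 bits, so it is sent as 0xC1; 'C' (0x43) already has three.
received = (add_parity(0x41), add_parity(0x43))
print([hex(b) for b in received])
print(strip_parity(received))
```

What to do with a byte that fails parity is a design choice; returning `None` here simply leaves that decision to the caller.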

Surprisingly, syncing to the incoming video was a real challenge because of the Macrovision copy protection scheme. Wild pulses are inserted in the VBI, ostensibly to prevent making a tape recording (these pulses mess up the VCR’s AGC circuitry). Of course, people who wanted to make copies just built or bought a Macrovision suppression box. But if you want to reliably sync to Macrovision-laden video, it’s not simple.

I considered designing my own sync separator, but there are a lot of special cases. There were also some legal considerations at play, but I was in touch with the Macrovision engineers and wasn’t too worried about that. In the end, I went with a sync separator that was Macrovision tolerant, and had been designed and tested to work in a much wider variety of Macrovision scenarios than I could hope to reproduce in my testing.

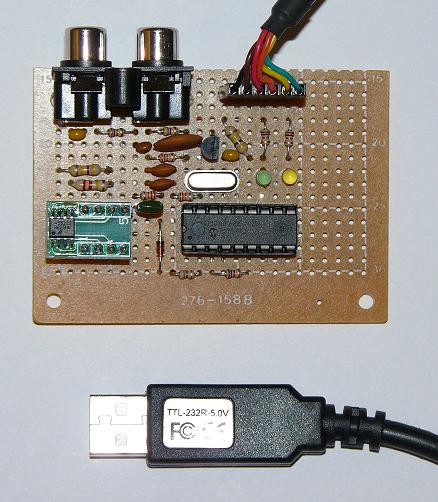

There are various ways to slice the line 21 data. I successfully built several boards based upon a clever open source project. Richard Ottosen and Eric Smith published a nice design using a Microchip PIC16F628, which was subsequently expanded on by Kevin Timmerman. These designs make good use of the PIC’s internal comparators: one as a peak detector and the other for data thresholding. Check these out if you are interested in making your own decoder.
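
For readers who think better in software, here is a rough Python model of the peak-detect-and-threshold idea. It is only a sketch and not the PIC firmware: it assumes the line has already been sampled, that the samples start exactly at the first data bit, that there is a whole number of samples per bit, and that slicing halfway between the lowest and highest sample is good enough. The voltage levels in the demo are invented.

```python
from typing import List, Sequence


def slice_line(samples: Sequence[float], samples_per_bit: int,
               n_bits: int = 16) -> List[int]:
    """Threshold the sampled line at mid-level and read each bit at its centre."""
    peak = max(samples)              # software stand-in for the peak detector
    floor = min(samples)
    threshold = (peak + floor) / 2   # slice halfway between the two levels
    bits = []
    for i in range(n_bits):
        centre = i * samples_per_bit + samples_per_bit // 2
        bits.append(1 if samples[centre] > threshold else 0)
    return bits


def bits_to_bytes(bits: Sequence[int]) -> List[int]:
    """Pack bits into bytes; line 21 sends each character LSB first."""
    out = []
    for start in range(0, len(bits), 8):
        value = 0
        for position, bit in enumerate(bits[start:start + 8]):
            value |= bit << position
        out.append(value)
    return out


def synth(byte_pair, samples_per_bit, low=0.0, high=0.5):
    """Build an idealised, noise-free test waveform (made-up levels)."""
    samples = []
    for byte in byte_pair:
        for position in range(8):
            level = high if (byte >> position) & 1 else low
            samples.extend([level] * samples_per_bit)
    return samples


recovered = bits_to_bytes(slice_line(synth((0xC1, 0x43), 4), 4))
print([hex(b) for b in recovered])
```

A real slicer would take its peak from the clock run-in burst and find the start bits itself; both are skipped here to keep the sketch short.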

Processing the data once you get it from the decoder can be hairy, depending on how accurate you want your design to be. Reader [unwiredben] commented on the previous article how fun it was to recently write a ground-up implementation. I wholeheartedly agree. One issue is that the requirements are spread out among various documents. Some of them were almost unobtainable back in the year 2000, and yet they make up the official, legal requirements expressed in the FCC regulations.

I particularly remember having a very tough time getting a required report from PBS and another from the National Captioning Institute. It wasn’t because they weren’t cooperative, but because the reports were so old they couldn’t find them. (I eventually got the reports, and also a real Telecaption II caption decoder on which many of the final specifications were based.)

One incident I recall is trying to buy a set of CC verification tapes. Supposedly they were available from WGBH Boston, but when I called it seemed that nobody had asked for them in years, and they weren’t sure any existed anymore. They eventually found one remaining set and shipped it to me here in Korea, where it almost got destroyed in customs due to some obscure law prohibiting the import of prerecorded media. One point to consider — if you simply want to extract text for analysis, the processing will be a lot easier than if you also want to properly display and position the text on-screen.
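
To illustrate how little is needed for the text-only case, here is a deliberately naive sketch. It assumes the byte pairs come from field 1 with parity already stripped, treats any pair whose first byte is in the 0x10 to 0x1F range as a control code to be skipped, and maps everything else straight to ASCII. That last step is an approximation, since a handful of line 21 character codes differ from ASCII. The function name and sample data are invented for the example.

```python
from typing import Iterable, Tuple


def extract_text(pairs: Iterable[Tuple[int, int]]) -> str:
    """Collect printable characters from byte pairs, ignoring control codes."""
    chars = []
    for first, second in pairs:
        if 0x10 <= first <= 0x1F:
            # Control code (positioning, caption mode, and so on): skip both
            # bytes, but leave a single space so separate captions do not
            # run together.
            if chars and chars[-1] != " ":
                chars.append(" ")
            continue
        for byte in (first, second):
            if 0x20 <= byte <= 0x7E:      # 0x00 padding is dropped here
                chars.append(chr(byte))
    return "".join(chars).strip()


# Two short captions, each control code sent twice as is common practice.
sample_pairs = [
    (0x14, 0x20), (0x14, 0x20),           # Resume Caption Loading
    (0x48, 0x45), (0x4C, 0x4C), (0x4F, 0x00),
    (0x14, 0x2F), (0x14, 0x2F),           # End Of Caption
    (0x42, 0x59), (0x45, 0x00),
]
text = extract_text(sample_pairs)
print(text)
```

Everything this sketch throws away (row and column positioning, roll-up versus pop-on modes, colors, special characters) is exactly the part that makes a display-accurate decoder so much more work.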

It is possible to build a digital CEA-708 (see below) decoder. If this is something you’re interested in, I’ve put a couple of links in the comments section below.

The Making of Captions

The process of making the caption text is too complicated to cover here. In brief, the dialogue has to be transcribed into digital format. In the case of real-time captions like for news or sporting events, techniques, skills, and equipment have been brought over from the world of court reporters. In the case of pre-recorded programs, the process can be aided by scripts. But they still have to be checked against what was really said by the actors. Next, any additional cues are added, and then the dialogue has to be broken up into chunks which need to be correctly positioned on-screen and timed with the audio track.

If you’re interested in learning more about this, check out Gary Robson’s website. Not only does he discuss the process of making captions, but Gary has been in the caption industry for a long time and has written several excellent books on the subject. I’ve read all of them and sought his advice on a few occasions. He is a very nice and knowledgeable guy.

Captions and Digital Video

So far the focus has been on analog closed captioning which was used for over-the-air broadcasting, and more or less for cable television broadcasts as well. But what about other ways we view programs? In the case of VHS tapes, it was fortunate, if not anticipated by the designers, that line 21 signals could easily be recorded and played back by videotape equipment. Then along comes digital video in formats like Laser Disc, DVDs, Blu-ray Discs, and streaming, and the world of captioning falls apart, for a while at least.

Transition to Digital

First we have the Laser Disc. They stored and played back captions in both NTSC and PAL formats. No big issues here, but just wait. Next comes the Digital Versatile Disc (DVD), and things begin to get murky for closed captioning — a situation that persists more or less to this day with DVD and Blu-ray Discs (BD). The DVD specification calls out a user data packet specifically to store the digital pairs of caption data (as digital data, not encoded in a video line). Although I’m not aware of any technical reason why a PAL DVD couldn’t do the same, only Region 1 (North America) NTSC discs can store the caption data and still be compliant with the specifications.

Most DVD players use this data to generate an analog line 21 signal on the video output signal(s), which can then be decoded by your TV set’s internal CC decoder under viewer control.

- Composite video output

- S-Video output:

- on the Y/Luma signal

- Component video output:

- on the Green/Sync signal if RGB

- on the Y-channel if Y/Pb/Pr

There are a very few players that can actually decode the caption data and overlay this onto the output video as open captions, but these players are the exception rather than the norm.

We Have a Problem…

You may see where this is leading: there is a hidden assumption with Line 21 closed captions. By definition, they exist only on interlaced standard definition video (480i in the case of NTSC). While there is no technical reason not to, there isn’t any agreed upon standard to send the data over the VBI for any other video timing. Nobody thought about it back in the 1970s.

This led to upset consumers who purchased HD televisions and DVD or BD players, only to find out that they couldn’t view the closed caption data unless they watched the program in 480i mode. But still, if you use closed captions, live within NTSC DVD Region 1, and are content to watch programming in 480i standard mode, everything is good? Not quite.

For some reason I still don’t understand, not all pre-recorded DVDs contained closed captions, even if captions existed for the movie and were available on VHS tape releases by the same studio. This seemed to be random with one exception — Universal Studio DVDs never had closed captions. Don’t worry, DVD technology offered a “new” solution to this problem — subtitles. Remember those from the early 1900s?

Because everything was now digital, DVDs and BDs could offer the old style hard-baked subtitles, but with a twist. The user could turn them on and off, and could often select from a wider variety of languages (CC was typically limited to two languages, if that, and those from a narrow choice of languages). The freedom to design subtitles was almost boundless — as they were just pictures with a transparent background, they could contain anything. It wasn’t uncommon to see both English and English CC subtitles on some discs.

Despite lots of sources claiming the contrary, Blu-ray Discs can and do carry line 21 captioning. I have used BDs and BD players for testing line 21 signals over the past ten years with no issues. But the catch is the same as with DVD: the captions are only generated on 480i standard definition analog outputs. And the dearth of captioned discs is even worse than with DVD: I would estimate that less than one third of BDs have captioning. I mentioned above that very few DVD players have internal CC decoders. I don’t think I’ve ever seen a BD player with one.

Digital Television

The industry working group responsible for closed caption standards realized something needed to be done to bring captioning into the digital era. The group developed a new version of closed captioning, addressing many of the concerns of the community of analog captioning users. Recall that the analog standard was EIA-608. The new standard is called CEA-708 (CEA was spun out of the EIA when it closed down in 2011). Some features that were added, aside from a format that was compatible with ATSC digital broadcasting, include:

- true multi-language support

- different font sizes

- different font styles

- can be repositioned on-screen

- legacy EIA-608 capability

As digital television broadcasts became the norm, TV sets were replaced with those capable of decoding the new 708 style captions. Your TV set probably has this ability today.

Baseband Digital Video and Captions

The quality of our TV and monitor displays increased rapidly. HD analog component signals were soon replaced by high speed, digital differential signalling. Various standards exist, but HDMI has become the de facto digital video interface for consumer video. Our television sets are receiving HD digital programming and are able to decode and display new style captions. All is right with the world. Well, not so fast.

One would surely expect a brand new standard such as HDMI, designed from the ground up to support all current and conceivable kinds of communications between consumer A/V devices, to handle the trivial bandwidth and format of the closed captioning signal. Well, you’d be disappointed — somebody did not get the memo. From the start, HDMI has not carried the closed captioning signal. One could be forgiven for thinking it was an intentional decision, as the standard has been updated numerous times and HDMI cables still can’t carry closed captioning.

The explanation from the industry was that the change to digital meant that caption decoding must now be performed in your set-top box. This might not have been too bad a choice if these boxes also provided an ATSC-modulated RF channel output with EIA-708 captions, akin to the Ch 2/3 outputs of old computers and VHS players. As it is, consumers who rely on closed captions now have two or more decoders to fool with: one in the TV’s HD digital receiver, one in a cable set-top box, and perhaps a third in their DVD/BD player.

FCC Saves the Day?

With the shift from prerecorded media to streaming, the situation really got out of hand. The FCC stepped in and solved the situation, kind of. You might think that with a well established, existing standard like EIA-708, something already being used in TV sets and mandated by the FCC for all broadcasters, the reasonable answer would be to require streaming services to use EIA-708 also. And maybe encourage the HDMI organization to carry closed captioning information as well. Alas, that was not the decision. Instead, the FCC ruled that streaming services can use any captioning technology standard they wish, as long as they can deliver captions.

I feel that this state of affairs is less than ideal. Looking back at all the neat and unintended uses of analog closed captions, I wonder how many novel innovations are we missing out on by this lack of a uniform captioning standard. Or rather, our intentional decision not to apply the existing captioning standard uniformly. That said, I don’t want my grumbling about technical details to distract us from the big picture here. The true goal of these regulations, providing captions to the deaf and hard of hearing community, is being applied across all methods of program delivery. That’s wonderful, indeed.

No, LaserDisc was not a digital format.

True, but it has lasers, that’s cool, gotta count for something?

Oops, I goofed up. Good catch, [steelman], indeed Laser discs are analog.

“As I mentioned in my article on analog closed captions, making an analog closed caption decoder in the 21st century is best done without relying on specialized all-in-one ICs.”

Dealt with a company in Europe that provided decoder chips along with other TV and video related chips. The specialized chips were handy not just for the multiple functions in one device, but because they had been tested to deal with all the details involved, especially when one was going for more than just the US market.

That’s a big benefit. Especially in Europe at the time with the more complicated teletext and the plethora of language options to deal with. The benefit of using a Philips Painter chip (or similar) was not just the hardware but the whole slew of pre-tested libraries that came along with it.

“I feel that this state of affairs is less than ideal. Looking back at all the neat and unintended uses of analog closed captions, I wonder how many novel innovations are we missing out on by this lack of a uniform captioning standard.”

True, although with more capable hardware dealing with that non-uniformity is more possible at the cost of more work on the engineering side.

Is the first paragraph under the “FCC saves the day?” header in a smaller font than the rest of the article or am I seeing things?

For some reason it was, but fixed now.

I saw that too when reviewing the text. But it disappeared after I zoomed in, so I convinced myself that my eyes were playing tricks on me.

Although i use CC , I don’t think whoever is placing the text on the screen ever actually watches it, because the placement of the text on the screen blocks out more vital images…. I’m sure there has to be codes as to where it starts on the screen

If you’re talking about captions on broadcast TV, they usually take great care to avoid covering up lower-third credits etc. Unfortunately, convention has it that only the top and bottom few rows are ever used, so at the beginning of many shows, when initial credits are running, the captions are placed on the top rows and obscure faces, etc.

does anyone out in TV land know…. are there humans typing in text, or is just some computer doing voice recognition ….I have to laugh when a complicated word or name comes over,,,, long delay

Again, if you’re talking about broadcast and premium TV (not streaming), all captioning is done by humans. It’s possible to use voice recognition (YouTube etc) but it’s not all that good.

There’s a big difference in live captioning vs post-captioning. Live captions can be, as you say, quite entertaining. Easy to spot because it’s always roll-up, always delayed a few seconds.

I wouldn’t be surprised if a lot of it is AI assisted nowadays – AI with a human to proofread it. Although then again, doing it fully manually isn’t that expensive if it’s outsourced to poor villages in India or whatever…

That’s probably worth an article in itself, but yes there are humans typing in the text, live. But they aren’t using QWERTY keyboards, they’re probably using steno keyboards. What is interesting is to watch when a particularly obscure (to the average person) word comes up, the text will hesitate, then that word will be skipped in some way.

Regarding news broadcasts, as previously discussed: In many systems the various reports or segments are prepared ahead of the broadcast and are in the teleprompter system. When the news anchor delivers the news, they’re just reading the teleprompter. Simultaneously, the same content is fed to the Closed Captioning encoder. Occasionally I’ve seen the news anchor flub a line or skip over something, yet the closed captioning was flawless. That’s because the source fed to the teleprompter and closed captioning system *was* flawless, only the anchor messed up the live delivery.

For some real-time “live” events, a skilled operator uses a chordic keyboard (like those used in courtrooms). The operator can rapidly enter the sounds of the speech using the chordic keyboard. The computer translates these into actual properly spelled words. It’s not perfect. I remember a broadcast where the reporter referred to Haifa (Israel); the computer did its best, but the result was “high far”. More often the computer software confuses homonyms (they’re, their, there).

In Australia, a company uses the following system. The operator listens to the show through headphones and verbally repeats it into a microphone.

The software then does the usual speech-to-text, but on the operator’s voice. Because the software has been trained on this operator’s voice, the accuracy is high.

You can see the process in action by the class of mistakes.

I have very difficult hearing issues – have to use closed captioning. Live sports shows don’t seem to care where the CC appears. Baseball game – can cover the batter. Soccer – can cover the action. Can the user relocate the CC on any TV set?

On some yes one can. Check at the store before leaving.

Thanks for that information! I’m not in the market now, but with this info I can shop intelligently when I am.

Thanks for the article(s). Nice to see a mention of Doug Byrd and SoftTouch. Good people. I worked with him and his boards when developing captioning software in the 90s.

Yeah, he’s still around and kicking. I chatted with him briefly in preparing this article.

The benefit of the analog captions was that the same data was sent over and over with each frame.

The new digital scheme is absolute horse balls, because the caption data is dumped in large packets over the transport stream, and the receiver then plays it back like a recording while it’s displaying the actual frames. Well, if you drop or corrupt the packet, or tune in to the channel before the next one is sent, you will have no captions for minutes. Many set-top boxes and even top end televisions simply fail at it. They’re really fussy about the data, which appears to have no redundancy whatsoever, and they mess up the timing so captions appear late, out of sync, and crammed together even when they are received. The number of errors is so great over terrestrial TV that you are guaranteed to lose the captions at least once over an hour-long program, even in good weather.

Thank you for explaining why this happens.

The main issue is with documentaries where you have multiple languages intermixed, so you may have no captions for 5 minutes, then someone says something in Japanese, and then again no captions for 15 minutes.

Well, if you didn’t catch the data packet when the Japanese guy first came on, you won’t have the subtitles 15 minutes later either because it’s all crammed into one packet. The broadcaster is also supposed to set up a flag that says “incoming subtitles”, but if you start recording or time shifting a channel before the flag comes on, your recorder won’t format the stream accordingly and will drop the subtitles.

As a result, the broadcasters which do use the standard method have to keep sending the flag all the time anyways, and fiddle with the data rate settings to force the packets more frequently. It still drops packets, and many channels simply burn the text into the picture now.

I have no idea why HDMI didn’t include closed captioning from the beginning but I suppose the future for CC will be something like YouTubes video translating system, seems to be the most logical.

The MPEG-2 transport stream used for ATSC broadcast in the US defines user data sections in the video which can hold both EIA-608 and CEA-708 captions. Most video streams carry both, which unfortunately means that most providers are still stuck with 608-era formatting, as the captions are authored for the lower common denominator.

One issue is that for MPEG-2 and H.264 video, frames are sent out of order. So, the decoder has to make sure to present the user data in the presentation order, not the order in which the frames are seen. If you get this wrong, you end up with a jumble of letter pairs.
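
The reordering problem can be sketched in a few lines of Python. This is an illustration only: it assumes each frame's caption bytes arrive tagged with a presentation timestamp, and it uses a fixed reorder depth of two frames, which is a made-up number (a real decoder would take the depth from the stream itself). The function name and the sample data are invented.

```python
import heapq
from typing import Iterable, Iterator, Tuple


def in_presentation_order(frames: Iterable[Tuple[int, str]],
                          reorder_depth: int = 2) -> Iterator[str]:
    """Yield caption payloads sorted by timestamp using a small reorder buffer."""
    heap = []
    for pts, payload in frames:
        heapq.heappush(heap, (pts, payload))
        # Once the buffer holds more than reorder_depth frames, the earliest
        # one can no longer be overtaken and is safe to release.
        if len(heap) > reorder_depth:
            yield heapq.heappop(heap)[1]
    while heap:                      # flush whatever is left at end of stream
        yield heapq.heappop(heap)[1]


# (presentation timestamp, caption characters) in the order frames are decoded.
decode_order = [(0, "HE"), (3, "WO"), (1, "LL"), (2, "O "), (5, "D!"), (4, "RL")]

naive = "".join(payload for _, payload in decode_order)
fixed = "".join(in_presentation_order(decode_order))
print(naive)
print(fixed)
```

Reading the pairs in decode order produces exactly the jumble of letter pairs described above, while the small buffer restores the intended text.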

I’ve seen broadcasters do crazy things, like have their 608-to-708 converter output all the text, but put them into windows that are always hidden then deleted before the command that would show them. There’s a lot of fallback logic to just show the 608 data if the 708 data is unusable that gets triggered from time to time.

I did a talk about one of the bugs I wrote by mistake when I was working on pulling caption data from H.265 video, it’s online at https://www.youtube.com/watch?v=8KO0LobHZvY

Oh, these remarks are bringing back painful recollections of dealing with the CC on DVDs. As noted, all the pairs of CC bytes for a GOP (Group of Pictures) were sent at once. If the player entered trick mode (skipping or fast-forward), getting the captioning restarted was a hassle. It’s been too long to remember all the details, but I’m pretty sure that sometimes we would find a mismatch between the quantity of caption bytes and the number of frames in the GOP. Another nightmare: applying the CC text properly when playing in 3:2 pulldown mode!

By the way, if anyone wants to experiment with ATSC captions, I found a couple of posts from five-some years ago, doing this with a SDR and software.

1. This fellow got it working with a HackRF and GNU radio.

https://medium.com/@rxseger/receiving-atsc-digital-television-with-an-sdr-76b03a863fea

2. And this guy used an SDRPlay and GNU radio:

http://coolsdrstuff.blogspot.com/2015/09/watching-atsc-hdtv-on-sdrplay-rsp.html

These are both so old I doubt they will work as-documented, but it should be enough to get you started.

One wonders why a digital closed captioning standard wasn’t firmly written in stone along with ATSC?

Well, that was the reason for EIA-708 captions. I don’t know how deeply it was etched in stone, however.

in Australia teletext was all the rage at first

after a while only channel 7 kept the service going

2,9 and 10 only used it for subtitles

all kinds of stuff, weather news, lots of racing

at the TAB or PubTAB (betting shops) there were heaps of TVs with teletext showing results

there is a Linux program that will decode Ttext

fun fact, if you recorded something off channel 7, you got all the teletext channels to browse

Although most likely created for the deaf, CC can be used to teach children to learn English words, or I guess any language, and non-English viewers to learn word spellings…. I use it to understand Jeopardy questions/answers

Decades ago, when TV was analog, Motorola (where I then worked) made the 6801CC MCU with a built-in data slicer. An interrupt could be enabled to announce the arrival of the two characters from line 21 in a pair of registers. I wrote the 608-compliant code for a TV manufacturer. I got that job because of all the real-time 68xx assembly language I’d written, not because I was a TV engineer. That 608-compliant videotape was the answer to a prayer. I just about wore it out. 608, while much simpler than 708, was still capable of a lot more than I ever saw on any broadcast program or videotape. The MCU was also an I2C controller, which our customer used to control the rest of the set. Because of the high voltages for the CRT, it continuously wrote the configuration data to all the peripherals to recover from possible static discharge.

Do you have a link for that?

I see MC68HC05CC1, and also MC144143.

Hi Chris..

With the first article, I discovered the PAL “line 22”, and I searched for (and found) a VHS tape with CC on it (in PAL). I wrote a piece about it (in French): https://www.journaldulapin.com/2021/05/24/pal-line-22/

That’s cool, Dandu! And to my surprise I was able to catch about 1/3 of it using my rusty French from university (it helps that it is a technical topic with many “loan words” and abbreviations).

I’m very scared to let the world know of a project I thought of….a commercial killer, that read the CC,… had a DB , and muted the TV , …. now the world knows …. I’m done for

In 1997, I started using a Commodore monitor with a VCR for TV reception. And on one channel, a square block would appear and flash in the upper right corner when a commercial was about to come on. It may have happened with another channel, I forget. I assumed overscan on the monitor let me see something that wasn’t intended for public viewing, but who knows.

It was consistent, and I wondered why they’d provide such information. It was really useful, I could be ready to get a snack.

In the UK at least, that “cue mark” was a purposeful signal to control centres (in ITV’s case, the regional distributors) that a break for advertisements was imminent, and that they should queue up their adverts (which differed per region) to play. This is back when it was all done manually!

The marks were visible to all, but CRTs from the 70s/80s sometimes had such wild overscan that many people would never be fully aware of them.

When I was a kid, I remember visiting my Uncle and he showed me a widget that muted TV commercials. Don’t get too excited, it wouldn’t work today. This was in an era when many TV movies were still broadcast in B&W but the commercials were in color. This widget picked up on the presence of the color burst to signal a commercial, whereupon it muted the audio.

To expand on a couple of points that were discussed in the Hackaday podcast this week on this topic:

(1) Regarding the VBI and digital video… it’s a carry over from the CRT days, and digital video timings do indeed preserve the VBI “gap”. Keep in mind that a digital video connection to a display is essentially in real-time, and while most of our modern displays aren’t CRTs and don’t require a flyback time, there are HD CRTs out in the wild. And don’t forget digital video timings support 480i Standard Definition TV as well, so you don’t even need a fancy HD CRT TV to realize that digital video signals like HDMI must preserve the VBI. But by agreed upon standards, or rather the absence of such, there is no mechanism to transmit the piddly few bytes per frame of CC data in digital video formats. This is not for any technical reason. As I recall, the VBI is used to send all sorts of other digital data, such as audio, for example. The point here is that while there IS a VBI defined in modern digital video interfaces, there is no mechanism defined for it to carry the captions.

(2) Considering the conclusion of the above, you can never play with captions by looking at HDMI signals. What you can do in many cases is playback a DVD or BD (if you’re lucky and the disc is equipped with captions) at 480i mode, and use the composite video from the player (if you’re doubly lucky, and your player even HAS a composite video output) to look at the analog captioning signal that’s embedded into the video. Some players have a non-obvious way to set the analog output to 480i while playing at higher resolutions on the HDMI output. Other players don’t let you do this. If you get an OTA receiver and have the patience to hack around in the firmware, you can eventually extract the EIA-708 bitstream from the ATSC video. You might find real 708 captions, but more than likely you’ll just find older 608 captions that have been transcoded to 708.

(3) I may or may not have the WGBH CC test VHS tapes archived for safe keeping. No further comment on this.