The news is full of speculation about chatbots like GPT-3, and even if you don’t care, you are probably the kind of person that people will ask about it. The problem is, the popular press has no idea what’s going on with these things. They aren’t sentient or alive, despite some claims to the contrary. So where do you go to learn what’s really going on? How about Stanford? Professor [Christopher Potts] knows a lot about how these things work and he shares some of it in a recent video you can watch below.

One of the interesting things is that he shows some questions that one chatbot will answer reasonably and another will not. As a demo or a gimmick, that’s not a problem. But if you are using it as, say, your search engine, getting the wrong answer won’t amuse you. Sure, you can do a conventional search and find wrong things, but they will be embedded in a lot of context that might help you decide they are wrong, along with, hopefully, some other things that are not wrong. You have to decide.

If you’ve ever used a product like Grammarly or even a simple spell checker, it is much the same. It suggests corrections, but you must make sure they aren’t themselves incorrect. Wrong suggestions don’t happen often, but they do happen.

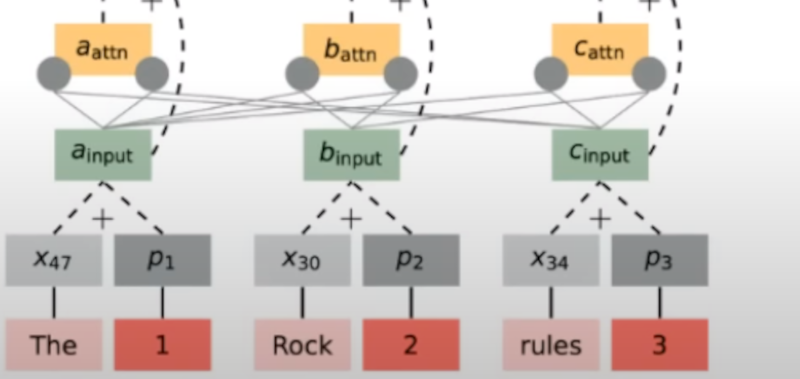

On the technical side, the internal structure of all of these programs uses something called “the transformer” that looks at input words and their positions. The idea came mostly out of Google in a 2017 paper and has (no pun intended) transformed language processing, resulting in things like GPT-3 that we are seeing today.
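To make “input words and their positions” a little more concrete, here is a minimal sketch in plain Python. It is not code from the lecture or from any real model: the three-word vocabulary and its `TOY_EMBEDDINGS` vectors are made up for illustration, the position vectors follow the sinusoidal scheme described in the 2017 paper, and the attention step leaves out the learned weight matrices that a real transformer would train.

```python
import math

# Hypothetical 4-dimensional word vectors, invented for this sketch.
TOY_EMBEDDINGS = {
    "dog": [1.0, 0.0, 0.0, 0.0],
    "bites": [0.0, 1.0, 0.0, 0.0],
    "man": [0.0, 0.0, 1.0, 0.0],
}

def positional_encoding(position, dim):
    """Sinusoidal position vector: sine on even indices, cosine on odd ones."""
    vec = []
    for i in range(dim):
        # Each sin/cos pair shares one frequency, set by the even index.
        angle = position / (10000 ** ((i - i % 2) / dim))
        vec.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
    return vec

def embed(tokens, table):
    """Add each word's vector to the vector for its position in the sentence."""
    dim = len(next(iter(table.values())))
    return [
        [e + p for e, p in zip(table[tok], positional_encoding(pos, dim))]
        for pos, tok in enumerate(tokens)
    ]

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Scaled dot-product attention with no learned projections (an assumption)."""
    dim = len(vectors[0])
    output = []
    for query in vectors:
        # Score this word against every word in the sentence, itself included.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # The new vector is a weighted blend of all the input vectors.
        output.append(
            [sum(w * v[j] for w, v in zip(weights, vectors)) for j in range(dim)]
        )
    return output

a = self_attention(embed(["dog", "bites", "man"], TOY_EMBEDDINGS))
b = self_attention(embed(["man", "bites", "dog"], TOY_EMBEDDINGS))
```

Because the position vectors are added before attention runs, “dog bites man” and “man bites dog” produce different results even though they contain the same words. Each piece is only a few lines of arithmetic, which fits the point that the whole thing is built from simple parts.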

According to [Potts], the seemingly complex algorithm is a composition of simple parts, but no one really understands why it works as well as it does. There’s more in the hour-long lecture, and it is an hour well spent if you are interested in this trending technology.

Of course, as with all high technology, you don’t necessarily have to completely understand it to use it. Getting things wrong is one problem. Copying things you shouldn’t is another. We can’t predict where all this is going, but it is definitely going somewhere. Until then, it might be true that we don’t completely understand chatbots. But it is also true that they don’t completely understand us.

Surprisingly, Grammarly is often incorrect to the point that it irks me. It does do a decent job of fixing *ahem* grammatical errors, but it introduces more errors than it fixes.

So it’s just following Muphry’s Law?

Never used it myself, but I always wondered how well it could do with regional variations in ‘correct’, as grammar is not as simple as a ‘colour is spelt color here’ lookup; there are whole stock phrases that are ‘wrong’ under a stricter interpretation of modern grammar.

ChatGPT and other LLMs seem to be excellent implementations of Neal Stephenson’s Artificial Inanity tools mentioned in his book Anathem.

Worth watching but a few caveats arise. One: guy is definitely overenthusiastic and merely mentions any and all problems that need to be solved. Two (36:40):

No, technology hasn’t democratised anything yet, regulations have. In a world of technology without regulation there is no democracy whatsoever, there is only tyranny of capital.

Well, we’ve inevitably found new ways to screw things up that we then patch with regulation. But I wouldn’t say it’s never helped. Prosthetics? Personal transport? And of course it’s much easier to participate in democracy when you’re not starving and sick.

This one? Nah, just another way to chase seeming good over being right, and making tech capable of biases in the process.

@steelman

How true.

So with a little bit of luck, hyper-capitalist marketing will evolve from SEO to ChatGPT-O? Delightful.