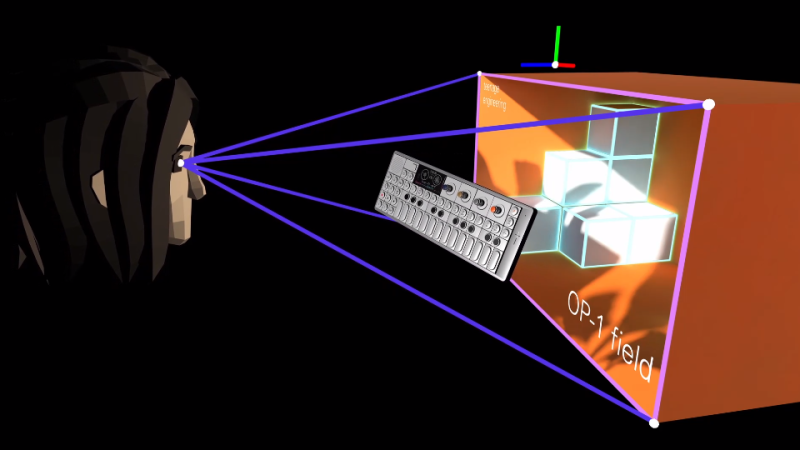

[Russ Maschmeyer] and Spatial Commerce Projects developed WonkaVision to demonstrate how 3D eye tracking from a single webcam can support rendering a graphical virtual reality (VR) display with realistic depth and space. Spatial Commerce Projects is a Shopify lab working to provide concepts, prototypes, and tools to explore the crossroads of spatial computing and commerce.

The graphical output provides a real sense of depth and three-dimensional space using an optical illusion that reacts to the viewer’s eye position: the tracked eye position is used to render view-dependent images. The computer screen is made to feel like a window into a realistic 3D virtual space, where objects beyond the window appear to have depth and objects in front of the window appear to project out into the space in front of the screen. The downside is that the experience only works for one viewer.
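One common way to get this "window" effect is an off-axis (asymmetric) projection whose frustum is recomputed from the eye position every frame. The sketch below is an illustration under stated assumptions, not WonkaVision's actual code: the screen is assumed to be a rectangle centered at the origin in the plane z = 0, the eye sits at a positive z in front of it, and all lengths share one unit. The names `Frustum` and `off_axis_frustum` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Frustum:
    """Bounds of the view volume at the near plane (glFrustum-style)."""
    left: float
    right: float
    bottom: float
    top: float
    near: float
    far: float


def off_axis_frustum(eye_x, eye_y, eye_z, screen_w, screen_h,
                     near=0.01, far=10.0):
    """Frustum for an eye at (eye_x, eye_y, eye_z) looking through a screen.

    Assumption: the screen is centered at the origin in the z = 0 plane
    and eye_z is the eye's distance in front of it.
    """
    if eye_z <= 0:
        raise ValueError("eye must be in front of the screen (eye_z > 0)")
    # Similar triangles: project the screen edges, as seen from the eye,
    # onto the near plane.
    scale = near / eye_z
    return Frustum(
        left=(-screen_w / 2 - eye_x) * scale,
        right=(screen_w / 2 - eye_x) * scale,
        bottom=(-screen_h / 2 - eye_y) * scale,
        top=(screen_h / 2 - eye_y) * scale,
        near=near,
        far=far,
    )


# Eye centered 0.6 m from a 0.5 m x 0.3 m screen: symmetric frustum.
centered = off_axis_frustum(0.0, 0.0, 0.6, 0.5, 0.3)
# Eye moved 0.1 m to the right: the frustum skews to the left.
shifted = off_axis_frustum(0.1, 0.0, 0.6, 0.5, 0.3)
```

In a renderer, these bounds would feed the projection matrix, and the virtual camera would also be translated to the eye position so the scene stays anchored to the physical screen.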

Eye tracking is performed using Google’s MediaPipe Iris library, which relies on the fact that the horizontal iris diameter of the human eye is roughly 11.7 mm for most people. Because that size is nearly constant, the apparent size of the iris in the webcam image lets the library estimate how far the eye is from the camera, in addition to locating and tracking the irises with high accuracy.
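The role of the 11.7 mm figure can be illustrated with a simple pinhole-camera model: an object of known size that appears smaller in the image must be farther away. The sketch below is not MediaPipe's API. The function names are hypothetical, and it assumes the iris center and diameter in pixels are already available from a tracker and that the camera's focal length in pixels is known from calibration.

```python
IRIS_DIAMETER_MM = 11.7  # typical horizontal iris diameter cited above


def eye_distance_mm(iris_diameter_px, focal_length_px):
    """Distance from camera to eye using similar triangles (pinhole model)."""
    if iris_diameter_px <= 0:
        raise ValueError("iris diameter in pixels must be positive")
    return focal_length_px * IRIS_DIAMETER_MM / iris_diameter_px


def eye_position_mm(iris_cx_px, iris_cy_px, iris_diameter_px,
                    focal_length_px, image_w_px, image_h_px):
    """Approximate 3D eye position in camera coordinates.

    Assumptions: the principal point is the image center, and x and y
    follow image conventions (x to the right, y downward).
    """
    z = eye_distance_mm(iris_diameter_px, focal_length_px)
    # Back-project the pixel offset from the image center to depth z.
    x = (iris_cx_px - image_w_px / 2) * z / focal_length_px
    y = (iris_cy_px - image_h_px / 2) * z / focal_length_px
    return x, y, z


# An iris spanning 23.4 px with a 1000 px focal length is about 500 mm away.
x, y, z = eye_position_mm(740, 360, 23.4, 1000, 1280, 720)
```

A position like this, converted into the screen's coordinate frame, is the kind of input a view-dependent renderer needs.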

Generation of view-dependent images based on tracking a viewer’s eye position was inspired by a classic hack from Johnny Lee to create a VR display using a Wiimote. Hopefully, these eye-tracking approaches will continue to evolve and provide improved motion-responsive views into immersive virtual spaces.

Most of the positive discussion about these kinds of projects doesn’t know about, or doesn’t mention, the following:

>You need to close one eye to go from “fun effect” to “stunning optical illusion.”

I remember the Wiimote demonstration from way back. I never realized this either until I read this post. I’d still like to try this out sometime…

If you’ve got an iPhone, search for “parallax” on the App Store. It returns a free app that thoroughly demystifies this phenomenon and kind of kills your excitement. At least, it did for me.

Neat project!

Instantaneous binocular cues and perspective motion parallax are two major depth cues for human vision... apparently.

With a display that shows one perspective only, the instant binocular cue can “argue” with the continuous motion parallax (moving head) cue, and cause discomfort for some viewers.

A display with a lenticular array that provides multiple instantaneous horizontal views, while also correcting for eye position perspective, can bring some degree of added comfort for some viewers.

Very excited for displays that require no glasses or headgear, yet still provide strong 3D cues.

Tracking the pupil and perspective correcting the on-screen view is very clever :)

I have an old Toshiba Qosmio F-125 with a ‘glasses free 3D’ lenticular array. The effect is awesome when done right.

I got involved with 3D cameras while working with Grass Valley Group back in 2008 and 2009. One thing we learned was that you can very easily overdo 3D and give people headaches and make them sick. With 3D, less can be more. Avoid too much difference between the left and right eyes. Brightness, contrast, and colors also need to be closely matched between the right and left eye. There’s the concept of a depth budget: if something goes from far away to very close, the differences between left and right can get too big for the brain to put together in the close-in images, resulting in the aforementioned headaches, double vision, and motion sickness. One very interesting thing we found: if you reverse the left and right eyes, it does some very interesting things to your brain. At first the scene looks 2D, but the brain tries to make sense of the images. So it starts to work on small areas of the picture, and little patches of the image start to form inside-out, reversed 3D pictures of what is there. But the entire scene can’t be properly put together by the brain because the 3D is reversed, so your poor brain is left to make sense of only small patches of the scene. If you stare at the image long enough, the patches get larger, but when you stop looking at the image and look at the room, you find that your regular 3D vision is somewhat messed up for a few minutes as your brain figures out that, oh, you’re back to normal now.

New forms of torture.

It’s not a case of ‘too much’ or ‘too little’, but whether you have conformed to Orthostereo (http://www.leepvr.com/spie1990.php) or have failed to conform to Orthostereo. ICD matches IPD or it does not, the angle of view matches the angle subtended by the display or it does not, etc. There is only one correct solution to the image projection problem (and it’s unique for each user), and deviation from it can rapidly introduce discomfort.

Isn’t this what Nintendo did for their second-iteration 3DS, the “New” Nintendo 3DS? That used facial tracking to adjust the already lenticular LCD’s viewport to compensate for slight movements of the user, addressing the complaints some had about the narrow 3D field of view of the previous 3DS.

Yes, although the screen isn’t lenticular; it uses a second LC layer as a variable parallax mask.

This reads as a copy/paste of a press release mailed out by a PR firm.

I’m not saying it IS. But it certainly does the corpo-buzzword rodeo.

Very much. It’s an experiment by Shopify to try to enhance online shopping (aka sell more stuff).