Once upon a time, the cathode ray tube was pretty much the only type of display you’d find in a consumer television. As the analog broadcast world shifted to digital, we saw the rise of plasma displays and LCDs, which offered greater resolution and much slimmer packaging. Then there was the so-called LED TV, confusingly named, for it was merely an LCD with an LED backlight. The LEDs were just lamps, with the liquid crystal doing all the work of displaying an image.

Today, however, we are seeing the rise of true LED displays. Sadly, decades of muddled marketing messages have polluted the terminology, making it a confusing space for the modern television enthusiast. Here, we’ll explore how these displays work and disambiguate what they’re being called in the marketplace.

The Rise Of Emissive Displays

When it comes to our computer monitors and televisions, most of us have got used to the concept of backlit LCD displays. These use a bright white backlight to actually emit light, which is then filtered by the liquid crystal array into all the different colored pixels that make up the image. It’s an effective way to build a display, with a serious limitation on contrast ratio because the LCD is only so good at blocking out light coming from behind. Over time, these displays have become more sophisticated, with manufacturers ditching cold-cathode tube backlights for LEDs, before then innovating with technologies that would vary the brightness of parts of the LED backlight to improve contrast somewhat. Some companies even started using arrays of colored LEDs in their backlights for further control, with the technology often referred to as “RGB mini LED” or “micro RGB.” This still involves an LCD panel in front of the backlight, limiting contrast ratios and response times.

The holy grail, though, would be to ditch the liquid crystal entirely, and just have a display fully made of individually addressable LEDs making up the red, green, and blue subpixels. That is finally coming to pass, with manufacturers launching new television lines under the “Micro LED” name. These are true “emissive” displays, where the individual red, blue, and green subpixels are themselves emitting light, not just filtering it from a backlight source behind them.

These displays promise greater contrast than backlit LCDs, because individual pixels can be turned completely off to create blacker blacks. Response times are also fast because LEDs switch on and off much more quickly than liquid crystals can react. They’re also relatively power efficient, as there’s no need to supply current to pixels that are off. Contrast this to LCDs, which are always spending power on turning some pixels black in front of a glowing backlight which is also drawing power. Viewing angles of emissive displays are also top-notch. Inorganic LEDs also have long lifetimes, which makes them far more desirable than OLED displays (discussed further below). Their high brightness also makes them ideal for use in bright conditions, particularly where sunlight is concerned.

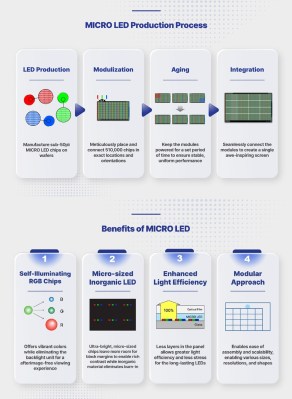

Given the many boons of this technology, you might question why it’s taken true LED displays this long to hit the market. The ultimate answer comes down to cost and manufacturability. If you’ve ever built your own LED array, you’ve probably noted the engineering challenges in reducing pixel size and increasing resolution. When it comes to producing a 4K display, you’re talking about laying down 8,294,400 individual RGB pixels, or nearly 25 million LED subpixels, all of which need to work flawlessly and be small enough to not show up as individually visible pixels from typical viewing ranges. Other technologies like LCDs and OLEDs have the benefit that they can be easily produced with lithographic techniques in great sizes, but the technology to produce pure LED displays on this scale is only just coming to fruition.
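To put the scale in perspective, here is a quick back-of-the-envelope calculation. It is only a sketch: it assumes the standard consumer 4K (UHD) resolution of 3840 × 2160 and three LEDs per pixel, and the per-LED yield used at the end is a purely hypothetical figure chosen for illustration, not anything a manufacturer has published.

```python
# Back-of-the-envelope emitter count for a 4K all-LED panel.
UHD_WIDTH, UHD_HEIGHT = 3840, 2160   # consumer "4K" (UHD) resolution
SUBPIXELS_PER_PIXEL = 3              # assumed: one red, one green, one blue LED


def pixel_count(width=UHD_WIDTH, height=UHD_HEIGHT):
    """Number of full RGB pixels on the panel."""
    return width * height


def led_count(width=UHD_WIDTH, height=UHD_HEIGHT):
    """Number of individual LED emitters, counting each subpixel."""
    return pixel_count(width, height) * SUBPIXELS_PER_PIXEL


def expected_dead_leds(per_led_yield, width=UHD_WIDTH, height=UHD_HEIGHT):
    """Expected faulty LEDs if each LED works independently with this probability."""
    return led_count(width, height) * (1.0 - per_led_yield)


if __name__ == "__main__":
    hypothetical_yield = 0.9999  # illustrative assumption only
    print(f"RGB pixels:     {pixel_count():,}")
    print(f"Individual LEDs: {led_count():,}")
    print(f"Expected dead LEDs at {hypothetical_yield:.2%} yield: "
          f"{expected_dead_leds(hypothetical_yield):,.0f}")
```

Even with that made-up 99.99% per-LED yield, a panel would start out with a couple of thousand dead emitters, which is why repair and redundancy matter so much for this technology.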

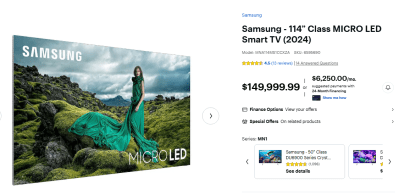

You can purchase an all-LED TV today, if you so desire. Just note that you’ll pay through the nose for it. Few models are on the market, but Best Buy will sell you a 114″ Micro LED set from Samsung for the charming price of $149,999.99. If that’s a bit big for your house, condo, or apartment, you might consider the 89″ model for a more acceptable $109,999.99. Meanwhile, LG has demonstrated a 136″ model of a micro LED TV, but there have been no concrete plans to bring it to market. Expect it to land somewhere firmly in the six-figure range, too.

If you’re not feeling so flush, you can get a lesser “Micro RGB” TV if you like, which combines a fancy RGB matrix backlight with LCD technology as discussed above. Even then, a Samsung R95 television with Micro RGB technology will set you back $29,999.99 at Best Buy, or you can purchase it on a payment plan for $1,250 a month. In fact, with the launch of these comparatively affordable TVs, Samsung has gone somewhat quiet on its Micro LED line since initially crowing about it in 2024. Still, whichever way you go, these fancy TVs don’t come cheap.

But What About OLED?

It’s true that emissive LED displays have existed in the market for some time, but not using traditional light-emitting diodes. These are the popular “OLED” displays, with the acronym standing for “organic light emitting diode.” Unlike standard LEDs, which use inorganic semiconductor crystals to emit light, OLEDs instead use special organic compounds in a substrate between electrodes, which emit light when electricity is applied. They can readily be fabricated in large arrays to create displays, which are used in everything from tiny smartwatches to full-sized televisions.

You might question why the advent of “proper” LED displays is noteworthy given that OLED technology has been around for some time. The problem is that OLEDs are somewhat limited in their performance versus traditional inorganic LEDs. The main area in which they suffer is longevity, as the organic compounds are susceptible to degradation over time. The brightness of individual pixels in an OLED display tends to drop off very quickly compared to inorganic LEDs. A display can diminish to half of its original brightness in just a few years of moderate to heavy use. In particular, blue OLED subpixels tend to degrade faster than red or green subpixels, forcing manufacturers to take measures to account for this over the lifetime of a display. Peak brightness is also somewhat limited, which can make OLED displays less attractive for use in bright rooms with lots of natural light. Dark spots and burn-in are also possible, at rates greater than those seen in contemporary LCD displays.

The limitations of OLED displays have not stopped them gaining a strong position in the TV marketplace. However, the technology will be unlikely to beat true LED displays in terms of outright image quality, brightness, and performance. Cost will still be a factor, and OLEDs (and LCDs) will still be relevant for a long time to come. However, for now at least, the pure LED display promises to become the prime choice for those looking for a premium viewing experience at any cost.

Featured image: “Micro LED” displays. Credit: Samsung

Isn’t OLED non organic/non halal?

OLED is short for: Organic Light-Emitting Diode

It does not have meat as such so I don’t know how that works in terms of halalness.

I’ve heard that it is Kosher. ;)

Maybe not halal but probably haram. Or if it is made on this side of the world then it is sinful :)

“is your TV vegan?”

My TV is not, but my tires are. Can’t leave the car parked outside in one spot for too long, the squirrels will start snacking on my Dunlops.

Maybe try those tire-shine sprays?

Or do they like that too I wonder.

Perfect black levels yay

Not perfect unless built on a substrate of vantablack! I want darker-than-dark darks!

Or hantablack… if you want a rodent-virus-based substrate.

Not if the coating reflects light from the room.

Matte coatings look less sharp and somewhat hazy, glossy coatings are sharper, but act like a mirror.

Perhaps they can use special coatings that make use of the narrow spectrum of the LEDs and block all other wavelengths. Or block light from certain angles.

Until then we need to darken the room and limit reflections from the walls back to the TV to enjoy good black levels.
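To put rough numbers on that, a common way to estimate contrast in a lit room is to add the reflected room light to both the white level and the black level. This is a minimal sketch, assuming a diffuse (Lambertian) screen surface so that reflected luminance is reflectance × illuminance / π. The 500-nit panel, the 2% reflectance, and the lux values are made-up illustrative figures, not measurements of any real TV.

```python
import math


def reflected_luminance(illuminance_lux, reflectance):
    """Luminance (cd/m^2) of room light bounced off a diffuse screen surface."""
    return reflectance * illuminance_lux / math.pi


def ambient_contrast(white_nits, black_nits, illuminance_lux, reflectance):
    """Contrast ratio once reflected room light is added to both levels."""
    glare = reflected_luminance(illuminance_lux, reflectance)
    darkest = black_nits + glare
    if darkest == 0:
        return math.inf  # perfect black in a perfectly dark room
    return (white_nits + glare) / darkest


if __name__ == "__main__":
    # Hypothetical emissive panel: 500 nits white, true zero black, 2% reflectance.
    for lux in (0, 1, 10, 100):
        ratio = ambient_contrast(500, 0.0, lux, 0.02)
        print(f"{lux:>4} lux -> contrast {ratio:,.0f}:1")
```

Under these assumptions the "infinite" contrast of an emissive panel collapses to a few hundred to one in an ordinarily lit room, which is why the coating and the room matter as much as the pixels.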

We just need to come up with panels with active noise cancelling technology and light band steering, like audio and radio waves… how hard can it be.

Antireflective coatings were common on good CRTs. LCDs seem bereft of them.

good thing no one has spent any time at all researching this or releasing products to the market to address it

just an anecdote about OLEDs…i got a phone with an OLED display a long time ago, and apart from looking really great with its true blacks, it enabled a use case…i used it to read in bed, with the brightness turned down ludicrously low. Letters were still sharp and easy to discern against the true black background. So recently when a new phone happened to have OLED, i looked forward to revisiting that use case. But it’s no good! Even though the new phone seems to truly not “emit” light from black parts of the display, its substrate is basically gray (reflective) instead of black. So even in dim room lighting, the blacks still glow from reflected ambient light. And in a dark setting with the brightness turned all the way down, the inactive pixels still ?reflect? some of the light from the active pixels. The dim letters on a black background are not sharp! Not sure if the fact that the new one’s cover plate is plastic instead of glass/transparent-aluminum matters to this.

There are so many consequential details in display technology.

Hi Greg! I noticed something similar on my last phone and it turned out to be the reading mode that in theory reduces blue light emission. When it is enabled, the blacks are grey instead of black. Just in case you are suffering the same. I was really disappointed with the blacks until I discovered that.

For perfect black we will need to finally develop the LADs, Light-absorbing diodes. Unfortunately connecting the regular LEDs in reverse doesn’t seem to work for that.

And DASARs, for creating coherent antiphoton beams, projecting darkness on any surface.

Overdriving an LED does the trick – they turn black. Only problem is you can’t switch them back again.

I believe these are called Dark Emitting Diodes. DEDs, pronounced ‘dead’.

Could probably get away with coating the substrate the LEDs are mounted on with vantablack. Looks like the total area covered by the light absorbing coating would get you to a very high contrast ratio.

Even if the LEDs have longer lifespans, I’m sure manufacturers will figure out how to make the TVs obsolete after a few years.

The smart concept seems to work pretty well for them.

Within 5 years the first apps/functions will no longer function or function partially.

Then they start changing the plugs again.

Within another 5 years the screen format will become obsolete (SD -> HD -> 4K).

Then they start changing the plugs again.

The integrated DVD player has already been replaced by USB, which seems a pretty solid standard if you consider the first generation of connectors, but the codecs keep changing, so your movie won’t play… wrong filesystem, wrong codec, right codec but wrong framerate, right codec but now your soundbar doesn’t work. Yep, a soundbar, because those fancy new TVs don’t seem to have decent speakers. But no worries, your sound system is wireless, darn now I need an extension cord to plug in the wireless soundbar. In most cases it will connect, but only when it’s really important, connection is suddenly lost and you haven’t got the slightest idea what could be wrong… By the time you’ve got all of this figured out it’s time to go to bed anyway. Somehow, 30 years ago, I expected the future of television to be slightly less complicated.

i have a 41 inch 4k smarttv i use as a computer monitor. i have never once given it access to any of my wifi hotspots in an effort to avoid any obsolescence that can arrive that way. so far it’s working great this way.

Uh, what? That’s actually bad for your eyes. The pixels are bigger, because TV’s are usually a few meters away.

He didn’t specify how he’s using it exactly, but I’m pretty sure Gus’s eyeballs will be fine, even if he’s sitting right at a normal desk working distance.

Before 4k became commonplace, a 24″ HD computer monitor would’ve been a very normal thing to see on a desk.

A multi-monitor setup made of those was obviously less common but still totally normal for enthusiasts and people who needed it.

A 41″ 4k TV is just the equivalent of a 2×2 HD multi-monitor setup (albeit with 20.5″ monitors, not 24″).
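For anyone who wants to check the numbers, pixel pitch falls straight out of the diagonal size and the resolution. A small sketch, assuming square pixels and that the quoted diagonals are exact (real panels are usually a little off their nominal size):

```python
import math

MM_PER_INCH = 25.4


def pixel_pitch_mm(diagonal_inches, width_px, height_px):
    """Center-to-center pixel spacing in mm, assuming square pixels."""
    diagonal_px = math.hypot(width_px, height_px)
    return diagonal_inches * MM_PER_INCH / diagonal_px


if __name__ == "__main__":
    tv_4k = pixel_pitch_mm(41, 3840, 2160)        # the 41" 4K TV in question
    monitor_hd = pixel_pitch_mm(24, 1920, 1080)   # a typical 24" HD monitor
    quadrant = pixel_pitch_mm(41 / 2, 1920, 1080) # one quarter of the 4K panel

    print(f'41" 4K TV:       {tv_4k:.3f} mm')
    print(f'24" HD monitor:  {monitor_hd:.3f} mm')
    print(f'20.5" HD "tile": {quadrant:.3f} mm')
```

The 41″ 4K panel works out to a finer pitch than a 24″ HD monitor, so at the same desk distance its pixels are smaller, not bigger.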

This is why you use something more like AndroidTV or a media PC with Jellyfin.

I feel like if true LED tvs come to market then unless they are overdriven to death, we’d be able to swap the panel controller and keep the panel for years. New HDMI standard? Swap out the brains and be done.

There’s a ton of sub-types in OLED, especially in the phone space. QD-OLED, POLED, Super AMOLED, Super AMOLED+, Tandem OLED, AMOLED LTPO, and many more .. confusingly, some intertwine and a screen can be both.

The issue is they generally just label as OLED -or- their own branding (e.g. Super Actua) and you have to look at the device specs to see the underlying technology.

On top of that, you have different screen glasses that can change the amount of reflection and how the underlying panel looks to the eye.

Unlike some other core technologies where standardization groups were formed and some form of naming rules were developed — display companies, especially Samsung/LG, have really run wild in how they brand things and they are often very, intentionally, confusing (cough Samsung).

Agreed. There is so much OLED bashing in that article. Samsung seems to circle around LG OLED screens : QLED, mini and now micro LED.

That was a reply to Greg A .. for some reason it didn’t stick.

And then they start changing the plugs again.

Micro-LEDs also wear out pretty quickly if you get them hot, which isn’t particularly difficult seeing how densely packed they are. If you really crank the brightness up, you can cook the display in a matter of some hundreds or couple thousand hours, so the advantage of using them in very bright conditions is rather questionable.

They not only dim, they also shift in color, so even if you wear it out evenly and avoid burn-in, the primaries are shifting and the color gamut of the screen changes.

I think that shows a benefit to the very high cost of some of these displays… Faults like that can be worth dealing with using calibration if cost is high enough. Like, i have no idea what the technology is, but anecdotally i can report that a stuck pixel on the local basketball arena’s jumbotron that is present at the end of the season in March is ‘fixed’ before the opening game in October. i’m just hoping they don’t replace the whole honkin panel for that.

Standard procedure in the industry seems to be running the LEDs just hot enough that they start popping within 6 months of the end of warranty. If they pop without taking the whole array out with them, it will be hard to spot the first ones going on a 4K screen.

I’d like some information on how LEDs themselves are made.

I’ve wondered for a while why we couldn’t use the same processes that chip fabs use but on a much larger/coarser scale. I’d think the costs would go down if you aren’t shooting for nm precision and you wouldn’t need ultra purity or ultra clean everything because dust would cause fewer issues on really large scales.

I’d at least have thought that small devices could have these pure wafer LED screens.

True, pixels are what, 0.2 mm for PC (0.23 for mine) and maybe 0.3 – 0.5 mm for TV. Divided through 3 colors, maybe 0.05 mm to 0.15 mm per color. Pretty rough, in comparison.

The article didn’t really go into the details, but as you can see from the Production Process diagram, a big issue with some micro/nano-LED displays is that each color (red, green, blue) needs to be produced from different material substrates (due to the very nature of LEDs), then cut up into tiny dies that are then integrated onto the actual display substrate. In addition, you also need to make sure that you have uniform performance characteristics from all the individual LEDs you integrate.

Of course, companies are looking at ways to avoid the slicing/integrating process and instead just use a substrate of blue LEDs and then combine them with quantum dots or other materials that can change the emitted blue light into red or green light. While this greatly simplifies some aspects of manufacturing, it doesn’t solve all the problems. For one, we can’t simply produce a large blue LED substrate that is the size of a big TV screen. So either you need to produce tiles that can fit together without any seams, or else you have to figure out how to manufacture the LEDs directly onto a layer of glass or other cheap substrate that we can manufacture at the necessary size.

I’d actually like to know what the latest advances are regarding the problems I’ve mentioned. I only peek at display tech now and again. I suppose I should check out the recent SID proceedings.

I too wondered if single-color micro LED pixels plus quantum dots, so QD-OLED without the “O”, would be a good stepping stone for cheaper micro LED panels. 3 times larger pixels than individual R,G and B subpixels and of a single color should allow earlier manufacturing, better yields, etc.

But I think the industry slow walks technology transitions like this. They have no real incentive to make micro LED production more efficient, with smaller tiles maybe, larger pixels for use with quantum dot overlays, etc. Instead their incentives are to get the most out of their current factories tooled for LCD-based panels. The more they can tweak backlight technology and eke out fractionally better picture quality each year, the longer they can keep people upgrading and postpone large investments in retooling whole factories, or building new ones. Iterating on production improvements at small scales is also only an upside for them, even if maybe, in theory, they could go large-scale with micro LED production today if driven to by competitors.

I don’t know how they’re doing this with the RGB micro LEDs, but at least in principle the use of phosphors could fix the problem of combining different LED colors on a single substrate. In QLED displays, a violet backlight is used, and in place of the usual RGB filters behind the LCD pixels, they have phosphor filters that turn these sub-pixels into red or green, and just a yellowish filter for the blue pixels. The same could be done with micro LEDs, where a printed filter is placed in front of an array of violet LEDs, and this filter would have both the phosphors needed for R and G, as well as the filters needed for B pixels.

Then you have another LCD+.

True, you’re still blocking & wasting some of the light emitted, but it’s a tiny fraction compared to any kind of LCD.

Quantum dot phosphors are extremely efficient; rather than starting with white and blocking the unwanted color, they absorb the vast majority of light from the LED/OLED behind them and re-emit it at the desired wavelength.

No you don’t. There is no light valve layer. The phosphors just change the color of the light they receive from the violet LEDs. Sure, they’re not 100% efficient, but they still use ZERO power when displaying black.

You can’t calibrate the color gamut back. It’s permanently shifting how the display handles color and changing what “RGB” means on a per-pixel basis.

That was one of the major drawbacks of CRT monitors. After 4-5 years of use, even a good monitor was just “done” as far as color matching and photo editing etc. was concerned.

They’re usually made of replaceable tiles.

And how well-matched are the replacement tiles to the tiles that have x hours on them?

MicroLEDs do not experience significant brightness loss over time compared to OLEDs but these displays allow panel level tuning so if by some chance you did have some variation in brightness you could adjust the new panel accordingly.

Nobody’s going to be replacing individual panels on a MicroLED display because it would require dismantling the whole sandwich of glue and glass before you can even get to them.

And yes, MicroLEDs do experience significant brightness loss and color shift in a matter of hours if you run them hot. If the screen develops a hot spot and stays there for a couple hundred hours, the color gamut of that portion of the screen will be forever different from the rest of the panel and you can’t calibrate the error out.

Nobody is going to be replacing…..

Yeah you know everything about all as usual. DUDE seriously. Did you even watch the video? Do you even know whats being talked about here?

Not talking about your teeny little apartment microLED TV. We are discussing MicroLED videowalls. We run a 129 inch AWALL microled display at our church. We swapped out 3 panels due to manufacturing defects causing dead pixels. It took longer to address the return box than it did to swap out the panels.

I’m so sorry that I’m not specific enough for your pedantry. YES microleds will have issues if you run them out of their ideal specified parameters…duh.

UNLIKE OLEDS MicroLEDs do not experience significant brightness loss over time UNLESS A MORON OPERATES THEM OUTSIDE OF THEIR INTENDED POWER RANGE.

That was in reply to stadium LED displays getting their panels replaced, but the comment system misplaced it.

I recently saw this guy on YouTube with a 157″ awall micro led “TV”.

Modular, seamless micro led panels arranged in the desired size.

Great concept for screens this size. Makes transportation a lot easier.

50k $.

But not a real tv, actually just a big display. Needs extra external signals input device.

Power consumption was also not a joke. I think it needed a dedicated circuit.

But the picture was superb.

youtube. .. /qBd7_uxrq6c

And what is QLED exactly?

Also still LCD TVs. Just with a fancy backlight. A layer with quantum dots is illuminated/stimulated by short wave blue LEDs. The quantum dots convert this blue light into a special white light, supporting an extended color space.

LCD panels employ a backlight, a matrix of liquid crystal elements that act as switchable light valves, and a set of color filters to convert the white backlight to red, green, or blue light.

OLED (Organic Light-Emitting Diode) TVs do not use LCD (Liquid Crystal Display) technology. They are entirely different, self-emissive technology.

Traditional OLED displays use white emitting OLEDs along with red blue and green color filters. No quantum dots.

Newer QD-OLED screens use blue-emitting OLEDs; two thirds of them are coupled to quantum dots that convert their emission to red or green light. Three closely placed OLEDs, one raw blue, one coupled to red quantum dots, and one coupled to green quantum dots, are needed to produce white light.

(or as we used to call quantum dots, ‘phosphors’.)

phosphors and quantum dots are similar but not the same.

Phosphor emission color is primarily determined by the specific activator ions (rare-earth or metal ions) used to dope the material.

Quantum dot emission color is primarily determined by the physical size of the nanocrystal due to the quantum confinement effect. Smaller dots (approx. 2–3 nm) emit higher-energy, shorter-wavelength light (blue/green), while larger dots (5–6 nm) emit lower-energy, longer-wavelength light (red/orange).

Quantum dots (QDs) offer superior color purity, higher brightness, and better energy efficiency compared to traditional phosphors
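The size dependence can be roughly illustrated with the Brus effective-mass approximation, which adds a confinement term and a Coulomb correction to the bulk band gap. This is a sketch, not a design tool: the CdSe material constants below are approximate, commonly quoted values treated here as assumptions, the dots in actual displays may use other materials and core/shell structures, and this simple model is known to overestimate the blue shift for the very smallest dots.

```python
import math

# Physical constants (SI units)
H = 6.62607015e-34         # Planck constant, J*s
C = 2.99792458e8           # speed of light, m/s
E_CHARGE = 1.602176634e-19 # elementary charge, C
M_E = 9.1093837015e-31     # electron rest mass, kg
EPS0 = 8.8541878128e-12    # vacuum permittivity, F/m

# Assumed, approximate bulk CdSe parameters (illustrative only)
BAND_GAP_EV = 1.74         # bulk band gap
M_ELECTRON = 0.13          # electron effective mass, in units of M_E
M_HOLE = 0.45              # hole effective mass, in units of M_E
REL_PERMITTIVITY = 10.6    # relative dielectric constant


def emission_energy_ev(radius_m):
    """Brus estimate of the lowest exciton energy for a dot of this radius."""
    confinement = (H ** 2 / (8 * radius_m ** 2)) * (
        1 / (M_ELECTRON * M_E) + 1 / (M_HOLE * M_E)
    )
    coulomb = 1.8 * E_CHARGE ** 2 / (
        4 * math.pi * REL_PERMITTIVITY * EPS0 * radius_m
    )
    return BAND_GAP_EV + (confinement - coulomb) / E_CHARGE


def emission_wavelength_nm(diameter_nm):
    """Approximate emission wavelength (nm) for a dot of the given diameter."""
    radius_m = diameter_nm * 1e-9 / 2
    energy_j = emission_energy_ev(radius_m) * E_CHARGE
    return H * C / energy_j * 1e9


if __name__ == "__main__":
    for d in (4.0, 5.0, 6.0):
        print(f"{d:.1f} nm dot -> ~{emission_wavelength_nm(d):.0f} nm emission")
```

With these assumed constants a 6 nm dot comes out at roughly 600 nm (orange), and shrinking the dot pushes the emission toward shorter wavelengths, which is the trend described above.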

Thanks – good to know. I thought it was just a marketing thing.

What I long for are non-emissive displays however. Where the pixels only change the color of the surface, without being a lamp.

Well, if you can live with REALLY slow refresh rates, there are a few 23-36 inch color epaper options on the market.

AWALL makes a 157″ direct lit RGB LED TV you can get right now for between $50K ~ $55K.

Here is a review video of this glorious beast in an actual home.

https://youtu.be/qBd7_uxrq6c