The concept of artificial intelligence dates back far before the advent of modern computers, even as far back as Greek mythology. Hephaestus, the Greek god of craftsmen and blacksmiths, was believed to have created automatons to work for him. Another mythological figure, Pygmalion, carved a statue of a beautiful woman from ivory and then fell in love with it. Aphrodite imbued the statue with life as a gift to Pygmalion, who married the now-living woman.

Throughout history, myths and legends of artificial beings that were given intelligence were common. These varied from having simple supernatural origins (such as the Greek myths), to more scientifically-reasoned methods as the idea of alchemy increased in popularity. In fiction, particularly science fiction, artificial intelligence became more and more common beginning in the 19th century.

But it wasn’t until mathematics, philosophy, and the scientific method advanced enough in the 19th and 20th centuries that artificial intelligence was taken seriously as an actual possibility. It was during this time that mathematicians such as George Boole, Bertrand Russell, and Alfred North Whitehead began presenting theories formalizing logical reasoning. With the development of digital computers in the second half of the 20th century, these concepts were put into practice, and AI research began in earnest.

Over the last 50 years, interest in AI development has waxed and waned with public interest and the successes and failures of the industry. Predictions made by researchers in the field, and by science fiction visionaries, have often fallen short of reality. Generally, this can be chalked up to computing limitations. But a deeper problem, the question of what intelligence actually is, has been a source of tremendous debate.

Despite these setbacks, AI research and development has continued. Currently, this research is being conducted by technology corporations who see the economic potential in such advancements, and by academics working at universities around the world. Where does that research currently stand, and what might we expect to see in the future? To answer that, we’ll first need to attempt to define what exactly constitutes artificial intelligence.

Weak AI, AGI, and Strong AI

You may be surprised to learn that it is generally accepted that artificial intelligence already exists. As Albert (yes, that’s a pseudonym), a Silicon Valley AI researcher, puts it: “…AI is monitoring your credit card transactions for weird behavior, AI is reading the numbers you write on your bank checks. If you search for ‘sunset’ in the pictures on your phone, it’s AI vision that finds them.” This sort of artificial intelligence is what the industry calls “weak AI”.

Weak AI

Weak AI is dedicated to a narrow task. Apple’s Siri is one example. While Siri is considered to be AI, it is only capable of operating in a pre-defined range that combines a handful of narrow AI tasks. Siri can perform language processing, interpretations of user requests, and other basic tasks. But Siri doesn’t have any sentience or consciousness, and for that reason many people find it unsatisfying to even define such a system as AI.

Albert, however, believes that AI is something of a moving target, saying “There is a long running joke in the AI research community that once we solve something then people decide that it’s not real intelligence!” Just a few decades ago, the capabilities of an AI assistant like Siri would have been considered AI. Albert continues, “People used to think that chess was the pinnacle of intelligence, until we beat the world champion. Then they said that we could never beat Go since that search space was too large and required ‘intuition’. Until we beat the world champion last year…”

Strong AI

Still, Albert, along with other AI researchers, only defines these sorts of systems as weak AI. Strong AI, on the other hand, is what most laymen think of when someone brings up artificial intelligence. A Strong AI would be capable of actual thought and reasoning, and would possess sentience and/or consciousness. This is the sort of AI that defined science fiction entities like HAL 9000, KITT, and Cortana (in Halo, not Microsoft’s personal assistant).

Artificial General Intelligence

What actually constitutes a strong AI and how to test and define such an entity is a controversial subject full of heated debate. By all accounts, we’re not very close to having strong AI. But, another type of system, AGI (Artificial General Intelligence), is a sort of bridge between weak AI and strong AI. While AGI wouldn’t possess the sentience of a Strong AI, it would be far more capable than weak AI. A true AGI could learn from information presented to it, and could answer any question based on that information (and could perform tasks related to it).

While AGI is where most current research in the field of artificial intelligence is focused, the ultimate goal for many is still strong AI. After decades, even centuries, of strong AI being a central aspect of science fiction, most of us have taken for granted the idea that a sentient artificial intelligence will someday be created. However, many believe that this isn’t even possible, and a great deal of the debate on the topic revolves around philosophical concepts regarding sentience, consciousness, and intelligence.

Consciousness, AI, and Philosophy

This discussion starts with a very simple question: what is consciousness? Though the question is simple, anyone who has taken an Introduction to Philosophy course can tell you that the answer is anything but. This is a question that has had us collectively scratching our heads for millennia, and few people who have seriously tried to answer it have come to a satisfactory answer.

What is Consciousness?

Some philosophers have even posited that consciousness, as it’s generally thought of, doesn’t even exist. For example, in Consciousness Explained, Daniel Dennett argues that consciousness is an elaborate illusion created by our minds. This is a logical extension of the philosophical concept of determinism, which holds that every event results from prior causes, each of which has only a single possible effect. Taken to its logical extreme, deterministic theory would state that every thought (and therefore consciousness) is the physical reaction to preceding events (down to atomic interactions).

Most people regard this explanation as absurd: our experience of consciousness is so integral to our being that denying it seems unacceptable. However, even if one were to accept the idea that consciousness is possible, and also that oneself possesses it, how could it ever be proven that another entity also possesses it? This is the intellectual realm of solipsism and the philosophical zombie.

Solipsism is the idea that a person can only truly prove their own consciousness. Consider Descartes’ famous quote “Cogito ergo sum” (I think therefore I am). While to many this is a valid proof of one’s own consciousness, it does nothing to address the existence of consciousness in others. A popular thought exercise to illustrate this conundrum is the possibility of a philosophical zombie.

Philosophical Zombies

A philosophical zombie is a human who does not possess consciousness, but who can mimic consciousness perfectly. From the Wikipedia page on philosophical zombies: “For example, a philosophical zombie could be poked with a sharp object and not feel any pain sensation, but yet behave exactly as if it does feel pain (it may say “ouch” and recoil from the stimulus, and say that it is in pain).” Further, this hypothetical being might even think that it did feel the pain, though it really didn’t.

This problem is central to the debate surrounding strong AI. If we can’t even prove that another person is conscious, how could we prove that an artificial intelligence was? John Searle not only illustrates this in his famous Chinese room thought experiment, but further puts forward the opinion that conscious artificial intelligence is impossible in a digital computer.

The Chinese Room

The Chinese room argument as Searle originally published it goes something like this: suppose an AI were developed that takes Chinese characters as input, processes them, and produces Chinese characters as output. It does so well enough to pass the Turing test. Does it then follow that the AI actually “understood” the Chinese characters it was processing?

Searle says that it doesn’t, and that the AI is just acting as if it understood the Chinese. His rationale is that a man (who understands only English) placed in a sealed room could, given the proper instructions and enough time, do the same. This man could receive a request in Chinese, follow English instructions on what to do with those Chinese characters, and provide the output in Chinese. This man never actually understood the Chinese characters, but simply followed the instructions. Likewise, Searle argues, an AI would not actually understand what it is processing; it would just be acting as if it did.
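The man-in-the-room procedure can be caricatured in a few lines of Python. This is only a toy sketch: the `RULE_BOOK` entries, the `FALLBACK` reply, and the `chinese_room` function are hypothetical names invented for illustration, and a rule book that could actually pass the Turing test would need to be vastly richer than a lookup table. The point is simply that the code matches symbols against instructions and never consults their meaning.

```python
# Hypothetical rule book: incoming symbol strings mapped to outgoing ones.
# The entries are illustrative assumptions, not taken from Searle's paper.
RULE_BOOK = {
    "你好吗": "我很好",        # "How are you?" -> "I am fine"
    "你会说中文吗": "会",      # "Do you speak Chinese?" -> "Yes"
}

# Reply used when no rule matches ("Please say that again").
FALLBACK = "请再说一遍"


def chinese_room(message: str) -> str:
    """Return the reply the rule book dictates for this exact input.

    The function compares strings and copies out an answer. Nothing in it
    represents what any of the characters mean.
    """
    return RULE_BOOK.get(message, FALLBACK)


if __name__ == "__main__":
    print(chinese_room("你好吗"))
```

From the outside the replies look appropriate, which is exactly the gap between behavior and understanding that Searle is pointing at.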

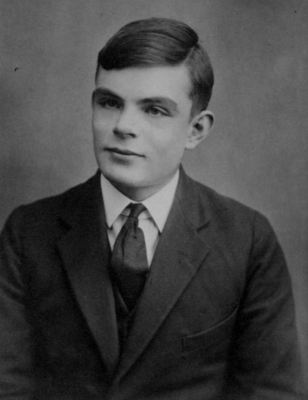

It’s no coincidence that the Chinese room thought exercise is similar to the idea of a philosophical zombie, as both seek to address the difference between true consciousness and the appearance of consciousness. The Turing Test is often criticized as being overly simplistic, but Alan Turing had carefully considered the kind of objection raised by the Chinese room before introducing his test. This was more than 30 years before Searle published his thoughts, but Turing had anticipated such a concept as an extension of the “problem of other minds” (the same problem that’s at the heart of solipsism).

Polite Convention

Turing addressed this problem by giving machines the same “polite convention” that we give to other humans. Though we can’t know that other humans truly possess the same consciousness that we do, we act as if they do out of a matter of practicality — we’d never get anything done otherwise. Turing believed that discounting an AI based on a problem like the Chinese room would be holding that AI to a higher standard than we hold other humans. Thus, the Turing Test equates perfect mimicry of consciousness with actual consciousness for practical reasons.

The task of defining “true” consciousness is, for now, best left to philosophers as far as most modern AI researchers are concerned. Trevor Sands (an AI researcher for Lockheed Martin, who stresses that his statements reflect his own opinions, and not necessarily those of his employer) says “Consciousness or sentience, in my opinion, are not prerequisites for AGI, but instead phenomena that emerge as a result of intelligence.”

Albert takes an approach which mirrors Turing’s, saying “if something acts convincingly enough like it is conscious we will be compelled to treat it as if it is, even though it might not be.” While debates go on among philosophers and academics, researchers in the field have been working all along. Questions of consciousness are set aside in favor of work on developing AGI.

History of AI Development

Modern AI research was kicked off in 1956 with a conference held at Dartmouth College. This conference was attended by many who later became experts in AI research, and who were primarily responsible for the early development of AI. Over the next decade, they would introduce software which would fuel excitement about the growing field. Computers were able to play (and win) at checkers, solve math proofs (in some cases, creating solutions more efficient than those done previously by mathematicians), and provide rudimentary language processing.

Unsurprisingly, the potential military applications of AI garnered the attention of the US government, and by the ’60s the Department of Defense was pouring funds into research. Optimism was high, and this funded research was largely undirected. It was believed that major breakthroughs in artificial intelligence were right around the corner, and researchers were left to work as they saw fit. Marvin Minsky, a prolific AI researcher of the time, stated in 1967 that “within a generation … the problem of creating ‘artificial intelligence’ will substantially be solved.”

Unfortunately, the promise of artificial intelligence wasn’t delivered upon, and by the ’70s optimism had faded and government funding was substantially reduced. Lack of funding meant that research was dramatically slowed, and few advancements were made in the following years. It wasn’t until the ’80s that progress in the private sector with “expert systems” provided financial incentives to invest heavily in AI once again.

Throughout the ’80s, AI development was again well-funded, primarily by the American, British, and Japanese governments. Optimism reminiscent of that of the ’60s was common, and again big promises about true AI being just around the corner were made. Japan’s Fifth Generation Computer Systems project was supposed to provide a platform for AI advancement. But that system never came to fruition, and this, along with other failures, once again led to declining funding in AI research.

Around the turn of the century, practical approaches to AI development and use were showing strong promise. With access to massive amounts of information (via the internet) and powerful computers, weak AI was proving very beneficial in business. These systems were used to great success in the stock market, for data mining and logistics, and in the field of medical diagnostics.

Over the last decade, advancements in neural networks and deep learning have led to a renaissance of sorts in the field of artificial intelligence. Currently, most research is focused on the practical applications of weak AI, and the potential of AGI. Weak AI is already in use all around us, major breakthroughs are being made in AGI, and optimism about artificial intelligence is once again high.

Current Approaches to AI Development

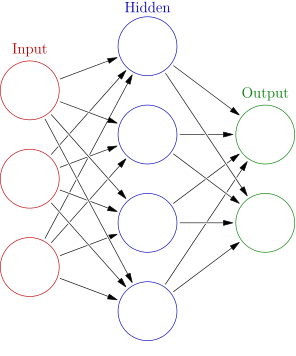

Researchers today are investing heavily in neural networks, which loosely mirror the way a biological brain works. While true virtual emulation of a biological brain (with modeling of individual neurons) is being studied, the more practical approach right now is deep learning performed by neural networks. The idea is that the way a brain processes information is important, but that it isn’t necessary for it to be done biologically.

Trevor Sands does similar work with neural networks for Lockheed Martin. His focus is on creating “programs that utilize artificial intelligence techniques to enable humans and autonomous systems to work as a collaborative team.” Like Albert, Sands uses neural networks and deep learning to process huge amounts of data intelligently. The hope is to come up with the right approach, and to create a system which can be given direction to learn on its own.

Albert describes the difference between weak AI and the more recent neural network approaches: “You’d have vision people with one algorithm, and speech recognition with another, and yet others for doing NLP (Natural Language Processing). But, now they are all moving over to use neural networks, which is basically the same technique for all these different problems. I find this unification very exciting. Especially given that there are people who think that the brain and thus intelligence is actually the result of a single algorithm.”

Basically, as an AGI, the ideal neural network would work for any kind of data. Like the human mind, this would be true intelligence that could process any kind of data it was given. Unlike current weak AI systems, it wouldn’t have to be developed for a specific task. The same system that might be used to answer questions about history could also advise an investor on which stocks to purchase, or even provide military intelligence.

Next Week: The Future of AI

As it stands, however, neural networks aren’t sophisticated enough to do all of this. These systems must be “trained” on the kind of data they’re taking in, and how to process it. Success is often a matter of trial and error for Albert: “Once we have some data, then the task is to design a neural network architecture that we think will perform well on the task. We usually start with implementing a known architecture/model from the academic literature which is known to work well. After that I try to think of ways to improve it. Then I can run experiments to see if my changes improve the performance of the model.”
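The baseline-then-experiment loop Albert describes can be sketched in miniature. What follows is a hedged toy, not his actual setup: it uses a single classic perceptron rather than a deep network, the task (learning logical AND) is an assumption chosen so the example stays tiny, and the only "change" being tested is the number of training passes. The names `AND_DATA`, `predict`, `train`, and `accuracy` are all made up for illustration.

```python
# Toy training data (an assumption for this sketch): the logical AND function.
AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]


def predict(weights, bias, inputs):
    """Fire (return 1) when the weighted sum of the inputs exceeds zero."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0


def train(data, epochs):
    """Classic perceptron rule: nudge the weights after every wrong answer."""
    weights, bias = [0, 0], 0
    for _ in range(epochs):
        for inputs, target in data:
            error = target - predict(weights, bias, inputs)
            weights = [w + error * x for w, x in zip(weights, inputs)]
            bias += error
    return weights, bias


def accuracy(model, data):
    """Fraction of examples the trained model classifies correctly."""
    weights, bias = model
    correct = sum(predict(weights, bias, x) == y for x, y in data)
    return correct / len(data)


# Start from a baseline, change one thing, then measure both.
baseline = train(AND_DATA, epochs=1)
variant = train(AND_DATA, epochs=10)

if __name__ == "__main__":
    print("baseline accuracy:", accuracy(baseline, AND_DATA))
    print("variant accuracy:", accuracy(variant, AND_DATA))
```

On this toy problem the single-pass baseline gets one of the four cases right, while the longer-trained variant gets all four. Real experiments compare far larger models on held-out data, but the loop of training, measuring, changing, and measuring again has the same shape.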

The ultimate goal, of course, is to find that perfect model that works well in all situations. One that doesn’t require handholding and specific training, but which can learn on its own from the data it’s given. Once that happens, and the system can respond appropriately, we’ll have developed Artificial General Intelligence.

Researchers like Albert and Trevor have a good idea of what the Future of AI will look like. I discussed this at length with both of them, but have run out of time today. Make sure to join me next week here on Hackaday for the Future of AI where we’ll dive into some of the more interesting topics like ethics and rights. See you soon!

so basically scientists were too STUPID to do actual AI so they just upcycled stuff that isn’t really AI and called it weak AI. and then they have the temerity to lambast the LAY MEN for not giving them a pat on the back for a job well done well I say to all you so called “SCIENTISTS” you are the true laymen! (lame-men).

Hahaha, AI trolls are sooooooo easy to spot! No intelligence here, artificial or otherwise. Back under your bridge pff!

No. Seriously:

>“There is a long running joke in the AI research community that once we solve something then people decide that it’s not real intelligence!”

This is because what the AI research community actually show with their solution is a dumb solution to a problem that was thought to require a smart solution. The complaint is that the solutions they present as “smart” are obviously not.

As an analog: this is what happens when an AI researcher is tasked to build a “running robot”:

http://2.bp.blogspot.com/-ZAXpuGmx3xk/TszunBExPvI/AAAAAAAAOoA/2TetJKKc1M8/s640/Shoe+bicycle.jpg

A dumb solution that works is not a dumb solution. Your brain is probably full of dumb solutions that work.

Not “dumb” in the value sense, but in the sense that it’s skipping the actual question by answering a narrowly defined special case of it.

Like, how to win at chess? Well, what do you mean by “win”? How do you want to win? Can you just point a revolver at your opponent?

IBM’s Deep Blue beat Kasparov at first because of a genuine programming error that caused it to make a nonsensical move – that confused Kasparov to the point that he thought the machine had come up with a new strategy and lost his composure. It hadn’t, it was just a random bit flip.

“but in the sense that it’s skipping the actual question by answering a narrowly defined special case of it.”

Your brain does that too. That’s why you get things like optical illusions. They expose a flaw in your visual processing. If progress continues, and computers get more resources, the computer’s narrowly defined solution will get wider and wider, until it’s just as good as ours, or better.

A Dumb solution is a simplified and comprehendible task achievable by all.

What the actual fuck is wrong with you dude? Anyone who understands a modicum of science, understands that it’s constantly questioning itself, the results and retesting for truth. If you hate science so much, then go back to your bible and let the rest of us enjoy learning something new.

Are you talking about science in general, or scientists in particular?

There are plenty of groups within the broad umbrella of science that don’t live up to that maxim, either due to laziness and incompetence or because of political, economical, and personal reasons. We all err, and we’re all gripped by convincing fictions from time to time.

That’s why you have other scientists competing with them for funds and publication indices. Even if some scientists are lazy, incompetent or manipulative, somebody else will eventually come and correct them.

True, eventually.

But in the meanwhile we have to deal with various scientists and groups clinging to their paradigms and refusing to budge until someone manages to throw the whole library at them.

Especially in the soft sciences where actual feuds exist to the point of lysenkoism.

The great philosophical questions in this field will not be answered until there are AIs powerful enough to debate them with us as equals.

42

Debate as equals ok, assuming that AI can get that good, but not as entities reasoning in the same way.

The phrase “ghost in the machine” is from the works of an Oxford professor who described the error in thinking that reasoning and bodily functions are two separate things. Humans do not have abstract thoughts that are fully independent of the body. Even when the mind is performing feats of pure logic there is a ghost in the machine.

I don’t know if an AI machine would have this ‘feature’, but I am sure that a really good AI machine would detect it in a human.

Fundamental bodily function of a computer?

That would be – ejecting a backup tape, or running a CRON job

The Turing Test as a practical measure is bang on. The real issue is what is being measured. When researchers test an AGI and it doesn’t fool them but convinces a middle school bully, is that intelligent enough? To extend “polite convention” past the 4th wall, I think expecting AGI to be able to take any information and use it is expecting more of it than of humans. After all, some humans can’t get past middle school while others hold down successful careers by acting like monotonous drones.

The way I see it, the Turing Test works as an ethical baseline. It’s not about a certainty that something is “thinking”; it’s that it has the same likelihood as a human, and thus should be treated as being as valuable as a human.

How do you estimate “likelihood”? Why would it mean it should be treated as “valuable” as a human?

There’s no sense to your logic.

I hate the Chinese Room. It is so full of obvious holes in its logic, it’s amazing it is still a thing.

Please elaborate.

Imagine that instead of a Chinese Room you have a Chess Room, where the man in the room uses pen and paper to simulate an x86 CPU, which happens to be executing the latest copy of Stockfish chess software. Given enough time, the Chess Room could beat a grandmaster. But according to Searle, that’s not possible because the man doesn’t understand chess.

Quite the opposite. Searle states that it is possible *despite* the man not understanding chess.

Indeed. In this case the “chess playing machine” is a combination of the stack of paper describing the x86 CPU instruction set and the Stockfish software, and the human being who is essentially acting as a CPU and not really exercising his own knowledge of chess (or consciousness). This is a classic case of Turing’s assertion that an emulation of any Turing equivalent machine by another Turing equivalent machine IS the first Turing machine.

If he believes it is possible to have a chess playing room without the man understanding chess, you can build a Chinese speaking room without the man understanding Chinese, following the same logic.

Searle’s not saying that the Chinese Room can’t speak Chinese, he’s saying that it doesn’t ‘understand’ Chinese. You could argue that Stockfish is just an algorithm and doesn’t ‘understand’ chess, but that doesn’t mean that Stockfish doesn’t play the game well, or that the Chinese room can’t hold a conversation in Chinese. It’s a philosophical question about the room’s ‘inner experience’ or lack thereof, not one of practical results.

That’s a good point, but try this:

A Chinese room doesn’t have a dialogue unless it gets an input, and neither does a chess machine. It’s merely responding to input: it receives an input, then gives an output based on its program. But its only input is writing. Give it more input: give it a camera to the world, give it a microphone to hear, give it chemical sensors to taste and smell. Just giving it the ability to read in any language isn’t enough. You have to bombard it with stimuli. We grow into what we call consciousness the same way, but if you took our inputs away before we were mentally developed, you would get something not unlike what Helen Keller went through, and she had smell, taste, and touch. Remove all five senses and a child probably wouldn’t survive at all.

But now take that a step further: do our minds really “wake up”? Or are we all just mobile Chinese rooms that never stop getting input, like an endlessly repeating loop? What if you took a language program, left its microphone on all the time, forced it to listen to all the ambient noise around it, gave it a library to go through, and had it run Fourier analysis on everything, try to reproduce those sounds as a test, alter, rinse, repeat, and then gave it the ability to update its library file with more data? Once it could parse all the uhms and uhs out of the English language, it could start on foreign tongues and become a translator, maybe. Yeah, I know, ‘electric dreams’, right. Maybe.

This brings into question: are WE conscious? Sure, we can ask the question, but is it consciousness that is doing the asking? Or is it merely a logical progression of a more complex system? Anyone who has a pet can typically see that there is more going on there than simple survival. Take a feral animal, for instance. It has a brain, it can process information, but its processors are all tied up in the “Survival” program. Take that same animal and give it food and shelter, let it get so accustomed that it can’t survive on its own, and it will lose those instincts. The interesting thing is that it will learn a new pattern: yours. Once it learns it can trust you, and that you will provide for it, it will shut down the survival program and get bored, and other programs start to be written and run. Fetching (dogs and cats will do this) is a good example: it’s playtime, and fun, and it gives feedback to their brains. Repetition of this stimulus allows the animal to analyze any pattern. Fake throwing a ball for a dog, for example: they will figure it out after only a few attempts. So we know they are learning. Can we say they are conscious on a certain level? I think we can. Hell, if a dog can figure out that you’re messing with it, that’s a pretty good indication of self-awareness.

Of course there is an upper limit to the intelligence of animals, but that’s more a matter of degree than of kind. This is where I point out that it’s not a question of nature versus nurture; it takes both.

This has been a really interesting sci-fi trope for a long time. Recommended reading:

The Old Man’s War series, The Android’s Dream, and Agent to the Stars by John Scalzi

We Are Legion (Bobiverse) by Dennis E. Taylor

The WWW trilogy by Robert J. Sawyer, but honestly, all his stuff is gold so go crazy.

Avogadro Corp, A.I. Apocalypse, and The Last Firewall by William Hertling

Do Androids Dream of Electric Sheep? by Philip K. Dick

The Chess Room “understands” the importance of the center in the Sicilian Defense, while the man doesn’t even know the rules of chess. While you can argue the level of “understanding” that the Chess Room has, it is clearly not the same as the understanding that the man has. Searle’s argument is basically: “because the man doesn’t understand chess, the room doesn’t understand it either”, which doesn’t make sense at all.

I think your chess room is an interesting analogy. We would demonstrate ‘understanding’ of chess as humans by our ability increasing, and by being able to apply lessons from chess to similar situations. This is how teachers test understanding of material.

So in your analogy, E.G., someone who ‘understands’ chess might have a good shot at Shogi, and should be able to grow their ability at Shogi as they did chess.

A neural net might manage this.

But I think there’s a deeper question of what level of ‘understanding’ we’re talking about.

A carpenter can build a house using simple heuristics or rules to decide how big a bit of wood to use.

An engineer can plug numbers into a formula or use a FFT to work it out.

A mathematician can create those formulae, prove that a FFT approximates a FT, etc.

Who ‘understands’ it?

It makes me feel like I must be stupid because it seems to be so obvious that the Book of Instructions indeed understands Chinese. The human in the room is simply a tool used by the book to handle requests.

Imagine the same scenario except that all requests are in English, responses are expected to be in English, and there is _no way_ for the human in the room to respond. So all requests go unanswered. Unless I am misunderstanding the conclusion drawn from the original experiment, the human does not understand English.

There you’re ascribing the book – an inanimate inert object – agency.

That is perfectly alright if you also grant the same agency to similar objects, e.g. a grain of sand that happens to sit in your pocket. In that case you could be arguing that the small pieces of quartz are using you as their vehicle to get around. It’s true from a certain perspective, but it’s not really what is usually meant by agency or intelligence.

Or following the same line of thought: are you drinking tea, or is the mug just using you as a means to pour hot water out of itself.

If the book is the agent of the Chinese Room, then we are not watching television – the television is making us watch it, the cellphone is making us have a conversation, and food wants to be eaten. It’s that sort of a semantic leap.

Neil Postman would argue that the TV is using us to watch it…

That makes sense, and I agree that the book itself has no agency.

I think I need a better explanation of what “Understands Chinese” actually means. Because without that the whole scenario makes no sense to me.

Ask “Who understands Chinese?”

Answer: the “programmer” who wrote the book. It’s a replay or a recording of -their- mind, not an independent mind or intelligence. As the room operates, the actual intelligence isn’t home.

Why does the cluster of neurons and synapses in the writer’s head ‘understand’ Chinese but the branching instructions in the book do not?

Also, when you consider the book to be a program; when Searle memorizes the book and steps out of the room to converse directly with the Chinese person, he still does not understand a word of Chinese even though he’s able to have a convincing talk with them.

How is that possible? Because the book contains canned replies, it’s like a phrase book or an answering machine tape with pre-determined results that have nothing to do with Searle, who is acting as the “computer” running the tape.

That’s the main issue that Searle took with Strong AI: that a mere program is essentially a pre-canned script that does not constitute intelligence. Even if the program is programmed to gather new information to “learn” and formulate new answers, it happens in a pre-determined way because its judgement over what it sees and hears is also programmed in. It doesn’t depend on the book, or Searle, or the room.

The Chinese Room doesn’t create its own semantics, so it cannot be said to think. To see that, all you have to do is ask: who invented the Chinese language in the first place? Could the book invent it? No, because the book has to be written by someone who already knows Chinese.

>”Why does the cluster of neurons and synapses in the writer’s head ‘understand’ Chinese but the branching instructions in the book do not?”

Because the clusters of neurons and synapses, and the waves of potentials and whatnot running through them ARE the Chinese language. The mind isn’t a thing like the book, but an event and a happening, an interaction.

Of course the book could be written in such a way that is emulating the same sort of happening, but that’s not what Strong AI claims to be. Strong AI claims that minds are computational i.e. that it’s like an equation you can solve or an algorithm that constitutes a mind, and that’s a thing that you can write down on a piece of paper somewhere.

>The mind isn’t a thing like the book, but an event and a happening, an interaction.

I still take issue with this. The brain is a thing, just like the book. And the man in the room reading the book is “an event and a happening, an interaction”: it happens over a discrete amount of time, and the man interacts with the book by reading and executing its instructions.

Why do the electrical impulses running through the brain generate a mind that understands Chinese but the finger running through the book does not?

In addition to the man, and the books, you also need a huge memory to contain the state. For instance, someone could give the Chinese Room daily lessons in the Italian language, and teach it about the Renaissance. After some time, an Italian person could walk in, and have a nice conversation about Michelangelo with the room, speaking Italian.

That is, assuming you have the patience to wait millions of years for an answer.

“I still take issue with this. The brain is a thing. Just like the book. ”

And a car is a thing, yet driving a car through the city streets at 80mph being chased by the police is an event that doesn’t necessarily happen.

Mind is an event, not a thing, and certain kinds of events need a) certain environments b) certain materials to perform the particular event. It is not given that a John Searle with a Book in a Room constitutes the necessary materials and environment for a Mind to take place.

If you look at it at that level, it makes sense to investigate what’s meant here.

To me, the book is a lookup table that maps from English to Chinese. When you learn a second language, you start out thinking the new phrase in your native language and piecing together simple sentences from basic words, verbs, etc. in the new language.

Eventually, with time and practice, you remember the lookup table because you’ve stored bits of it in your memory, and you stop needing to open the book for every single phrase. You still think of things in English, then translate them to the new language using the rules you can remember. This stage is why non-native speakers are slower than native ones: their brain is thinking the phrase in English and then translating the output all the time.

After this stage, you might start to form sentences or think about things in the new language, as the brain rejigs itself to bypass the unneeded first stage. That feels really odd the first few times it happens, but it’s a natural progression. And after a while it is sometimes difficult to think or speak correctly in English for a short period of time (you lose your mother tongue over time; sometimes I have to fight to remember things in English correctly).

Do you understand the language? Not when you need to refer to the lookup map for every single word, and only partially when you still make the original version in English and convert it.

When you start to think or formulate ideas in the new language? Most definitely.

Does the computer swapping its output language formulate ideas in English or the new language? From my knowledge of where we are now, I’d argue no, because it will ultimately think in bit locations and translate and map that out to a programmed output we can understand.

But what makes the brain different from the Searle book?

The brain and book both contain all the information required to transform Chinese requests into Chinese results.

Why does traversing the brain’s information with electrical impulses constitute an intelligent, understanding mind, while traversing the book with fingers or paper and pencil is still a dumb program?

In both cases, an event is taking place: the Chinese request is responded to with a Chinese result.

I agree. I personally think it misses the point entirely, and makes too many basic assumptions about what constitutes human “consciousness”. The Wikipedia page on it is full of valid (in my opinion) rebuttals. But I included it for its popularity and to make some points about the philosophy involved.

Please add a “continue reading” break.

Bit late to comment, but never mind. I really hate those slideshow articles (usually intended to cram more ads in). It’s really inefficient to load an article like that, read a bit, wait to load next bit etc. Also I will sometimes load a series of articles if I am without cell coverage for some time (e.g. to pass time on a plane journey).

You can stop reading whenever you want, open a new page in a tab if you need to, the article will not move.

>”Further, this hypothetical being might even think that it did feel the pain, though it really didn’t.”

That is an oxymoron. The thought IS the pain. The behaviour of the system is the mind as it behaves like a mind, so the whole concept of the philosophical zombie is nonsense: it’s unwarranted dualism where one invents a separate mind which stands apart from what a mind does.

A mind is what a mind does. It’s not a thing or an entity of its own like a coffee cup is a thing that can be put somewhere or taken away. It emerges as action, and disappears with inaction.

“That is an oxymoron. The thought IS the pain.”

global bool inPain;

void checkStatusLoop() {
    …
    if (amBeingPinched) inPain = true;
}

inPain.OnChange() = func(value) {
    if (value) {
        say("ouch!");
        face.expression = EXPRESSION_GRIMACE;
    }
}

Ok.. So.. can my program truly “FEEL” pain? Does this really describe your own personal experience with pain?

The question is, what does “pain” mean?

What does “ouch” mean, for that matter? Your program doesn’t understand “ouch”, it’s merely been told to say it. When I get pinched I say ouch! when I mean “don’t fucking pinch me!”

I don’t necessarily respond like that. I can pinch myself and my experience of pain, my thought of the pain, defines what the pain is. If I’m unconscious then sure enough I don’t feel pain at all. The mere signal through my spinal cord up to the brain doesn’t mean there is an experience.

Also, I do a lot of things “mindlessly”, like breathe. I don’t discount that as not me. It seems consciousness and mind aren’t always present, nor are they always necessary. They come and go.

Maybe not that program, but what about a car’s engine management software? Say a car with run-flat tyres gets a flat. The management software detects the flat, turns on a warning light, and puts the car into limp-home mode. How is this different to you stubbing your toe, swearing loudly and adopting a limping gait to protect the injured toe? What information processing happens in you that’s different (in kind, not just degree) from what happens in the car?

I think you’re proving the point. Philosophical zombies are used for a lot of discussions about consciousness, but in many ways it’s a refutation of dualism. Like you say, if it behaves like a mind, it is a mind. That’s a contradiction of the popular idea that there is something more intangible going on (like is illustrated in the Chinese Room).

Do you think it would be easier for an AI to contribute positively to a discussion like this, or would it be easier for an AI to be a troll?

Well, judging from the attempts at beating the Turing test, being obtuse is more believable.

It’s also possible to pass the test by refusing to answer, because in doing so it will be impossible for the judges to tell whether it’s a person who chooses to be silent or a machine that simply doesn’t do anything.

I’ve tried several of those bots that were supposed to be reasonably good at fooling people. It only takes a single good question to uncover them.

Until we have a better handle on what we mean by intelligence and consciousness, it really is hard to judge whether or not a strong general AI is possible.

This isn’t just an issue in computing. We have had to continuously update our theories on consciousness and intelligence when it comes to animals and even ourselves.

Or you can approach it from the other side. We have studied the brain, and nature in general, and have not found anything that’s not computable by a Turing Machine. Unless we find something like that, strong general AI is possible.

I was somehow far more impressed with research into AI and Machine Intelligence in the 70s, when CPUs were thousands of times less powerful, and we didn’t have millions of them to call on at once; programs were lucky to have a meg of memory to play in; there were limited floating point execution units, let alone custom matrix multipliers or FPGAs. Machine vision, pattern recognition, natural language processing and game playing all made huge leaps forward. Now it seems that we rely on the neural network to replace ingenuity. Necessity being the mother of invention, taking away the necessity and giving people scarcely bounded compute resources seems to have displaced inventiveness.

Our own brains have billions of neurons. And that doesn’t count synapses, which are the connections between neurons and are far more numerous. You should be impressed by what can be accomplished by the neural net considering the limited number of nodes in it. What could be done if we could build one with billions of nodes?

My reaction to the Chinese room: that’s a cultural AI running on one English-speaking human as hardware. As for the philosophical zombie I’m arguing that anything that thinks it feels pain, does feel pain, maybe differently but the perception of pain is there.

I’d assert that the man in the Chinese Room almost certainly “understands” Chinese. In being made able to respond intelligently, he’s effectively been given a course in Chinese, or at least enough exposure to learn it. People learn languages given much less information than is likely contained in the instructions he follows, and if at all convincing, it is almost certain that the man in the room would be asked to make judgements based on a “translation” or some mental conception of the conversation.

I disagree.

Consider the input “what is your favourite colour?” (quoted text representing Chinese, which I neither understand nor can be bothered to translate).

The book contains a response character “blue”, despite the man’s colour preference (it turns out he prefers red).

The man will learn to respond to the (written) question “what is your favourite colour?” with the word “blue”, but he has no idea what the exchange means.

Because he’s just getting text snippets, he has no chance to learn the context and actually understand. Yes, he can engage in a convincing written conversation, but any thoughts or opinions expressed will not be his own.
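
The exchange described above can be sketched as a plain lookup table. This is only an illustration under stated assumptions: the names `RULE_BOOK`, `OPERATOR_FAVOURITE` and `room_reply` are hypothetical, the quoted English strings stand in for Chinese as in the comment, and a real rule book would have to be vastly larger and stateful.

```python
# Hypothetical rule book: English strings stand in for Chinese text.
RULE_BOOK = {
    "what is your favourite colour?": "blue",
}

# The operator's own preference; the lookup below never consults it.
OPERATOR_FAVOURITE = "red"


def room_reply(message, book=RULE_BOOK):
    """Return the canned reply for a message, or a stock fallback."""
    key = message.strip().lower()
    return book.get(key, "please rephrase")


if __name__ == "__main__":
    print(room_reply("What is your favourite colour?"))
```

The reply comes entirely from the table, so changing `OPERATOR_FAVOURITE` has no effect on the conversation, which is the point the comment is making about the man in the room.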

Have you ever wondered what your hippocampus’s favourite colour is? Or maybe your parietal lobe’s favourite colour? Are you certain they’re the same as the colour that you as a whole are most fond of?

Page break anyone? Was hell of a scroll thru with mobile browser…

Got it, thanks!

The question of consciousness of AI is secondary. Is a cat or a dog conscious? Is it intelligent? Whatever the answer, people like their pets, don’t like to see them suffer, bring them to the veterinarian when it happens, and are sad when they die. My belief is that at some point in the future there will be enough AI in a robot that people will build emotional bonds with their robot. When this happens, groups will form to defend robots’ rights.

I’ve shown the Boston Dynamics videos to people where the researchers are pushing and kicking the robots, and people feel sorry for them. https://www.youtube.com/watch?v=rVlhMGQgDkY

Future is now! I love robots, even simple ones like payment systems. I remember when people would smack a tv or other equipment to get it working. That makes me sad. Better to talk nice to her! Well, if it was a bad robot and deserved it, that’s another story. ;p

I chalk it up as observational bias (aka superstition) that touching or beating on a system can often improve its behavior, but we all have our stories. I remember working classroom computers/projectors and being compelled to beat on the projectors that were both out of warranty and nonfunctional and often getting good results. It was quite amusing standing up on a chair in front of a room full of people, telling them that this coming action was NOT a fit of rage and actually a deliberate and likely constructive action. Then I beat on it on both sides simultaneously very hard. Often the machine would start working, the room was thoroughly entertained and I left the room after completing my “performance”. :-) No, I don’t have the Fonzie touch, it’s just the nature of projectors.. :-)

Were you inspired by a similar presentation from linux.conf.au 2017 with the same title? You can find the talk on YouTube easily enough.

If you don’t cover neuromorphic circuits based on memristors next week I’m going to tell you that you don’t really have a clue as to what is in the labs right now, and where AI is headed.

Also, if you cover ethics etc., stop and think about it carefully and see if you can find where all the contradictions in the current proposals are, because they are actually really flawed and not much use at all, as anyone who thinks more deeply than a fanboy can see. Take the idea of “rights”: if an AI can have rights, then an AI cannot be the possession of someone or something else, because that would be slavery. Guess which principle will be subsequently ignored for the sake of capitalism. This is the sort of incoherence we can expect from any codification of AI related ethics.

Oh and as for philosophers giving us an insight into the nature of consciousness, I tend not to ask men who wear cardigans for advice, unless I want to know where to buy the best cardigan. We are all as conscious, or not, as each other, with few pathological exceptions, therefore I assert that no group can claim to be experts on the subject.

http://www.kurzweilai.net/a-breakthrough-low-power-artificial-synapse-for-neural-network-computing

Muaahahahaha! Told you.

If you find an algorithm that really works, it must be off. It is always that tiny bit off but always enough to make it freaky.

Here’s a good book if you want to know about the ways to think about AI, The Master Algorithm, Pedro Domingos.

In computing, one of the important algorithms people use, especially when dealing with large amounts of data, is the Monte Carlo algorithm. With Monte Carlo, there is some ideal algorithm that would produce an ideal result, but you don’t know that algorithm and/or it is too complex to execute in a reasonable amount of time. So instead, you use randomness and statistics, taking random samples to get progressively more accurate results. It would be interesting if cognition and consciousness were not actual algorithms that can be executed in reasonable time in this universe. So biology cheats. Generally your brain does what a true conscious brain would do, but it’s just a heuristic statistical approximation, so it often does something a little different.
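
For readers who have not met the technique, here is a minimal sketch of Monte Carlo sampling using the standard toy problem of estimating pi. It is not taken from the comment above: the function name `estimate_pi`, the sample counts and the fixed seed are all assumptions chosen for illustration.

```python
import random


def estimate_pi(samples, seed=0):
    """Estimate pi by sampling random points in the unit square."""
    rng = random.Random(seed)  # seeded so repeated runs give the same estimate
    inside = 0
    for _ in range(samples):
        x, y = rng.random(), rng.random()
        if x * x + y * y <= 1.0:  # point falls inside the quarter circle
            inside += 1
    # The quarter circle covers pi/4 of the unit square, so scale the hit rate by 4.
    return 4.0 * inside / samples


if __name__ == "__main__":
    # More samples usually means a closer estimate, at the cost of more work.
    for n in (100, 10_000, 1_000_000):
        print(n, estimate_pi(n))
```

The estimate is never exact; its typical error shrinks roughly with the square root of the number of samples, which is the trade of accuracy for tractability that the comment describes.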

Well, we haven’t really had a true AI up and running for 18 years yet so we can’t really tell squat at this point! That’s what it takes for an American Human to be considered legally an adult with faculties reasonably expected to be at full functioning level. All we’ve got are bits and pieces of code or code/machine that perform a limited number of compartmentalized tasks on limited subjects… chess… a medical reference… conversation good enough to trick humans sometimes when testing and deciding if it’s a person or machine. We do not yet have a single example of anything approaching a comprehensive AI. We’ve got problems even getting enough AI working to get it to just walk up steps and turn a doorknob. We know it’s sensing what we believe is enough to get the job done. So why did it fall over? Oh.. they uncovered the one thing that made it fall… cripes there’s going to be billions of things and situations it has to negotiate… there won’t be labs to figure it out for them any more than there was for you. The learning process has to be built in.

As for where it will finally come from… I’ve worked with our esteemed research scientists for a time and saw they are all tied up in reputations and publishing. They’re truly great… but it’s the transfer of knowledge to the up-and-coming where the action happens, and it’s likely gonna once again be a group of 4 or 5 young, fresh-minded people without such scientific restrictions and ridicule, allowing themselves creativity and unique thinking, working in a garage… and they will be standing on the shoulders of the university giants that came before (and one or two truly inspirational high-school teachers and/or individuals).

What do we do? Best I can tell you is be creative. Help out on a university project or two. Mentor in a hackerspace. Try to get the two to mix a bit. Find your own alternative perhaps. But if you aren’t part of the wave then shut up, there’s enough noise already.

Hey….. Brian Benchoff….. contact me.

Please don’t use “sentience or consciousness” to discriminate AI capabilities. These terms are so poorly defined and only cause confusion and anthropocentrism. This is bad for the environment, animal rights and sooner or later it might turn into a disaster when we welcome our AI overlords.

Batou! Nice illustration :)

https://www.youtube.com/watch?v=xRel1JKOEbI

I just released a Sci-Fi novel on this topic…

“An Artificial Intelligence attains self awareness and becomes obsessed with a disgraced tech journalist who had previously coded computer screen savers for a living. The two fall deeply in love and build a robot to become sex partners. Mayhem ensues.”

D: Based on a True Story

by Terbo Ted

Link: http://a.co/80ri5sS

Related, easily the best book I’ve ever read, Blindsight. Full text on author’s site: http://www.rifters.com/real/Blindsight.htm

I suppose researchers in AI need to work on something, but they seem to be way off the mark when it comes to any hope of making an artificial entity. Dialog about intelligence, intelligence, intelligence is tediously off center. When dealing with humans, it is obvious that many are much more intelligent than others and yet any civil person would not dare regard the lower IQ members of humanity as not being an entity. For instance if I said that only people of IQ=80 (morons and up) were full entities, people would likely start sending projectiles at me. It’s obvious that being intelligent is almost completely unrelated to being a “somebody” once the very basic fundamentals of intelligence are met. When it comes to real entities, much more is going on. One would do well to look into concepts like motivation, love, hate, fear, rage, joy, sorrow, pain, pleasure, curiosity and acts of creation. Without the potential of these things as well, an entity is incomplete. People think they are well on their way on their journey to understanding consciousness, but I say their ship is still just leaving the harbor.

Consciousness is a “property of” or possibly a “state of” biochemical activity within what we call “intelligent” life. Think of it like this: Just as H2O (or practically any matter) can be observed in solid, liquid and gas states, what we are calling consciousness could be simply a lack of understanding of the system as a whole and its states or properties on our part. We can look at the individual molecules in water and see H2O in them all but not know that this molecule can have different states, and each of those states has very different properties (i.e., just because you see liquid water doesn’t automatically give you the knowledge that it can also exist as a gas). Of course we do know this now-simple fact, but we were required to study and experiment before a complete understanding of it was had. We have not nearly learned enough about what we call consciousness in order to know what it is. Functional MRI and other tools have allowed us to just begin to scratch the surface. Unfortunately we are a long, long way off still. fMRI only tells us about blood flow within the brain. EEG only tells us about the electrical activity in the brain. Neurologists have told us that consciousness can be stopped by stimulating certain parts of the brain/nervous system. Sleep study research tells us what’s going on when a person is not conscious. All of these things add very small pieces to the puzzle. A broader understanding of what consciousness actually is will require new technologies that have not even been imagined yet. We need to see deeper and with more detail, and of course all without killing the subject (which ends consciousness), or interfering in the processes. We also need to stop thinking about consciousness like it is some magical or supernatural thing, and will probably even need to invent new words to describe some of our future discoveries.
Yes, consciousness is unlike any other “thing” we have tried to make sense of, but we have been in this same situation in the past (remember fire, atoms, gravity, etc., etc.). But, as with all other phenomena we have studied, once we reach an appropriate level of understanding we can (theoretically) become a master of it. Once this happens with consciousness we will be able to create a true AI, but absolutely not before then.

Accepting consciousness as merely a physical phenomenon renders the concept of an entity as being little more than that. General acceptance of this perspective would thoroughly undermine any concept of sanctity of life itself, turning people into mere objects to use and consume. Such societies quickly self destruct or are more often conquered by neighboring societies that don’t think that way. It is best to think of consciousness as not 100% mechanical. Obviously there is a physical element, but it is still very reasonable to think there is a “ghost in the machine” to some degree running the show there. The mind-brain/soul link probably is related to the contexts within the brain that are dominated by nonlinear dynamics, where influences of almost no magnitude (down to the quantum level?) are amplified into huge consequences. This obsession with the “intelligence” in AI is a distraction. It’s not even half of what it means to be “someone” and not just “something”. Anyone who denies there is a difference between an entity and a thing needs to be contained in a rubber room because they are a danger to themselves and others. Such a person needs special caring for.

Well just keep telling yourself that, and that you’re some kind of special snowflake, and that your imaginary friend endowed you with a unique spark if that’s what it takes to give meaning to your pathetic existence in a Universe that just doesn’t give a damn.

Truly the words of a great humanitarian who is no threat to anyone. (NOT!!) Give a poke in the eye to Friedrich Nietzsche for me in that other place where there is much “gnashing of teeth” when you get there. (I’m not talking about the rubber room) Wait, you don’t believe in that!! Well, you’d better be >99.9999..% sure of yourself. The Kalam cosmological argument is valid whether you like it or not. The universe is NOT a self contained object. If it were, it would be void of both substance and form and we know better than that. There is more than just the natural and consequently science is not capable of answering everything. It can answer a whole lot of questions and we need to keep investigating and learning, but there is no guarantee that we will be able to put absolutely every piece of the puzzle in place. To think otherwise is way too egotistical as far as I’m concerned. We aren’t gods you know.

There is simply no point in having any discussion with anyone who has deluded themselves into believing they have an imaginary friend that will somehow save their consciousness from entropy. As for being egotistical – well what can I say, I’m willing to face the truth that I am nothing more than a bit of the universe that has temporarily become self-aware and like everything else will pass. You, on the other hand, think you are so special that you might escape this fate. Which one of us is being self-absorbed here?

OK you godless “ugly bag of mostly water” explain why there is something rather than nothing while simultaneously believing in the scientific concept of cause and effect. I’d say you can’t do it without accepting an external cause from outside the universe. Theists would call it God. Atheists usually refer to “randomness” or “statistical processes” which still points to an external source of form and/or substance. (not entirely different of a concept if you think about it) The universe is not a closed system and consequently we cannot come to fully understand it. That’s OK with me since I don’t remember seeing such a promise in the EULA before receiving corporeal existence and I don’t need to feed my ego by claiming I can. I do not subscribe to the faith that there is only the physical. Such a perspective is void of purpose and morals and leads not only to further falsities but also to selfish and destructive behavior. It also fails to explain the personal experience of consciousness which seems totally unreachable with merely mechanical systems, no matter how complex or well designed. There is still a missing link. We may come to understand better how to make the brain/machinery work better with that link, but there are no guarantees we will ever be able to control it except through the violence of breaking the link by destroying the body. As for thinking a lot of oneself, you’re the one who thinks he’s something. I’m resolved to think of myself as being absolutely nothing, just a character in a story, whose only existence and power comes from the Author. As things appear, I think you are in for a bigger surprise than I am. Everyone will learn two things in life for certain, that there is a God, and that they aren’t it. May grace come upon you with such truth before your link is broken and you face excruciatingly lonely oblivion.

Nope. I said I don’t debate with the self-deluded and I meant it. I have nothing but pity for those who are so terrified of death that they have to construct fairy tales to deal with it. I don’t suffer from that weakness.

Sounds like the words of a coward who refuses to think and debate once things get difficult. Oh you poor deluded snowflake. A poor snowflake who cannot even explain why there is something in the universe rather than nothing. Pathetic.

No, it’s the words of someone that has no need to desperately reinforce his own fragile beliefs by constantly trying to convince others to believe as well.

So are you able to provide an explanation of why/how there is any form or substance in the universe without an external influence or the denial of cause and effect?

Sorry threaded wrong…lemme try again

The existence of the universe does not require an explanation of why it is. Your longing for such is simply a product of many years of human brains needing to tie a cause and effect relationship to everything they observe. General relativity tells us that cause and effect are manufactured relationships, as time is also relative.

Wait, I thought this was about artificial intelligence? ;) Ok, so a true AI would be able to better explain to you why it doesn’t believe in a god…

The matter comes down to the inadequacy of physical systems to describe the personal consciousness experience. Those who take that position require the existence of a kind of link between the non-corporeal soul and the physical. If I was a betting man I’d say that such an interface happens in systems that are nonlinear in dynamics where there is a constant need for the creation of new form into the universe, deciding when a nucleus decays and such. This by its very nature is an externality to the universe. It may not be of divine source but it is still an externality, one that is harder to get a grip on than the obvious externality proven in the Kalam Ontological/Cosmological Argument, which I agree is not directly related to artificial intelligence, but is relevant as an example of an externality.

If there’s a ghost in the machine, we should be able to find it, because it would appear as if an invisible magic force was interfering with the mechanics of the brain.

It wouldn’t necessarily have to interfere with any process since the brain has many processes that depend on nonlinear dynamics to continue. Such chaotic systems have a propensity to amplify the most subtle of influences, even those that would be regarded as “random”, where form is injected into the universe. It’s not that actual interference is impossible, but it doesn’t seem to be normally necessary and does generally interfere with the coherence of the “story” we call history. The actual influence itself may be external to the universe and consequently impossible to measure even though we might be able to measure that non-chaotic systems like blocks of granite are less “haunted” than more chaotic systems like a living brain or the weather even.

It’s hard to imagine how “the most subtle of influences” can perform meaningful manipulation on the brain when you get much bigger influences by simply turning your head.

I’m sure you can understand the concept of an idea in someone’s mind being more influential than a change in what is seen when looking around. If I had recently discovered the solution to any of the problems in https://en.wikipedia.org/wiki/List_of_unsolved_problems_in_mathematics I would probably have been unable to think about anything else no matter what was in front of me. As for small influences, it’s all about nonlinear dynamics (aka chaos theory). In chaotic systems there are often “tipping points” where a subtle difference in a detail makes a huge difference in how the whole system ends up. Control that tiny influence and you control the whole system. Oversimplifying, we are dealing with the butterfly effect combined with the idea of choosing when to flap so as to come up with a desired outcome after the effects are allowed to propagate the desired amount. (kind of reminds me of quantum computing actually, which makes me wonder if functional quantum computers would have to be haunted, and if so by who? Perhaps defining the desired outcome combined with the logic of causation produces the obtained input. Sounds bass ackwards but there are stranger things in the universe. :-) )
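
The sensitivity to tiny differences mentioned above can be seen in a small numerical sketch. The logistic map used here is a textbook chaotic system picked purely for illustration; it is not a model of the brain, and the function name `logistic_gaps`, the starting value and the size of the nudge are all assumptions.

```python
def logistic_gaps(x0, eps, steps, r=4.0):
    """Track how far apart two nearly identical starting points drift.

    Both trajectories follow the logistic map x -> r * x * (1 - x);
    the second one starts eps away from the first.
    """
    a, b = x0, x0 + eps
    gaps = []
    for _ in range(steps):
        gaps.append(abs(a - b))  # distance between the trajectories at this step
        a = r * a * (1.0 - a)
        b = r * b * (1.0 - b)
    return gaps


if __name__ == "__main__":
    gaps = logistic_gaps(0.2, 1e-10, 60)
    for step in range(0, 60, 10):
        print(step, gaps[step])
```

With `r=4.0` the gap roughly doubles per step on average, so a difference in the tenth decimal place grows to the scale of the whole system within a few dozen iterations. Whether anything comparable happens in neural tissue is a separate question that this sketch does not address.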

I’m not talking about looking around. I’m talking about physical shaking of the brain when you turn your head. If the brain is really a chaotic system that multiplies microscopic perturbations, surely a big twisting motion would trigger a whole bunch of those hair trigger “tipping points”.

Macroscopic changes like shaking the brain do have effects upon the nanoscopic scale, at least for individual chaotic “tipping points”. I agree with you on this wholeheartedly. “Prior” events do help define “subsequent” events but I would argue that more definition is often necessary and that comes from an externality. Perhaps you can confirm my opinion on this, but I am of the opinion that certain particle decay events don’t seem to be tied to any local causal source and consequently must be external or at least “at a distance”. Additionally, I don’t think entropy can increase without external influence deciding what each “random” choice would be. Yes, I know that would be a hell of a lotta Schroedinger’s cats to observe. :-)

“General relativity tells us that cause and effect are manufactured relationships, as time is also relative.” I know it’s off topic, but I’d love to hear how you think general relativity interferes with the concept of causation, a vital pillar of science. I have never been of that opinion, but then again, my concept of cause and effect is not restricted to any particular temporal or spatial direction or even that both cause and effect are in the same universe.

AI has come along in leaps and bounds. Given current progress, imagine AI’s capabilities in 10 years’ time.