The lights dim and the music swells as an elite competitor in a silk robe passes through a cheering crowd to take the ring. It’s a blueprint familiar to boxing, only this pugilist won’t be throwing punches.

OpenAI created an AI bot that has beaten the best players in the world at this year’s International championship. The International is an esports competition held annually for Dota 2, one of the most competitive multiplayer online battle arena (MOBA) games.

Each match of the International consists of two 5-player teams competing against each other for 35-45 minutes. In layman’s terms, it is an online version of capture the flag. While the premise may sound simple, it is actually one of the most complicated and detailed competitive games out there. The top teams are required to practice together daily, but this level of play is nothing new to them. To reach a professional level, individual players would practice obscenely late, go to sleep, and then repeat the process. For years. So how long did the AI bot have to prepare for this competition compared to these seasoned pros? A couple of months.

So, What Were the Results?

Normally, a professional Dota 2 game is played on a stage with 5v5 teams. This was the bot’s first competition, and the AI only had a couple of months to learn how to play Dota 2 completely from the ground up. It seemed fairer to start off simple with 1v1 matches. Those first matches were against [Dendi], one of the top players in the world, who lost the first match to the bot within about ten minutes, resigned in the second match, and then declined to play the third.

The OpenAI team didn’t use imitation learning to train the bot. Instead, it was put up against an exact copy of itself starting with the very first match it played. This continued, nonstop, for months. The bot was constantly improving against itself, and in turn it would have to try that much harder to win. This vigorous training clearly paid off.
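To make the self-play idea concrete, here is a minimal sketch on a toy game rather than Dota 2. Everything in it is an assumption for illustration: the game (a Nim variant where taking the last stone wins), the shared value table `q`, and the `epsilon` and `alpha` settings. It is not OpenAI's training setup, only the general shape of an agent improving by playing a copy of itself.

```python
import random

PILE = 10          # stones at the start of every game (toy value)
MOVES = (1, 2, 3)  # a player may take 1, 2, or 3 stones per turn


def legal_moves(stones):
    return [m for m in MOVES if m <= stones]


def best_move(q, stones):
    # Greedy choice: the legal move with the highest learned value.
    return max(legal_moves(stones), key=lambda m: q.get((stones, m), 0.0))


def self_play_episode(q, rng, epsilon=0.2, alpha=0.1):
    """Play one game in which both sides share the same value table q."""
    stones, player = PILE, 0
    history = {0: [], 1: []}  # (stones, move) pairs made by each side
    while True:
        if rng.random() < epsilon:
            move = rng.choice(legal_moves(stones))  # explore
        else:
            move = best_move(q, stones)             # exploit
        history[player].append((stones, move))
        stones -= move
        if stones == 0:
            winner = player  # whoever takes the last stone wins
            break
        player = 1 - player
    # Nudge every visited (stones, move) pair toward the final result:
    # +1 for the winner's moves, -1 for the loser's.
    for side, steps in history.items():
        outcome = 1.0 if side == winner else -1.0
        for key in steps:
            old = q.get(key, 0.0)
            q[key] = old + alpha * (outcome - old)


def train(episodes=5000, seed=0):
    rng = random.Random(seed)
    q = {}
    for _ in range(episodes):
        self_play_episode(q, rng)
    return q


if __name__ == "__main__":
    table = train()
    for stones in range(1, PILE + 1):
        print(stones, "stones -> take", best_move(table, stones))
```

Because both sides read and update the same table, every improvement the agent makes immediately shows up in its opponent, which is the "try that much harder to win" dynamic described above.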

While the 1v1 results are stellar, the bot has not had enough time to learn how to work in a cohesive manner with four other copies of itself to make a true Dota 2 team. After the roaring success of the International, the next step for OpenAI is to form an ultimate five-bot team. We think it will be possible to beat the top players next year and we’re eager to see how long that takes.

What Does OpenAI Like to Do When It Is Not Busy Crushing Video Game Competition?

OpenAI has worked on a number of projects before the Dota 2 effort. They explored the effect of parameter noise on learning algorithms, which has proven to be advantageous across the board. Parameter noise is applied during the exploratory behavior used in reinforcement learning, and it increases the rate at which agents learn.

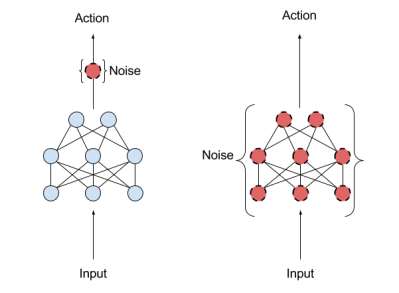

The left diagram represents action space noise, traditionally used to change the likelihood of each action step by step. The right diagram represents the newly implemented parameter space noise:

“Parameter space noise injects randomness directly into the parameters of the agent, altering the types of decisions it makes such that they always fully depend on what the agent currently senses.”

Adding this noise directly to the parameters has been shown to teach agents tasks far faster than before. It’s part of a wider effort focusing on new ways to optimize learning algorithms to make the training process not only faster, but also more effective.
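The difference between the two kinds of noise is easier to see in code. The following is a minimal sketch under stated assumptions: the agent is a toy linear policy, and the function names, `epsilon`, and `sigma` are all made up for illustration. OpenAI's work applies the idea to neural network policies; this only contrasts where the randomness enters.

```python
import random


def greedy_action(weights, observation):
    # Score each action as a dot product and pick the best one.
    scores = [sum(w * x for w, x in zip(row, observation)) for row in weights]
    return scores.index(max(scores))


def act_with_action_noise(weights, observation, rng, epsilon=0.3):
    # Action space noise: sometimes override the policy's decision with a
    # random action, independently at every step.
    if rng.random() < epsilon:
        return rng.randrange(len(weights))
    return greedy_action(weights, observation)


def perturb_parameters(weights, rng, sigma=0.5):
    # Parameter space noise: add Gaussian noise to a copy of the weights
    # (assumed here to happen once per episode), then act greedily with it.
    return [[w + rng.gauss(0.0, sigma) for w in row] for row in weights]


if __name__ == "__main__":
    rng = random.Random(0)
    weights = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]  # 3 actions, 2 features
    observation = [0.9, 0.4]

    noisy = perturb_parameters(weights, rng)
    print("parameter noise:",
          [greedy_action(noisy, observation) for _ in range(8)])
    print("action noise:   ",
          [act_with_action_noise(weights, observation, rng) for _ in range(8)])
```

With perturbed parameters the agent picks the same action every time it sees the same observation within an episode, which matches the quote's point that decisions "always fully depend on what the agent currently senses." With action noise, the same observation can produce different actions from one step to the next.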

They are not done with Dota 2 either. When they come back with their five-bot team next year, it will undoubtedly require a level of teamwork never before seen in artificial intelligence. Think of the possibilities. Will this take the shape of a collective hive mind? Will team dynamics among AI look anything like those of their human counterparts? This really is the stuff of science fiction being developed and tested right before our eyes.

Now, Why Might a Billion Dollar AI Startup Be Meddling in a Video Game Competition?

OpenAI is an open source company dedicated to creating safe artificial intelligence and working from a $1 billion endowment established in 2015. On their website, they state that the Dota 2 experiment was “a step towards building AI systems which accomplish well-defined goals in messy, complicated situations involving real humans.” The International was proof of concept that they could in fact implement AI that handled random situations successfully, even better than humans. It leaves us wondering whether the next field AI dominates will be something less trivial than a video game competition. It is notable that OpenAI’s chairman, Elon Musk, gave a warning statement directly after the victory:

“If you’re not concerned about AI safety, you should be. Vastly more risk than North Korea.”

This is not the first time that Musk has conveyed hesitations towards the upcoming dangers of our superior Dota 2 players. In fact, he has a history as a leading doomsayer:

“With artificial intelligence, we are summoning the demon. You know all those stories where there’s the guy with the pentagram and the holy water and he’s like, yeah, he’s sure he can control the demon? Doesn’t work out.”

It is clear why someone so worried about the future of AI has devoted his time and resources to a company dedicated to keeping this advancing technology safe for humanity. But it almost seems paradoxical. Teaching an AI to compete better than humans appears to bring that dreaded outcome one step closer. But at the same time, you can’t temper the advancement of technology by refusing to take part in it. The company’s approach is to make sure everyone can study and use the advancements they are making (the “Open” in OpenAI) and thereby prevent the imbalance of power that would arise if the best AIs of the future were privately controlled by a small number of companies, individuals, and state actors.

Earlier this year, Hackaday’s own Cameron Coward wrote up an in-depth article about the potential future of artificial intelligence. He delves into one of the most hotly debated topics in this subject: the ethics of strong AI. Will they be malevolent? What rights should they have? These questions will be answered in the coming years, whether we want them to be or not. It is our job to make sure they are not answered for us. OpenAI is debugging AI before it debugs us.

While this AI was a great step forward, you missed the fact that after the competition 50 rare sets were offered to the first 50 people to beat it. All of them were claimed within 24 hours using a variety of strategies.

1) abnormal builds

2) not fighting it and overwhelming it with creeps

3) in a few cases superior technical skill

These just show that no matter how much training data you give an AI, when it reaches a situation it hasn’t seen and been trained on before, it won’t necessarily make a correct decision.

Well, they just added at least 50 successful new datasets to the database.

You actually missed even more. The rules against pros also restricted certain items such as Soul Ring (to my knowledge). Ignoring that, the problem of expanding this to 5v5 is astronomical. There are well over 100 heroes in Dota 2. At 100 choose 10, there are a lot of matchups this AI would have to learn, and even that grossly simplifies the problem, since even with the same 10 heroes there are dozens of starting options (for lanes). The scope of the problem goes from 1 hero having to face 1 other hero to learning how every hero plays against every other hero and how those heroes work as a team.
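For a rough sense of scale, the "100 choose 10" figure can be computed directly. This is a back-of-the-envelope sketch with assumptions spelled out: a round pool of exactly 100 heroes, no hero picked twice in a match, and no accounting for lanes, items, or draft order.

```python
import math

HEROES = 100  # assumed round number; the comment says "well over 100"

# 1v1 with two different heroes: unordered pairs of heroes.
duels = math.comb(HEROES, 2)

# 5v5: choose the 10 heroes in play, then split them into two teams of 5.
# Dividing by 2 treats "team A vs team B" and "team B vs team A" as the same.
ten_hero_pools = math.comb(HEROES, 10)
team_matchups = ten_hero_pools * math.comb(10, 5) // 2

if __name__ == "__main__":
    print(f"1v1 pairings:        {duels:,}")
    print(f"10-hero pools:       {ten_hero_pools:,}")
    print(f"5v5 team matchups:   {team_matchups:,}")
    print(f"growth over 1v1:     {team_matchups / duels:.2e}x")
```

Even under these simplifying assumptions, the number of distinct team matchups is many orders of magnitude beyond the 1v1 case.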

It’s a lot of hype for them sure but I’ll eat my hat if in 5 years they can field a team at the international.

https://xkcd.com/1838/

+ “AI” build was hardcoded and picked by pro dota player. There was very little AI in that “AI”, it was a glorified macro bot.

I’d be impressed if they prove it’s not digitally coupled to the game engine. Give it a camera, robot fingers and a keyboard, then how does it do?

even just delaying some of its action times would make it a fairer contest

that was done

This. Especially the mouse cursor/pointer. One of the challenging things to do with a mouse in these kinds of games is ‘kiting’, which is the back and forth movement of your hero. If the AI can very precisely point where it needs to point a hundred times a second, then just that one aspect can win you a fair number of games.

I’ve watched a few of the games, and I can tell that it only reacts to your actions. It’s not yet smart enough to actually set you up, as in, force you into a position where you’d be vulnerable. And if you’re smart enough, you’ll even use that to your advantage to set up the AI.

i think the point was to prove it could learn to analyze dynamic and random situations successfully, not to claim to be better than humans at dota. Hooking a camera to it would be a 5+ year research project… which I really hope they do!

You don’t need a camera – that’s just silly. You’ve got a digital image of what’s sent, you can just feed that directly to the AI as well.

But there *is* a point here, which is that humans don’t have the same information that the bot has: they can’t see the whole screen at once. They’ve got eyes, and have to shift focus, and things in peripheral vision aren’t as clear as things directly viewed. *That* would be an impressive thing to see a bot have to deal with.

The robot hands crawl over to the opponents and choke them to death…

Then they return and win the game unopposed…

“OpenAI is debugging AI before it debugs us.” <– Mission Failed.

“OpenAI is debugging AI before it debugs us.” <– Fission Mailed.

Fixed

Also, one of the main reasons why players can only compete at the top level for so long is that the APM (actions per minute) is absurd and players with a high amount, in general, do better. Obviously an AI has no real limit to APM but humans most certainly do. In humans though, RSI and other types of injuries start to happen when sustained APM for long periods of time gets so high.

Curious what the API is. Are we assuming that the computer is literally screen scraping real-time video and only has mechanical inputs (as in, it has to physically use a mouse)?

The differences may seem slight but are still quite noteworthy if you are trying to legitimately perform an apples to apples comparison here which this is not quite. Still, it’s a rather noteworthy achievement. Even if it is not really exactly people vs AI.

Yah, it’s not apples to apples if the AI is able to make decisions before the pixel even changes on the human’s monitor… however many milliseconds that takes from game code through video hardware etc.

As pointed out, it’s also much more interesting to see the 5v5 type matchups than a single 1v1 match. It seems likely that a game such as this, which depends on small calculations, pixel-perfect actions, high actions per minute, and small resource-gathering advantages that add up over time, is likely to be played better by an AI. However, the physical interface problem being “skipped” by the AI does detract at least somewhat from the overall achievement. It’s not quite cheating, but it’s also a somewhat unfair advantage at the same time.

The other thing to note is that in this game, small advantages can quickly spiral out of control. So if a player makes a single mistake, it is very difficult if not impossible to recover from that if the opponent is playing perfectly. Once a gap opens up at all, the leading player gains so much of an advantage in resources that it is impossible to come back from, barring major misplays by that player.

It would be fairer to pit AI vs physical players that could also bring their own programs to bear to help them.

It’s also noteworthy that most of these games attempt to ban such activity when players use it to play against other players.

Unclear what types of programs or scripts would even be available for players though? I do not follow the competitive (or even casual) Dota 2 scene very closely. Does anybody know any more about that?

Well it seems that the AI has not learned everything through self-play, but has hardcoded tactics ( https://news.ycombinator.com/item?id=15001521 )

Most games, and specifically RTS, have bots, and thus an ‘AI’.

Are we to pretend it’s ‘new’ since AI is in?

we must attach the buzzword to everything!

I dunno, should we be taking our first steps in AI training with combat games? If there was any instance where “This is how SkyNet begins” is appropriate, it seems like this would be the one.

OpenAI’s work is amazing, very interesting progress, but they are not making the world safer from AI. Nothing can do that unless there is an AI technology developed that is orders of magnitude superior to all other methods (so it can dominate them) and that method has safety as an intrinsic aspect of its ability to function at all. If you actually read all of Asimov’s robot related works, you note that he does illustrate this problem: the positronic brains will irreversibly “lock up” if faced with a paradox or with the awareness that they have harmed or may harm a human. In reality we have nothing like this, not even in theory. We simply do not know how to even express the idea in more formal terms and therefore have no chance of implementing it at this stage.

The only thing more dangerous than North Korea is North Korea with a copy of all of OpenAI’s work.