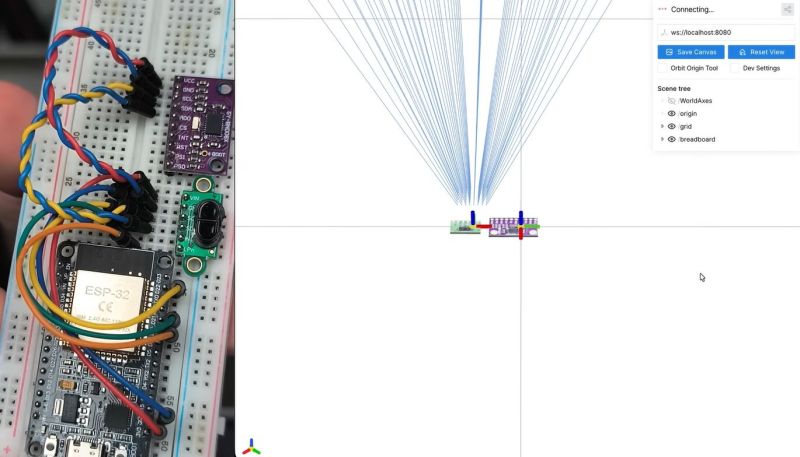

ST’s VL53L5CX is a very small 8×8 grid ranging sensor that can measure distances of up to 4 meters. [Henrique Ferrolho] demonstrated that this little sensor can also be used to perform a 3D scan of a room. The sensor data is paired with readings from an IMU to add orientation information to the scan. An ESP32 MCU combines the two data streams and sends them as JSON to a connected computer.
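To give a rough idea of what happens on the computer side, here is a minimal Python sketch that turns one JSON frame into 3D points. It is not [Henrique]'s code. The field names `quat` and `distances_mm`, the 45° per-axis field of view, the sensor looking along +Z, and the treatment of each reading as a radial distance along its zone's ray are all assumptions made for illustration.

```python
import json
import math

FOV_DEG = 45.0  # assumed field of view per axis, not taken from the project
GRID = 8        # 8x8 zones


def rotate(q, v):
    """Rotate vector v by the unit quaternion q = (w, x, y, z)."""
    w, x, y, z = q
    vx, vy, vz = v
    # t = 2 * cross(q_vec, v)
    tx = 2 * (y * vz - z * vy)
    ty = 2 * (z * vx - x * vz)
    tz = 2 * (x * vy - y * vx)
    # v' = v + w * t + cross(q_vec, t)
    return (
        vx + w * tx + (y * tz - z * ty),
        vy + w * ty + (z * tx - x * tz),
        vz + w * tz + (x * ty - y * tx),
    )


def frame_to_points(line):
    """Convert one JSON frame (hypothetical schema) into points in meters."""
    frame = json.loads(line)
    q = frame["quat"]              # hypothetical field: orientation (w, x, y, z)
    dists = frame["distances_mm"]  # hypothetical field: 64 row-major readings
    step = math.radians(FOV_DEG) / GRID
    points = []
    for row in range(GRID):
        for col in range(GRID):
            d = dists[row * GRID + col]
            if d <= 0:
                continue  # skip zones without a valid reading
            # Angular offset of this zone's center from the optical axis.
            az = (col - (GRID - 1) / 2) * step
            el = (row - (GRID - 1) / 2) * step
            direction = (math.tan(az), math.tan(el), 1.0)
            norm = math.sqrt(sum(c * c for c in direction))
            # Assumption: the reading is the distance along the zone's ray.
            ray = tuple(d / 1000.0 * c / norm for c in direction)
            points.append(rotate(q, ray))
    return points
```

Feeding every incoming line through `frame_to_points` and accumulating the results would give a point cloud for a sensor that rotates in place. It does nothing about translation, since the IMU orientation alone cannot supply that.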

Of course, that’s just the heavily abbreviated version. The video covers the many details that crop up when implementing the system, including noise filtering, orientation tracking using the IMU, and a variety of plane fitting algorithms to consider.
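As an illustration of what plane fitting can look like, below is a minimal RANSAC sketch in Python. RANSAC is one common approach to finding a wall or floor in noisy points; it is used here as a stand-in and is not necessarily one of the algorithms compared in the video. The function names, the 2 cm threshold, and the iteration count are all illustrative choices.

```python
import math
import random


def plane_from_points(p1, p2, p3):
    """Return (normal, d) with normal . p + d = 0, or None if collinear."""
    ux, uy, uz = (p2[i] - p1[i] for i in range(3))
    vx, vy, vz = (p3[i] - p1[i] for i in range(3))
    # The normal is the cross product of the two edge vectors.
    nx, ny, nz = uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    if length < 1e-12:
        return None
    n = (nx / length, ny / length, nz / length)
    d = -(n[0] * p1[0] + n[1] * p1[1] + n[2] * p1[2])
    return n, d


def ransac_plane(points, threshold=0.02, iterations=200, seed=0):
    """Find the plane supported by the most points within `threshold` meters."""
    rng = random.Random(seed)  # seeded so that runs are repeatable
    best_plane, best_inliers = None, []
    for _ in range(iterations):
        plane = plane_from_points(*rng.sample(points, 3))
        if plane is None:
            continue  # degenerate sample, try again
        n, d = plane
        inliers = [
            p for p in points
            if abs(n[0] * p[0] + n[1] * p[1] + n[2] * p[2] + d) <= threshold
        ]
        if len(inliers) > len(best_inliers):
            best_plane, best_inliers = plane, inliers
    return best_plane, best_inliers
```

Points that end up outside the inlier set can be discarded as noise or passed through the same function again to look for the next surface.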

Note that ST also produces more basic Time-of-Flight sensors, such as the VL53L0X, a simple distance meter limited to 2 meters. The VL53L5CX’s multizone array, 4-meter range, and sampling rate of up to 60 Hz make it significantly more useful for 3D scanning.

I wonder about the structured light scanner and IMU on mobile phones.

It’s a shame most phones are difficult to interface with now. They are packed with sensors, storage and processing power, and they’d be great platforms for small robotics projects.

With enough people using an app, it could be possible to make an almost real time 3D map of an entire city in a relatively short space of time.

When people start ignoring live facial recognition, as is mostly the case with regular CCTV and smart phone cameras, virtual reality content could be crowd sourced.

You don’t need structured light or even an IMU to build a model. CubiCasa is a fine example of a commercial app that’s used by average idiots (i.e. real estate agents) to make quite good models of (e.g.) house interiors from just the photos. (though it’s not clear how much smart-human work is done to massage the data on the backend for this particular app.)

CubiCasa is an example of photogrammetric software. AliceVision is just one of several open source implementations.

Okay, I wasn’t aware of TOF sensors being available in a grid version and at affordable prices, wow. The project shown here is pretty neat and inspiring, cool concept.

AMS TMF8829 spits out 48×32 depth points. Costs around 15,77€.

What software is he displaying this data with?

Hey! The viewer I used for this project is called Viser – https://viser.studio/main/

So opensource DIY object laserscanner on the horizon?

Look in the rearview mirror for David Laserscanner and all its glorious variants

If he could solve the issue with accurately tracking translation movement, this could be an amazing handheld tool for lo-fi 3d-object capture.

Or build a rig that holds the pivot point stable, and turns it around. Then move it, repeat. You should be able to detect common surfaces and use that to estimate the distance moved?

That’s essentially the way photogrammetry works: estimating the camera’s location and movement by correlating across images. This could do the same but with significantly richer data from the IMU, if less rich from the sensor.

More people should be playing around with these things. The sensors are cool enough that the rest is a software / math problem.

It would be interesting to see this combined with ultra wideband position tracking so the sensor knows where it is in the room.

Unfortunately TOF is really sensitive to sunlight :(

So are engineers, so it works out.