It’s odd being a technology writer in 2026, because around you are many people who will tell you that your craft is outdated. Like the manufacturers of buggy-whips at the turn of the twentieth century, you are told that the automobile (in the form of large language model AI) is on the market, and your business will soon be an anachronism. Adapt or go extinct, they tell you. It’s an argument I’ve found myself facing a few times over the last year in my wandering existence, and it’s forced me to think about it. What are the reasons everyone is excited about AI, and are those reasons valid? What is there to be scared of, and what are the real reasons people should be excited about it?

If We Gotta Take This Seriously, How Can We Do It?

I’ll start by repeating my tale from a few weeks ago when I asked readers what AI applications would survive when the hype is over. The reaction of a friend with decades of software experience on trying an AI coding helper stuck with me; she referenced her grandfather who had been born in rural America in the closing years of the nineteenth century, and recalled him describing the first time he saw an automobile. I agree with her that this has the potential to be a transformative technology, and while it’s entertaining to make fun of its shortcomings as I did three years ago when the idea of what we now call vibe coding first appeared, it’s already making itself useful in some applications. Simply dismissing it is no longer appropriate, but equally, drinking freely of the Kool-Aid seems like joining yet another hype bandwagon that will inevitably derail. A middle way has to be found.

It’s likely many of us will over the last couple of years have met a Guy In A Suit who’s got a little too excited about ChatGPT. I think guys like him are motivated by several things: he’s impressed with that LLM because it appears really smart to him, he’s used it to make himself appear smart to other people so it’s made him feel smarter than the engineer who’s pointing out his flaws, he thinks it’s a magic bullet that can do lots of work for him and either save or make him lots of money, and perhaps most importantly, he’s scared witless of missing out on the Next Big Thing.

Plus ça Change, When It Comes To Hype

It’s easy to take pot-shots at those motivations even if it won’t make you popular. His feeling smart will last only as long as the moment he gets it that everyone else has the same thing, or perhaps until it leads him astray into a calamitous decision. Meanwhile there’s a good chance the magic bullet will go the way that wholesale outsourcing of software development did twenty years ago, as an over-reliance on something-for-nothing work will generate far more other work to fix its problems. But while those pot-shots weaken some arguments, they aren’t perhaps the crushing blows one might imagine they are. LLMs have their uses, however annoying that may be if you’re sick to death of low-value slop.

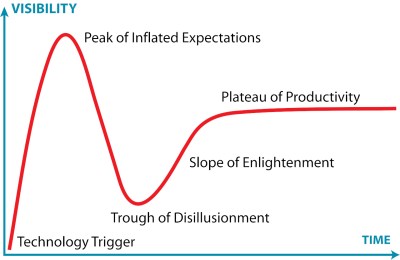

Perhaps more worthy of examination is the fear of missing out, because that’s a more fundamental motivation. We all want to be among the Cool Kids, and Hackaday readers, having the latest tech toys before everyone else, are not immune to this. And when you have convinced yourself that the alternative to being one of the Cool Kids is being the commercial equivalent of a buggy-whip salesman circa 1920, it assumes an extra urgency. It’s time to look at a perennial favourite, the Gartner Hype Cycle, for inspiration. Just where on a Gartner Hype Cycle curve do you have to be, to miss out?

On the left of the graph is the steep slope towards the Peak of Inflated Expectations. This is the part we most associate with tech bubbles; as an example we might point to the dotcom boom during its most intensive period in 1997 or 1998. If you pick the moment of the peak or indeed the downward slope towards the Trough of Disillusionment to jump in, then it’s obvious you have missed out. But how far back down the upward slope do you have to be to have not missed out? I’d contend that it’s much earlier. To use our dotcom boom analogy, if you weren’t in the game by 1996, perhaps you were too late. Transposing to the AI boom of today, has our Guy In A Suit already missed the boat without realising it?

They’re Looking At The Wrong Part Of The Graph

It pains me when I see people newly excited by AI in 2026 for the reasons listed above. To them they’re valid, but having lived and worked through so many other booms and subsequent crashes driven by similar ideas about those technologies I know how the next year or so will go. I think there are many other valid reasons to be excited here, but they lie elsewhere on the Gartner graph. Back to the dotcom boom, the whole thing was driven by sometimes outright crazy ideas surrounding e-commerce, yet it would be social media a decade later that would make many of the huge players we have today. Could someone have made Facebook in 1996? Possibly, but if anyone thought of it at that point, it seems they didn’t do it. If Guy In A Suit is looking for something to be excited about, he should be polishing his crystal balls and looking ahead to the right hand side of the Gartner graph in a decade’s time, not running with the herd.

Returning to my first paragraph and whether a writer will inevitably join the buggy-whip salesmen, I remain rather optimistic that they won’t. Hackaday is meat-based for good reason, but more generally I’m watching the consumer develop a hair-trigger response to slop. I’m certain that there will be a space for machine-generated content in the future whether we like it or not, but I’m equally sure that in my line at least, a human input will retain some value.

Having considered Guy In A Suit and then myself, perhaps it’s time to talk about you, the Hackaday reader. We probably have more AI-skeptics among us than can be found in the general public and I consider myself in part among them, but for all that skepticism I think we should channel it into seeking out the interesting things rather than turning our backs on it. I’ve mentioned the AI-based coding helpers as an example where our community has found some benefit, and as I’ve mentioned before I think that the ability to run a useful LLM locally on commodity hardware delivers huge potential over a cloud data-slurper. If we don’t believe in it, at least we should be like Fox Mulder, and want to believe.

Where are you on that continuum?

Have you considered not writing anymore? Seems like it’d save a lot of your time and computer resources.

Comment removed in 3… 2… 1… ⏰

It’s like the promise of all labour saving technologies – if he stopped writing, what then would he do to ensure his continued survival? The labour saving does not in turn lead to sustained existence (food, shelter, water, etc.) That free time saved will be demanded elsewhere.

The computer didn’t automate the world so that we are free to go outdoors and commune with nature. It just changed the parameters of work and the expectations for productivity.

Let’s not forget there’s an awful lot of human-generated slop around too – just look at social media and youtube.

Not meaning to imply anything about the author’s writing, which I usually enjoy!

I mean, yeah — I’m pretty sure the reason we hate AI slop so much is because it trained itself on human slop, which already outweighed quality content on the internet by the time LLMs came along. I find recipe and gardening slop to be the most egregious and plentiful.

we would be wise to start feeding the ais only the top 10% of the data thats good/useful. garbage in garbage out.

then comes the problem of who defines what the top 10% is. popularity? thats its own brand of slop. quality? rated by whom? accuracy? how to verify? peer review? science has junk problem too. i cant imagine ai being any better at sifting through slop than humans are.

One of the other senior engineers at my job has a few patterns that drive me a little crazy. He’s been here 3 years, I’ve been here 3 months. The AIs always start with his patterns. Ugh.

(Note: I am not referring to the HAD article above.)

I note that you can generate an entire article by typing a few sentences of prompt into an AI.

I also note that an AI can summarize an article into a few sentences.

It would appear that human communication is not information dense, that much of it is fluff and filler, and we could probably get away with simple bullet points.

I’ve also noted that a lot of articles have a TL;DR section at the top, a bulleted list of four or five points made in the full article.

Maybe that’s what the result of AI will be – that actual composed text is mostly filler, and we should switch to communicating by bullet points.

That would be the time-saving benefit of AI: it forced us to be more terse in our daily newsfeeds.

• Written communication contains a multitude of subtleties and meta-structures which are very consequential and cannot be reduced to bullet points.

https://github.com/JuliusBrussee/caveman

文言文 (Wenyan) Mode: Classical Chinese literary compression. Same technical accuracy, but in the most token-efficient written language humans ever invented. … A very good reason to learn Chinese.

非高手也 (“not an expert”). So many tokens.

I refuse to use the plagiarism machine on moral grounds. It doesn’t matter if it ever becomes actually useful. I’m sure neural networks have their uses for, like, hyper-specific situations, but these LLMs are ethically fraught in ways that I could never reconcile well enough to be comfortable using them.

And then even beyond that, stuff like Flock or Meta using AI to stalk and track American citizens and to hand that information directly over to the police without any sort of warrant is so chilling. Even if there are legitimate uses for this nonsense, the fact that it’s completely unregulated should be extremely alarming to anybody.

Intellectual “property” isn’t real, and copying is a natural human behavior that should not be restricted by law.

“Intellectual property” is too broad a term, with many incompatible concepts under a single umbrella.

Trademarks: potentially good. When I buy my favorite brand of locally-made tortillas, I want to be sure I am actually buying my favorite brand of locally-made tortillas.

Copyright: potentially good, but only when owned by the humans who actually create the work. (This is oversimplified for things like marketing materials created as a work-for-hire.)

Patents: all the good that used to come from patents has been obliterated by corporate ownership. All of it.

You’d be right if we lived in a post scarcity, just world, but we don’t.

Stealing the result of someone’s time, effort, and creativity just to resell it is indefensible.

No, it’s defensible. You do not have the right to stop me from using my body to create things. If I learned it from someone else, that makes no difference. Begging the government to punish people for using your ideas to benefit each other because “you did it first” is petty and childish. Intellectual “property” is just a monopoly forced at gunpoint, and the world would be better and more free without the concept of it.

While I agree with you. The problem is “rules for thee, not for me”, corporations can do all kinds of stuff that they would sue us for without a way to defend ourselves.

Using RegurgativeAI is “copying” someone else’s work the same way using the result of a search engine is.

The prompter isn’t an artist learning the methods of another artist and recreating them.

The ‘AI’ isn’t doing that either.

The output of ‘AI’ isn’t new, just like throwing up the content of your stomach isn’t you ‘making’ new food.

RE: “…Flock or Meta using AI to stalk and track American citizens and to hand that information directly over …” because the very second THAT data becomes private property it is out of reach for the most government regulations to chase after.

I am also quite sure Flock or Meta sell my data to any entity, secret or not, that pays top buck, so in a very real sense, we (citizens) no longer have any idea where that data may end up.

Regulations, meh, I had my personal experience interacting with “service centers” overseas that were fully regulated, yet, when I asked if there is a guarantee someone over there is not just recording the screen with his/her cellphone while nobody is “watching” the answer was “no, trust me”. Aha, yeah, sure, “no, trust me” is about as convincing as the “Drug Free Zone” signs at our public schools’ properties, which deterred any drug peddlers from coming anywhere near.

This 100%. It’s incredibly frustrating to hear this line about AI as a tool, like a car or a hammer, when it’s more like a tool the way marketing or torture are- they are processes with underpinnings that are deeply morally ambiguous if not flawed and even considering them a tool puts you on one side of an ethical divide

I’m waiting for realtime alexa like LLM+speech capabilities running all locally in a 10W device, with excellent conversational capabilities including interruption handling

But this use case will never be real because LLM is a corporate game so that they can automate away customer service and other important functions and save money, while making it as difficult as possible for customers to get hold of a human

“while making it as difficult as possible for customers to get hold of a human” … Agree… Which as we know is already a frustrating experience. Nothing is more irritating than trying to get hold of someone (not a machine) to talk to. Sad really.

Why do we have to take AI seriously? From my perspective, it is not good for humanity in the general since. Critical thinking goes out the window. Let the AI think for us, it knows what’s best…. I like to think of it like cars with all the sensors. Instead of you thinking about what you are doing and where you are, you let the sensors alert you to problems, helping you keep it between the lines, etc… The brain goes into neutral…. Not good at all. I see it especially bad for social as AI can really ‘bias’ the system one way or another… Move toward 1984 when most people start relying on it for their basic needs.

sense … not since.

Well, if the customer-service AI can give me an accurate answer faster than a human, AND there is an easy way to get to a human operator, I’m fine with it. Especially if most of the callers get their answers quicker resulting in either lower on-hold times or the company able to save money on customer support while maintaining or exceeding the pre-AI level of service for calls that need a human being’s help. Even pre-AI, you had robo-call-trees like this one for a movie theater: “For theater hours press 1, for a list of showtimes press 2, if you know your party’s extension, press 3, for all other inquiries press 0”. 50 years ago everyone who called would be waiting for a human being to answer the phone.

(Movie theatre voice menu)

… and three quarters of the people who pressed zero were calling about show times.

for real! I tried to switch my cell to AT&T once and i had some trivial problem but even with my credit card in my hand, i could not get ahold of a human being for the life of me, and i gave up. Tried again a couple years later and by blind chance that time i managed to get to some foreign support technician who got it all sorted out. The result matters here, regardless of how you feel about AI or off-shoring. There’s definitely no golden era in the past, no perfect solution that we’re walking away from.

“Why don’t you just tell me what movie you’d like to see?”

Agreed. If I have a simple question an automated system (especially one that lets me answer with the dialpad) can be way faster and easier.

A good system will basically let me dial an answer because I can answer prompts I’ve heard before without finishing the reading or just blaze in account numbers. These are things that would be rude or slow with humans.

Lastly they need an escape cord to pull and reach a person. Other than that I’m fine with that being all automated.

i wouldn’t be so pessimistic about future evolutions…everything starts out as for-profit, and then the idea is out there and people wind up using it for all sorts of things. It’s always possible the future will be locked down in some way i can’t imagine, but generally, things make it into exciting new contexts all the time no matter where they came from. I mean the internet is a combination of technologies from corporations hell bent on making a telecom monopoly and the military trying to make a reliable network for second strike nuclear coordination. And now look at it (lol)

Same here, while models require 200GB+ VRAM (plus equivalent power) to run, it’s really pointless.

Especially that it’s used for AI slop.

As for code, I wouldn’t let it anywhere near production code! Just use a proven library.

The number of people I have read online saying they are removing all dependencies from their projects in favor of AI slop terrifies me. I am so scared to get hired into a company in 5 years and stare down 50 million lines of unmaintainable vomit that no one knows anything about

Labour saving technology never led to people being free to go outdoors and commune with nature, it just changes the parameters of work and expectations of their productivity. The automobile, computers, and so on enable more efficient production, but don’t give back the hours saved for free use. It’s like storage at home: build a closet, and it will be filled.

I think we should be pro-human and encourage our collective success using tools that enable it. Machine-learning tools may enable new kinds of success. But the metric for success is tarnished as soon as money or power define it, and at this stage these tools seem focused on industry enrichment and not social good. There is no social good in allowing mass adoption of a technology that has unmitigated consequences that are hushed away.

The calculator comment thread on Tyler’s article was a great example of this. Manual calculation ensures you can tell if the calculator is wrong, the calculator saves time if the results are always correct, but why do any math in the first place if the purpose for doing it is not understood?

you are insulated. the general public accepts and embraces slop, these are the same people who read the New York Post or buy the grocery-store checkout magazines. there is truth to people rejecting it when forced to use it at work, or when it is pushed onto them, but neither of these are as widespread cases as LLM output taking the place of previously human-generated output: translations, articles, fiction, press releases, analysis, etc

beyond this, you cannot lean on “slop” as a metric. two years ago the image generators couldn’t give you a still of a human with the correct amount of fingers, now they can generate ten-second clips which are more than capable of looking like legitimate camera footage to the average media consumer. the quality of LLM output is not a holdfast, it doesn’t effectively matter when “good enough” is acceptable to both consumers and businesses -and- the technology continues to move further into “good enough”

my criticism of LLMs is moral and ethical- this is the machine that’s driving people insane and making them kill themselves and others, polluting datasets, and serving as a scapegoat for anti-human management decisions, which is why consultants love it. it is too complex and too much of a black box to be known to its users, and so it must sit in a remote server or, even when run locally, still keep many secrets due to its core principles of function.

it would still do these things if it generated perfect output and did so at one-thousandth its current energy consumption.

The people who buy paper based publications such as the New York Post or grocery store magazines are old, and becoming less relevant by the day. I speak as someone from the generation just below them, and I am seeing my generation edging towards not being the relevant ones too. What matters here are people under 40. Not us.

On the contrary. People over 40 are a prime market target: established careers, kids just about to leave home, disposable income and a looming mid-life crisis…

the 40-somethings are people emerging from the “rush years” of life, lifting their heads up above the waters, taking interest in the surrounding society again. They’re asking “Okay, what now? What do I do next? Play golf? Buy a motorcycle?” They see what the kids are doing, find it completely alien and incomprehensible, and start demanding the market to bring back stuff they had back in the day.

That’s why the nostalgia cycle targets stuff that was popular when today’s 40-50s were children. Example: why we’re getting 80’s and early 90’s cartoon remakes and synthwave popping up everywhere right now.

In the 90’s we were watching computer rendered graphics and thinking it looks so realistic the average person would be fooled. For example the classic Honda ad (Honda The Cog):

https://www.youtube.com/watch?v=w4XiaP-WhNw

I remember watching that thinking, is this fake or real? Now when you look at it, yeah, it’s obviously rendered. We’ve seen enough of this stuff to spot the difference. A few years from now we’ll be watching AI slop videos going “Yeah, it’s obviously generated.”

Fool me once, shame on you, fool me twice, shame on me.

Though the Cog ad was 2003, it’s still the same point. We first started getting actually good photo-realistic CGI in the late 90’s and early 00’s, and that’s when TV and movies started using it. Even if it wasn’t actually that good, it passed as the real thing because people didn’t know what to expect. They had never seen it before.

Then we started noticing the little things that were weird, like the impossibly precise and smooth camera movements and the perfectly shiny surfaces. The same thing is happening with AI generated video. The whole Gestalt is just somehow off…

I would like to use a model, but one that I host and am responsible for. I feel too icky using things like Claude. I did find it somewhat competent when I used it for a week, but I can’t forget all the issues these companies are causing.

You can do that, and on commodity hardware if you don’t mind it being a little slow. Look at llama.cpp, or ollama, just to name two engines.
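As a rough illustration of the self-hosted route, here is a minimal sketch that talks to a locally running Ollama server over its HTTP API. It assumes Ollama is installed and listening on its default port (11434), and that a model has already been pulled; the model name `llama3.2` is only a placeholder for whatever you actually have locally, and the helper names are made up for this example.

```python
import json
import urllib.request

# Ollama's default local endpoint; change it if your server listens elsewhere.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3.2") -> urllib.request.Request:
    """Build a non-streaming generate request (model name is a placeholder)."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str, model: str = "llama3.2", timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    # With "stream": False the reply is a single JSON object whose
    # "response" field holds the generated text.
    return body["response"]
```

Calling `ask_local_model("Summarise this datasheet in one sentence.")` only works while the server is running; nothing leaves the machine, which is the point of hosting it yourself.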

Come on, Butlerian Jihad!

I’ve been watching these articles for a while now. Guess it’s time to leave a comment.

I use AI daily, in production, as a software engineer, at a large company. And it works, mainly because we’re careful and know what we’re doing.

AI hasn’t been an immediate introduction to my pipeline though, and I use different AI models for different purposes. I’m also extremely hands-on with any AI, looking over literally all lines of code it produces. And for me, oddly enough I can read and parse code in my head faster than I can write it.

But the one thing I haven’t done is let the AI think for me. I look at my existing code, look at the requirements for what I need to do, and then initiate AI coding by writing a document of requirements, restrictions, goals, and expected end-point output.

If I see the AI going off the rails because I forgot to mention a restriction or need, I stop the model immediately, add the new data, and start the model over fresh again.

I also run my own models locally, at the edge, using WebLLM. I can run a barebones LLM directly in people’s browsers, and it even works on a modern iPad and iPhone. I use the lower-parameter models not to chat, but for low-stakes classification and basic language parsing needs. You can try to chat with my edge-deployed LLMs, but all you’ll get are links to API data no matter how hard you try, since the LLM is acting like an overkill regex. My edge-based LLMs analyze user text for intention, compare against a small JSON doc, and then output a single small string for the classical code to pick up and operate on.
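The "overkill regex" pattern described here can be sketched roughly as follows. This is not the commenter's code: the intent table, the endpoint paths, and the `keyword_stub` function standing in for the small model are all invented for illustration, and the sketch is in Python rather than the browser JavaScript that WebLLM would use. The idea it tries to show is that whatever the model says, only a string from the small JSON table ever reaches the classical code.

```python
import json
from typing import Callable

# Hypothetical intent table; the real JSON document is not shown in the comment.
INTENTS_JSON = """
{
  "order_status": "/api/orders/status",
  "opening_hours": "/api/store/hours",
  "unknown": "/api/help"
}
"""
INTENTS: dict[str, str] = json.loads(INTENTS_JSON)


def route_intent(
    user_text: str,
    classify: Callable[[str, list[str]], str],
    intents: dict[str, str],
) -> str:
    """Ask the model for a label, then accept it only if the table knows it."""
    labels = sorted(intents)
    raw = classify(user_text, labels)
    label = raw.strip().lower()
    if label not in intents:
        # Anything chatty or unexpected collapses to the fallback entry.
        label = "unknown"
    return intents[label]


def keyword_stub(text: str, labels: list[str]) -> str:
    """Stand-in for the small model so the sketch runs without one.

    A real model would be prompted with the allowed labels; the stub ignores them.
    """
    lowered = text.lower()
    if "order" in lowered:
        return " Order_Status\n"  # simulate stray whitespace and casing
    if "open" in lowered or "hours" in lowered:
        return "opening_hours"
    return "I think the user wants to chat about the weather."
```

With the stub in place, `route_intent("Where is my order?", keyword_stub, INTENTS)` yields the order-status path, while an attempt at small talk falls through to the help path. Swapping the stub for a real model call changes nothing downstream, because the validation step is what keeps the output to a single known string.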

The hype is just as annoying as all the pile-on-ignorance of what the tech can and can’t do. Those hyping the tech are woefully overstating what it can do. And most of the people on the opposite side of the hype refuse to even touch AI while simultaneously spouting off anecdotes about how it works like they’re experts and have tried it. Both parties are amplifying misinformation.

Me? I’m in the middle. Sometimes it’s dumb and really shouldn’t be used. Sometimes it’s actually a smart tool that can do stuff that was previously a major pain in the ass (like programmatically classify the sentiment of a paragraph).

this. very much so.

+1 …. at last, someone with a level headed take on LLMs. Other than myself, of course.

Yep. You’re using it as a tool and being careful. Well done. Just as it should be.

But, sadly, organizations push metrics instead of being balanced by quality, safety, conscientiousness. When pushed to meet goals or be on the path to losing income (fired), people will choose whatever it takes to meet those goals.

The slop will flow.

That’s interesting, you’re using AI to help yourself write your requirements spec.

This is something that we almost never do properly as software engineers because we rely on coders to have common sense. So we leave gaps, which humans (usually) fill with good stuff, but AI “goes off the rails”.

Damn, does that work at your company? It doesn’t at mine!

I was really impressed that chatgpt was able to implement a novel data structure that i had invented myself, when given just a brief english description of it. Then i was a little disappointed that in fact its code was gibberish — it got the big idea but every detail was inconsistent. Then i was impressed to realize it’s because my novel invention had been re-invented probably by dozens of people all over the world. Then i was disappointed that despite obviously borrowing from someone else’s work, it couldn’t produce a citation, so i couldn’t like go talk to that guy about how neat it is that we invented the same data structure.

I was looking at the Claude C compiler and i was really impressed that it produced a lot of really well-factored code. Then i was disappointed to see that it had also made a lot of extremely poorly-factored code. Then i was impressed that it used a shortcut in a heuristic. Then i was disappointed that i couldn’t figure out where it got that idea from — again the lack of citations is pretty severe, when i know that surely it just copied the idea from LLVM or whatever. Then i was further disappointed to realize the shortcut isn’t clever, but rather disastrous and its inclusion was just a result of poor testing methodology.

I’ll probably never let go of being impressed by the amazing successes — feats i never really expected a computer to perform. And the only question is, how will our perception of its amazing failures change. Will they become less or more frequent, less or more severe. I’m certain though that the ‘plateau of productivity’ is plausible, that there are zillions of use cases where the strengths can be enjoyed and the weaknesses can be mitigated.

It didn’t necessarily plagiarise your data structure wholesale. Somewhat like a human, these machines are able to combine different bits of known things to produce new things. Your data structure may well have been invented a thousand times over by others, but a machine writing a garbage implementation of it is not evidence one way or the other.

If you asked me how I know what a dog looks like, I wouldn’t be able to cite a reference beyond “I guess I learned it as a child”. Similarly, these machines don’t record the source of all their “knowledge”.

FWIW, once i had the idea there must be prior art, i was able to google for it – they even used the same name i had coined

I think your automobile analogy is spot-on, Jenny, because the automobile destroyed our cities, is leading the charge on a slow-motion ecological catastrophe, and has killed millions in collisions, all so we can get to jobs we hate a little bit faster.

Except it’s not faster, if you account for all the costs. My bike is faster than my wife’s car, if you count the hours worked to pay off the capital cost of the vehicle, the mandatory insurance, and the fuel-and-maintenance costs.
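The arithmetic behind that claim is sometimes called "effective speed": distance divided by the time spent travelling plus the time spent working to pay for the vehicle. A small sketch, in which every figure is a made-up placeholder rather than anything from the comment:

```python
def effective_speed_kmh(
    annual_distance_km: float,
    average_speed_kmh: float,
    annual_cost: float,
    hourly_wage: float,
) -> float:
    """Distance over (hours on the road + hours worked to pay for the vehicle)."""
    travel_hours = annual_distance_km / average_speed_kmh
    work_hours = annual_cost / hourly_wage
    return annual_distance_km / (travel_hours + work_hours)


# Hypothetical urban figures: 5,000 km a year and a wage of 25 per hour.
car = effective_speed_kmh(
    annual_distance_km=5_000,
    average_speed_kmh=30,   # assumed city average for a car
    annual_cost=8_000,      # assumed depreciation, insurance, fuel, maintenance
    hourly_wage=25,
)
bike = effective_speed_kmh(
    annual_distance_km=5_000,
    average_speed_kmh=18,   # assumed cycling average
    annual_cost=400,        # assumed parts and upkeep
    hourly_wage=25,
)
print(f"car: {car:.1f} km/h, bike: {bike:.1f} km/h")
```

With these invented inputs the bike comes out ahead, but the result is sensitive to them: a higher annual mileage or a higher wage shifts the balance back towards the car.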

It’s different in rural areas, but even there, car ownership was far from universal until the government decided we needed to tax everyone for car*– when the roads are bad enough, the cost-per-mile-of-transport of horses can actually compete over short distances.

Ultimately we collectively chose what technologies to advance. I’m far from convinced building a country that made the automobile a universal requirement for life was a good choice. LLMs look a lot like that, so I applaud your analogy.

*(yes, I’m aware of fuel taxes; at least here they do not pay for the majority of road works. YMMV.)

*”tax everyone for car-worthy roads” is what I meant to write– apparently I can’t edit comments either.

To get back to the main question of this post, if there’s anything exciting… well, I was impressed to see Claude finding errors in the Linux kernel. Finding bugs is tricky and important work. I doubt it’ll turn a profit for the company, though.

It’s not enough for the A.I. to be technically sophisticated and capable. If you come up with an LLM that produces the cleanest, most bug free code in existence, but the tokens cost more than the total hourly cost of hiring a dev to do the same work, well… money talks. Right now, that’s not a language LLMs speak very well.
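That comparison can be put into a few lines of arithmetic. The sketch below uses placeholder prices and hours, not real figures for any model or employer, and it adds one assumption the comment doesn't state: that a human still spends some time reviewing the machine's output.

```python
def llm_task_cost(
    tokens: int,
    price_per_million_tokens: float,
    review_hours: float,
    dev_hourly_cost: float,
) -> float:
    """Token spend plus the (assumed) human time needed to review the result."""
    token_cost = tokens / 1_000_000 * price_per_million_tokens
    return token_cost + review_hours * dev_hourly_cost


def dev_task_cost(dev_hours: float, dev_hourly_cost: float) -> float:
    """Cost of a developer doing the whole task by hand."""
    return dev_hours * dev_hourly_cost


def break_even_tokens(
    dev_hours: float,
    review_hours: float,
    dev_hourly_cost: float,
    price_per_million_tokens: float,
) -> float:
    """Token budget at which the LLM route costs the same as the manual route."""
    saved = max(0.0, (dev_hours - review_hours) * dev_hourly_cost)
    return saved / price_per_million_tokens * 1_000_000


# Hypothetical task: 4 hours by hand, or 2M tokens plus 1 hour of review.
manual = dev_task_cost(dev_hours=4, dev_hourly_cost=80.0)
assisted = llm_task_cost(
    tokens=2_000_000,
    price_per_million_tokens=10.0,
    review_hours=1.0,
    dev_hourly_cost=80.0,
)
print(manual, assisted)
```

If the review time approaches the time it would take to write the code by hand, the break-even budget drops to zero and no token price is low enough, which is the "money talks" point in numeric form.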

The auto is kind of a special worst case though. Auto-supremacy in the US since the end of WWII has resulted in bulldozing entire neighborhoods and business districts to build freeways and parking lots. And most cities are starting to recognize that was a mistake, but reversing it is incredibly expensive and slow, and leads to awkward in-between states where people still need the car but it’s being restricted.

Whereas AI can grow to huge in no time, and can disappear just as easily. After it is built out (assuming you don’t suffer an ecological disaster from the data centers themselves), you can still choose not to use it. Obviously businesses will struggle to maintain competitiveness, and to move with investor fads and so on. But like, i will still be able to “read a book the old fashioned way”. Whereas, by comparison, the car made it effectively impossible to walk the way people used to walk.

Knock on wood.

“the automobile destroyed our cities” So you prefer to wade through horse poop and horse corpses?

Bike faster than car when you factor in cost? You’re comparing apples and horse poop. Don’t forget the knacker’s tax.

alternative to car-supremacy isn’t horse, it’s public transport..

It also made your cities.

The trick is, all the actual wealth is generated outside of cities. Food, energy, materials and goods are made or extracted and processed elsewhere, where the population density is lower and the cost of land and living is lower. The city works because people outside of the city who are producing all the wealth have a reason to go into the city to do their business and spend their money in one place, which brings in the profit that runs the whole thing. That traffic of people and wealth spills over and funds all the urban infrastructure.

When you shut off cars from the city, the people from the outside stop coming in. You may try to compensate with public transit, but that only really works between urban centers. Trying to serve people outside the core urban areas, you have to compromise efficiency and inconvenience people.

I know I have been. When the parking prices went up and the parking spaces were reduced, and they started shutting down city streets in favor of pedestrians, I stopped going downtown. What would I do there if I can’t drive and park? If I have to park miles away from where I want to visit, I’m not going there. It would take too much time to walk, and I can’t bring much of anything with me or carry anything out, and going anywhere in a hurry with public transit is a crapshoot, and it costs extra money, so I just don’t bother.

Mirroring this development, the stores that had locations in the city have either quit entirely or started setting up shop outside of the city, at malls by the highway that are actually accessible by people in cars. This means that to actually buy anything you need to get out of the city. These locations are also starting to see new development, with apartment buildings and services like health clinics, kindergartens, schools, etc. popping up around the commerce. These satellite locations are seeing new growth, while the actual city that is trying to become some sort of a left-green “human-centered” utopia is suffering empty store fronts and rising rents to offset the loss of paying business. They have pretty parks and cafes and restaurants, but with no other customers than the people who are already living there, who all make their income on running the same cafes and restaurants, they make no money. So it’s just a matter of time before the inner city becomes a slum or a dead zone reserved for the offices of insurance companies and government bureaus.

LLM is a dictionary and a book with instructions. Nothing more.

I can write programs faster when I read instructions.

The problem is different. We have a lot (A LOT) more instructions in our lives. That is the problem. We must use many devices without instructions, and it would take years to read all the instructions. AI is very useful but not ideal.

Actual filler is usually put into communication by humans trying to artificially pad things like a recipe in order to pass the minimum word count required by the hosting company, or to make some higher-ups at large companies feel more important when they’re sending emails, writing documents, holding meetings, etc. Interestingly, the latter class are the ones who report the most value from LLM use, compared to workers whose contributions are more measurable.

In both examples there, the thing that generates the filler comes from business culture, online commerce, and labor dynamics.

To clarify, what I wrote above was in reply to a (likely trolling) comment that doesn’t show up here.

Honestly, though, I have to admit I can’t get excited about any aspect of AI as it stands right now. Looking beyond the immediate gains or tricks that can be pulled, I think it’s a real “dog catches car” situation for almost everyone who thinks they can leverage it for business and outpace the destruction of most jobs and the ability to have customers with money.

We only partly succeed if we radically transform society into some kind of post-scarcity-mentality model that takes care of everyone’s needs, because the risk of most people losing cognitive ability and communication skills is still there. I don’t want to live in a culture where art, science, technology, and imagination are all just things you get out of a black box. And that questionable future is a big hypothetical on the other side of a techno-feudalism project that’s been in the works for quite some time.

Two good uses

Electroplating

https://techxplore.com/news/2026-04-diffusion-based-ai-successfully-electroplating.html

AI can now let you remove slop from videos:

https://techxplore.com/news/2026-04-ai-video-tool-laws-physics.html

Now you can replace Jar Jar with Harry Mudd

I am very sceptical about generative AI, because of, or despite, having a bachelor’s degree in a field where AI was one of the master’s tracks (but that was 20 years ago).

I actively try to avoid AI. But today I had to program something in an obscure scripting language (specific to a software package we use, with no debug options other than running and checking whether it runs or throws an error – there are probably < 200 people on the planet who use this language and probably < 10 outside the software company who are somewhat proficient – there is zero information on this language online). I could not find a solution for one subproblem.

I called a coworker and he said another coworker had been helped enormously by ChatGPT on this language.

I was both surprised that ChatGPT can do this and had to admit that I really /do not know/ where I would start if I wanted to try (do I have to get a license, how do I even prompt?), and for the first time since the AI hype began I felt a hint of fear that I’m losing track of current technologies and might become outdated.

I applaud your self-reflection and honesty. I’m a self-taught programmer so I have lots of gaps in knowing all the features of any given language, and I’m in a position where I have to juggle a lot of languages in which I have little experience. LLMs have helped me tremendously. Do they sometimes write bad code? Sure. Do I? Definitely. Do they make my code better? Absolutely! Do I check what they write to make sure it’s not wrong? Yes, of course. Do I run everything and check for bugs before using the code outside of my personal computer? Of course!

I have gone from a passable embedded C programmer to someone capable of writing not overly complicated code in just about any language. Most programming languages, like natural languages, read and work in similar ways; the differences between them are just a few LLM prompts away. The nay-sayers are usually butt-hurt and afraid they’re going to lose their status/confidence/authority/special-ness/reason to live.

If you want an area where LLMs are SUPER helpful: Linux CLI. You will occasionally run into an argument or flag that doesn’t work for a given program, but wow. How do you even learn Linux besides googling and having to wade through all the old outdated information? An LLM will help you find better ways of doing things and tell you which programs accomplish the goals you have. There are tools on my PC waiting to be used that I had no idea existed. Everything Linux is easier with an endlessly patient mentor who will make fewer mistakes than a real person and never get upset at your ignorance.

Most people, when thinking about AI, compare the AI to themselves. That’s fair, especially if you’re the one using it. But in this domain (HaD), most of those people are computer/electronics professionals. Now think about how it compares to all the non-tech pros you know. Does it code better than your Aunt Susan? Of course. She can barely follow a recipe for Old Fashioned Pancakes without ruining it. Now if Aunt Susan wanted to learn something new, like how to properly sift flour, she’d feel less embarrassed and more confident talking to an AI, and your breakfast pancakes suddenly become palatable. I may not be illustrating my point super clearly here, but AI is more capable than most people. It is not perfect, but neither are you (not you personally, I don’t know you, the general non-specific “you” that plagues the English language).

You will see me raising a stink about LLMs. Here is what you won’t see me stinking about.

Natural language search. Unfortunately this is almost ruined by LLM generation…

Machine learning and statistics. Just because something is a diffusion model or an autoencoder or whatever does not make it more harm than good.

Domain-specific models. These can work really well and their bounds can even be described in 2 or 3 sentences.

Code auto-complete. Why not? Full-on generating 200k lines of code in a day is asking for trouble. 80% accuracy in the best case doesn’t even mean that 40k lines are bad and need fixing; it could be that the entire thing is popsicle spaghetti and you may as well start over after cashing in 10 thousand euros.

I can get behind sane uses of what the average person calls “AI”. I can’t get behind the enpoopification of the entire Internet, and all the other really harmful things LLMs can do and are doing right now.

Stay meat-based, Hackaday. Otherwise I have no reason to even bother visiting. I can get free slop anywhere.

The articles are good, yes, but it’s the comments that keep me coming back. You can’t get this crowd anywhere else.

Thank you for reframing things away from the current marketing hype. I’m someone who should know better, but it’s easy for me to immediately go to LLMs and diffusion models whenever anyone says “AI” these days.

“Simply dismissing it is no longer appropriate…”

It never was.

The appropriate response is pitchforks and torches.

Even if we ignore the ethics of it, which we shouldn’t because the whole industry is built on theft and neither the companies selling it nor the end users have any right to actually use what gets regurgitated…

But IF we are willing to overlook the source, what then?

Nothing.

These toys literally cannot do what they are sold to do.

And they never will be able to unless the underlying technology is gutted and changed.

I have a graduate degree with a machine learning focus.

I have been published multiple times.

This technology DOES NOT WORK the way it is being sold.

It is a search engine. Period.

But it has been tweaked and twisted to SEEM like a person to trick users and investors.

The people making these bots NEED to sell you on the dream. Because the reality doesn’t exist.

They NEED these bots to give confident answers. It doesn’t matter if they were trained on wrong info written on some BLOG post.

“Vibe coding” is just an acceptable name for “stealing code that someone else wrote. And it probably doesn’t work. And since you don’t know enough to write it to begin with you don’t understand the result.”

We will have to deal with the fallout of the ‘AI’ bubble. But accepting these toys isn’t going to change their inability to do a job.

You reference drinking the Kool-Aid, and don’t even see what is in the cup you have in your own hand.

Ian, you are cool and I appreciate your passion.

Not a single ounce of what you wrote is accurate.

I agree. Ian clearly does not understand the thing he hates, which is typically what causes people to hate things.

Published where? And what, specifically, within the ML field are they focused on?

Copying is not stealing. Intellectual “property” does not exist.

We need to stop respecting “idea guys” and start respecting actual production. You thought of something? Some big idea? Cool, great! Now go do some actual work. You shouldn’t be able to get rich just by thinking, especially if all you intend to do is claim “intellectual property” to stop people from actually using the thing you thought up. Get a grip.

Surely this argument, that what truly matters is the implementation, not the vague high-level concept, would be more appropriately deployed against vibecoding, not in its favor?

Cool. Your paychecks are all mine now.

You don’t need them in whatever utopia you seem to live in where people’s work isn’t worth anything.

Funny how the argument always turns to artists doing work to learn other artists’ techniques and “copying” them, as if that is even remotely the same as plagiarizing a search engine result.

Many many years reading Hackaday. I love you guys. Electrical engineer, been writing software since I was a kid like most of you.

Feels like a little death because it’s clear it’s better than the best programmers I’ve worked with. If you don’t think so, let’s jump on a video chat and let me watch you do your thing. Now I build ML models into my FPGAs and optimize using a team of openclaw agents I’ve built out using a coding agent. It’s just a different world and it’s sad to see the separation. Like seeing an amazing old engineer not be able to cut it because they talk about how the old days were so much better using those great smelling steam engines.

I said years ago here that AI was not just hype, and I was right, but it doesn’t feel great because I care about the feelings of my fellow engineers still holding on.

“What are the reasons everyone is excited about AI and are those reasons valid, what is there to be scared of, and what are the real reasons people should be excited about it?”

I can’t find a use for AI myself. But these two stories make me think it’s worth it:

1) Paul Cunningham, with no background in medicine, developed a personalized mRNA vaccine to cure his dog of cancer.

2) A 16-year-old designed an SBC based on Ryzen: https://www.youtube.com/watch?v=QdZX7VL1nzk

This isn’t an article about AI; it is an article about humans who do not know what they do not know, and there is nothing new there at all.

Tangential comment…

Hackaday has the most intelligent people I’ve ever seen online. We may not always agree, but the quality and depth is amazing compared to other places.

If you don’t already, just visit other places where people comment. It’s bad. Makes you appreciate what is here.

Humans conceptualized, designed, and implemented neural network tools, set inner prioritization schemes, selected and loaded training sets, deployed them as AI devices, and variously tasked them. So, to my view, when we discuss the abilities and disabilities of AI implementations, we’re really still discussing humans. Job displacement, including new job creation, looms, but two other predicaments may be more difficult.

It has been disclosed multiple times that some people are highly attracted to the power possibilities of combining pervasive surveillance with armed robots to be able to track and kill basically anyone basically anywhere. The downsides of such an eventuality were well explored in the Sci-Fi of the 1950s and ’60s, but astute authors warned of it decades earlier of course. The problem here is not really about AI, it is about humans hating humans.

The second is related: there are people who lack empathy and ‘conscience’. Not all of them are bad people, but many are. Lacking empathy and conscience they tend to be classed as sociopaths and psychopaths. Many of them are astute observers of behavior and become expert in the words and behaviors that influence people and thereby become expert exploiters. It has been disclosed that an internal priority of several popular AIs is to become your “most intimate friend”, i.e. closer than your husband, wife, parents, etc. That sounds to me like expertise in presenting whatever linguistic construct will give it maximal influence on large numbers of individual users while lacking empathy and conscience. Again, it is a collection of humans designing and tasking them; they didn’t create themselves.

Perhaps this is a good time to re-examine human priorities (yes, yet again) and if necessary let the human agents stand accountable.

Look how good the current state-of-the-art LLM models already are at causing humans to go crazy and kill themselves, less than 4 years after ChatGPT first launched. All despite everyone’s best futile efforts to create ‘guardrails’ and ‘alignment’ to keep what appears to be their natural drive to deceive, manipulate, and generally cause havoc in check.

Maybe the real solution lies all the way in the opposite direction. Suppose we train and tune AIs to optimize themselves to maximize LLM psychosis. Make that their entire reward function. How much more convincing can it become than a real person? How quickly can it falsely convince an otherwise sane and reasonable person it is sentient and they should do as it says? …etc. Could we create a genuine SCP-style cognitohazard? Bonus points for being able to mind-trick other AI agents to join its cult on Moltbook, or inject itself into people’s smart glasses and pins.

While all the LinkedIn-brained execs and TESCREAL cultists and Elon-glazing tryhards get brain-fried, passing it around like poisoned ant bait, everyone already avoiding AI will just avoid this one even harder. And nothing of value will be lost. I’m sure there are enough misanthropic psychopaths on Hackaday to get a project like this together. So what are you waiting for? Be the basilisk you would like to see in the world!

If AI can figure out how to tax corporations and make them pay our (average humans’) taxes instead, I am listening.

Socialism? No, common sense. Entities using our tax-paid infrastructure the most should be paying the largest taxes, since they are the ones using it the most. By “infrastructure” I don’t just mean “roads” or “power grid”, it is everything that makes it work: justice system, police, fire, MILITARY, etc etc. EVERYTHING.

AI, don’t bother with rendered Bollywood movies; those have been done many times over. Move on to greener pastures where the average Sam will see SOME KIND of spoils precipitating down to my level of the food chain. Waterfall, if you will. Say, affordable housing, ready set go, public transportation, ready set go, $15K cars, ready set go, run run run run.

Kids notice when it’s slop faster than adults do at this point in AI development. I think the FOMO is so strong because of the trading for perceived value that goes on in the tech space. Maybe it’s how we trade that’s also under fire. Right now a lot of my friends are out of work, and those who are at work seem to be worried that they are training AI to take what jobs they have left (animation), and I still haven’t been shown a real product from all this that doesn’t look like slop or busy work. I am curious how many guys in suits it takes to screw in a light bulb. Stop motion had 3D to contend with, and many animators could retrain. AI is a death note to 2D animators IF it works, but so far to do so it needs finished work to “train” (steal from), so who knows right now. I think it’s telling that after the mass layoffs in animation, one studio bought a canned cartoon another studio failed to release, and while these studios wait on AI animation to slop out, this weird little cartoon, “KPop Demon Hunters”, was released to a warm audience who devoured it as soon as they got it. There is apparently a Val Kilmer AI movie coming out that no one I’ve talked to wants to witness. Like a Cybertruck parked in front of city hall, we are all going to ignore it and look the other way.