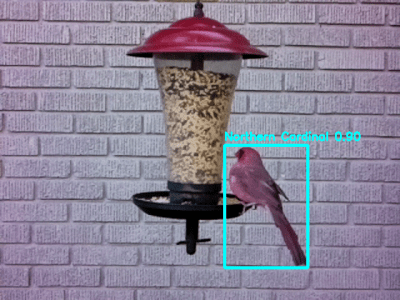

Real-time object detection, which uses neural networks and deep learning to rapidly identify and tag objects of interest in a video feed, is a handy feature with great hacker potential. Happily, it’s also possible to make customized CNNs (convolutional neural networks) tailored for one’s own needs, and that process just got easier thanks to some new documentation for the Vizy “AI camera” by Charmed Labs.

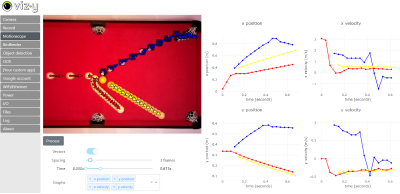

Charmed Labs has been making hacker-friendly machine vision devices for a long time, and the Vizy camera impressed us mightily when we checked it out last year. Out of the box, Vizy has a perfectly functional object detector application that runs locally on the device and can detect and tag many common everyday objects in real time. But what if that default application doesn’t quite meet a project’s needs? The good news is that it’s possible to create a custom-trained CNN, and that process is now a lot more accessible thanks to step-by-step examples that walk through training a model to recognize hands playing rock-paper-scissors.

The basic process is this: start with a variety of images that show the item of interest, then identify and label the item in each photo. These labeled photos (a “training set”) are then sent to Google Colab, which is used to generate a neural network. The resulting CNN model can then be downloaded and tried out to see how well it performs.
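To make the data-preparation step a little more concrete, here is a minimal Python sketch of what a labeled training set might look like before it goes anywhere near Colab. Everything in it is an assumption for illustration: the `LabeledImage` record, the file names, the box coordinates, and the idea of holding back a slice of images for checking the model afterwards are common practice, not something taken from the Vizy documentation.

```python
import random
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class LabeledImage:
    """One hand-labeled photo (hypothetical record layout)."""
    filename: str                    # made-up image file name
    label: str                       # class name, e.g. "rock"
    box: Tuple[int, int, int, int]   # (x_min, y_min, x_max, y_max) in pixels


def split_training_set(items: List[LabeledImage],
                       validation_fraction: float = 0.2,
                       seed: int = 0):
    """Shuffle the labeled images and hold back a fraction for validation."""
    if not 0.0 < validation_fraction < 1.0:
        raise ValueError("validation_fraction must be between 0 and 1")
    shuffled = list(items)
    # A fixed seed keeps the split reproducible between runs.
    random.Random(seed).shuffle(shuffled)
    n_val = max(1, round(len(shuffled) * validation_fraction))
    return shuffled[n_val:], shuffled[:n_val]


# Thirty made-up photos, ten per gesture, all with the same placeholder box.
labels = ["rock", "paper", "scissors"]
dataset = [
    LabeledImage(f"hand_{i:03d}.jpg", labels[i % 3], (10, 20, 110, 140))
    for i in range(30)
]

train, validation = split_training_set(dataset)
print(len(train), "training images,", len(validation), "held back for checking")
```

Keeping a few labeled images out of training gives an honest way to judge the downloaded model, since it has never seen them.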

Of course, things rarely work perfectly the first time around, so at this point it’s pretty common for some refinement to be needed to increase accuracy. Luckily, there are a number of tools to help do this without creating a new model from scratch, so it’s just a matter of tweaking until things perform acceptably.

Google Colab is free and the resulting CNNs are implemented in the TensorFlow Lite framework, meaning it’s possible to use them elsewhere. So if custom object detection has been holding up a project idea of yours, this might be what gets you over that hump.
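As a rough idea of what using such a model elsewhere involves, here is a small Python sketch of the post-processing step only. It assumes the raw arrays have already come out of a TensorFlow Lite interpreter and that the model reports normalized boxes as `(y_min, x_min, y_max, x_max)` alongside class indices and confidence scores, which is a common layout for TensorFlow Lite detection models but may not match every model. The `filter_detections` helper, the label list, and the sample numbers are all made up for illustration.

```python
def filter_detections(boxes, class_ids, scores, labels, image_size, min_score=0.5):
    """Turn raw detector output into labeled pixel boxes, best score first.

    Assumes each box is (y_min, x_min, y_max, x_max) with values in 0..1.
    """
    width, height = image_size
    results = []
    for box, class_id, score in zip(boxes, class_ids, scores):
        if score < min_score:
            continue  # drop low-confidence guesses
        y_min, x_min, y_max, x_max = box
        results.append({
            "label": labels[int(class_id)],
            "score": float(score),
            # Scale normalized coordinates to (x_min, y_min, x_max, y_max) pixels.
            "box_px": (round(x_min * width), round(y_min * height),
                       round(x_max * width), round(y_max * height)),
        })
    return sorted(results, key=lambda d: d["score"], reverse=True)


# Made-up output for a single 640x480 frame.
labels = ["rock", "paper", "scissors"]
boxes = [(0.10, 0.20, 0.50, 0.60), (0.30, 0.30, 0.90, 0.80), (0.00, 0.00, 0.20, 0.20)]
class_ids = [2.0, 0.0, 1.0]
scores = [0.91, 0.62, 0.08]

detections = filter_detections(boxes, class_ids, scores, labels, image_size=(640, 480))
for detection in detections:
    print(detection)
```

The score threshold is the main knob here: raising it trades missed detections for fewer false alarms, which is worth experimenting with before going back to retrain.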