Announced at the beginning of this year, Intel’s Edison is the chipmaker’s latest foray into the world of low-power, high-performance computing. Originally envisioned as an x86 computer stuffed into an SD card form factor, this tiny platform for wearables, consumer electronics designers, and the Internet of Things has apparently been redesigned a few times over the last few months. Now, Intel has finally unleashed it on the world. It’s still tiny, it’s still based on the x86 architecture, and it’s turning out to be a very interesting platform.

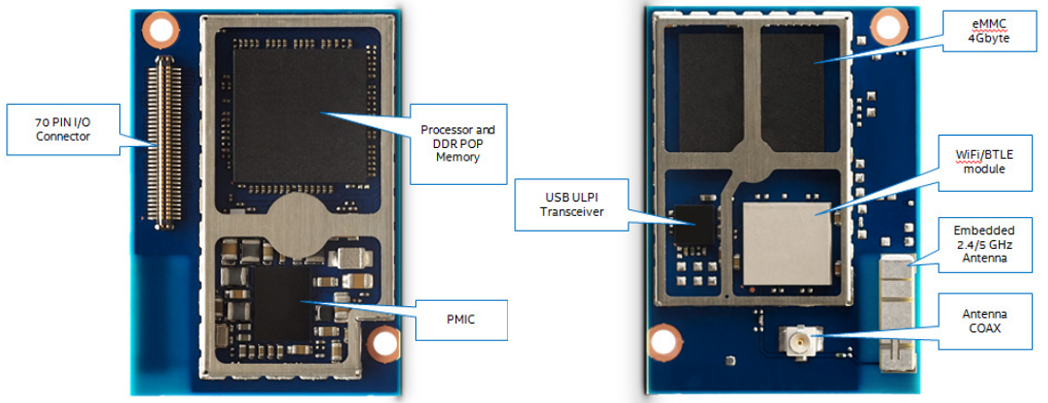

The key feature of the Edison is, of course, the Intel CPU. It’s a 22 nm SoC with dual cores running at 500 MHz. Unlike so many other IoT and micro-sized devices out there, the chip in this device, an Atom Z34XX, has an x86 architecture. Also on board are 4 GB of eMMC Flash and 1 GB of DDR3. The tiny module also includes an Intel Quark microcontroller – the same as found in the Intel Galileo – running at 100 MHz. The best part? Edison will retail for about $50. That’s a dual-core x86 platform in a tiny footprint for just a few bucks more than a Raspberry Pi.

When the Intel Edison was first announced, speculation ran rampant that it would take on the form factor of an SD card. This is not the case. Instead, the Edison has a footprint of 35.5 mm x 25.0 mm, just barely larger than an SD card. Dumping the SD card form factor is a great idea – instead of being limited to the nine pins present on SD cards and platforms such as the Electric Imp, Intel is using a 70-pin connector to break out a bunch of pins, including an SD card interface, two UARTs, two I²C buses, SPI with two chip selects, I²S, twelve GPIOs with four capable of PWM, and a USB 2.0 OTG controller. There is also a pair of radio modules on this tiny board, making it capable of 802.11 a/b/g/n and Bluetooth 4.0.
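For a rough sense of what “just barely larger” means, the arithmetic can be sketched as below. The Edison dimensions come from the paragraph above; the 32.0 mm x 24.0 mm figure for a full-size SD card is supplied here as an assumption, since the article does not state it.

```python
# Footprint comparison: Edison module vs. a full-size SD card.
# Edison dimensions are from the article; the SD card dimensions
# (32.0 mm x 24.0 mm) are an assumption not stated in the article.

EDISON_MM = (35.5, 25.0)
SD_CARD_MM = (32.0, 24.0)


def area_mm2(dims):
    """Return the rectangular footprint area in square millimetres."""
    length, width = dims
    return length * width


def percent_larger(a, b):
    """Return how much larger footprint a is than footprint b, in percent."""
    return (area_mm2(a) / area_mm2(b) - 1.0) * 100.0


if __name__ == "__main__":
    print(f"Edison:  {area_mm2(EDISON_MM):.1f} mm^2")
    print(f"SD card: {area_mm2(SD_CARD_MM):.1f} mm^2")
    print(f"Edison is about {percent_larger(EDISON_MM, SD_CARD_MM):.0f}% larger by area")
```

Under that assumption the module is a few millimetres bigger in each direction, which works out to a footprint in the mid-teens of percent larger by area.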

The Edison will support Yocto Linux 1.6 out of the box, but because this is an x86 architecture, there is an entire universe of Linux distributions that will also run on this tiny board. It might be theoretically possible to run a version of Windows natively on this module, but this raises the question of why anyone would want to.

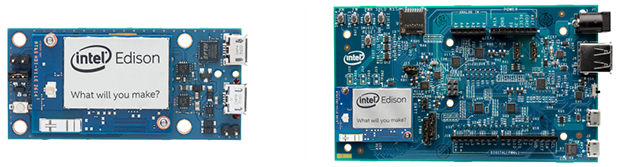

The first round of Edison modules will be used with either a small breakout board that provides basic functionality, solder points, a battery charger power input, and two USB ports (one OTG port), or a larger Edison board for Arduino that includes the familiar Arduino pin header arrangement and breakouts for everything. The folks at Intel are a generous bunch, and in an effort to put these modules in the next generation of Things for Internet, have included Mouser and Digikey part numbers for the 70-pin header (about $0.70 for quantity one). If you want to create your own breakout board or include Edison in a product design, Edison makes that easy.

There is no word on where or when the Edison will be available. Someone from Intel will be presenting at Maker Faire NYC in less than two weeks, though, and we already have our media credentials. We’ll be sure to get a hands-on then. I did grab a quick peek at the Edison while I was in Vegas for Defcon, but I have very little to write about that experience except for the fact that it existed in August.

Update: You can grab an Edison dev kit at Make ($107, with the Arduino breakout) and Sparkfun. (The Sparkfun link was down as of this update, but never mind: Sparkfun has a ton of boards made for the Edison. It’s pretty cool.)

Gumstix killer. 4 PWM is a little light though, they should have 3-4x as many.

That will be included in ‘The Tesla’

For real?

They probably just assumed that you would hook a Pic or Atmel to an I2C line if you wanted more. Goodness knows that’s what I would do. If you feel fancy then you could even use one for A/V-out.
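As a rough illustration of that approach, here is a minimal sketch of how the Edison side might frame a “set this PWM channel” request for a helper microcontroller on the I2C bus. The three-byte message layout and the `encode_pwm_command` name are hypothetical, not a real PIC or Atmel protocol; the firmware on the other end would have to agree on the same format.

```python
# Sketch of offloading extra PWM channels to a small microcontroller
# on the I2C bus. The 3-byte message layout below is hypothetical:
# it is not a real PIC or Atmel protocol, just one way to frame it.

def encode_pwm_command(channel, duty_percent, resolution_bits=10):
    """Pack a channel number and duty cycle into a 3-byte message.

    Byte 0 is the channel; bytes 1-2 are the duty cycle as a
    big-endian integer scaled to the given resolution.
    """
    if not 0 <= channel <= 255:
        raise ValueError("channel must fit in one byte")
    if not 0.0 <= duty_percent <= 100.0:
        raise ValueError("duty_percent must be between 0 and 100")
    if not 1 <= resolution_bits <= 16:
        raise ValueError("resolution_bits must be between 1 and 16")
    full_scale = (1 << resolution_bits) - 1
    duty = round(duty_percent / 100.0 * full_scale)
    return bytes([channel]) + duty.to_bytes(2, "big")


if __name__ == "__main__":
    message = encode_pwm_command(channel=5, duty_percent=50.0)
    print(message.hex())
```

The resulting bytes would then be handed to whatever I2C write call the platform provides; the helper micro does the actual timing, so the number of PWM outputs is limited by the micro rather than by the Edison’s four.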

Would it be possible to install Debian on this?

Absolutely. The questions are: how much work will it be to get all the peripherals to behave and how smoothly will it run?

Since the supported OS is also a Linux distro, it’s probably not that bad; just a matter of copying the necessary kernel modules and firmware blobs.

In fact, someone already put Debian on Galileo doing more or less what I described: install Debian, copy the kernel modules, fix a couple of issues and done: https://communities.intel.com/message/218148#218148

Blobs for what? Its only peripherals are USB and network, SD card and a few UARTs. And the radio I suppose. Doesn’t most of that stuff have source available for the Linux drivers? Not sure about the radio, but I’d guess “yes”.

If it were like the Raspi, with complex on-board graphics and sound, it’d need complex drivers and probably, yep, blobs, since the implementation details are an expensive secret.

It might not use a PCI interface, in which case you’d probably need to build a device tree for the drivers to lay themselves out correctly.

probably to stop OpenBSD from running on the hardware.

@willrandship: You can download the datasheets for the quark from ark.intel.com. In fact, you can download datasheets for just about all of Intel’s processors. I’d love to try my hand at doing something with the Atom z3735G…

Quark has 2 PCIe 2.0 x1 lanes, probably used by the bluetooth and wireless modules in this.

It uses Broadcom radio = good luck with open source drivers :D

https://learn.sparkfun.com/tutorials/loading-debian-ubilinux-on-the-edison

I have one reason to install Windows on such a device: Printer support.

No, seriously. I’m working on a photobooth and the super-fancy dye sublimation printer we have doesn’t have functional linux drivers. Being able to fit the print server on such a device would be really nice.

I would say that at this point in time any printer manufacturer that doesn’t either supply Linux drivers or have native Postscript or standard PCL support has an untenable product.

correction: I can’t read, forget my other comment.

I think it’s valid, assuming Jamie’s photo booth is running Linux on whatever hardware it has. And it probably is, everything else that needs an OS is.

This gadget, if he can get it to do what he wants, might be nice. But still he shouldn’t HAVE to, the printer should have a standard interface, or give the information for the Linux people to code a driver.

Do they still make those brainless “Windows printers” or whatever they’re called? Where the PC does all the rendering and most of the work, and just feeds simple ink-squirting data to the printer’s simple electronics? I know lasers and inkjets went that way for a while, dunno about dye-subs.

Just like those win-modems, another blast from the past, at one point Intel were after encouraging as many peripherals to do their processing on the main CPU as possible. Anything to use up all those cycles they kept selling us. The idea of using your $100s CPU to do the work of a $2 microcontroller is great when you’re the ones selling the $100s CPUs.

I’d accuse Microsoft of being in on it, writing slower software year by year. But I don’t think they’re capable of doing it on purpose.

The “winprinters” were basically a breakout board, a couple of stepper drivers and some specialized I/O for the print nozzles and some sensors for the cover, paper out etc. Without the host software directing the printer’s every move it could do nothing, not even print a simple nozzle test pattern. I called them brainless printers.

HP later made some “improved speed” or “enhanced” or somesuch nonsense almost brainless printers that put some of the printer control back into the printer. Those can be identified by their just having enough internal smarts to spit out a simple nozzle test pattern. I presume HP put printhead control in them while still having the host software in complete control of the motors.

I’d think either of those types could be a good hacking platform for light duty CNC, if you can figure out the motor control protocols and either write that into your software or write a G-Code to printer protocol module. Never know, the HP ones might be using HPGL or HPGL/2 commands to move the motors.

If the printer can print a self test page that includes text, test patterns and sometimes graphic images, then it’s either a “smart” printer or a semi-smart one with a firmware chip that does nothing but hold the data for the self test page.

Say what you want, doesn’t make any difference to the situation the OP is in…

I wouldn’t get my hopes up too much -> it is really slow, and if you’re unlucky the printer driver expects CPU features that all other Intels in the last 15 years had… (e.g. MMX extensions)

A VIA pico-itx board might be a better fit if this doesn’t work.

Too lazy to look it up.. but this is a proper Atom (686+ class) core instead of that buggy 586 thing they also have right?

Nope, it’s not an Atom (otherwise it would be called that). It is a 586, no MMX extensions, no SSE. So no ready-made kernels with many distros…

EDIT: I can’t read and just saw the bit about the Quark. Yes, it is a proper Atom, with all the features you want.

The 586 part would be fine really for the things this targets.. but their current stuff has at least one really crappy bug.

https://bugs.debian.org/cgi-bin/bugreport.cgi?bug=738575

So it being a proper Atom is good :)

Wow, really? I thought, for the silicon vs the improvements in program speed, it was worth putting those in any of their CPUs. In the embedded market, media stuff doing FFTs, codecs, MPEG and the like, would really benefit. Certainly more use than including a second core, or some sort of third core, whatever the Quark thing is.

This chip really seems like it doesn’t fit anywhere. It isn’t enough of anything, it isn’t any one thing. Lack of x86 compatibility is something the world’s been getting by fine without, and in the meantime we all standardised on ARM, as much as we needed to. And embedded stuff is mostly custom code anyway, there is no mass-market software.

Still pretty sure you can just trap the missing opcodes and emulate in software, as a patch for the time being. And hopefully software checks for the presence of MMX etc before it tries using it.
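Checking before use is cheap on Linux, where the kernel lists the CPU’s feature flags in `/proc/cpuinfo`. A minimal sketch of such a check follows; the helper names and the particular list of required flags are illustrative assumptions, not something taken from any real printer driver.

```python
# Check for SIMD extensions before relying on them, by reading the
# "flags" line that Linux exposes in /proc/cpuinfo on x86.
# The default list of required flags is an illustrative assumption.

def parse_cpu_flags(cpuinfo_text):
    """Return the set of feature flags from the first 'flags' line."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            return set(value.split())
    return set()


def missing_features(cpuinfo_text, required=("mmx", "sse", "sse2")):
    """Return the required flags that the CPU does not advertise."""
    flags = parse_cpu_flags(cpuinfo_text)
    return [name for name in required if name not in flags]


if __name__ == "__main__":
    try:
        with open("/proc/cpuinfo") as handle:
            text = handle.read()
    except OSError:
        text = ""  # not Linux, or /proc is unavailable
    missing = missing_features(text)
    if missing:
        print("Missing or undetected:", ", ".join(missing))
    else:
        print("All required extensions are advertised")
```

A program that finds a flag missing can fall back to a plain code path instead of crashing on an illegal instruction, which is simpler than trapping and emulating the opcode after the fact.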

“It isn’t enough of anything”

This is a really terrible critique of a computer, and I’m getting REALLY TIRED of hearing it. A 1 MHz 6502 is enough computer for some applications. Shoot, the world got lots of stuff done on old VAX 11/780 systems.

“in the meantime we all standardised on ARM, as much as we needed to. ”

What do you mean “we all”, paleface? Ever heard of MIPS? Or maybe PowerPC? Do you even read hackaday? Do you see all the atmel projects here?

The rest of your comment is not even worth the time to critique.

>“in the meantime we all standardised on ARM, as much as we needed to. ”

>What do you mean “we all”, paleface?

Even funnier when you consider how broad a “standard” ARM is.

Sure the 6502 is (or was) enough. There’s still a use for low-power 8-bit chips. They’re cheap and consume little current. That’s their niche, their purpose. But you wouldn’t use one in medical imaging, and you wouldn’t use a whatever-medical-imagers-use to run a Tamagotchi. Fast, low-current, or cheap, seem to be what people want. This is none of them.

THAT’S my point. The Edison seems to be in the middle of the road in every way. It doesn’t seem like it’s the best for any particular purpose. How much x86 software is there that doesn’t also need a PC?

I know MIPS and PowerPC are used in embedded chips, and maybe the odd supercomputer from 10 years ago. AFAIK ARM is much more popular. In any case, none of those are x86 compatible and it doesn’t seem to have hurt them. Which is my point.

As for the rest you can’t bring yourself to think about, I’ll just imagine some angry nonsense to fill the gap.

Ugh. As someone who ported for MIPS a couple of years ago, I would just let that one go. It would be nice if they could just get ARM (and its many permutations) hammered out finally.

As per this Edison device in particular, I would have to see it in action honestly. I haven’t had the pleasure of ever coming across an atom behaving properly. They seem to have some sort of problem offloading jobs between the cores when used but that is just my feeling and opinion based on the few I have had to work with-I have absolutely no proof of that statement.

It would be fun to muck about with an x86 lil fella again but I will probably wait until some robust repos and forums/fixes have been done. It should be snowing by then and back to coding time.

Of course, your detailed critique is based on your hands-on experience with the Edison, right? No? Then maybe you shouldn’t be so quick to judge something you actually know nothing about. For all we know, you could be right: It could be a waste of time and silicon. Then again, it could be something nice and useful to a ton of people. I know I’m looking forward to testing it out; $50 for an x86 platform the size of the RasPi sounds like something both fun and versatile.

Until you’ve had an opportunity to actually try one out though, all your supposition does is make you appear condescending and judgmental.

You’re allowed to be judgemental about inanimate circuit boards. It doesn’t hurt their feelings or denigrate their social standing.

I am, of course, basing my “critique” on what I know about processors, and Intel’s processors. I’m assuming there’s no hidden surprises, that it’s just a dual-core 500MHz x86. Everyone knows about x86s, 500MHz, and dual-core. It’s therefore fairly easy to speculate on what it might be like.

Buggy 586 thing? You mean F00F or FDIV? That was 20 years ago. There are much more interesting Intel platform bugs these days: http://www.phoronix.com/scan.php?page=article&item=msi_x99_fail&num=1 http://www.legitreviews.com/intel-x99-motherboard-goes-up-in-smoke-for-reasons-unknown_150008 http://www.techpowerup.com/204076/intel-haswell-tsx-erratum-as-grave-as-amd-barcelona-tlb-erratum.html

It’s Atom Silvermont. Good thing they didn’t break out graphics on this, since it has the much-hated PowerVR GPU on chip.

Any word on Coreboot support? It would be a shame if this shipped with a traditional vendor BIOS.

Digging through the Intel site, it looks like U-Boot (yay) on top of the IFWI blobs (boo). Good news is that it looks like these are “unbrickable” and can be recovered from completely corrupted flash. Look at the “edison-image-ww36-14.zip” (or later) on their downloads page.

>Buggy 586 thing? You mean F00F or FDIV? That was 20 years ago.

See link to Debian’s BTS above.

“It might be theoretically possible to run a version of Windows natively on this module, but this raises the question of why anyone would want to.”

You don’t need to have a tiny computer to raise the question of why anyone would want to run Windows.

DAE hate windows lol xD umad bro

Widest application support of any operating system in the world?

http://media.giphy.com/media/JWZmzj1KNzZBu/giphy.gif

Not everyone hates Windows.

All of the old fart elitists do because it’s the hip thing.

Exactly.. and I don’t know about the rest of you but I would rather write code in C# with a modern framework. Java is OK, but still not as nice or clever..

OTOH they are both dog slow and should never be considered when performance is important, especially on embedded systems.

Sure, and this being Hackaday, embedded systems are on the brain, but most people writing software for a living are writing applications rather than system-level stuff, and that’s the domain that Java and C# are intended for. There developer efficiency and source code maintainability are much more important than performance. (Not that performance is ever totally unimportant, but the amount lost or gained by the choice of language/platform isn’t significant).

Time-critical stuff goes on the cheap $4 micro you just wired to one of the I2C ports :P . From my view, this is most of all a hobby-level CPU to orchestrate cheap-but-slow microcontrollers: this is a 500-MHz dual core + 100 MHz single core which should all have built-in multi-processing support, whereas a quick Google search pulls up a post saying that the fastest 2013 Pic chip was 200-MHz, and the fastest that I at least have is 64-MHz.

And there’s a lot of memory. This can probably do the standard microcontroller stuff, but I think you’d ideally use it to e.g. control a robotic arm or CNC machine, with a bunch of cheap microcontrollers acting as the actual motor controllers.

I use the .NET Micro Framework all the time. It’s C# for microcontrollers. The runtime and low level hardware drivers are written in C/C++ so SPI at 50MHz isn’t a problem. And that runtime and those libraries are open-source. So you can always go native if you need performance for a specific task and just interop from C#. The idea is that most of the code we write doesn’t need to be fast. Using a high level language lets us get that 90% done; then, based on where bare metal performance is needed, a sprinkle of C/C++ is added. Honestly, I don’t think you can build embedded solutions faster.

Wifi and Bluetooth on board? Even if this were the same speed as a Raspberry Pi that right there is a Pi killer.

Not a Pi killer, since I can’t see any audio or video outs. More for embedded projects.

What about HDMI? Don’t call it a Pi killer just yet!

Yeah, it’s those cheap small computers that end up costing more to be practically usable. I hope they learned that from the raspberry pi.

When you don’t need a video out you don’t want a video chip adding costs, generating heat and consuming memory/cycles for its drivers for no reason.

But with this one, you can’t even connect a proper PSU without a break out board. If this had the micro USB on-board, I might take back what I said, since it can be interfaced through the on-board bluetooth, and wifi.

What exactly is the advantage of running an x86 Atom over some of the newer ARM boards?

The x86 compatibility would be relevant only if someone wanted to run DOS (not likely – no drivers) or Windows (too weak hw). And Linux runs on both ARM and x86 just fine, the development environments are also equally good.

The only reason for being interested in this thing is the price, but I doubt that they will maintain the $50 – that is pretty much being sold at cost or even at a loss.

Even so, aren’t there already ARMs in a similar form factor/price point that can beat the pants off this thing, performance-wise?

My initial reaction is that this is an attempt by Intel to reclaim some lost market share in the embedded market, but I doubt they’ll be able to steal much away from ARM.

Show me one, because I’ve been looking for a while. All the modules of this size that I’ve found are much more expensive.

Depends on the margins. SoC aside this is already a win at $50 given the onboard memory and wireless. The SoC’s Silvermont core should be able to lay a good pants-beating on the Cortex-A8 and A9 based boards IPC-wise that are in this price range. We’ll have to wait to see how it is in practice.

The problem is that it is practically unusable without the breakout board or something equivalent – so add another $50 or so to the price. Heck, it doesn’t even have proper power supplies built-in.

Comparing an essentially bare-bones SoC with a complete single board computer you get with e.g. a Cubieboard (or RasPi or many other similar machines) is not really apples to apples comparison. Once we get to the $100 price point, there are plenty of choices – Beagleboard, Cubieboard, Netduino, the various Allwinner boards …

It has a fairly fancy PMIC onboard and only needs 3.15-4.5V to run. If you’re good with an iron you should be able to do without the breakout board just to get it talking to a PC over USB.

i think you do not get that it is a 25 x 35mm module with wifi and bt

http://imgur.com/8ZVVr2z

Just because software is for Linux doesn’t mean it runs on ARM… Either because it contains assembler or is distributed as (gasp) binary only.

Also, it works the other way round: same price, same performance, what is the advantage of running ARM? ;)

That said, Intel is going to have a hard time in this market, but it seems like they are willing to throw money at it, so if that gets us cheap toys, good for us.

And what exactly are you planning to run on an embedded Linux that it is available only as an x86 blob? A wifi dongle that has only proprietary x86 driver? Or Matlab?

For ARM there are plenty – if nothing else, you can pick from many vendors, and switching from one to another is a lot less work than switching from Intel to ARM (or vice versa). Nothing is manufactured forever, and if you rely on this sort of board for some project, you need to reckon with obsolescence and the need to be able to replace the module in the future.

Then you have also power management where Atom historically sucks, tons of mature development tools for embedded (which are not so common for x86, as that is used more as desktop/Windows platform), etc.

You cannot distribute Linux binary only. You know that right?

Of course you can. Not “Linux” (kernel + most of the userspace covered by the GPL) but Linux software you can without problem. There is plenty of binary-only software for Linux (games, Matlab, Mathematica, etc.), but not much that would be relevant to an embedded developer in general case.

Usually you would sign an NDA to get vendor blobs for things like the Broadcom GPU in the Raspberry Pi or drivers for some specific hardware (e.g. network or graphic chips/cores), but if you are at that level, then the vendor likely supports ARM and perhaps MIPS, as that is what is most used. Actually finding stuff for x86 could be more problematic, because those chips are rare as hen’s teeth in the embedded world.

The Linux kernel? No.

Software that runs ON the Linux kernel? Yes.

Pretty sure you can, as long as you make the sources available to people who ask. This can be as simple as offering to mail CDs to people for the cost of the media plus postage.

Because Intel doesn’t develop or manufacture their own ARM chips?

from wikipedia: Intel still holds an ARM license even after the sale of XScale; this license is at the architectural level.

Intel is a multi-billion dollar operation with more resources than many countries, they can make any chip they want to.

And why would I pick Intel if I wanted ARM? There are plenty of ARM SoC vendors around.

why do you “want” ARM? Why get emotional about it? It’s an engineering choice, not a football team.

+1

I think I gave plenty of engineering reasons why. You haven’t given any point for why one should choose an oddball x86 board though.

I am not in love with ARM, I just can’t see a meaningful use case where this board would be actually advantageous.

@Jan:

“I am not in love with ARM, I just can’t see a meaningful use case where this board would be actually advantageous.”

I don’t get why so many people here are against yet another choice in embedded boards. I’m sure you won’t get one, as you say you don’t see it meeting your needs. But maybe others might be interested in hacking away on it, if nothing else for the uniqueness of it? I agree with “F”, I think it’s more about an emotional attachment to ARM than common sense.

If this board doesn’t suit your needs as a developer, by all means skip it and use what works best for your needs. In the meantime, those of us who do see a use for it (I certainly do in a couple of scenarios at my job) will be happy to test it out. We won’t even bash ARM in the process. ;)

Intel is at the top of semiconductor manufacturing, I’m sure they could make ARM SoCs cheaper than pretty much anyone else

Somehow I doubt that, or they would have already. The ARM market is HUGE.

“Intel is at the top of semiconductor manufacturing,”–Yes

“I’m sure they could make ARM SoCs cheaper than pretty much anyone else” — not so much

Intel is years ahead in process technology (the 22nm Atom used here), but the advanced logic stuff is still made in the United States and Europe (assembled and packaged elsewhere), so not as cheap as you might think. Now, the latest PC ‘ARM’ chip would compare to an Intel chip made 2-3 years ago (and that is most likely being produced in China by now; 32nm +).

Intel is selling these embedded and mobile products for cheap (at cost or below for now) to push enough volume to actually make money (i.e. not trying to rake in the dough with these products, just trying to keep the factories loaded and busy).

Intel can’t sell their premium product at a premium price because they haven’t proven themselves in this market either ….. yet

Yes, the ARM market is HUGE, and I can only imagine they are not in it for political reasons: it would be admitting defeat. It would be like Apple making a phone with Android

Considering that metal can around the processor, my guess is that the redesign was needed because it didn’t pass FCC part 15 testing.

the metal can is for the wifi chip

seriously just about every wifi module is in a metal can, why should this one be different?

Transceivers require shielding to work properly, genius.

It is not really required for it to work. However, you want shielding to cut down on external noise getting into the receiver (bad, could swamp useful signal) and also to cut down on unwanted radiation (or you blow your EMC compliance testing!). It also helps with avoiding de-tuning of various things by fingers or proximity of metal.

Good explanation is here: https://www.youtube.com/watch?v=fpD_mDCViPE

Please note the inclusion of the word “properly” in my above post.

Has anyone found a way to order one yet? Makershed says it’s an add-on item only.

You can backorder one on Sparkfun:

https://www.sparkfun.com/products/13097

99.95?! For that money one can buy two Android tablets with similar CPU power. Of course, hacking tablets is not so easy and fun.

Sparkfun link is open now. They have this posted: “We have a purchase for 500 units. We expect some of these to arrive next on Sep 13, 2014.”

Their price – $49.95.

Take my money.

I found the Sparkfun link just after I posted and back ordered a couple of modules. I guess they’re just bringing up the products.

The question of whether or not it will be able to run DOS or Windows depends not only on the x86 instruction set but also on whether it is PC compatible. There is more to a computer than the CPU and storage you know; if the peripherals aren’t presented to the OS as legacy PC hardware then DOS would certainly choke, and Windows might too if it didn’t support ACPI and other such standards.

Yep. I was wondering if they were running a variant of the EDK2. I know they have done something similar for a past Atom platform. ACPI, or something similar will be a must if they want to be able to effectively decouple the OS from the hardware.

A friend of mine works for a company that was pursuing ARM, Android, and Linux development. The company gave up because they effectively had to rewire core parts of the OS for each SoC. It means you can’t segregate development as nicely as you can with a PC (the OS guys take care of their stuff, the firmware guys take care of their stuff, the hardware guys take care of their stuff, etc).

–Shudder– Windows for embedded…legacy no less –Shudder–

Microsoft has released a version of Windows for Intel Galileo gen 1; they could do so here too.

There is also a lot of open source backbone and support for this (or there will be), so making an application-specific Android/Linux OS for whatever you want will not be such a pain.

Crikey! That’s more powerful than my first 3 PCs! In every way! I wonder does it have a graphics output?

Shouldn’t be too hard to add a home-made video card thru GPIO if necessary, or some other interface. If you were gonna run Windows on it, you’d just need to write a driver for it that provides the minimum functions necessary. I’m thinking for Windows 3.1, but maybe XP would get up and running on it, if you kept it lean.

Even graphics on MS-DOS might be possible, if you trapped the I/O and memory ranges for the standard VGA card, and emulated a VGA from there, driving the actual hardware. Maybe that’s a job for a spare processor, and it can do Soundblaster while it’s on it. And IDE.

My use would be a PC gameboy, or a PC wristwatch. Not decided yet.

It’s difficult actually, cos for most low-powered x86 code you could just run an emulator on something like ARM. The majority of this horrendous albatross of legacy code we supposedly have round our necks is just applications for PCs. Nobody runs those in embedded stuff anyway. So I wonder what the imagined use is for a chip that’s basically capable of running 15-year-old PC applications?

If they add a graphics core in as an option, it’d play Return to Castle Wolfenstein or Quake 3. That’d be worth having in your wristwatch. How tiny do they make those Retina displays?

You know, I bet one of those Android Wear watches would be easy enough to get to run the Quake 3 Android port…

Yea, make a WinXP touchscreen watch, similar to this hack http://hackaday.com/2014/09/08/upgrading-the-battery-in-a-wrist-pda/

useless, sure, but cool (I guess).

It’s not the Windows, it’s what you can run on the Windows.

So theoretically I could run Windows Embedded on this, due to this being x86 compatible.

Which brings me to my next question: What is the state of windows embedded these days… I can’t imagine very good!

It’s not bad… we use it all the time in industrial automation to run some Windows-only software (or software to interface with Windows-only drivers) on fanless embedded PCs. Atom N270 master race.

But is embedded Windows practical, or even possible, on a board with no video output?

windows embedded runs on credit card scanners and gas pumps and many other devices with no video display

Is that Sean “RGVC” / “Classic gaming” Kelly? Nice to see you! I remember when that old group was alive and had posters and stuff!

haha, no, no clue what RGVC is.

Big question is what market do you *really* plan to shake up @ $50 per module? This may be a play for the maker community but adding $50 to my BOM cost isn’t going to happen any time soon.

You can build a $15 mobile phone running an A7 core clocking ~1.5GHz able to address 4GBytes of Nand Flash with multiple SDIO interfaces, huge range of peripherals, and also with video, audio, etc. Also able to run Linux and with a bigger peripheral set. Oh, and yes it can also do Wifi and BT4.x (read: BLE).

***WTF intel?! Where’s the production-worthy version of this? Nice try, but I vote ‘fail’. I don’t need another bloody Wifi dev kit, even if it runs Linux. They’ll sell but they fail the “product” test.

Look at Mediatek or Ralink if you want low cost Wifi / BLE capable of running linux. And that has an OpenWRT build already out there, datasheets are public and the SDK is easy to get. 3G is cheaper from Taiwanese and Chinese companies with integrated Wifi and BLE. Pffftt.

I think you’re missing the point. This is not intended to just build into a product – at least not a mass produced product. If you have the ability to produce a mobile phone, you surely don’t need to use a module such as this, you’ll just design the phone from scratch, which will make it much smaller and cheaper.

In my mind, the big win of this product is it provides a bridge between products such as the RPi and a production product. If you want to prototype a IoT or wearable product, this is much more compact than an RPi, and saves you having to solder BGA packages, etc to produce a “smallish” prototype.

There also appears to be a market for products such as the Gumstix, and this module is much cheaper.

The point there is that there are solutions taken from the mobile phone marketplace that can replace this and do it at a cost (both in terms of dollars and cents, and comparable cost to get it up and running) that are already far more competitive (more features, better support, additional xceivers) and which WILL allow the enterprising entrepreneur a path toward production. This model from Intel fails at this.

True: if all you want to do is control *your* toaster or *your* lights or *your* home heating, then great…this is a nice and expensive and seemingly user-friendly way to do it. *However* the point I’m making is that both a) that market is already well served by the existing hardware and you needn’t jump on this new form factor to get there and b) you will never get from garage band to store shelves adopting this model, so say hello to your day job, you’ll be there a while.

At least not unless you are charging a premium as someone else said, and even that model is a rubbish excuse for someone to gouge industries like medical, mil/aero, etc. Wonder why your doctor charges so much? It’s the mentality that it’s ok to haphazardly add $50 to a BOM cost. If you want to charge more, fine. But do it for margin and not simply because a device is en vogue. What’s missing is all of the reasons this is better than what already exists. Maybe it’s because it can be easily plugged into a breadboard? Seems again like a market well served.

So my gut reaction is to say…ok. Another Wifi board. A little more horsepower. Not sure I need it. Not with these peripherals. Better cpupower-to-peripheral performance can be had. A long wait to come up short.

Actually, such a chip is ideal for small-run designs: designs for niche markets and industries where you make runs of 200 to 1000 pcs a year (and sell at high margin because these are custom made and/or run some very specific technology).

Oh, priced-high-because-we-can…Sorry – forgot about that design strategy. o-0

You mean… you forgot how capitalism works? 0.o

I think the minnow max is the most interesting Intel dev board.

http://www.minnowboard.org/meet-minnowboard-max/

Full bay trail atom (single or dual core), 1 or 2gb RAM, USB3, SATA, HDMI, Ethernet and GPIO. Also open source. It can run full windows just fine.

It’s obviously no ‘Pi killer’ but I’d definitely consider it against the ARM boards at the same price point. The new Atoms are nice chips IMO.

It’s made by CircuitCo, the BeagleBoard people.

Minnow Max is only interesting if your definition of “interesting” has no concern for the nearly $200 price tag.

What other dev board is cheaper with those features and performance?

Odroid XU3 looks OK, only 10/100 Ethernet though, and I’m not sure the Exynos is as open or performs as well as the Intel board.

While it’s mildly interesting that Intel created this specifically for the Maker community (and as a possible competitor to the Raspi and BBB), I can’t help but wonder what the problem is for which this is supposed to be a solution. So it comes with Linux; well, every other tiny computer that’s smarter than a microcontroller does so too, and there is a lot of useful Linux-based software for embedded platforms running some sort of ARM CPU. So it can possibly run FreeDOS or an old Windows; but there are plenty of emulators that can do that, and probably better.

I’m excited to see an embedded x86 platform. It would be fun to get one and play with it, just to try running some old DOS/Windows code that might no longer run, or to see if I can get a Windows CE or Windows Embedded to run. But I wonder if it will be perceived by the community as a “me too” gesture that will soon be written off as “too little, too late”. We’ll see.

Well this is even more stupid than Galileo.

You want to know what happened to Quark/Galileo intel planned to push a year ago? They GAVE IT to M$, M$ in turn gave it to developers pretending they have some sort of IoT platform/plan.

Intel lost 2 Billion dollars this year alone giving away processors nobody wants, mostly Atoms in China.

http://www.extremetech.com/extreme/186367-intels-mobile-division-has-lost-an-astonishing-2-billion-dollars-so-far-this-year

http://www.kitguru.net/components/cpu/anton-shilov/intel-sells-quad-core-atom-for-tablets-for-5-per-chip-report/

It’s so slapped together in a hurry they couldn’t even get a working USB inside that SoC and had to resort to an external transceiver – Intel, the biggest baddest mofo with the best fabs on the planet, couldn’t/didn’t care to even get USB right on this thing.

“Intel lost 2 Billion dollars this year alone giving away processors nobody wants”

http://finance.yahoo.com/echarts?s=INTC+Interactive#symbol=INTC;range=5y

You seem to be the only one concerned about this, the market thinks they are doing just fine.

I can’t wait for China to start investigating Intel for abusing its monopoly.

Shouldn’t they be the ones wearing the bucket? That is pretty funny. They probably do call for investigations but their storage devices for the evidence are fake and overwrite after 345kb lol. Nope China should probably never utter a word about copyright infringement or monopoly until LA gets renamed Shenzen Square and we are making their iphones. I give it another 12 years tops once all of the world figures out facebook and twitter are retarded and their bloated models crash. We are then left with Apple and probably MSI as Dell launches its Steam box in 2030 complete with Bargain Buddy lol. Good times.

That is a lot of supposition there, Lou. Probably should stick with your lil toy ;)

The Atom CPU has 1.8V IO, so (AFAIK) they would need some sort of external USB phy.

No other SoC has any problem with it. USB is differential, not absolute voltage, difference between 0 and 1 is 400mV.

This module also uses a Broadcom radio. Again Intel, biggest baddest mofo with tons and tons of IP, engineers and in-house designs, doesn’t believe in this product enough to integrate one of their own Wifi IP blocks in the SoC? WTF?

This feels like some undermanned side project they will abandon in a few months, like they did with Quark/Galileo.

A 70 pin header is not DIY friendly for most people… Intel could not get a good idea through their BS management… FAIL… I’ll pass…

Then buy a breakout board. The pin spacing is similar to standard QFP pin spacing, which many DIYers solder just fine. Can you suggest a better idea for a header to put on this (tiny) board that doesn’t make it much bigger?

The “good idea” here is the small size. If you want a big board, then there are plenty of alternatives, but if you want something this size there aren’t very many options.

If you have to build a custom board… why buy from Intel?

Who said anything about building a custom board?

For the hacker that needs a spiffy controller for, say, a 3D printer, or some kind of sensor/robotics project, this could be quite useful. But for anyone looking to replace the RPi, the complete lack of A/V output makes this a no-go. Even at 500 MHz, with only a single core, the x86 instruction set should give this platform a huge advantage. It can’t be denied that even with properly optimized code for each system, running at the exact same MHz/GHz rating, the x86 part will beat the ARM part every time given the exact same workload.

It’s all about the work completed per CPU cycle. x86 was designed first for maximum work completed per CPU cycle, while simplicity and efficiency came later. ARM was designed first and foremost as a power-efficient design and therefore required a simpler architecture that results in lower work per CPU cycle when compared to x86 parts of equivalent speed or transistor count.

Take that into account, add the fact that it’s a dual core chip with twice the RAM, and computationally the Edison is a better part. Unfortunately, lack of AV out and a higher price point instantly relegates the Edison to industrial short-runs/one-offs and small time projects lacking need for video or audio. I would have been punching my CC number into the first website I found with it in stock right now if it had AV out. What a waste.

As a side note, the minimum specs for XP Pro are a 233 MHz CPU with 64 MB of RAM, 1.5 GB of HDD space and a graphics card capable of 800×600. So, disregarding the lack of built-in video out, Windows XP would run just fine on the Edison.

Umm, any actual source for this? “It can’t be denied” is not exactly a good argument.

“windows xp would run just fine on the Edison.”

Maybe you can suggest an operating system that is actually available for purchase

I bet it could run Windows 3.1 pretty well too.

I haven’t suggested that – wrong comment, perhaps?

It is available, depending on how hard you look, or how much you want to violate the law. And besides, whether or not YOU can buy it isn’t relevant to whether it will run on the Edison.

You might want to do some research yourself. Not every x86 is the same. 1.6GHz Atoms ran at a speed comparable to a ~600MHz Pentium 3.

The older (32nm) 1.8GHz or so Atoms are on par with P4s. The one on the dev board in question probably is indeed most comparable to a pair of 600MHz P3s.

It’s called research. Do some. It’s good for you. If you disagree, then go find out for yourself. I don’t care to get into an argument over the merits of one architecture over another here, nor do I feel like educating you on the subject simply because you seem to dislike my confidence in my own knowledge on the subject.

The actual benchmarks (e.g.: http://www.computingcompendium.com/p/arm-vs-intel-benchmarks.html) show exactly the opposite, so I am not sure why you are pushing this information.

Moreover, ISA is of little relevance in an embedded system – the actual architecture (e.g. how exactly memory and peripherals are connected to the CPU) will have much more impact.

Did you ever try to run an RPi on a small single cell LiPo battery for more than … a few minutes?

No !

Now you have this

Actually… My RPi running XBMC over Debian runs on a single cell 1200 mAh lithium ion for about an hour, provided I don’t play 1080p video on it. Also, LiPos are not really made for endurance but rather light weight and high output. Sure they’re getting better, but there’s a reason Li-ion is still the go-to battery in consumer electronics, and LiPo is still mostly used in RC cars/planes.
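
The runtime quoted above implies an average current draw, which is easy to sanity-check. This is only a rough sketch: it assumes the full rated 1200 mAh is usable and ignores regulator losses and voltage sag, and the function names are made up for illustration.

```python
def average_draw_ma(capacity_mah: float, runtime_h: float) -> float:
    """Average current (mA) implied by draining capacity_mah in runtime_h hours."""
    if runtime_h <= 0:
        raise ValueError("runtime_h must be positive")
    return capacity_mah / runtime_h


def estimated_runtime_h(capacity_mah: float, draw_ma: float) -> float:
    """Runtime (hours) for a given average draw, under the same idealized assumptions."""
    if draw_ma <= 0:
        raise ValueError("draw_ma must be positive")
    return capacity_mah / draw_ma


if __name__ == "__main__":
    # Figures from the comment: 1200 mAh cell, about one hour of runtime.
    print(average_draw_ma(1200, 1.0), "mA average draw")
```

Any board that draws less than that average on the same cell would run proportionally longer, which is the comparison the thread is really arguing about.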

At Intel’s absolute best their performance will have parity with ARM, clock for clock. The Atom CPUs in this board get ‘up to two’ instructions per cycle. The Cortex-A9s in some other boards have two to four AND they are clocked faster. It is absolutely not true that Intel is more efficient than ARM, and certainly not more powerful at this clock speed.
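
The clock-for-clock argument boils down to peak throughput = instructions per cycle × clock rate. Here is a minimal sketch of that arithmetic using the figures from this comment and the article (Atom: up to 2 per cycle at 500 MHz; Cortex-A9: 2 to 4 per cycle). The 1 GHz A9 clock is an assumption, since the comment only says "clocked faster". These are peak numbers, not a benchmark, and instructions on different ISAs do different amounts of work.

```python
def peak_instructions_per_second(ipc: float, clock_hz: float) -> float:
    """Theoretical peak instruction rate for one core: IPC times clock."""
    return ipc * clock_hz


# Figures quoted in the thread.
ATOM_IPC = 2            # "up to two" instructions per cycle
ATOM_CLOCK_HZ = 500e6   # 500 MHz, from the article

A9_IPC_LOW = 2          # low end of the "two to four" claim
A9_IPC_HIGH = 4         # high end of the "two to four" claim
A9_CLOCK_HZ = 1e9       # ASSUMPTION: 1 GHz, not stated in the comment

atom_peak = peak_instructions_per_second(ATOM_IPC, ATOM_CLOCK_HZ)
a9_peak_low = peak_instructions_per_second(A9_IPC_LOW, A9_CLOCK_HZ)
a9_peak_high = peak_instructions_per_second(A9_IPC_HIGH, A9_CLOCK_HZ)

if __name__ == "__main__":
    print(f"Atom peak:      {atom_peak:.2e} instr/s per core")
    print(f"Cortex-A9 peak: {a9_peak_low:.2e} to {a9_peak_high:.2e} instr/s per core")
    print(f"Ratio (A9 low end / Atom): {a9_peak_low / atom_peak:.1f}x")
```

Under these assumptions the gap comes as much from the assumed clock as from the per-cycle figures, so the result is only as good as those inputs.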

MIPS or instructions per clock can be very misleading across different architectures. Perhaps, and I’m pretty sure it’s the case, x86 instructions do more work than the simpler ARM ones. You’d need some real-world test of some sort. That said I don’t know if the embedded world is big on benchmarks, since there’s such variation in their uses.

Intel docs say this chip has out-of-order execution, two cores, SSE 4.2 and 64-bit extensions.

Awesome this changes almost everything for my personal robotics project, singularity here we come.

:)

66 comments and counting, I’d say that Intel has at least hit a nerve with this device.

More like a ton of fools thinking they will be able to run Windows on it because it is x86, so it has to work, right? (never mind the lack of anything resembling a PC architecture …)

pretty much this.

People see x86 and think ‘wow I can run this program I downloaded 5 years ago without recompiling’.

It’s not foolish at all to think this will run Windows because it likely already can. The Windows Embedded available now for Intel Galileo runs Win32 based Windows applications just fine. Edison is just a more compact and more powerful version of the Galileo…

If this little thing has at least one PCIe lane, I will hook it up to a MXM CUDA capable GPU module, just for fun.

Damn, that’s a lovely pricetag for that. That’s about what you’d pay for an Arduino with wifi/bluetooth, or for that matter, a RasPi with bluetooth/wifi.

The only thing it’s missing is an HDMI/VGA output, but that may be tameable with a PCIe card, or something simpler. And besides, the only thing people really use the VGA/HDMI for is media stations, MAME cabinets and the like. Otherwise, a terminal can handle you quite nicely.

Shame it won’t be available for the rest of the year, those things have got to be backordered into the next century.

You’re not getting a PCIe card on this thing. I linked to the pinouts. It’s just not there.

Hm. According to the FAQ it should be there. If it’s not on the 70-pin, I wonder where it is. It’s also not listed in the design brief, so I wonder what’s up.

http://communities.intel.com/docs/DOC-23201?sr=stream&ru=42620

Yeah… so evangelist for the Edison here. I’m guessing we copied the Galileo FAQ and didn’t make sure a bunch of stuff was correct. Kinda annoyed this happened.

There’s no PCI-e, unfortunately.

Why would anyone choose a 500MHz x86 over one of the numerous GHz ARMs?

And $50? You can get a dual core 1GHz+ tablet with more memory for less.

Hear, hear! +1

I suppose because they have a project in mind that a tablet is unsuited for. I would consider this board from Intel to be more of an embedded microcontroller with some handy features than a tablet myself.

Sure, the form factor is different. My point is that if someone can build a device with double the performance, a battery, a display, and an enclosure, and put it in a box with a charger for less, then Intel is probably doing something wrong.

You’re still comparing two very different products. I’m sure the bottom-of-the-line tablet market has an orders-of-magnitude larger market base. What you should be comparing to is the embedded market. Take a look at the price of other SOMs, or just look at the price of the majority of the mbed prototype boards that are generally not much more than a cheap microcontroller, and most cost similar to or more than the Edison.

I know they are two very different products, but the base is the same: an SoC, some memory and a PCB. And I doubt Intel intends to make money making these; they are basically advertising, hoping to win someone back from ARM.

Because sometimes you don’t need more processing power. This is very small, that’s a plus. And the fact it’s x86 is a huge plus for me. Cross compiling for ARM is a PITA; so many libs have ARM compatibility issues that I’ve given up on ARM. I have two ARM devices and trust me, I won’t be buying another.

Why cross compile then? Install gcc and build on the target. It’s so much simpler, and it’s not like a GHz ARM is that slow.

I could buy a Hershey bar for less as well, but that doesn’t do much good if I’m looking for a tiny Linux SOM! Show me another SOM with similar specs for less and I’ll buy it.

umm, how about because the Edison can do double the calculations per second of the CPU on the Raspberry Pi.

So does a $50 Android tablet, so?

Problem is that those shitty ‘hirose’ connectors are no good for multiple insertions & extractions.

They break REAL easily. Not to mention the issue of the breakout boards only having the facility to connect one PCB rather than them being stackable.

Absolute nightmare… I already have a design using this sort of connector, and good luck on replacing it without lifting tracks….

The $0.70 is bull as well, unless you want 4,000. I’ve been trying to get hold of a set of Molex parts of similar construction, but no one is interested, including Molex…..

So buy the parts from a distributor like everyone else? Of course they’re not going to talk to you if you want to buy less than their minimum quantity.

http://www.digikey.com/product-detail/en/DF40C%282.0%29-70DS-0.4V%2851%29/H11908CT-ND/2530299

http://www.digikey.com/product-detail/en/DF40C-70DP-0.4V%2851%29/H11630CT-ND/1969509

I remove these connectors all the time without breaking traces. I agree it’s difficult to get the solder to flow. The combination of preheating the board using a hot air reflow station and flooding the connector with solder makes removing it with an iron possible. Afterwards you can clean everything up with solder wick and use paste to put the new part down.

It would be nice if they brought out a PCIe x1. Wire up a dual Gigabit NIC and then it would make a great router.

Poor choice of naming such a beautiful board after such a douchebag dude Edison

http://theoatmeal.com/comics/tesla

I wonder if you could take sixteen or so of these and build yourself a (very) small cluster…

Or you could get an 8-core PC that’d wipe the floor with it.

(Yawn) I think I still have some 500MHz Pentium II boards somewhere.

Honestly, the embedded world is constantly moving towards ARM, even MIPS is now a rare guest, and Intel seems to be the one small village of indomitable Gauls…

And their desktop department has really run out of ideas. It just doesn’t seem possible to go much over 4 GHz, and there’s only so many CPUs you can parallel together and still have it make any difference. That number’s 4, btw, although 2 isn’t far behind.

Either CPUs in the future are going to have to parallelize EVERYTHING, where each line of code gets its own CPU that spends its life second-guessing every other CPU, or we’re going to have to split programs into small parallel blocks. Which only works so well.

Or we might just be stuck with the chips we have, for a long time, and learning how to write software that runs on them will be the next challenge.
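
The diminishing return from adding cores described in this comment is usually modeled with Amdahl's law (the comment itself does not name it). A minimal sketch, assuming a hypothetical program in which half of the work can run in parallel; the 0.5 fraction is purely illustrative, not a measurement of any real workload.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Amdahl's law: overall speedup when only part of a program parallelizes."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    PARALLEL_FRACTION = 0.5  # ASSUMPTION: half of the work is parallelizable
    for cores in (1, 2, 4, 8, 16):
        speedup = amdahl_speedup(PARALLEL_FRACTION, cores)
        print(f"{cores:2d} cores -> {speedup:.2f}x")
```

With that assumed fraction the speedup can never exceed 2x no matter how many cores are added, and most of the gain is already there by 2 to 4 cores. A more parallel workload would push that knee further out.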

I’m sure one of the primary drivers behind this is that Intel sees the writing on the wall that the average user doesn’t need faster CPUs anymore, unless you’re a hardcore gamer. The slower CPUs in tablets and smart phones work just fine for reading email and surfing the web. If that’s the case, why do you need a 4GHz 8 core CPU in your desktop system? Most people don’t even need a desktop system anymore. The future is small, low-power and cheap.

Tablets do seem to be doing remarkably well. My friend’s going to get one as an Xmas present for her 1-year-old grandson! A special, baby tablet, of course (!).

It’s nice how they managed to almost completely kill off people’s desires to own a PC. Especially with cellular modems, which are huge in the UK, you don’t need a wired Internet connection any more. Ironically most of the cellular Internet users I know are not international businessmen, but people too poor to afford a wired connection. Cellular is cheaper! It’s also available as pay-as-you-go, so you don’t even have to pay for a month if you don’t want to. Wired Internet (only 15 quid a month, plus 20 for the obligatory landline phone!) really needs to learn a lesson.

Turns out the Windows / Intel hegemony meant shit! We really were only buying it cos there was no other choice. As soon as Android popped up, off we all went! Since “being the only choice” was a major part of MS / Intel’s business strategy, this has got them panicking! Who’d have thought we’d see Windows for ARM? And that nobody would want it!

Overpowered, lumpy, heavy garbage that relied on a monopoly, being defeated by free-ish software and nimble Oriental manufacturers. Same thing happened to American cars. “We’re shit, but you have no other choice” is no longer good enough! Hurray!

Of course we now have to worry about a country with an evil totalitarian government that tortures people and doesn’t recognise human rights, having nearly all the world’s manufacturing capacity. But that’s a bigger issue!

“Of course we now have to worry about a country with an evil totalitarian government that tortures people and doesn’t recognize human rights, having nearly all the world’s manufacturing capacity.”

Up to “world’s manufacturing capacity”, I thought you were talking about the US.

Is it possible to communicate between the microcontroller and linux-sides of the system?

what about this one

http://www.acmesystems.it/arietta ?

Small, less powerful but also cheaper

That is about the closest I could find to what I want, and I almost ordered some a few months back, but it is significantly bigger, and not much cheaper when you include shipping and EUR to USD conversion. The 100 mil connector is easy for mating with, but it also takes a significant amount of board space on any mating board.

Would this thing work with an RTLSDR dongle? I mean, WiFi, USB power and USB OTG. This would make an rtl_tcp server even more portable!

Well, you can use an RTLSDR dongle with an Android phone or tablet…

sure, you could use this intel thingie, or a $20 TPlink router running openwrt

Current Yocto image for the Edison (edison-src-weekly-68.tgz) has bluez5 that does not support HFP/HSP (Bluetooth headset). Even when bluez5 is replaced by bluez4+alsa-lib the headset does not work. But A2DP and BLE work.

When another Bluetooth dongle is connected to the Intel Edison, the headphone works, so it is not a bluez4 or alsa-lib library issue.

I suspect the problem is in the bcm43341 BT firmware in the Intel Edison.

tl;dr: don’t use a Bluetooth headset with the Intel Edison, it doesn’t work atm.

So what’s on the 40 pin connector they didn’t populate? Maybe PCIe?