What if you could give yourself a standard eye exam at home? That’s the idea behind [Joel, Margot, and Yuchen]’s final project for [Bruce Land]’s ECE 4760—simulating the standard Snellen eye chart that tests visual acuity from an actual or simulated distance of 20 feet.

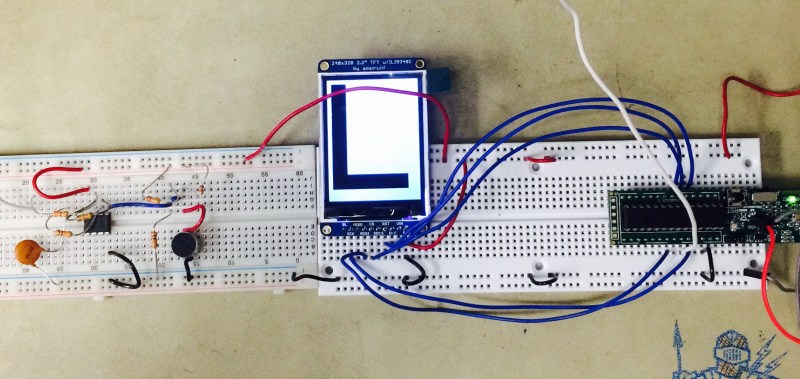

This test is a bit different, though. Letters are presented one by one on a TFT display, and the user must identify each letter by speaking into a microphone. As long as the user guesses correctly, the system shows smaller and smaller letters until the size equivalent to the 20/20 line of the Snellen chart is reached.
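For a sense of how small those letters get, here is a rough sketch of the usual Snellen geometry: a 20/20 letter subtends 5 arcminutes at the viewing distance, and larger lines scale in proportion. The function name `letter_height_mm` and the list of chart lines are illustrative assumptions, not taken from the students' code, and the write-up doesn't say how they map sizes onto TFT pixels.

```python
import math

ARCMIN_20_20 = 5.0   # a 20/20 optotype subtends 5 arcminutes of visual angle
FEET_TO_MM = 304.8   # millimetres per foot


def letter_height_mm(denominator, distance_ft=20.0):
    """Height of a 20/<denominator> letter when viewed from distance_ft.

    Hypothetical helper: the angle scales linearly with the Snellen
    denominator, so a 20/40 letter is twice as tall as a 20/20 letter.
    """
    angle_arcmin = ARCMIN_20_20 * (denominator / 20.0)
    angle_rad = math.radians(angle_arcmin / 60.0)
    return 2.0 * distance_ft * FEET_TO_MM * math.tan(angle_rad / 2.0)


if __name__ == "__main__":
    # Assumed set of chart lines, largest to smallest.
    for line in (200, 100, 70, 50, 40, 30, 25, 20):
        print(f"20/{line}: {letter_height_mm(line):.1f} mm at 20 ft")
```

Passing a shorter `distance_ft` shrinks every letter proportionally, which is how a "simulated" 20 feet can work on a small screen viewed from closer up.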

Since the project relies on speech recognition, the group had to consider things like background noise and the differences between human voices. They use a bandpass filter to screen out frequencies that fall outside the human vocal range. To determine which letter was spoken, the PIC32 collects the first 256 and last 256 samples, stores them in two arrays, and performs an FFT on the first set. The second set of samples undergoes a Mel transformation, which helps the PIC assess the sample logarithmically. Finally, the system determines whether it should show a new letter at the same size, a new letter at a smaller size, or end the exam.
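To make the "logarithmically" part concrete, here is a minimal sketch of the commonly used Hz-to-mel mapping. The write-up doesn't say which mel variant or how many bands the students used, so the formula choice, the helper names, and the 8-band split are assumptions for illustration only. The 300 Hz to 3400 Hz limits are the speech range the project documentation cites.

```python
import math


def hz_to_mel(freq_hz):
    """Common mel-scale formula: roughly linear below 1 kHz, log above."""
    return 2595.0 * math.log10(1.0 + freq_hz / 700.0)


def mel_to_hz(mel):
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)


def mel_band_edges(low_hz, high_hz, n_bands):
    """Edges (in Hz) of n_bands bands that are equally wide in mel.

    Hypothetical helper: equal steps in mel turn into wider and wider
    steps in Hz, so high frequencies are grouped more coarsely.
    """
    lo, hi = hz_to_mel(low_hz), hz_to_mel(high_hz)
    step = (hi - lo) / n_bands
    return [mel_to_hz(lo + i * step) for i in range(n_bands + 1)]


if __name__ == "__main__":
    edges = mel_band_edges(300.0, 3400.0, 8)  # 8 bands is an arbitrary choice
    for lo_edge, hi_edge in zip(edges, edges[1:]):
        print(f"{lo_edge:7.1f} Hz to {hi_edge:7.1f} Hz "
              f"(width {hi_edge - lo_edge:6.1f} Hz)")
```

The printed band widths grow toward the top of the range, which is the point of the transformation: it spends more resolution on the low frequencies where speech differences are easier to hear.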

While this is not meant to replace eye exams done by certified professionals, it is an interesting project that is true to the principles of the Snellen eye chart. The only thing that might make this better is an e-ink display to make the letters crisp. We’d like to see Snellen’s tumbling E chart implemented as well for children who don’t yet know the alphabet, although that would probably require a vastly different input method. Be sure to check out the demonstration video after the break.

Don’t know who [Bruce Land] is? Of course he’s an esteemed Senior Lecturer at Cornell University. But he’s also extremely active on Hackaday.io, has many great embedded engineering lectures you can watch free-of-charge, and every year we look forward to seeing the projects — like this one — dreamed and realized by his students. Do you have final projects of your own to show off? Don’t be shy about sending in a tip!

Not a voice expert by any means, but this assumption they make seems rather inaccurate:

“The range of spoken frequencies is 300 Hz to 3400 Hz. This means we only need to worry about frequencies in that range, so we can filter out the rest.”

From what I’ve seen, the real range of human speech is more like 100Hz to 4000Hz. Depending on the source you look at, the low end can get down to about 65Hz and the high end as high as 5000Hz. Even after they say the range is 300-3400, they set their bandpass filter to operate between 324Hz and 3058Hz, reducing the range even further.

I believe the 300-3400 range cited is what is used for telephony purposes, where the goal is to provide a perception of normal speech, not to accurately capture the spoken words.

Go look at a quality microphone used for spoken words and you’ll see frequency ranges way, way outside the 300-3400 range listed. Perhaps expanding the bandpass range would improve the speech recognition.

The system obviously needs only legible speech, so it’s unnecessary to include frequencies outside that range. (A microphone made for vocal recording ideally has a flat frequency response from 20 Hz to 20 kHz, but in recording you need it to capture tonality, timbre, harmonics, etc., all of which contribute to the musicality of the piece.)

“We’d like to see Snellen’s tumbling E chart implemented as well for children who don’t yet know the alphabet, although that would probably require a vastly different input method”

That would actually be much easier, you could use a joystick for input.

Actually, the preferred chart for kids is “HVOT”: the four optotypes H, V, O, and T are shown in random order, and the child points to the matching one on a card held in their hands. This would be easily implemented using a 4-input device, or perhaps a smartphone or tablet. The illiterate (or, the more PC name, “tumbling”) E could be done using gesture recognition (like a Kinect), since patients already naturally tend to “swipe” their hand in the direction of the open E.

What I think is more surprising is why we still determine eye problems with a method that was devised over 150 years ago.

How hard would it be to create an app to do the same with (structured?) light and the camera of a mobile phone?