Among the many facets of modern technology, few have evolved faster or more radically than the computer. In less than a century its very nature has changed significantly: today’s smartphones easily outperform desktop computers of the past, machines which themselves were thousands of times more powerful than the room-sized behemoths that ushered in the age of digital computing. The technology has developed so rapidly that an individual who’s now making their living developing iPhone applications could very well have started their career working with stacks of punch cards.

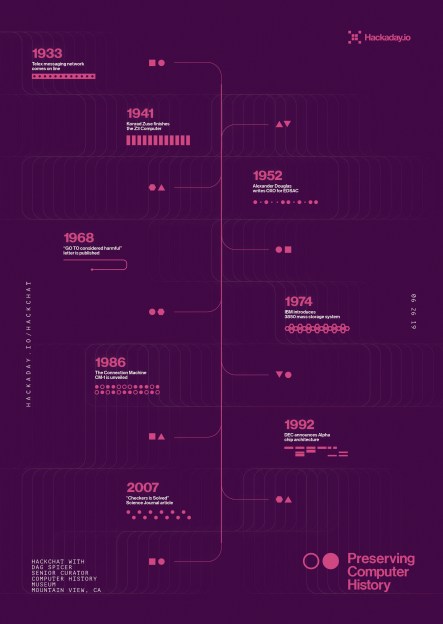

With things moving so quickly, it can be difficult to determine what’s worth holding onto from a historical perspective. Will last year’s Chromebook one day be a museum piece? What about those old Lotus 1-2-3 floppies you’ve got in the garage? Deciding what artifacts are worth preserving in such a fast-moving field is just one of the challenges faced by Dag Spicer, the Senior Curator at the Computer History Museum (CHM) in Mountain View, California. Dag stopped by the Hack Chat back in June of 2019 to talk about the role of the CHM and other institutions like it in storing and protecting computing history for future generations.

To answer that most pressing question, what’s worth saving from the landfill, Dag says the CHM often follows what they call the “Ten Year Rule” before making a decision. That is to say, at least a decade should have gone by before a decision can be made about a particular artifact. They reason that’s long enough for hindsight to determine if the piece in question made a lasting impression on the computing world or not. Note that such an impression doesn’t always have to be positive; pieces that the CHM deems “Interesting Failures” also find their way into the collection, as well as hardware which became important due to patent litigation.

Of course, there are times when this rule is sidestepped. Dag points to the release of the iPod and iPhone as a prime example. It was clear that one way or another Apple’s bold gambit was going to get recorded in the annals of computing history, so these gadgets were fast-tracked into the collection. Looking back on this decision in 2022, it’s clear they made the right call. When asked in the Chat if he had any thoughts on contemporary hardware that could have a similar impact on the computing world, Dag pointed to Artificial Intelligence accelerators like Google’s Tensor Processing Unit.

In addition to the hardware itself, the CHM also maintains a collection of ephemera that serves to capture some of the institutional memory of the era. Notebooks from the R&D labs of Fairchild Semiconductor, or handwritten documents from Intel luminary Andrew Grove bring a human touch to a collection of big iron and beige boxes. These primary sources are especially valuable for those looking to research early semiconductor or computer development, a task that several in the Chat said staff from the Computer History Museum had personally assisted them with.

Towards the end of the Chat, a user asks why organizations like the CHM go through the considerable expense of keeping all these relics in climate-controlled storage when we have the ability to photograph them in high definition, produce schematics of their internals, and emulate their functionality on far more capable systems. While Dag admits that emulation is probably the way to go if you’re only worried about the software side of things, he believes that images and diagrams simply aren’t enough to capture the true essence of these machines.

Quoting the words of early Digital Equipment Corporation engineer Gordon Bell, Dag says these computers are “beautiful sculptures” that “reflect the times of their creation” in a way that can’t easily be replicated. They represent not just the technological state-of-the-art but also the cultural milieu in which they were developed, with each and every design decision taking into account a wide array of variables ranging from contemporary aesthetics to material availability.

While 3D scans of a computer’s case and digital facsimiles of its internal components can serve to preserve some element of the engineering that went into these computers, they will never be able to capture the experience of seeing the real thing sitting in front of you. Any school child can tell you what the Mona Lisa looks like, but that doesn’t stop millions of people from waiting in line each year to see it at the Louvre.

The Hack Chat is a weekly online chat session hosted by leading experts from all corners of the hardware hacking universe. It’s a great way for hackers to connect in a fun and informal way, but if you can’t make it live, these overview posts as well as the transcripts posted to Hackaday.io make sure you don’t miss out.

You are correct, any museum containing technology should be closed and their inventory scrapped. Let’s start with the Smithsonian National Air and Space Museum.

/s ?

I assume so ;-)

I’d hoped it would be obvious!

The athletics are why people want to see the actual artifact.

Who can look at a PDP-11 and say it was built at any time but the 70s? The colors and bezel shapes matched the era so obviously.

Ha ha – I guess you mean the aesthetics? I immediately thought of a row of glass display cabinets with different frozen athletes, so people could see the “actual artifacts” – a discus thrower with the discus about to leave his fingers, a sprinter just about to touch the finishing tape, one cabinet filled with water, with Chad le Clos about to touch the wall.

Back to the debate – They say that people can either read maps, or they can’t. If they don’t grok it in the first five minutes, usually no amount of explaining about trying to match landmarks with what you see on the paper, etc. etc. helps. You can’t explain to someone how to actually read a real map, unless they’re already able to. Guess it’s the same with why computer history is important. If you never programmed a CPU on a breadboard in assembly language, or tried to build a circuit from logic gates that can multiply two numbers, or debugged a computer you built yourself (the bug could be in hardware or software), you won’t know why a 60 year old computer is worth keeping, and what it took to make it.

If you can’t read the map, it’s ok – just use your GPS. But after the battery dies, you better be hiking with someone that understands how and why.

Absolutely right and surprised any reader of hackaday would be against preservation of the past technology.

Why some people never want to learn from our past nor look outside the box of the current state of technology to broaden their perspective I will never understand…

The misuse of “your” is the LEAST wrong thing in this comment. Impressive.

I can think of several new things we’ve learned from old equipment in just the past few years.

Here’s a big one…..

https://www.zdnet.com/article/intel-has-a-secret-security-lab-full-of-old-hardware-to-hunt-for-bugs/

Those who ignore the perils of the past are destined to repeat them.

I can just imagine a younger person looking at something from the 1950’s that is still working and questioning why it is that they have to replace their iPhone so frequently.

Looking at old things always poses the question “is what we have today better or worse than what we had before?”

And it’s the context that’s important here. For example: is it better or worse for the planet?

By your logic, every piece of hardware in existence today will be a fart in 10 years’ time. It doesn’t magically morph into a fart, which means every piece of hardware is already a fart. Since that’s not true, neither is 1950s hardware.

Technology, like any cultural artifact, is a fart. Whether it’s a pyramid, or a painting or a shipwreck or a signed baseball. Or even that old rocket engine Jeff Bezos recovered off the ocean floor some years ago. Somehow somewhere those farts have value to “someone”.

For me the primary reason for preserving computing’s past is to expose the computing myths we currently surround ourselves with. And much of this is to do with, for want of a better phrase, a Promethean idea.

For example, how many times have you heard people say things like:

“There’s more computing power in a Casio digital watch than in the computers that took astronauts to the moon.”

And it’s only when you can point out that the AGC was a 16-bit computer with about 76kB of ROM+RAM that you can demonstrate it certainly wasn’t that primitive.
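As a rough check on that figure, here is a minimal sketch of the arithmetic. It assumes the commonly cited Block II AGC memory sizes (36,864 words of fixed memory and 2,048 words of erasable memory, with 16-bit words that include a parity bit); those numbers and the function name are assumptions for illustration, not something stated in the comment above.

```python
# Assumed Block II AGC memory sizes (commonly cited figures, not from the text above).
ROM_WORDS = 36_864   # fixed (core rope) memory, in words
RAM_WORDS = 2_048    # erasable (magnetic core) memory, in words
BITS_PER_WORD = 16   # 15 data bits plus 1 parity bit


def agc_memory_kib(rom_words=ROM_WORDS, ram_words=RAM_WORDS,
                   bits_per_word=BITS_PER_WORD):
    """Return total ROM+RAM size in KiB, counting parity bits as storage."""
    total_bits = (rom_words + ram_words) * bits_per_word
    return total_bits / 8 / 1024


print(f"AGC ROM+RAM: {agc_memory_kib():.0f} KiB")
```

Under those assumptions the total comes to 76 KiB, in line with the "about 76kB" quoted above. Counting only the 15 data bits per word would give a somewhat smaller number.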

Or when people claim that in the 1980s we never thought that we’d ever need more than 64kB or 640kB; or that we couldn’t conceive of a hard drive >524MB. And of course that’s rubbish – in the 1980s we were expecting computers to double in capability every year or 2; we knew that the micros of the near future were going to catch up with then current minicomputers and they had MB of RAM and hundreds of MBs of disk space.

Or the common misconception that before the 486DX / Pentium / AMD 64 (whatever computer you were introduced to first); all computers were primitive beyond usability. In reality, we could do many of the things we do now, just in a more arcane manner. For example, we could read and write documents; Hackaday would have been a BBS (or CompuServe location); teletext could deliver computerised news; linear video editing was possible (2x VHS units + a computer to cue edit decision lists); MIDI, sequencers and sampled sound was mainstream; video games; 3d graphics and animation were possible or easily conceivable. Development IDEs existed in the 1980s as did hypertext (e.g. Hypercard) and computer prices for home computers were low (as low as <$100 in some cases).

Basically, people then were no more stupid, and no less creative, than now, and that's what the museums demonstrate most clearly.

Slightly related question: how much compute power is there in a [sic – which?] Casio digital watch?

Thank you Julian, It’s easy to be smug about today’s tech, and forget the creative things that people did in the past with the tech of the time.

What’s going on here on Hack-A-Day?

“13 THOUGHTS ON CLASSIC CHAT: PRESERVING COMPUTER HISTORY”, but only 5 actual “thoughts” on the page. Some I saw on Saturday aren’t here anymore. There were some comments about farts, (including one of mine :), but they’re gone. Is there now a hidden “edit” or “delete” button on the page I didn’t see?