Many of the projects we serve up on Hackaday are freshly minted, hot off the press endeavors. But sometimes, just sometimes, we stumble across ideas from the past that are simply too neat to be passed over. This is one of those times — and the contraption in question is the “Kataka”, invented by [Jens Sorensen] and publicised on the cover of the Eureka magazine around 2003.

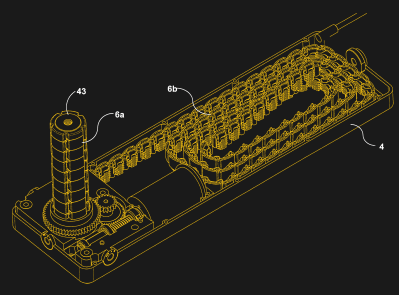

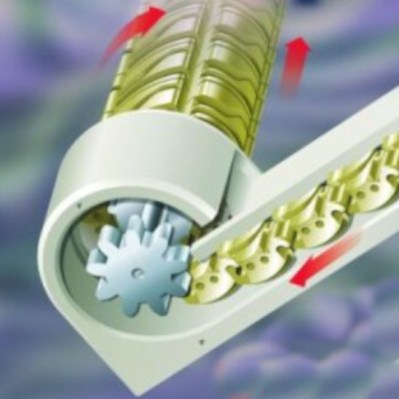

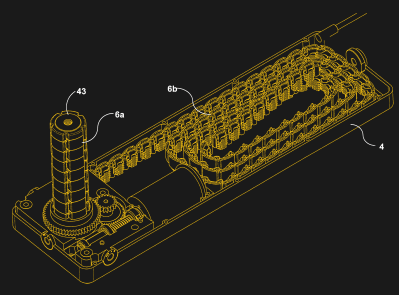

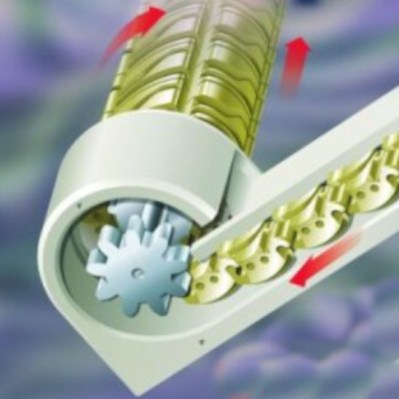

The device, trademarked as the Kataka but generically referred to as a Segmented Spindle, is a compact linear actuator that uses a novel belt arrangement to collapse to a very small thickness while growing to seemingly impossible dimensions when fully extended. This is the key advantage over conventional actuators, which usually retract into a housing at least as long as the piston.

It’s somewhat magical to watch the device in action, seeing the piston appear “out of nowhere”. Kataka’s YouTube channel is now sadly inactive, but it still hosts many videos of the device used in various scenarios, such as lifting chairs and cupboards. We’re impressed with the amount of load the device can support. When used in scissor lifts, it also offers the unique advantage of a flat force/torque curve.

Most records of the device online are roughly a decade old. Though numerous prototypes were made, and a patent was issued, it seems the mechanism never took off or saw mainstream use. We wonder if, with more recognition and the advent of 3D printing, we might see the design crop up in the odd maker project.

That’s right, 3D printed linear actuators aren’t as bad as you might imagine. They’re easy to make, with numerous designs available, and can carry more load than you might think. That said, if you’re building, say, your own flight simulator, you might have to cook up something more hefty.

Many thanks to [Keith] for the tip. We loved reading about this one!
