The OAK-D is an open-source, full-color depth-sensing camera with embedded AI capabilities, and there is now a crowdfunding campaign for a newer, lighter version called the OAK-D Lite. The new model does everything the previous one could do, combining machine vision with stereo depth sensing and the ability to run complex image-processing tasks entirely on-board, so the host doesn't have to carry that processing load.

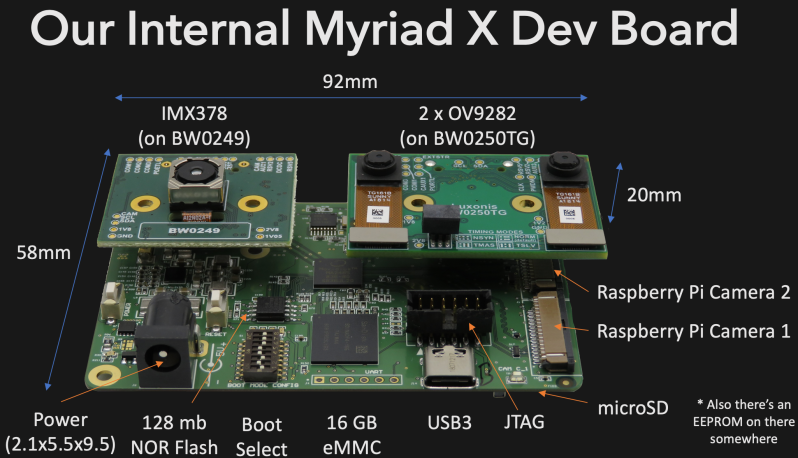

The OAK-D Lite camera is actually several elements in one package: a full-color 4K camera, two greyscale cameras for stereo depth sensing, and onboard AI machine vision processing with Intel’s Movidius Myriad X processor. Tying it all together is an open-source software platform called DepthAI that wraps the camera’s functions and capabilities into a unified whole.
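For readers wondering how two greyscale cameras yield depth, the textbook relation for a rectified stereo pair is Z = f · B / d: distance equals focal length (in pixels) times the spacing between the cameras, divided by the disparity (how many pixels the same point shifts between the left and right images). The sketch below is a minimal illustration of that general relation, not DepthAI's implementation; the focal length and 7.5 cm baseline are assumed, hypothetical values rather than OAK-D Lite calibration data.

```python
def depth_from_disparity(disparity_px: float, focal_length_px: float, baseline_m: float) -> float:
    """Return distance (metres) to a point seen by a rectified stereo pair.

    Uses the pinhole stereo relation Z = f * B / d, where f is the focal
    length in pixels, B is the distance between the two cameras, and d is
    the horizontal pixel offset (disparity) of the same point in both images.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive; zero means the point is at infinity")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical numbers for illustration only, not real calibration data.
FOCAL_PX = 450.0    # assumed focal length of the mono cameras, in pixels
BASELINE_M = 0.075  # assumed 7.5 cm spacing between the two cameras

for d in (10, 30, 90):
    print(f"disparity {d:>3} px -> {depth_from_disparity(d, FOCAL_PX, BASELINE_M):.3f} m")
```

Because depth is inversely proportional to disparity, distant objects produce small disparities, and a one-pixel matching error matters much more at long range than up close.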

The goal is to give embedded systems access to human-like visual perception in real time, which at its core means detecting objects and identifying where they are in physical space. It does this with a combination of traditional machine vision functions (like edge detection and perspective correction), depth sensing, and the ability to plug in pre-trained convolutional neural network (CNN) models for complex tasks like object classification, pose estimation, or hand tracking.
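Combining a 2D detection with a depth reading is what turns "there is a person in the image" into "there is a person 2 m ahead and half a metre to the right." A minimal sketch of the general pinhole back-projection idea follows; it assumes the bounding-box centre is representative of the object and that a single depth value is known for it. The `Intrinsics` values are hypothetical, and DepthAI's own spatial calculations may work differently (for example, by averaging depth over a region).

```python
from dataclasses import dataclass


@dataclass
class Intrinsics:
    """Pinhole camera parameters, all in pixels (hypothetical values below)."""
    fx: float
    fy: float
    cx: float
    cy: float


def pixel_to_point(u: float, v: float, depth_m: float, k: Intrinsics) -> tuple[float, float, float]:
    """Back-project pixel (u, v) with known depth into camera-frame X, Y, Z in metres.

    Uses the usual pinhole convention: X points right, Y points down,
    Z points out of the lens.
    """
    x = (u - k.cx) * depth_m / k.fx
    y = (v - k.cy) * depth_m / k.fy
    return (x, y, depth_m)


def detection_to_point(box: tuple[float, float, float, float], depth_m: float, k: Intrinsics) -> tuple[float, float, float]:
    """Locate a detection by the centre of its bounding box (xmin, ymin, xmax, ymax)."""
    xmin, ymin, xmax, ymax = box
    return pixel_to_point((xmin + xmax) / 2, (ymin + ymax) / 2, depth_m, k)


k = Intrinsics(fx=450.0, fy=450.0, cx=320.0, cy=200.0)  # hypothetical 640x400 camera
print(detection_to_point((400, 150, 480, 250), 2.0, k))
```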

So how is it used? Practically speaking, the OAK-D Lite is a USB device intended to be plugged into a host computer (running just about any OS), and the team has put a lot of work into making it as easy to use as possible. With the help of a downloadable application, the hardware can be up and running with examples in about half a minute. Integrating the device into other projects or products can be done in Python with the help of the DepthAI SDK, which exposes the camera’s features with minimal code and configuration (and for more advanced users, there is also a full API for low-level access). Since the vision processing is all done on-board, even a Raspberry Pi Zero can be used effectively as a host.
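Some rough arithmetic shows why on-board processing makes such a modest host viable: a host that only receives results handles far less data than one that must ingest and analyze raw video itself. The scenario below is an assumption chosen for illustration (uncompressed 8-bit 1080p RGB at 30 fps versus ten detections per frame, each a hypothetical 28-byte record); it does not describe DepthAI's actual message formats or what the device really sends over USB.

```python
def raw_video_bytes_per_s(width: int, height: int, channels: int, fps: float) -> float:
    """Bytes per second for uncompressed 8-bit video."""
    return width * height * channels * fps


def detections_bytes_per_s(detections_per_frame: int, bytes_per_detection: int, fps: float) -> float:
    """Bytes per second for a stream of compact detection records."""
    return detections_per_frame * bytes_per_detection * fps


# Assumed scenario: 1080p RGB at 30 fps versus 10 detections per frame,
# each a hypothetical record of 7 float32 values
# (label, confidence, xmin, ymin, xmax, ymax, depth) = 28 bytes.
raw = raw_video_bytes_per_s(1920, 1080, 3, 30)
meta = detections_bytes_per_s(10, 7 * 4, 30)

print(f"raw video:  {raw / 1e6:.1f} MB/s")
print(f"detections: {meta / 1e3:.1f} kB/s")
print(f"detections are roughly {raw / meta:,.0f}x smaller")
```

Under these assumptions the raw stream is about 186.6 MB/s while the detection stream is about 8.4 kB/s, a difference of over four orders of magnitude.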

There’s one more thing that improves the ease-of-use situation, and that’s the fact that support for the OAK-D Lite (as well as the previous OAK-D) has been added to a software suite called the Cortic Edge Platform (CEP). CEP is a block-based visual coding system that runs on a Raspberry Pi, and is aimed at anyone who wants to rapidly prototype with AI tools in a primarily visual interface, providing yet another way to glue a project together.

Earlier this year we saw the OAK-D used in a system to visually identify weeds and estimate biomass in agriculture, and it’s exciting to see a new model being released. If you’re interested, the OAK-D Lite is available at a considerable discount during the Kickstarter campaign.