While a punch card is perhaps the lowest-density storage medium available, it has some distinct advantages. As [Bitroller] points out in the write-up of his punch card project, if he were using stainless steel instead of PLA, his 3D-printed punch cards would likely outlast everything he owns and survive a five-alarm fire to boot. If you have 16 bytes you really, really don’t want to forget, or are willing to store your private key in a shoe box, this project might be of interest.

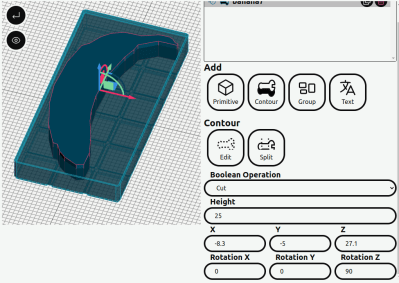

The nice part is that he’s built a handy Python script to generate printable files for the punch cards, which encode 16 bytes of data plus 4 bytes of Reed-Solomon error correction. That’s just enough for a password, and the error correction means up to two misread bytes can be corrected, even without knowing in advance which bytes are wrong.
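To make the 16 + 4 arithmetic concrete, here is a minimal pure-Python sketch of a Reed-Solomon RS(20,16) code. It is not [Bitroller]’s script: it assumes the common GF(2^8) field with primitive polynomial 0x11D and generator roots α^0 to α^3 (α = 2), choices that may not match his code, and the names `rs_encode`, `syndromes`, and `rs_decode` are hypothetical. Instead of the usual Berlekamp-Massey decoder, `rs_decode` simply tries every single error position and every pair of positions against the four syndromes, which is cheap for a 20-byte card.

```python
# Sketch of an RS(20,16) code: 16 data bytes + 4 parity bytes.
# Assumptions (not from [Bitroller]'s script): GF(2^8) with primitive
# polynomial 0x11D, generator roots a^0..a^3 where a = 2.
NSYM = 4
PRIM = 0x11D

# Exponent/log tables; EXP is doubled so gf_mul needs no modulo.
EXP = [0] * 510
LOG = [0] * 256
_v = 1
for _i in range(255):
    EXP[_i] = EXP[_i + 255] = _v
    LOG[_v] = _i
    _v <<= 1
    if _v & 0x100:
        _v ^= PRIM


def gf_mul(a, b):
    if a == 0 or b == 0:
        return 0
    return EXP[LOG[a] + LOG[b]]


def gf_div(a, b):
    # b must be nonzero
    if a == 0:
        return 0
    return EXP[(LOG[a] - LOG[b]) % 255]


def poly_eval(poly, x):
    # Horner's rule; poly[0] is the highest-degree coefficient.
    y = 0
    for c in poly:
        y = gf_mul(y, x) ^ c
    return y


def generator(nsym=NSYM):
    # g(x) = (x + a^0)(x + a^1)...(x + a^(nsym-1)); minus is XOR in GF(2^8).
    g = [1]
    for i in range(nsym):
        nxt = g + [0]
        for j, c in enumerate(g):
            nxt[j + 1] ^= gf_mul(c, EXP[i])
        g = nxt
    return g


def rs_encode(data, nsym=NSYM):
    # Systematic encoding: data followed by remainder of data * x^nsym / g(x).
    gen = generator(nsym)
    buf = list(data) + [0] * nsym
    for i in range(len(data)):
        coef = buf[i]
        if coef:
            for j in range(1, len(gen)):
                buf[i + j] ^= gf_mul(gen[j], coef)
    return bytes(data) + bytes(buf[len(data):])


def syndromes(code, nsym=NSYM):
    # All zero exactly when the received word is a valid codeword.
    return [poly_eval(code, EXP[k]) for k in range(nsym)]


def rs_decode(code, nsym=NSYM):
    # Correct up to 2 byte errors by exhaustive search over error positions.
    code = list(code)
    n = len(code)
    s = syndromes(code, nsym)
    if not any(s):
        return bytes(code[:n - nsym])

    def xpow(pos, k):
        # Error locator X = a^degree for the byte at index pos, raised to k.
        return EXP[((n - 1 - pos) * k) % 255]

    def consistent(errs):
        # Do these (position, magnitude) errors reproduce every syndrome?
        for k in range(nsym):
            acc = 0
            for pos, mag in errs:
                acc ^= gf_mul(mag, xpow(pos, k))
            if acc != s[k]:
                return False
        return True

    # A single error must have magnitude S0; pairs solve S0, S1 for both.
    trials = [[(p, s[0])] for p in range(n)] if s[0] else []
    for p in range(n):
        for q in range(p + 1, n):
            x1, x2 = xpow(p, 1), xpow(q, 1)
            e1 = gf_div(s[1] ^ gf_mul(s[0], x2), x1 ^ x2)
            e2 = s[0] ^ e1
            if e1 and e2:
                trials.append([(p, e1), (q, e2)])
    for errs in trials:
        if consistent(errs):
            for pos, mag in errs:
                code[pos] ^= mag
            return bytes(code[:n - nsym])
    raise ValueError("too many byte errors to correct")
```

Four parity bytes buy a two-error budget because each unknown bad byte costs two syndromes, one to find where it is and one to find what it should be. With three or more bad bytes, this decoder either raises or, occasionally, lands on the wrong codeword.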

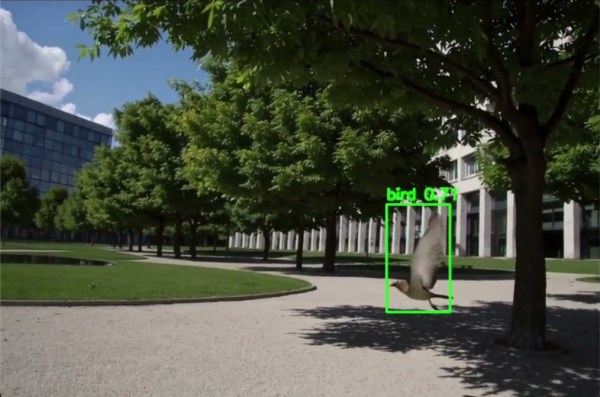

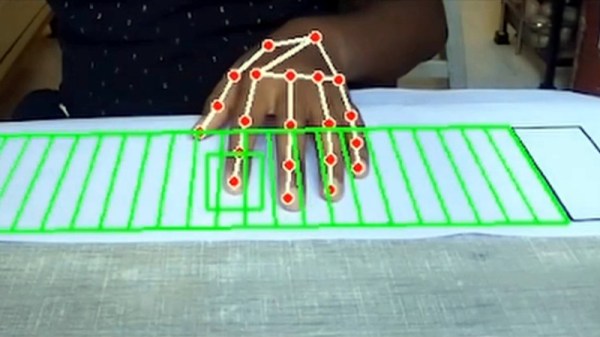

The reading is where this gets interesting. Again, [Bitroller] provides a handy script, but this one uses OpenCV to read the entire punch card at once from a webcam image, relying on the contrast between a black table and the light-colored PLA cards. It’s massively overkill and would have needed a supercomputer back when punch cards were a common I/O medium, but that’s what makes this a great hack.
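The final step of such a reader can be sketched without any dependencies. This is a hypothetical illustration, not [Bitroller]’s code: it assumes the card has already been located and warped to a top-down view (in OpenCV, a job for functions such as `cv2.findContours` and `cv2.warpPerspective`), and it assumes a made-up layout of 20 rows by 8 columns, one byte per row, most significant bit on the left. Because a hole lets the black table show through, a dark cell reads as 1. The image is a plain list of rows of 0 to 255 grayscale values, and `read_bits` and `bits_to_bytes` are hypothetical names.

```python
# Hypothetical layout: 20 rows x 8 columns, one byte per row, MSB on the left.
ROWS, COLS = 20, 8


def read_bits(gray, rows=ROWS, cols=COLS, threshold=128):
    """Return a rows x cols grid of bits from a top-down grayscale card image.

    Assumes the card already fills the image; a hole shows the black table,
    so a dark cell (mean below threshold) is read as 1.
    """
    h, w = len(gray), len(gray[0])
    cell_h, cell_w = h / rows, w / cols
    bits = []
    for r in range(rows):
        row_bits = []
        for c in range(cols):
            # Average only the central half of each cell to stay clear of edges.
            y0, y1 = int((r + 0.25) * cell_h), int((r + 0.75) * cell_h)
            x0, x1 = int((c + 0.25) * cell_w), int((c + 0.75) * cell_w)
            y1, x1 = max(y1, y0 + 1), max(x1, x0 + 1)
            vals = [gray[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            row_bits.append(1 if sum(vals) / len(vals) < threshold else 0)
        bits.append(row_bits)
    return bits


def bits_to_bytes(bits):
    """Pack each row of bits (MSB first) into one byte."""
    out = bytearray()
    for row in bits:
        value = 0
        for bit in row:
            value = (value << 1) | bit
        out.append(value)
    return bytes(out)
```

A fixed threshold of 128 is the simplest choice; under uneven webcam lighting, Otsu’s method (`cv2.threshold` with the `cv2.THRESH_OTSU` flag) would likely be more robust. The 20 recovered bytes would then go through the Reed-Solomon decoder to fix any misread cells.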

We only have one quibble: if you use additive manufacturing, can you still call it a punch card? Nothing was punched out, after all.

If you think punch cards are totally irrelevant in the modern day, well, you might be right, but that doesn’t stop us from playing with them. If punch cards make you think of Big Iron in the early days of computing, maybe think further back: they were used for everything from Jacquard looms to the original MIDI.