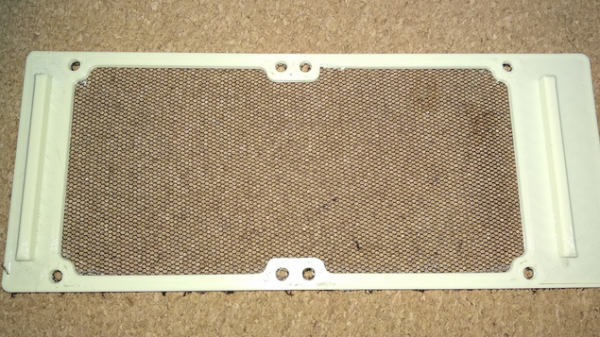

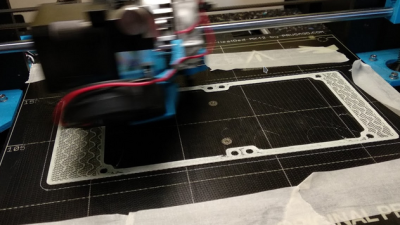

This custom fan filter created by [Kolomanschell] is a clever application of a technique used to create wearable 3D printed “fabrics”, which consist of printed objects embedded into a fine mesh like a nylon weave. The procedure itself is unchanged, but in this case it’s done not to embed 3D printed objects into a mesh, but to embed a mesh into a 3D printed object.

The basic idea is that a 3D print is started, then paused after a few layers. A fine fabric mesh (like tulle, commonly used for bridal veils) is then stretched taut across the print bed, and printing is resumed. If all goes well, the result is 3D printed elements embedded into a flexible, wearable sheet.

The beauty of this technique is that the 3D printer doesn’t need to be told a thing, because other than a pause and resume, the 3D print is nothing out of the ordinary. You don’t need to be shy about turning up the speed or layer height settings either, making this a relatively quick print. Cheap and accessible, this technique has gotten some traction in the costume and cosplay scene.
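If your slicer or printer doesn't offer a convenient pause-at-layer option, the pause can also be patched into the G-code by hand. Below is a minimal sketch of that idea, not anything taken from [Kolomanschell]'s project. It assumes the slicer marks layers with Cura-style `;LAYER:N` comments and that the firmware treats `M0` as a "stop and wait for the user" command (as Marlin does); other setups may need a different marker or a command such as `M600`. The `insert_pause` helper and the sample G-code are purely illustrative.

```python
import re

# Assumption: Cura-style layer comments such as ";LAYER:3".
LAYER_MARKER = re.compile(r"^;LAYER:(\d+)\s*$")


def insert_pause(gcode_lines, pause_layer, pause_command="M0"):
    """Return a new list of lines with pause_command placed just before
    the marker for pause_layer. If no such marker exists, the lines come
    back unchanged."""
    patched = []
    inserted = False
    for line in gcode_lines:
        match = LAYER_MARKER.match(line.strip())
        if match and not inserted and int(match.group(1)) == pause_layer:
            # Pause before the layer starts, so the mesh can be laid
            # over everything printed so far.
            patched.append(pause_command)
            inserted = True
        patched.append(line)
    return patched


# Tiny made-up G-code snippet, just to show the effect.
sample = [
    ";LAYER:0",
    "G1 X10 Y10 E1",
    ";LAYER:1",
    "G1 X20 Y10 E2",
    ";LAYER:2",
    "G1 X30 Y10 E3",
]

patched = insert_pause(sample, pause_layer=2)
print("\n".join(patched))
```

Here the pause lands right before layer 2 begins, which is the moment to stretch the tulle over the bed and then resume. Whether the nozzle parks itself out of the way during the pause depends on the firmware, so check that before reaching in with the fabric.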

As [Kolomanschell] shows, the concept works great for creating bespoke filters, and the final result looks very professional. Don’t let the lack of a 3D model for your particular fan stop you from trying it for yourself; we’ve already shared a great resource for customizable fan covers. So if you’ve got a 3D printer and a bit of tulle, you have everything you need for a quick afternoon project.