[Aldric Negrier] wanted to make 3D-scanning a person streamlined and simple. To that end, he created this voice-controlled 3D-scanning rig.

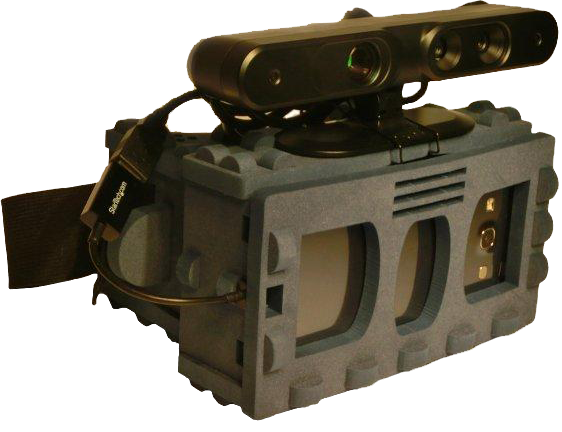

[Aldric] used a variety of hacking skills to make this project, and his thorough Instructable illustrates this nicely. The build combines CNC milling, Arduino programming, and 3D printing. The base and the large toothed gear are made of plywood, and a 12″ Lazy Susan bearing attached to the gear lets it rotate smoothly. To automate the rig, he attached a 12V DC geared motor to a smaller 3D-printed gear and positioned it on the base; when the motor is on, the smaller gear’s teeth take the larger gear for a spin. The motor is driven by a custom dual H-bridge motor driver made by a friend, which is connected to an Arduino Nano. The Nano is also connected to a Bluetooth module and an ultrasonic range finder. When the range finder detects an object 1–35 cm away on the rig for 3 seconds, the motor starts to spin, and it stops when the object is no longer detected. A typical scan takes about 60 seconds.
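The presence-triggered start and stop described above can be sketched as a small state machine. This is a Python simulation of the logic only, not [Aldric]'s Arduino firmware: the class name `PresenceTrigger`, the `update` method, and the choice to reset the timer on any out-of-range reading are assumptions made for illustration. Only the 1–35 cm window and the 3-second hold come from the write-up.

```python
from dataclasses import dataclass
from typing import Optional

# Values taken from the article; everything else here is hypothetical.
MIN_CM = 1.0
MAX_CM = 35.0
HOLD_SECONDS = 3.0


@dataclass
class PresenceTrigger:
    """Decides whether the motor should run from timestamped range readings."""

    detected_since: Optional[float] = None  # time the object first came into range
    motor_on: bool = False

    def update(self, distance_cm: Optional[float], now: float) -> bool:
        """Feed one reading (None means no echo) and return the new motor state."""
        in_range = distance_cm is not None and MIN_CM <= distance_cm <= MAX_CM
        if not in_range:
            # Object gone: forget the timer and stop the motor (assumed behavior).
            self.detected_since = None
            self.motor_on = False
        else:
            if self.detected_since is None:
                self.detected_since = now
            # Start only after the object has stayed in range long enough.
            if now - self.detected_since >= HOLD_SECONDS:
                self.motor_on = True
        return self.motor_on
```

Feeding it readings of 20 cm at t = 1 s and t = 2 s leaves the motor off, a reading of 21 cm at t = 4 s switches it on, and a missing echo at t = 5 s switches it off again.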

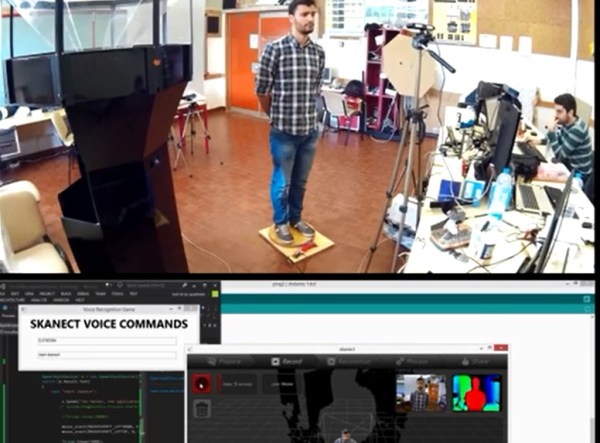

This alone would have been a great project, but [Aldric] did not stop there. He wanted to be able to step on the rig and issue commands while being scanned. That makes sense if you want to scan yourself: get on the rig, assume the desired position, and then initiate the scan. He used the Windows speech recognition SDK to develop an application that issues commands via Bluetooth to Skanect, a 3D-scanning program. The commands are as simple as saying “Start Skanect.” You can also tell the motor to switch on or off and change its speed or direction without breaking form. [Aldric] used an Asus Xtion as the 3D scanner, but a Kinect will also work. Afterwards, he smoothed his scans using MeshMixer, a program featured in previous hacks.
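One common way to wire recognized phrases to motor actions is a lookup table that turns each phrase into a short command for the serial link. The write-up does not list the phrases or the bytes [Aldric]'s application sends, so every entry in `COMMANDS` below, and the `encode_command` helper itself, is a hypothetical placeholder rather than his actual protocol.

```python
from typing import Optional

# Hypothetical phrase -> command-byte table. The real phrases and wire
# format are not given in the article.
COMMANDS = {
    "motor on": b"1",
    "motor off": b"0",
    "faster": b"+",
    "slower": b"-",
    "reverse": b"r",
}


def encode_command(phrase: str) -> Optional[bytes]:
    """Normalize a recognized phrase and return its command byte, or None."""
    # Lowercase and collapse whitespace so "  Motor   ON " matches "motor on".
    key = " ".join(phrase.lower().split())
    return COMMANDS.get(key)
```

Returning `None` for unknown phrases lets the caller ignore misrecognized speech instead of sending a stray byte to the rig.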

Check out the videos of the rig after the break. Voice commands are difficult to hear due to the background music in one of the videos, but if you listen carefully, you can hear them. You can also see more of [Aldric’s] projects here or on this YouTube channel.
