The World Health Organization estimates that around 90% of the 285 million or so visually impaired people worldwide live in low-income situations with little or no access to assistive technology. For his Hackaday Prize entry, [Tiendo] has created a simple and easily reproducible way-finding device for people with reduced vision: a bracelet that detects nearby objects and alerts the wearer to them.

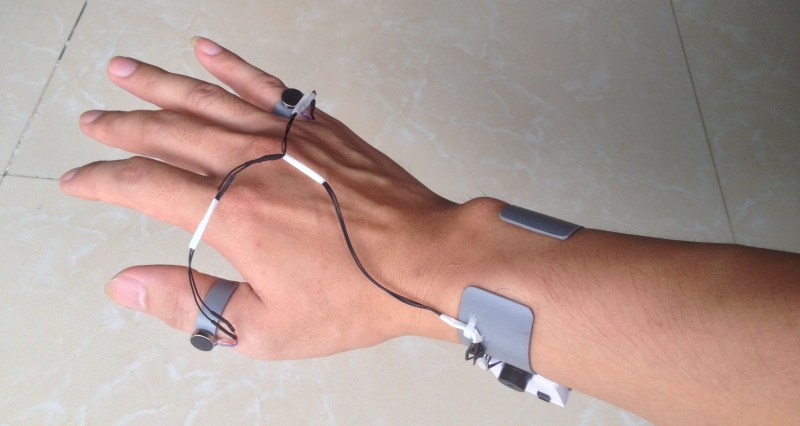

It does its job using an ultrasonic distance sensor and an Arduino Pro Mini. The bracelet has two feedback modes: audio and haptic. In audio mode, the bracelet will begin to beep when an object is within 2.5 meters. And it behaves the way you’d expect—get closer to the object and the beeping increases; back away and it decreases. Haptic mode involves two tiny vibrating disk motors attached to small PVC cuffs that fit on the thumb and pinky. These motors will buzz differently based on the person’s proximity to a given object. If an object is 1 to 2.5 meters away, the pinky motor will vibrate. Closer than that, and it switches over to the thumb motor.
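The feedback rules described above can be sketched in plain C++. This is only an illustration under stated assumptions, not [Tiendo]'s actual firmware. The 1 m and 2.5 m thresholds come from the write-up. Everything else is assumed: the function names, the 343 m/s speed of sound, a sensor that reports a round-trip echo time in microseconds, the 100 to 1000 ms beep intervals, and the reading of "the beeping increases" as a faster beep rate.

```cpp
#include <cstdint>

// Thresholds taken from the article; all other constants are illustrative assumptions.
constexpr double kSpeedOfSoundMps = 343.0;  // assumed: dry air at roughly 20 C
constexpr double kMaxRangeM = 2.5;          // beyond this, no feedback
constexpr double kNearRangeM = 1.0;         // below this, switch to the thumb motor

enum class Motor { None, Pinky, Thumb };

// Convert a round-trip echo time in microseconds to a one-way distance in meters.
double echoMicrosToMeters(std::uint32_t echoMicros) {
    return (echoMicros * 1e-6) * kSpeedOfSoundMps / 2.0;
}

// Haptic mode: pinky motor from 1 m to 2.5 m, thumb motor when closer than 1 m.
Motor selectMotor(double distanceM) {
    if (distanceM > kMaxRangeM) return Motor::None;
    if (distanceM >= kNearRangeM) return Motor::Pinky;
    return Motor::Thumb;
}

// Audio mode: pause between beeps in milliseconds, or 0 for silence.
// Hypothetical linear mapping: 100 ms at contact, 1000 ms at the 2.5 m limit.
int beepIntervalMs(double distanceM) {
    if (distanceM > kMaxRangeM) return 0;
    if (distanceM < 0.0) distanceM = 0.0;
    return static_cast<int>(100.0 + (distanceM / kMaxRangeM) * 900.0);
}
```

On an Arduino, the echo time would typically come from something like `pulseIn()` on the sensor's echo pin, and the selected motor or beep interval would then drive an output pin. The pin assignments are not given in the write-up, so they are left out here.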

To add to the thriftiness of this project, [Tiendo] re-used other objects where he could. The base of the bracelet is a cuff made from PVC. The nylon chin strap and plastic buckle from a broken bike helmet make it adjustable to fit any wrist. To keep the PVC cuff from chafing, he slipped small pieces from an old pair of socks on to the sides.

It’s easy to see why this project is a finalist in our Best Product contest. It’s a simple, low-cost assistive device made from readily available and recycled materials, and it can be built by anyone who knows a little bit about electronics. Add in the fact that it’s lightweight and frees up both hands, and you have a great product that can help a lot of people. Watch it beep and buzz after the break.

Was this tested with a blind person? How is it better than a traditional white cane? I have a white cane, and I learned to use it when I was 5 (my mom was afraid I’d go blind because of glaucoma, so she wanted to prepare me). With a white cane one can detect obstacles in front and on the sides; it shows where the doors are while going down a corridor or sidewalk, and it detects steps going up and down, streetlamps, curbs, fences or low walls, road signs, etc., and other people. Can this sonar do the same, and is it cheaper than a piece of pipe painted white?

We did something similar, focused not on object detection but on environment information (at a hospital, supermarket, etc.):

http://jamsa-project.github.io/

Video is in Spanish only, sorry

I would think this would supplement and complement a piece of pipe painted white, not replace it.

Absolutely. This is not a substitute but an improvement.

Indeed, what users need is a good interface for their smartphones that doesn’t interfere with their other senses in traffic.

I have seen guys counting menu clicks in public trying to navigate their phone, and text-to-speech is not user friendly at all if you can’t hear it over the traffic.

Does anyone know how far the 3D-printed braille display people have progressed?

While I applaud the creator for going down this route, I have to say be careful, as lots of other people have done similar implementations and failed to gain acceptance or to have as large an impact as they had hoped for. There is at least one engineering capstone project using sensors to help the blind almost every year at most universities. It may be worthwhile hitting them up and asking why their implementation did not readily appeal to, or seem worthwhile to, their target population.

https://www.fastcompany.com/3038963/can-this-bracelet-help-the-blind-better-understand-their-surroundings

https://www.usnews.com/news/stem-solutions/articles/2015/01/06/example-of-campus-innovations-bat-signal-for-blind

I’m not sure how old this project is, but the new time-of-flight laser modules could potentially replace the bulky ultrasonic sensor. They would be smaller, draw less power, and be more directionally accurate.

However, their range may be slightly shorter.

ToF sensors cap out at around 1.2 meters, which is fine for small device navigation but a tad short for people or flying things.

There are dozens of projects to assist visually impaired people. The simplest ones use ultrasonic sensors but lack precision. The accurate ones use image recognition, which is far more complex and needs a subscription to specific services, such as those from Microsoft and IBM. You can also use Matlab or OpenCV to do that. The link below shows a real example from Microsoft and all its capabilities: https://youtu.be/R2mC-NUAmMk