At one point, the Motorola 6809 seemed like a great CPU. At the time it was a modern 8-bit CPU and was capable of hosting position-independent code and re-entrant code. Sure, it was pricey back in 1981 (about four times the price of a Z80), but it did boast many features. However, the price probably prevented it from being in more computers. There were a handful, including the Radio Shack Color Computer, but for the most part, the cheaper Z80 and the even cheaper 6502 ruled the roost. Thanks to the [turbo9team], however, you can now host one of these CPUs — maybe even a better version — in an FPGA using Verilog.

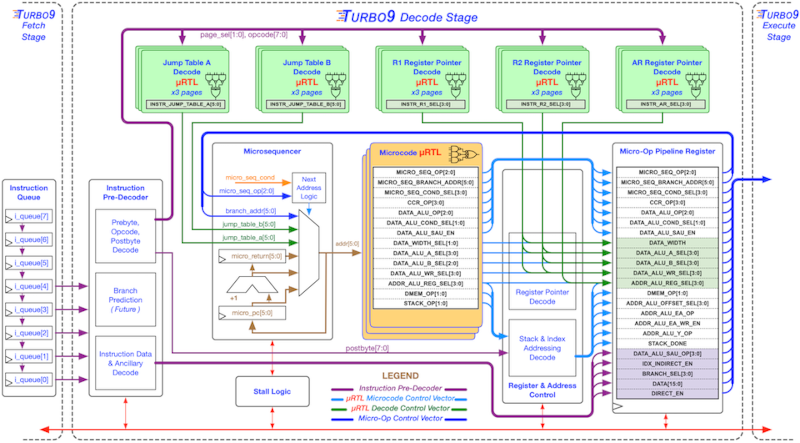

The CPU may be old-fashioned on the outside, but inside, it is a pipeline architecture with a standard Wishbone bus to incorporate other cores to add peripherals. The GitHub page explains that while the 6809 is technically CISC, it’s so simple that it’s possible to translate to a RISC-like architecture internally. There are also a few enhanced instructions not present on the 6809.
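To make the CISC-to-RISC idea a little more concrete, here is a minimal Python sketch of how one 6809 instruction with an auto-increment addressing mode, `ADDA ,X+`, could be split into simpler register-transfer steps. The micro-op names (`load8`, `add8`, `inc16`), the `Cpu` class, and the decode table are made up for illustration and are not taken from the Turbo9 source. Condition-code flags are ignored to keep it short.

```python
from dataclasses import dataclass, field


@dataclass
class Cpu:
    """Tiny register model: 8-bit A, 16-bit X, a scratch register, 64 KB of memory."""
    a: int = 0
    x: int = 0
    tmp: int = 0
    mem: bytearray = field(default_factory=lambda: bytearray(65536))


# Hypothetical decode table: one CISC instruction -> a list of simple micro-ops.
# This is an illustration only, not the Turbo9's real micro-op set.
MICRO_OPS = {
    "ADDA ,X+": [
        ("load8", "tmp", "x"),  # tmp <- mem[X]
        ("add8", "a", "tmp"),   # A   <- (A + tmp) mod 256
        ("inc16", "x"),         # X   <- (X + 1) mod 65536
    ],
}


def run(cpu: Cpu, instruction: str) -> Cpu:
    """Execute one instruction by stepping through its micro-ops in order."""
    for op, *args in MICRO_OPS[instruction]:
        if op == "load8":
            dst, addr_reg = args
            setattr(cpu, dst, cpu.mem[getattr(cpu, addr_reg)])
        elif op == "add8":
            dst, src = args
            setattr(cpu, dst, (getattr(cpu, dst) + getattr(cpu, src)) & 0xFF)
        elif op == "inc16":
            (reg,) = args
            setattr(cpu, reg, (getattr(cpu, reg) + 1) & 0xFFFF)
        else:
            raise ValueError(f"unknown micro-op: {op}")
    return cpu


cpu = Cpu(a=5, x=0x1000)
cpu.mem[0x1000] = 7
run(cpu, "ADDA ,X+")
print(cpu.a, hex(cpu.x))  # prints: 12 0x1001
```

Each micro-op touches at most one memory location or one register pair, which is the kind of uniform step a pipeline can schedule easily.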

In addition to the source code, you’ll find a thesis and some presentations about the CPU in the repository. While the 6809 might not be the most modern choice, it has the advantage of having plenty of development tools available and is easy enough to learn. Code for the 6800 should run on it, too.

Even using through-hole parts, you can make a 6809 computer fit in a tiny space. You can also break out a breadboard.

I don’t think the MPU price was a major factor. If it was, I don’t think it would have been used in the CoCo, since this was a budget computer, competing with the likes of the VIC-20 and Atari 400. The main issue was that the 6809 was one of the last 8-bit architectures, and 16/32-bit chips were already taking over. Nobody was interested in new 8-bit machines.

This is likely the best answer. I remember everyone was tiring of the Z80, 8080, 6502, etc., and wanted at least a 68008, if not a full 68000, in their computer. It wasn’t so much a processing limitation (although being about 5 times slower than a 68000, clock adjusted, didn’t help). It was mostly the memory addressing capabilities that had people’s attention. The ability to run programs and OSs which needed a megabyte or more of memory (woot!) was starting to put real pressure on the CPU designers. However, the 6809 was a great solution for several memory- and price-constrained design targets, specifically the new value-oriented entry-level computers (Radio Shack CoCo, Dragon series) and especially arcade video games. Williams in particular used the 6809 to great effect in its Defender, Stargate, Joust and Robotron arcade games.

Another factor was the increasing use of sound and graphics. 64k was just too great a limitation when you really start pushing pixels and audio streams through something like a real OS. Not impossible, just a PITA. That’s why the next generation of PCs tended to use the 68000, notably the Amiga 1000, the Macintosh and the Atari ST.

But the 6809 was really a beautiful and elegant design. One of the most efficient general purpose CPUs for anything, but especially for FORTH.

I don’t recall any 68000 chips running 4x faster in reverse.

1x, less 5x = -4x.

Do you mean that the 6809 had 1/5 the speed?

In my memory, the 6809 was “nice but slow”.

Clock for clock about twice as fast as a 6502 in terms of processing power. So probably your memory is a little faulty…

I think a big PR mistake for the 6800 and 6809 was labeling the chip clock speed by the crystal frequency and not by the internal instruction speed.

The clock chip for a 68xx at 2MHz was turned into 4MHz instruction stepping.

Intel specced its clock as 4MHz and also used that as the internal instruction speed.

Higher numbers sold better to people who did not know the technical details.

I started my working career programming the 680x-based regional processors for the Ericsson AXE telephone exchange in assembler at the end of the seventies.

That one is very hard to quantify. The 6502s were mainly in machines with better graphics and sound chips, except the Apple II, which really was underpowered when it came to graphics and sound. The 6502 machines also tended to have very slow floppy drives, except the Apple II, which had pretty fast drives. Then you have the simple fact that the Apple II, the Commodore 64, and the Atari line were all very popular, so there was a lot of effort by programmers to optimize the software.

It is a real shame that Motorola didn’t create graphics and sound chips, build a reference design, and offer it cheap to manufacturers. Then make a business-style system with OS-9 and 80 columns along with the gaming goodness. We might have had a 6809 OS-9 then that would evolve into a 68000 OS-9 world instead of the Intel / DOS Wintel world.

Today it should be possible to make a 6809 where every instruction takes a single cycle and runs at a much faster clock than any of the original 6809s.

Hey, is it a good idea? Why not? You could make a great little 8-bit computer that is fast and could even run NitrOS-9, which would be very fun for some folks.

Motorola did make a reference design for the 6809. The TRS-80 Color Computer (USA) and the Dragon 32 (UK) were both 6809 systems with mostly the same design as they were based on the same set of Motorola parts, including the MC6883 SAM and MC6847 VDG. See https://archive.org/details/Motorola_MC6883_Synchronous_Address_Multiplexer_Advance_Sheet_19xx_Motorola.

Software was trivially ported between the two machines.

And even CODIMEX in Brazil jumped on the 6809 and put a clone of the CoCo on the market.

I was told that they sold about 380 of them – what a pity.

But when you see and read the manual, you understand that this was not really serious.

My Coco 2 running OS-9 was pretty snappy for the day, Thankyoverymuch

The 6809 was at the time of its development an outstanding high-performance device. – 1979!

Unfortunately, at the beginning of the 80s the microcomputer market was booming only in the USA.

European suppliers focused on games and fought a suicidal price war, cutting prices until a machine was a penny business for the dealers that were left. The exception, for several years, was Commodore, as they launched new machines on the market without a long-term strategy; cash was the game. So the 6809 came too late to the consumer market and disappeared a few years later.

All of those home computer guys underestimated the power of Microsoft on a substandard Intel base, and thus nearly all manufacturers of game engines who did not jump on that horse went bankrupt in the second half of the eighties.

The 6809 today still has a small group of enthusiasts, but there is no room to make any money – it’s nostalgia only.

I’m waiting for someone to take one of these old architectures, figure out its bottlenecks, and give us a screaming 8/16-bit processor that can be implemented on an FPGA. Perhaps one of these days (or maybe it’s already here and I’ve not been paying enough attention).

Isn’t this exactly what this is?

It’s an interesting thought but once you start changing them to improve them how far can you go before it’s a new CPU (This is not the greatest CPU in the world, it’s just a tribute) anyway?

I’ve played with a few 8 bit cores on Altera and they’re *really* simple, so much so that I’m not sure they actually have bottlenecks as such?

Might end up going too fast.

I seem to recall a pipelined Z80 that would synthesize at well over 50 MHz on some fairly cheap FPGA. Wish I could remember more…

I bought a Dragon 32 specifically to learn 6809 assembler and get some experience so I could apply for dev jobs in the arcade game industry. The 16-bit X register, program counter and stack pointer, along with the hardware multiplication and 16-bit maths, were the appeal. Although a COBOL career was far better paying, if less sexy, walking along lines of arcade machines being playtested at one interview was a fun experience that has stayed with me.

As has the green 6809 Assembly Language Programming by Lance Leventhal which sits next to his 6502 book on my topmost shelf. Not far beneath the Dragon in the attic.

IIRC (and it’s debatable at my age), the 6809 had add/subtract to the X register, whereas the 6800/6802 had only increment and decrement.
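As a rough illustration of that difference, here is a small Python model of a 16-bit X register. The function names are hypothetical; the first mimics moving X with single-step increments (as with `INX` on the 6800), the second mimics a single add of a signed offset (as with `LEAX n,X` on the 6809). It counts instructions only, not clock cycles, and ignores other ways a 6800 programmer might do the arithmetic through memory.

```python
MASK16 = 0xFFFF  # X is a 16-bit register, so results wrap at 65536


def advance_x_by_increments(x: int, n: int) -> tuple[int, int]:
    """Advance X by n (n >= 0) using only +1 steps; returns (new_x, instructions used)."""
    instructions = 0
    for _ in range(n):
        x = (x + 1) & MASK16
        instructions += 1
    return x, instructions


def advance_x_by_offset(x: int, offset: int) -> tuple[int, int]:
    """Add a signed offset to X in one step; returns (new_x, instructions used)."""
    return (x + offset) & MASK16, 1


print(advance_x_by_increments(0xFFFE, 5))  # (3, 5)
print(advance_x_by_offset(0xFFFE, 5))      # (3, 1)
```

Both reach the same address, but the single-add form does it in one instruction regardless of the distance.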

Right!

The best book for learning the 6809 is “Learning the 6809” by Dennis Bathory Kitsz, Green Mountain Micro, Roxbury, Vermont.

You can download the PDF and the tapes from the web.

This is the best I’ve ever found on that superb engine.

All about the 6809:

https://tlindner.macmess.org/wp-content/uploads/2006/09/Byte_6809_Articles.pdf

Funnily enough, there was a project that modified an Atari XL and replaced the 6502 with a 6809.

There was a bunch of POC code, but if you want to do that you’ll need to make an interposer PCB and logic yourself.

16 bit 68xxx

Or 16 bit 6502

Which one runs better? On the same chipset

What can the 6809 do using the same memory mapping?

Well the SNES was more powerful than the Sega Genesis….

You can actually run Sonic the Hedgehog on the SNES faster than on the Genesis

Especially with Mode 7 and DMA graphics

In what context? If we’re talking raw CPU performance, the Genesis was beefier than the SNES, Dhrystone for Dhrystone. However, every other metric is so deeply nuanced that it’s difficult to make 1:1 comparisons.

Also “runs better” is very nebulous. You’d need to be more clear on what aspect you’re aiming for.

Literally the only thing the Genesis has in spec vs the SNES is that the 68k is clocked a few MHz higher, and Nintendo doesn’t use a stock 65816 either; it’s actually a custom CPU…

The SNES was Nintendo’s first accident of building a more powerful console than the competition

Spec wise it’s more capable

That’s why you need a 32X and Sega CD to do what an SNES with a Super FX or DSP on a cartridge can do.

Stock SNES can play CD audio and technically is actually backwards compatible with NES cartridges…

In reality you measure the CPU performance in an actual memory map and system that actually puts load on the CPU and its bus

And how fast you can clock it

That’s back in the days when the specialized GPU and graphics chips, and DMA, mattered more than the CPU

Now all that is slapped on a PCIe or M.2 controller for a GPU, which is effectively slapping another computer on top over a fast serial link. You can run an OS on a GPU using a few shaders… while running another OS or two on the CPU, as the GPU has its own dedicated RAM and instruction sets and runs independently of the mobo other than the link.

Then you have all your I/O and audio slapped on a north bridge and/or south bridge chip, or even on the GPU if you have a high-end one.

And slap on some cheap DRAM because most people can afford 64GB of SRAM 64bit

*Most can’t afford 64bit sram*

Maybe the mobo and chip manufacturer and Microsoft can….

Supafast asynchronous that runs at 4GHz full CPU bus speed, no wait states

The quirky and wonderfully weird Vectrex console was 6809-based!

I still have a working Vectrex.

We had an active 68xx self-build community in Sweden after the largest electronics magazine published a design for a 6800-based computer, with the possibility of buying unpopulated circuit boards.

A club in Stockholm based on this had more than 500 members and created a 6809 kit and later a complete 6810-based Idris computer.

With 6809 AND 6502 on FPGA, a Commodore SuperPET clone could be done and I can finally sell my 40-year-old SuperPET. So when will someone come up with a SuperPET clone PCB with standard composite video out?

There are a lot of cores being emulated on the DE10 Nano.

For arcade game emulation, as part of the MiSTer FPGA project.

After reading this article, I’d consider the Hitachi 6309 as a target.

As I recall, the 6809 was going to go into the original Macintosh, but the 68000 was so appealing (it was being used in the Lisa) that it ended up in the Mac as well. I think it ended up pushing the price up quite a bit, unfortunately.

The 6809 was a neat upgrade to the 6800 which I had used on a project. I made a Tiny C compiler back end for it with a peephole optimizer and ran the code on a CoCo. But it was slow, particularly on the indexing modes of addressing with the added registers.

Microcomputer history interesting … but

Data Center ARM M series/RISC-V hardware platforms, Linux servers, vs power consumption, malware prevention, software maintainability and costs far more interesting … and perhaps profitable for those software engineers/managers who can guide companies to avoid the 10x/superprogrammer/manager obfuscating employment security-seeking teams?

Southwest Technical Products made a business computer running the multi-tasking, multi-user OS-9. Until the IBM PC arrived, it was a popular alternative to mainframe time-sharing services.

TanRu Nomad, a collector of computers, has a video of one working here:

https://www.youtube.com/watch?v=SATjR-MWHDM