It’s amazing how many things have managed to move online in recent weeks, many with the beneficial side effect of eliminating travel and making them more accessible to everyone around the world. Some events had a virtual track before it was cool, though, among them the DARPA Subterranean Challenge (SubT) robotics competition. Recent additions to their “Hello World” tutorials (with the promise of more to come) have continued to lower the barrier to entry for aspiring roboticists.

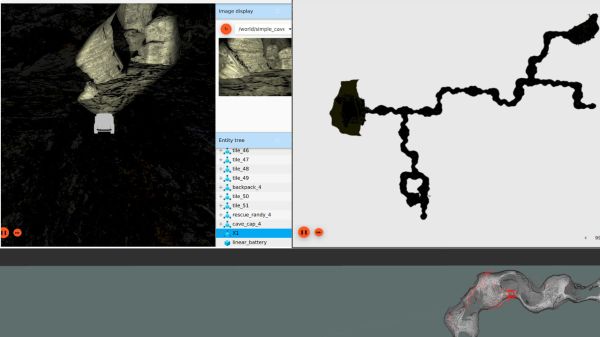

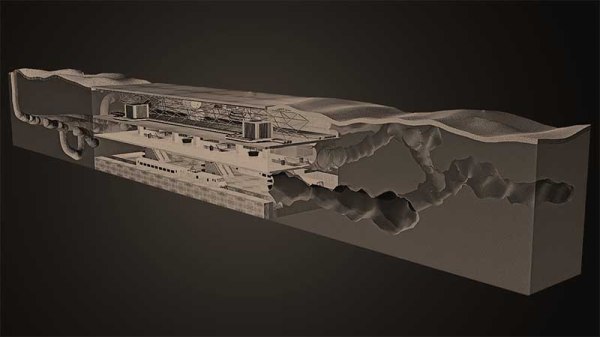

We all love watching physical robots explore the real world, which is why SubT’s “Systems Track” gets most of the attention. But such participation is necessarily restricted to people who have the resources to build bulky hardware and transport it to the competition site, and they are just a tiny subset of all the brilliant minds who can contribute. Hence the “Virtual Track”, which is accessible to anyone with a computer that meets the requirements (64-bit Ubuntu 18 with an NVIDIA GPU). The tutorials help get us up and running on SubT’s virtual testbed, which continues to evolve. With every round, the organizers work to bring the virtual and physical worlds closer together. During the recent Urban Circuit, they made high resolution scans of both the competition course and the participating robots.
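If you want a quick sanity check before working through the tutorials, here is a minimal sketch of how you might test a machine against the requirements mentioned above. It is not an official SubT tool. The function names are made up for illustration, and it assumes that `x86_64`/`amd64` is what "64-bit" means here and that finding `nvidia-smi` on the `PATH` is a reasonable stand-in for "has an NVIDIA GPU with drivers installed".

```python
import platform
import shutil


def parse_os_release(text):
    """Parse the KEY=value lines of an os-release style file into a dict."""
    fields = {}
    for line in text.splitlines():
        line = line.strip()
        # Skip blank lines, comments, and anything that is not KEY=value.
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        fields[key] = value.strip().strip('"')
    return fields


def meets_requirements(os_release_text, machine, has_nvidia_tool):
    """Hypothetical check: 64-bit Ubuntu 18 plus an NVIDIA driver tool."""
    fields = parse_os_release(os_release_text)
    is_ubuntu_18 = (
        fields.get("ID") == "ubuntu"
        and fields.get("VERSION_ID", "").startswith("18.")
    )
    # Assumption: treat x86_64/amd64 as the intended 64-bit platform.
    is_64_bit = machine in ("x86_64", "amd64")
    return is_ubuntu_18 and is_64_bit and has_nvidia_tool


if __name__ == "__main__":
    try:
        with open("/etc/os-release") as f:
            text = f.read()
    except OSError:
        text = ""  # Not a Linux system, or the file is missing.
    # Assumption: nvidia-smi on PATH implies a working NVIDIA driver install.
    has_nvidia = shutil.which("nvidia-smi") is not None
    ok = meets_requirements(text, platform.machine(), has_nvidia)
    print("Looks compatible" if ok else "Does not match the stated requirements")
```

Keeping the check as a pure function of its inputs makes it easy to try against sample data without needing the target machine at hand. Treat a passing result as a hint rather than a guarantee, and defer to the official requirements list.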

There’s a lot of other traffic on the various SubT code repositories. Motivated by Bitbucket sunsetting their Mercurial support, SubT is moving from Bitbucket to GitHub and picking up some housecleaning along the way. With the newly added tutorials, this is a great time to dive in and see if you want to assemble a team (both of human collaborators and virtual robots) to join the next round of virtual SubT. But if you prefer to stay an observer of the physical world, enjoy this writeup with many fun details on Systems Track robots.

![The 3D scan of a small cave near Louisville (source: [caver.adam's] Sketchfab repository)](https://hackaday.com/wp-content/uploads/2016/06/bildschirmfoto-2016-06-27-um-20-02-11.png?w=250)