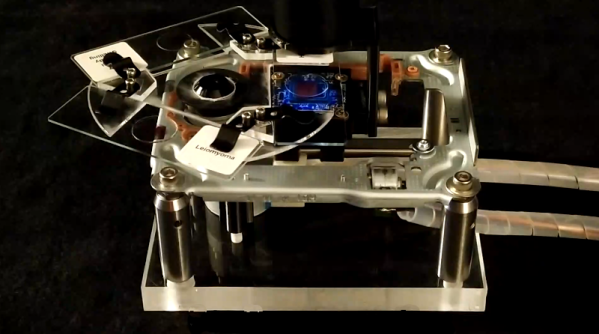

We have all seen those cheap digital microscopes, whether in USB form or with their own screen, all promising super-clear images of everything from butterfly wings to electronics at amazing magnification levels. In response, we have to paraphrase The Simpsons: in this universe, we obey the laws of physics. This applies doubly to image sensors and optics, where fundamental physics can be dodged only so far with heavy post-processing. In a recent video, the [Outdoors55] YouTube channel goes over these exact details, comparing a Tomlov DM9 digital microscope from Amazon to a quality macro lens on an APS-C format Sony Alpha a6400.

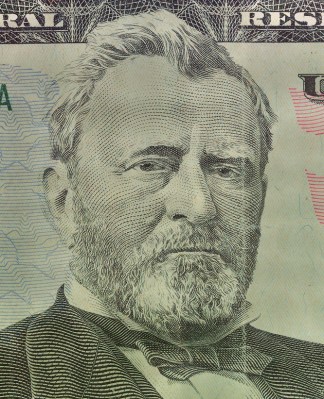

First of all, the listed magnification levels are effectively meaningless. The on-screen figure depends largely on how much the picture from a very tiny image sensor gets blown up for display, and here that tiny sensor is up against an APS-C sensor, which is itself smaller than a full-frame (i.e., 35 mm) sensor. As demonstrated in the video, the much larger sensor already reveals far more detail even before the optical magnification is cranked up to something like 5x, never mind the 1,500x claimed for the DM9.
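To make the point about sensor size concrete, here is a rough sketch of the arithmetic. It assumes that the advertised figure is subject-to-screen magnification, i.e. optical magnification onto the sensor multiplied by how much the display enlarges the sensor image. The APS-C (23.5 × 15.6 mm) and full-frame (36 × 24 mm) dimensions are the usual approximate values; the 10x optics, ~4.5 mm microscope sensor diagonal and 27-inch monitor are purely hypothetical numbers, not DM9 specifications.

```python
import math

# Approximate sensor dimensions in millimetres (width, height).
APS_C = (23.5, 15.6)       # typical Sony APS-C
FULL_FRAME = (36.0, 24.0)  # "35 mm" full frame


def diagonal_mm(size):
    """Diagonal of a (width, height) rectangle in mm."""
    return math.hypot(*size)


def crop_factor(size):
    """Crop factor relative to a 36 x 24 mm full-frame sensor."""
    return diagonal_mm(FULL_FRAME) / diagonal_mm(size)


def on_screen_magnification(optical_mag, sensor_diag_mm, display_diag_mm):
    """Subject-to-screen magnification: the optics image the subject onto the
    sensor at optical_mag, then the display enlarges that sensor image by
    display_diag / sensor_diag (assumes the image fills the display diagonal)."""
    return optical_mag * display_diag_mm / sensor_diag_mm


if __name__ == "__main__":
    print(f"APS-C crop factor: {crop_factor(APS_C):.2f}")  # about 1.53
    # HYPOTHETICAL: 10x optics, ~4.5 mm sensor diagonal, 27-inch (685.8 mm) monitor.
    mag = on_screen_magnification(10, 4.5, 27 * 25.4)
    print(f"'Magnification' on a 27-inch screen: {mag:.0f}x")
```

Under these assumptions a modest 10x optic already "becomes" roughly 1,500x simply because a tiny sensor image is stretched across a large monitor, while the amount of real detail captured is unchanged.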

On the optics side, the shallow depth of field is problematic. The workarounds suggested in the video, such as focus stacking and diffusing the light falling on the subject, do help, but it is essential to be aware of these microscopes’ limitations. That said, since we’re comparing a $150 digital microscope with a $1,500 Sony digital camera and macro lens, there’s some leeway to say that the former will be ‘good enough’ for many tasks, but so might a simple jeweler’s loupe that costs even less.
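Why depth of field collapses at high magnification, and why focus stacking needs so many frames, can be estimated with the common close-up approximation DoF ≈ 2·N·c·(m + 1)/m², where N is the f-number, c the circle of confusion and m the magnification. This is a rough sketch, not the method used in the video: the f/8 aperture, 0.02 mm circle of confusion, 2 mm subject depth and 20% frame overlap are all assumed example values.

```python
import math


def macro_depth_of_field_mm(f_number, coc_mm, magnification):
    """Total depth of field in mm for close-up work, using the approximation
    DoF ~ 2 * N * c * (m + 1) / m**2 (assumes pupil magnification of 1)."""
    m = magnification
    return 2 * f_number * coc_mm * (m + 1) / m**2


def frames_to_stack(subject_depth_mm, dof_mm, overlap=0.2):
    """Frames needed to cover subject_depth_mm when each focus step advances
    by the depth of field minus a fractional overlap between frames."""
    step = dof_mm * (1 - overlap)
    return math.ceil(subject_depth_mm / step)


if __name__ == "__main__":
    # ASSUMED values: f/8, 0.02 mm circle of confusion (typical for APS-C).
    for m in (1, 5):
        dof = macro_depth_of_field_mm(8, 0.02, m)
        print(f"{m}x: DoF = {dof:.3f} mm")
    # ASSUMED 2 mm deep subject (e.g. a component on a PCB) at 5x.
    print("Frames at 5x:", frames_to_stack(2.0, macro_depth_of_field_mm(8, 0.02, 5)))
```

With these numbers the in-focus slice shrinks from about 0.64 mm at 1x to under 0.08 mm at 5x, so covering a 2 mm tall subject takes around 33 stacked frames.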

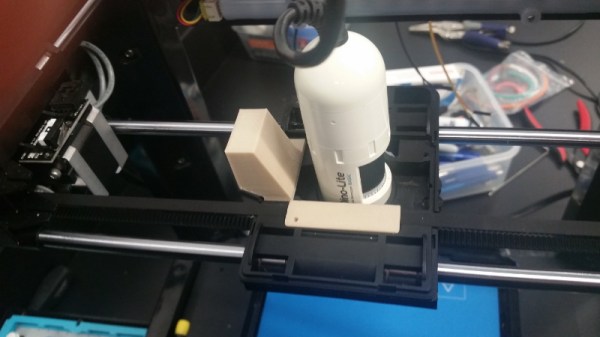

There are some reasonable hobby-grade USB microscopes. There are also some hard-to-use toys.

Continue reading “Why Cheap Digital Microscopes Are Pretty Terrible”