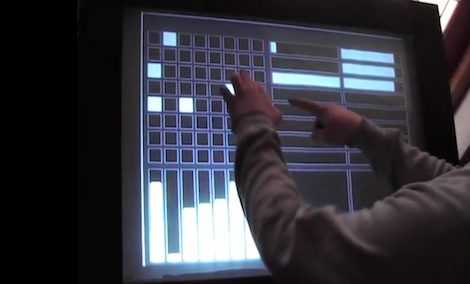

If you’re going to build a giant touch screen, why not use an OS that is designed for touch interfaces, like Android? [Colin] had the same idea, so he connected his phone to a projector and a Kinect.

Video is carried from [Colin]’s Galaxy Nexus to the projector via an MHL connection. Getting the Kinect to work was a little more challenging, though. The Kinect is connected to a PC running Simple Kinect Touch. The PC converts the data from the Kinect into TUIO commands that are received using TUIO for Android.

For the TUIO commands to be recognized as user input, [Colin] had to compile his own version of Android. It was a lot of work, but using an OS designed for touch interfaces seems much better than all the other touch screen hacks that start from the ground up.

You can check out [Colin]’s demo after the break. Sadly, there are no Angry Birds.
